Edge is Driving an Infrastructure Renaissance

In ancient times, access to the Internet was restricted to those who could afford and operate a hardware modem. The disharmonic shrieks of the hardware and the Hayes command set are indelibly etched in the minds of those who suffered through those dark times.

Broader access to the burgeoning Internet would not occur until the advent of services like CompuServe and AOL, whose software gave those who could afford, but not operate, a modem the ability to "surf the world wide web."

Fast forward to today, and over 4 billion people (more than half the world's population) have access to the Internet.

The means by which they do so haven't changed. There is still infrastructure that does the hard work of maintaining a connection between the average of 7.8 devices in the home and the apps on the Internet they connect to. What have changed are the demands placed on the user to operate that infrastructure and the requirements for software to take advantage of the services it offers. Modems and routers are now a commodity, with ease of use and operation as their primary selling points.

But there is a growing subset of consumers who are more interested in technical capabilities, in optimization and options to improve performance, even at a higher cost. They want optimized infrastructure that offers an answer to performance problems. The "pro" devices that tout such benefits can command, and receive, a higher price. To wit, Netgear currently holds 51% of the "high end" WiFi router market, largely attributable to its "pro" line of gaming-focused routers. I confess here to owning one… or two… because we all know that in gaming, lag kills.

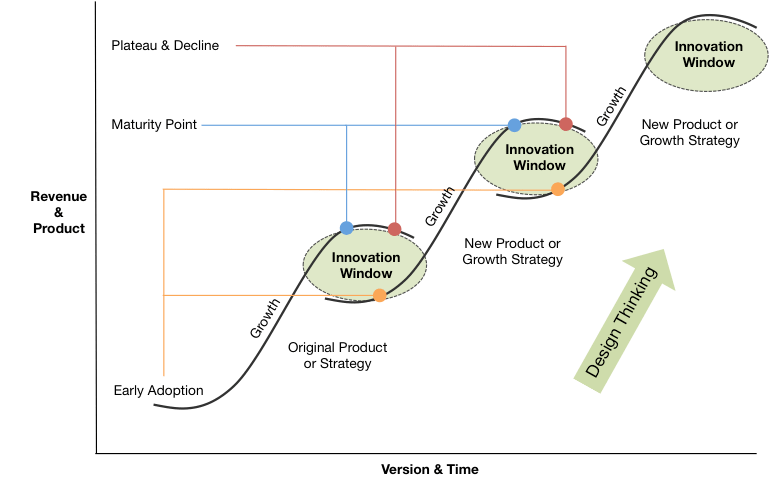

This technological cycle is often represented by the S-curve theory of innovation.

This cycle is seen in just about every industry. From specialization to commodification to innovation, products go through the same transformation. We currently stand on the precipice of an innovation window; an infrastructure Renaissance, if you will.

Extracting Value

Commodification of infrastructure has resulted in inefficiencies even as application workloads diverge and specialize.

Infrastructure is no longer focused on providing resources for a specific type of workload such as cryptography or advanced analytics. The increase in speed and volume of Internet traffic has forced scaling strategies to shift from a model that relies on vertical scale to a horizontal one. With Moore's Law in decline (or at the very least not keeping pace with demand for processing power), the economy of scale of a horizontal, software-only system is limited. If performance is not negatively impacted, profit will be, because the costs to scale rise along with demand for digital services. The model works, but it is inefficient and leaves untapped processing power on the table that could be used to improve the economy of scale.

The cyclical nature of technology indicates that the need for optimized infrastructure, capable of harnessing that untapped processing power, is imminent.

There is value in infrastructure. We see it in our homes, in the marketplace, and in our schools. The value of infrastructure and optimized components is not the question. Consider that:

"NVIDIA (NASDAQ:NVDA) stock skyrocketed 76.3% last year, according to data from S&P Global Market Intelligence. Including dividends, the graphics-processing unit (GPU) specialist and artificial intelligence (AI) leader returned 76.9%. For context, the S&P 500 returned 31.5% in 2019."

Motley Fool, Jan 2020

In the public cloud, providers are aware of this and are putting in place the foundations for optimized infrastructure. Of the 266 instance types offered by Amazon, a significant number are already "optimized" instances. Each of these focuses on extracting value from infrastructure by optimizing a resource: memory, I/O, compute, or storage. Cost-efficiencies are gained by matching workload requirements with the right optimized infrastructure. There is also a growing number of GPU and FPGA-enabled instances, fueled by the need to optimize for high-volume, real-time analytics.

These targeted instances exist because performance and capacity needs are largely determined by what type of AI/ML functions need to be processed. Some functions are better served by higher storage bits and faster memory bandwidth while others perform better with faster clock speeds or more CUDA cores. The specific application dictates the requirements for infrastructure.
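To make the cost argument concrete, here is a minimal sketch of matching a workload to an instance type. Everything in it is an assumption for illustration: the catalog names, sizes, and hourly prices are invented and do not correspond to any provider's real offerings, and the model assumes a workload can be split evenly across identical nodes. It compares scaling out on a general-purpose type against using a type optimized for the constrained resource.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gib: int
    gpus: int
    hourly_cost: float  # hypothetical price, not a real quote


@dataclass(frozen=True)
class Workload:
    vcpus: int
    memory_gib: int
    gpus: int = 0


# Hypothetical catalog: one general-purpose type and three "optimized" types.
CATALOG = [
    InstanceType("general-large", 8, 32, 0, 0.40),
    InstanceType("compute-opt-large", 16, 32, 0, 0.60),
    InstanceType("memory-opt-large", 8, 128, 0, 0.70),
    InstanceType("gpu-accel-large", 8, 64, 1, 1.50),
]


def nodes_needed(workload, inst):
    """Number of identical nodes required to cover every resource, or None."""
    if workload.gpus > 0 and inst.gpus == 0:
        return None  # no amount of scale-out adds a GPU
    counts = [
        math.ceil(workload.vcpus / inst.vcpus),
        math.ceil(workload.memory_gib / inst.memory_gib),
    ]
    if workload.gpus > 0:
        counts.append(math.ceil(workload.gpus / inst.gpus))
    return max(counts)


def cheapest_plan(workload, catalog=CATALOG):
    """Return (total_hourly_cost, node_count, instance_name) for the cheapest fit."""
    plans = []
    for inst in catalog:
        n = nodes_needed(workload, inst)
        if n is not None:
            plans.append((n * inst.hourly_cost, n, inst.name))
    return min(plans) if plans else None


# A memory-heavy workload: modest CPU, large memory footprint.
memory_heavy = Workload(vcpus=8, memory_gib=128)
print(cheapest_plan(memory_heavy))
```

With these made-up numbers, covering the memory requirement on the general-purpose type takes four nodes, three of them mostly idle on CPU, while a single memory-optimized node covers it at a lower total cost. That idle capacity is the kind of untapped processing power the horizontal model leaves on the table.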

Consider NVIDIA's recently announced acquisition of Arm. The staggering $40B price is justified as an investment in a future driven, in part, by distributed AI. That capability will be enabled, in part, by taking advantage of specialized hardware:

"Simon Segars and his team at Arm have built an extraordinary company that is contributing to nearly every technology market in the world. Uniting NVIDIA’s AI computing capabilities with the vast ecosystem of Arm’s CPU, we can advance computing from the cloud, smartphones, PCs, self-driving cars and robotics, to edge IoT, and expand AI computing to every corner of the globe."

Jensen Huang, founder and CEO of NVIDIA

Expanding to the Edge

As organizations ramp up their generation of data and seek to extract business value from it, analytics and automation powered by AI and ML will certainly be among the technologies put to the task. These are exactly the types of workloads that will benefit from optimized infrastructure, and yet they are the ones least likely to be able to take advantage of it today.

While data centers and the public cloud are today the best positioned to take advantage of this infrastructure renaissance, recent moves to extend the benefits of high-powered hardware to all enterprises indicate this movement will continue. Just as technology is no longer confined to data centers and now resides in our coffee pots and smartphones, a renewed focus on optimization of hardware will enable the distribution of powerful compute to the very Edge of the Internet, where it will provide enhanced processing power and data to fuel AI-based analytics.

The ability of every enterprise to reap the benefits of AI and analytics is one of the challenges that needs solving as we seek to accelerate the customer journey through the three phases of digital transformation. And it is a challenge that will ultimately be answered in part with an Infrastructure Renaissance.