The Surprising Truth about Digital Transformation: Diseconomy of Scale

This is the fourth post in a series on the challenges arising from digital transformation.

- The Surprising Truth about Digital Transformation

- The Surprising Truth about Digital Transformation: Cloud Chaos

- The Surprising Truth about Digital Transformation: Skipping Security

Diseconomy of Scale.

When you’re growing a business, especially over time, you tend to pile up baggage that needs tending. You rarely decrease the number of applications you have to support. Usually it increases, sometimes exponentially in the span of a few years. In unremarkable times, the number of applications tends to double every four years. When a new technology forces its way in, that growth accelerates.
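To put rough numbers on that doubling, here is a small sketch. It assumes smooth exponential growth with a four-year doubling period, and the starting portfolio of 200 applications is a made-up figure for illustration only.

```python
def projected_apps(current: int, years: float, doubling_period: float = 4.0) -> float:
    """Project the app count, assuming it doubles every `doubling_period` years."""
    return current * 2 ** (years / doubling_period)


# Hypothetical starting portfolio of 200 applications.
for years in (4, 8, 12):
    print(years, round(projected_apps(200, years)))
# 4 400
# 8 800
# 12 1600
```

Under those assumptions, a team supporting 200 apps today is looking at eight times as many in twelve years, and that is before any new technology speeds things up.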

Each app requires more support in operations (that’s network, compute, storage, and security, for the uninitiated). But just as we know that vertical scale only takes you so far (you can only throw so much hardware at the problem), we know that the same is true for human resources. We can’t just keep adding ops staff to match the growth of apps. Doing so increases management overhead and slows down communication as we struggle to figure out who is responsible for what, let alone actually have a conversation with that person.

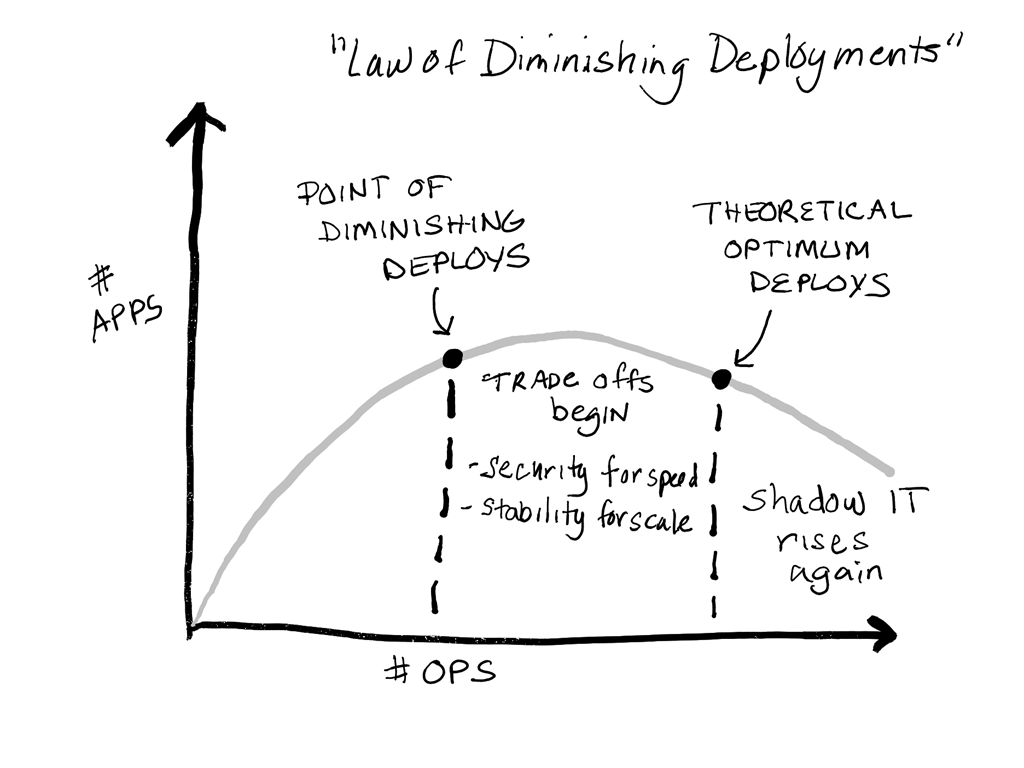

That’s what the Law of Diminishing Deployments shows us. There comes a point at which the number of ops actually impedes deployment and we see trade-offs begin. Like Faust bargaining with the devil, we opt for speed over security and scale over stability. Eventually we hit an inflection point at which we’ve reached the end of our theoretical rope, and dev, ops, and the business begin looking elsewhere for solutions.

In a nutshell, you can only hire so many ops to deal with the rising number of apps before you run afoul of the Law of Diminishing Deployments.

This is why automation is critical for operations to keep in step with the increase in applications and demand for speedier deployment. Because no matter how fast you type, compute is faster.

But keep in mind that automation is more than scripting.

We already do a lot of scripting, and it certainly has been a factor in keeping us at least in the race with deployments. For automation to truly be successful, it shouldn’t be executed on a per-device or per-application basis. It just shouldn’t. That doesn’t scale. If you’re writing one script per device or app, you’re not doing automation right.

Automation needs to be about the process (so it’s really orchestration, but that’s a windmill I keep tilting at that refuses to fall) and about consistent execution. That means you need to adopt automation – not just script changes. And that means a new approach that incorporates principles from DevOps like infrastructure as code.

Infrastructure as Code

Infrastructure as code is a simile. That means the actual infrastructure might be software, or hardware, cloud or on-premises. The implementation is irrelevant for purposes of deployment, because we’re going to be using APIs and deployment artifacts to achieve the behavior of infrastructure as code. By decoupling the configuration from the device, we can treat the device as though it were a black box.
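As a minimal sketch of that black-box idea, the configuration below is plain data and each device only has to accept it through a common `apply` call. The class names, the `apply` method, and the config fields are all hypothetical, invented for illustration; a real device would translate the configuration into calls against its own API.

```python
from typing import Protocol


class Device(Protocol):
    """Anything that accepts a declarative config through its API."""

    def apply(self, config: dict) -> None: ...


class HardwareLoadBalancer:
    def __init__(self) -> None:
        self.state: dict = {}

    def apply(self, config: dict) -> None:
        # A real appliance would map this onto its management API.
        self.state = dict(config)


class CloudLoadBalancer:
    def __init__(self) -> None:
        self.state: dict = {}

    def apply(self, config: dict) -> None:
        # A real cloud service would map this onto the provider's API.
        self.state = dict(config)


def deploy(config: dict, devices: list[Device]) -> None:
    """Push the same configuration to every device, whatever its form factor."""
    for device in devices:
        device.apply(config)


# Hypothetical service description, kept separate from any one device.
service = {"service": "web-frontend", "port": 443, "pool": ["10.0.0.11", "10.0.0.12"]}
targets = [HardwareLoadBalancer(), CloudLoadBalancer()]
deploy(service, targets)
```

The `deploy` function never asks whether a target is hardware or cloud. That is the point: the configuration lives outside the device, so the device can be swapped without rewriting the deployment.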

What’s important are the deployment artifacts – the templates, scripts, and policies used to provision and configure the device for the desired service. Those are stored in a repository (on-premises). These fully describe a given service – whether network or security or storage or app infra – and can be used to recreate the service at will. These artifacts can be used to automate individual tasks – add a firewall rule, deploy a load balancer, configure the WAF – and then be combined into an orchestrated process to achieve continuous deployment.
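Here is one way that might look, as a rough sketch rather than a recipe. The artifact format, the field names, and the three task functions are assumptions made up for this example, and each task only records what it would do instead of calling a real device API.

```python
import json

# Stand-in for an artifact checked into a repository; a real one would be a file.
ARTIFACT = json.loads("""
{
  "service": "web-frontend",
  "firewall_rules": [{"allow": "tcp/443"}],
  "load_balancer": {"pool": ["10.0.0.11", "10.0.0.12"]},
  "waf_policy": "baseline"
}
""")


def add_firewall_rules(artifact: dict, log: list) -> None:
    for rule in artifact["firewall_rules"]:
        log.append(f"firewall: allow {rule['allow']}")


def deploy_load_balancer(artifact: dict, log: list) -> None:
    pool = artifact["load_balancer"]["pool"]
    log.append(f"load balancer: pool of {len(pool)}")


def configure_waf(artifact: dict, log: list) -> None:
    log.append(f"waf: policy {artifact['waf_policy']}")


# The orchestrated process: individual tasks, run in a fixed order.
PROCESS = [add_firewall_rules, deploy_load_balancer, configure_waf]


def run_process(artifact: dict) -> list:
    """Run every task against the same artifact and return what was done."""
    log: list = []
    for task in PROCESS:
        task(artifact, log)
    return log


for line in run_process(ARTIFACT):
    print(line)
```

Each function is still a single automated task. What changes is that nobody invokes them one at a time: the process is defined once, and the same artifact can be replayed to recreate the service.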

The reason this is important in addressing the diseconomy of scale is that pure scripting is still a per-device task. Having a script turns a five-minute chore into a five-second task. But it’s still manually invoked, and requires people to drive the process. It doesn’t address the greater need to automate the entire *process* - and that’s what you need to achieve the economy of scale required to compete in a digital economy.

Stay tuned for the last post in this series, in which we’ll dig into how you can safely and securely deliver the increasing number of apps deployed in containerized environments.