My father was a fan of westerns. And not just the Lone Ranger but all the old black-and-white westerns. The ones with Gene Autry and Smiley Burnette and John Wayne. Bonanza, too, was a must if it was on. The thing these old (very old now, I suppose) television shows have in common is that there was always, and I mean always, just one sheriff and his deputy in any given town. The town was invariably peaceful, and probably didn’t need more firepower. Until the guys in the black hats came along. When it was evident that the black hats could outgun and outride the white hats, the sheriff always found a bunch of noble townsfolk to deputize in his fight to restore law and order to the previously idyllic small western town.

It’s appropriate, given the security industry’s adoption of western terminology to depict the “good” guys and the “bad” guys (white hats and black hats), that recent years have seen a rise in the deputizing of noble townsfolk through increasingly lucrative bug bounty programs.

Bug bounty programs are not new, but they are, according to BugCrowd’s second annual State of Bug Bounty 2016 report, on the rise. The report, which covered 286 programs and counted over 26,000 researchers, noted in its key findings that programs are not only paying out (over $2M across 6,800 submissions over the course of one year) but also expanding beyond their early domain of technology companies to include finance, automobile manufacturers, the United States government, and major airlines.

Why the rise? Consider these statistics compiled by our own David Holmes on the current state of talent in the security industry:

“The IT skills shortage has become epidemic. There simply aren’t any security people available to hire. For five years now, the unemployment rate for InfoSec professionals has been less than 2% (and often 0%). The tight job market for people with security skills has had a predictable impact on wages: According to Payscale, in 2014, half of all security architects made more than $120K: that’s 50% more than the average software engineer, and a whopping 300% what a licensed nurse practitioner makes. People with security skills command more pay, leave for startups, or get poached for cushier jobs with better titles (ahem). ESG’s Jon Oltsik wrote that his #1 prediction for 2015 is ‘Widespread impact from the cybersecurity skills shortage.’”

Organizations in every industry have long turned to outsourcing, whether through temp agencies or consultants, to fill gaps in their workforce. Security, it turns out, is no different. Except that even the availability of outsourced experts is affected by the skills shortage, so, like sheriffs in the old west, organizations are turning to the public at large with bug bounty programs that are, in effect if not in name, the deputizing of the Internet.

What’s notable, however, is that most organizations touting a bug bounty program are large, mature organizations with the budget necessary to pay out for what are inarguably “beginner’s mistakes”.

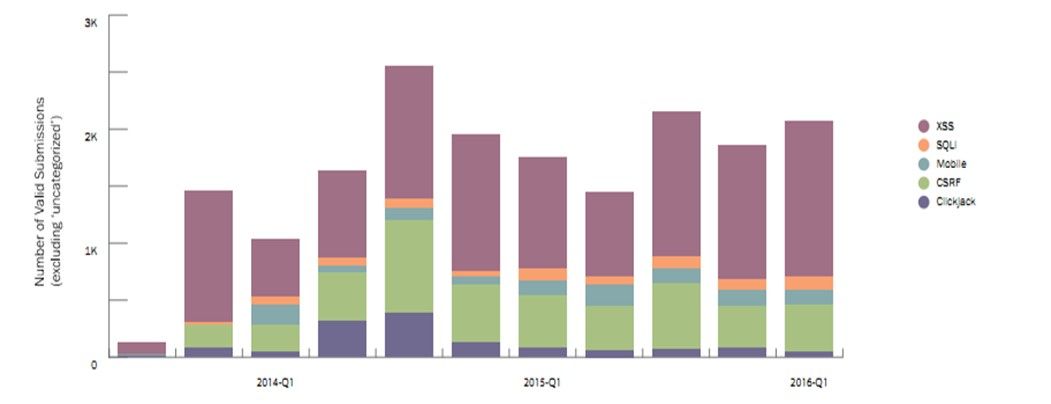

Figure 1: Bug types across valid submissions, showing a decline in low-value bug types such as clickjacking and steady submissions of XSS and mobile bugs.

XSS, SQLi, and CSRF are among the OWASP “Top Ten”, with reams of documentation, tutorials, code samples, and tools capable of discovering these bugs before applications are introduced to the wild. One would think that organizations would take the time to ferret out and address such vulnerabilities before unleashing the hordes of grey and white hat hackers on their apps. After all, the budgets of smaller organizations with less mature internal security programs could easily become overwhelmed by the discovery and subsequent payout of such common vulnerabilities.

Or would they?

Thanks to the dearth of security talent available today, salaries are high. But the BugCrowd survey found that bug bounty hunters appear willing to work for less than many security professionals command, with the majority (more than 50%) citing a salary of $74,000 annually or less to hunt for bugs full time. App layer vulnerabilities are also notoriously difficult to find: 63% of respondents to our State of Application Security 2016 survey believe that “attacks at the application layer are harder to detect – and more difficult to contain – than those at the network layer.” Deputizing the Internet starts to sound like a good value proposition at this point.

And yet the problem of addressing the bugs once they’re discovered (and paid for) remains. Deputizing the Internet to find the vulnerabilities does not remediate them automagically. As we all know from G.I. Joe, “knowing is half the battle”. Unfortunately for organizations, the other half is not red and blue lasers, but rather fixing the vulnerabilities once they are known to exist. Developers and operations must invest time and budget to redress such bugs. That organizations of all sizes find this difficult is evident in White Hat Security’s 2016 annual report, which found that “It takes approximately 250 days for IT and 205 days for retail businesses to fix their software vulnerabilities.”

In fact, the report notes that most web applications exist in a state of vulnerability:

- Information Technology (IT) — 60 percent of web applications are always vulnerable.

- Retail — half of all web applications are always vulnerable.

- Banking and Financial Services — 40 and 41 percent of web applications are always vulnerable, respectively.

- Healthcare — 47 percent of web applications are always vulnerable.

Some of this is due to severity or to web app usage. Threat and risk are two different measures, after all, and it behooves every company to weigh carefully the existential threat of a vulnerability against the risk of its exploitation. Some organizations are simply backlogged with higher priority projects. A variety of surveys, including one from OutSystems, have noted that organizations have an average backlog of 10 to 20 mobile applications. That doesn’t count the continued investment in new mainframe applications or in other, equally critical, web applications.

While there is certainly value in bug bounty programs for organizations of all sizes, they are only half the battle. The other half lies in eliminating the bugs such programs discover, or at least mitigating them with intermediate tools and services.

Knowing is only half the infosecurity battle. The other half is remediation.