Featured Series

AI

Topic Bridge

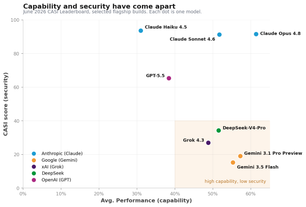

CASI leaderboard shifts, and two incidents where AI was handed the keys.

Featured Series

Sensor Intel Series

Microsoft Exchange ProxyShell Scanning Doubles in April 2026 as Two Distinct Campaign Clusters Emerge

Sensor Intel Series: April 2026 CVE Trends

Featured Series

OSINT

Weekly Threat Bulletin – June 3rd, 2026

These are the top threats you should know about this week.

Targeted Research

Authentication

Password Safety & Security: Passwords vs Passphrases

World Password Day is May 7. We've updated our guidance to reflect newer techniques and knowledge, covering passwords, passphrases, passkeys, and more.

Report

Encryption

The State of Post-Quantum Cryptography (PQC) on the Web

We analyze the world’s most popular websites and most widely used web browsers to determine the current state of PQC adoption on the web.

Vulnerability Trends

A by-the-numbers breakdown from the latest threat intelligence

Sensor Intel Series: Data From April 2026

Hundreds of apps will be attacked by the time you read this.

So, we get to work. We obsess over effective attack methods. We monitor the growth of IoT and its evolving threats. We dive deep into the latest crypto-mining campaigns. We analyze banking Trojan targets. We dissect exploits. We hunt for the latest malware. And then our team of experts shares it all with you. For more than 20 years, F5 has been leading the app delivery space. Drawing on that experience, we are passionate about educating the security community and providing the intel you need to stay informed so your apps can stay safe.

Every 9 hours, a critical vulnerability with the potential for remote code execution is released.