Unpacking Zero Trust As A Concept

Since the term “zero trust” was coined in 1994 by Stephen Paul Marsh in his doctoral thesis, it’s gone through a lot of changes. So many, in fact, that security practitioners often find themselves with a mandate to implement it without a good understanding of how to do so. Thankfully, with the publication of NIST SP 800-207 in August 2020, we have a document that may help CISOs, operations teams, and architects bridge the gap between the theory of zero trust and the practicalities of implementation.1 This article is an attempt to briefly explain zero trust principles, discuss the architectural patterns that arise from those principles, and use some concrete examples to help practitioners make sense of this paradigm. Since the term “zero trust” can apply to principles, architectural designs, initiatives to implement those designs, and products, we are going to lean heavily on the NIST 800-207 document, since it is uniquely clear in distinguishing between these and providing useful guidance.

How Much Trust Did We Have Before?

Zero trust architecture (ZTA) is an attempt to address some of the shortcomings of what we could call a more traditional security architecture, so it makes sense to start by describing that older form.

In a traditional security architecture, broadly speaking, there is a hard perimeter, usually defined by one or more firewalls, along with a VPN for remote access, and some centralized authentication management that identifies the user and grants access. Generally speaking, once an authenticated user is inside the security perimeter, they have very few controls placed on them—they are in a “trusted” zone, and so may access file servers, connect to other nodes within the network, use services, and so forth.

Of course, some enterprises have been aware of the shortcomings of this general approach for quite a long time, and so there may be perimeters within perimeters, or points of control and re-authentication in some architectures, but overall, the general tongue-in-cheek description of the traditional model is “hard on the outside, soft on the inside.” One occasionally hears this described as a “walled garden.” The walls are supposed to keep the bad actors out and allow friction-free productivity inside.

Whatever way we picture this, and however we might have implemented it, there are several downsides to such a design. The main downsides are the following:

- If an attacker can penetrate the perimeter, they often have unimpeded access to explore, use lateral movement, attack machines inside the perimeter, and elevate their privileges, often without much chance of being detected.

- There is little attention paid to the behavior of an individual authenticated entity. An authenticated user may do things that are very out of character, and not be detected.

- The overall lack of granular access control allows users (malicious or not) to access data and services that they do not strictly have a need for.

- The distinct focus on external threats does not address malicious insiders, nor external attackers who may have already gained a foothold.

- This security architecture is difficult to integrate, implement, and maintain as enterprises make the transition from on-prem to cloud, multi-cloud, or hybrid environments.

- The assumption that all devices within the perimeter can be trusted is often difficult to assert, given the popularity of bring your own device (BYOD) and the need to grant access to external vendors, contractors, and remote workers.

What Is Zero Trust Architecture?

ZTA addresses these challenges by redefining the overall architecture, removing the concept of a trusted zone inside a single large perimeter. Instead, ZTA collapses the trust boundary around any given resource to as small a size as possible. It does this by requiring re-authentication and authorization each time a user wants to use each resource. This places identity and access control of individual entities at the center of the architectural concept.

Advantages of Zero Trust Architecture

This new architectural paradigm addresses many of the gaps in the older model. To begin with, it can prevent lateral movement by attackers. If an attacker (insider or external) can manage to get “inside,” they will be continually confronted with barriers to gaining further access, having to re-authenticate and prove their identity constantly for each resource.

ZTA also uses behavioral analytics on entities, whereas traditional access control often uses only a set of credentials as its criterion. Parameters such as time of day, pattern of access, location, data transfer sizes, and many other observable phenomena are evaluated to determine whether the entity attempting to access the resource is doing so in an acceptable manner. If an entity starts attempting to access resources it usually doesn’t, or at times of day when it is usually not working, these behaviors trigger an alert, and possibly an automated change in authorization.
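As a rough illustration of how such signals might be combined, the sketch below counts how many observed parameters deviate from an entity's baseline and maps the count to a reaction. The class names, the "ten times typical" transfer threshold, and the three reactions are all assumptions made for this example; real behavioral analytics are considerably more sophisticated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessEvent:
    hour: int             # local hour of the request, 0-23
    resource: str         # resource being requested
    megabytes_out: float  # data transferred in this session


@dataclass(frozen=True)
class Baseline:
    usual_hours: range          # hours the entity normally works
    usual_resources: frozenset  # resources the entity normally touches
    typical_megabytes: float    # typical transfer size per session


def risk_score(event: AccessEvent, baseline: Baseline) -> int:
    """Count how many observed signals deviate from the entity's baseline."""
    score = 0
    if event.hour not in baseline.usual_hours:
        score += 1
    if event.resource not in baseline.usual_resources:
        score += 1
    # Hypothetical threshold: ten times the typical transfer counts as unusual.
    if event.megabytes_out > 10 * baseline.typical_megabytes:
        score += 1
    return score


def react(score: int) -> str:
    """Map a score to an action: allow, raise an alert, or demand step-up auth."""
    if score == 0:
        return "allow"
    if score == 1:
        return "alert"
    return "step_up"


baseline = Baseline(range(8, 18), frozenset({"wiki", "crm"}), 20.0)
normal = AccessEvent(hour=10, resource="crm", megabytes_out=5.0)
odd = AccessEvent(hour=3, resource="payroll-db", megabytes_out=900.0)
print(react(risk_score(normal, baseline)))  # allow
print(react(risk_score(odd, baseline)))     # step_up
```

The second request deviates on all three signals, so the sketch escalates rather than merely logging it, which mirrors the "automated change in authorization" described above.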

Shrinking the perimeter to the individual resource level makes it possible to achieve very fine-grained access control. A specific set of APIs may be allowed for some users, and a different subset allowed for others, for example. Or a contractor may be allowed access only to the system they support and nothing else. While this is possible and often attempted in traditional architectures, the extremely fine-grained access control offered by ZTA style architecture can make this easier to manage and monitor.
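A minimal sketch of what per-resource, per-operation policy could look like follows. The roles, resource names, and API operations are hypothetical, and a real deployment would keep such policy in a policy engine rather than in a dictionary.

```python
# Hypothetical policy table: (role, resource) -> set of allowed API operations.
POLICY = {
    ("analyst", "billing-api"): {"GET /invoices"},
    ("billing-admin", "billing-api"): {"GET /invoices", "POST /refunds"},
    # A contractor is limited to the one system they support.
    ("contractor", "hvac-controller"): {"GET /status", "POST /setpoint"},
}


def is_allowed(role: str, resource: str, operation: str) -> bool:
    """Default deny: anything not explicitly listed is refused."""
    return operation in POLICY.get((role, resource), set())


print(is_allowed("analyst", "billing-api", "GET /invoices"))     # True
print(is_allowed("analyst", "billing-api", "POST /refunds"))     # False
print(is_allowed("contractor", "billing-api", "GET /invoices"))  # False
```

The default-deny lookup is the important design choice here: a role gains nothing by existing, only by being listed against a specific resource and operation.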

In addition, BYOD becomes easier to manage. Non-corporate devices can be limited to access only a subset of resources, or prevented from any access at all unless they meet certain specific requirements in terms of patch level or other qualities.
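One way to picture this is a posture check that decides which resources a device may reach. The fields, the minimum patch level, and the resource names below are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    corporate_owned: bool
    os_patch_level: tuple  # e.g. (14, 5) for version 14.5
    disk_encrypted: bool


MIN_PATCH_LEVEL = (14, 2)  # hypothetical minimum acceptable patch level


def allowed_resources(device: Device) -> frozenset:
    """Return the set of resources this device may reach, based on posture."""
    # Devices that fail basic posture checks get no access at all.
    if device.os_patch_level < MIN_PATCH_LEVEL or not device.disk_encrypted:
        return frozenset()
    if device.corporate_owned:
        return frozenset({"email", "wiki", "source-code"})
    # Compliant personal devices get only a subset.
    return frozenset({"email", "wiki"})


personal = Device(corporate_owned=False, os_patch_level=(14, 5), disk_encrypted=True)
stale = Device(corporate_owned=True, os_patch_level=(13, 9), disk_encrypted=True)
print(sorted(allowed_resources(personal)))  # ['email', 'wiki']
print(sorted(allowed_resources(stale)))     # []
```

Note that in this sketch an out-of-date corporate laptop fares worse than a well-maintained personal device: ownership alone confers no trust.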

Provided the ZTA implementation is sufficiently extensive and complete that the external perimeter can be removed entirely, remote workers and contractors no longer need to be granted VPN access. They can simply be granted access to only those resources they require, and no others. This can simplify remote access and even remove the need for VPNs entirely, although the latter is rarely achieved in practice outside of cloud environments. Similarly, guest networks for visiting customers are reasonably easy to implement using this architecture; guests are allowed only outbound connectivity.

Disadvantages of ZTA

This radical departure from a traditional architecture comes at some cost, however. Given that this is, at least in some regards, an entirely new paradigm, it will take time for current security administrators to adapt to it. The actual implementation of this architecture usually requires significant investment in additional controls, with attendant learning, training, and support costs. Integrating ZTA is complex, and maintaining it promises to be complex as well, with an entirely different model of access granting, and many more places where robust monitoring must take place. The very advantages of fine-grained access control may be offset by the difficulties of managing such complexity. And behavioral access control has not been widely implemented so far, and rarely has it been implemented easily.

Finally, any large architectural change requires a balance between keeping the business running and transitioning to the new architecture. The move to ZTA requires careful planning, change control, and a great deal of time and effort.

How New Is Zero Trust, Really?

If you have read this far and are rolling your eyes because it all sounds very familiar, you are not alone. At the level of principles, ZTA sounds less like a completely new architecture and more like an incremental advancement of previous principles, such as role-based access control (RBAC), defense in depth, least privilege, and “assume breach.” However, once we start to sketch out the options to implement re-authentication for every request, it becomes clearer what a substantive change this really is. For this reason, one of the strongest aspects of the NIST document published in 2020 is its emphasis on a few core components that are necessary to accomplish a true ZTA: Policy Decision Points (PDPs) and Policy Enforcement Points (PEPs). The presence of PDPs and PEPs in front of every enterprise asset, through which every request must pass, is the most significant and indicative differentiator between ZTAs and previous attempts to implement similar, though narrower, principles.

PDPs and PEPs are abstract capabilities that can take different forms depending on the needs of the enterprise (more on that below). But in all cases, Policy Decision Points are components that evaluate the holistic posture of the requesting subject and the requested object and produce an access control decision. Policy Enforcement Points are the components responsible for opening and closing connections to specific resources based on the output of the Policy Decision Point. PDPs and PEPs can be consolidated or distributed, and in some cases the PEP will be split between an agent on the subject system (such as an employee laptop) and a gateway of some form on the resource. But in all cases, PDPs and PEPs represent the capabilities through which the basic goal of re-authenticating and re-authorizing every request is accomplished.
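The division of labor between the two components can be sketched in a few lines. This is a toy model under stated assumptions, not a reference design: the class names, the `grants` table, and the string responses are invented for the example, and the only "posture" signal evaluated is a single device-compliance flag.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Request:
    subject: str            # claimed identity of the requester
    device_compliant: bool  # simplified stand-in for device posture
    resource: str           # resource being requested


class PolicyDecisionPoint:
    """Evaluates subject, posture, and resource; returns allow or deny."""

    def __init__(self, grants: dict):
        self._grants = grants  # subject -> set of permitted resources

    def decide(self, request: Request) -> bool:
        permitted = self._grants.get(request.subject, set())
        return request.device_compliant and request.resource in permitted


class PolicyEnforcementPoint:
    """Sits in front of a resource and opens it only if the PDP says yes."""

    def __init__(self, pdp: PolicyDecisionPoint, backend: Callable[[Request], str]):
        self._pdp = pdp
        self._backend = backend

    def handle(self, request: Request) -> str:
        # Every request is evaluated; nothing is remembered between calls.
        if not self._pdp.decide(request):
            return "403 denied"
        return self._backend(request)


pdp = PolicyDecisionPoint({"alice": {"payroll-db"}})
pep = PolicyEnforcementPoint(pdp, backend=lambda r: f"200 {r.resource}")
print(pep.handle(Request("alice", True, "payroll-db")))    # 200 payroll-db
print(pep.handle(Request("alice", False, "payroll-db")))   # 403 denied
print(pep.handle(Request("mallory", True, "payroll-db")))  # 403 denied
```

The PEP holds no policy of its own and the PDP never touches the resource, which is what allows the two to be consolidated or distributed independently.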

Approaches, Variations, and Scenarios

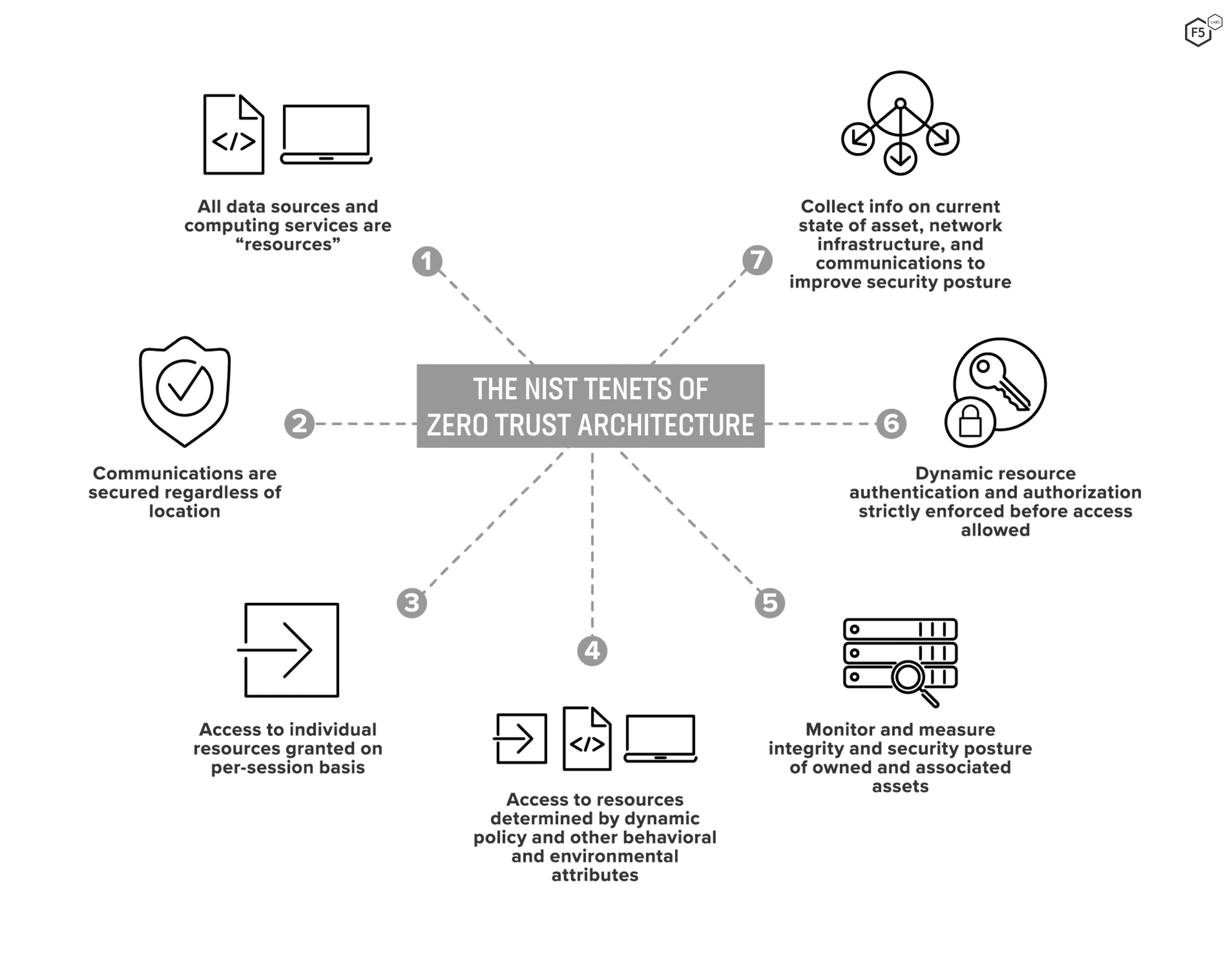

By now it should be clear that zero trust (as a set of abstract principles) can manifest in many different ZTAs (as an actual design). This is a strength of zero trust, since it places the initiative in the hands of individual architects and practitioners to evaluate and prioritize the various core tenets (Figure 1) in ways that suit each organization.

Figure 1: NIST tenets of zero trust architecture.

At the same time, the variability of this architecture is part of the reason there seems to be so little clarity about how to actually implement zero trust. In all cases, the goal of pushing the access control perimeter out to envelop each resource separately will make use of at least one, and most likely many, PDPs and PEPs, but the arrangement of technical components and their interconnections will be unique for most organizations. Fortunately, the NIST document also fleshes out three different architectural approaches, four separate variations in deployment, and five different business-level scenarios to show how principles can be put into action. We will just touch on each briefly here, to illustrate how different organizations can begin to approach their own zero trust project.

Approaches

These approaches represent high-level, enterprise-architecture concerns and denote the overall zero trust strategy.

Enhanced Identity Governance

This approach places the bulk of the policy decision on user identity. Other request parameters such as device posture and behavior can factor in, but they are not the principal criterion. This means that the crux of the policy decision will hinge on the assigned permissions of the claimed identity. This approach is comparatively centralized, with a single or small number of identity provision services controlling access for all identities.

Micro-Segmentation

This approach primarily uses gateway components (such as intelligent routers or firewalls) as the PEPs for groups of similar assets, and the management of those components serves the PDP role. This approach is comparatively decentralized, and network segments can be as small as a single asset if software-based enforcement agents are used.

Network Infrastructure and Software-Defined Perimeters

This approach also uses network infrastructure to enforce policy, similar to the microsegmentation approach above, but does so by dynamically configuring the network to allow approved connections.
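To make "dynamically configuring the network" slightly more concrete, the sketch below models a default-deny flow table that a controller populates only when a connection is approved, with each entry expiring after a short time. The class, its methods, the addresses, and the five-minute lifetime are all hypothetical; a real software-defined perimeter would program actual network devices or overlay tunnels.

```python
class DynamicPerimeter:
    """Default-deny flow table; flows exist only while an approval is live."""

    def __init__(self):
        self._flows = {}  # (source, destination, port) -> expiry timestamp

    def approve(self, source, destination, port, now, ttl_seconds=300.0):
        """Called by the controller after a positive policy decision."""
        self._flows[(source, destination, port)] = now + ttl_seconds

    def permits(self, source, destination, port, now):
        """A packet passes only if an unexpired approval covers its flow."""
        expiry = self._flows.get((source, destination, port))
        return expiry is not None and now < expiry


perimeter = DynamicPerimeter()
perimeter.approve("10.0.0.7", "10.1.0.20", 443, now=1000.0)
print(perimeter.permits("10.0.0.7", "10.1.0.20", 443, now=1100.0))  # True
print(perimeter.permits("10.0.0.7", "10.1.0.20", 22, now=1100.0))   # False
print(perimeter.permits("10.0.0.7", "10.1.0.20", 443, now=1400.0))  # False
```

Timestamps are passed in explicitly so the behavior is easy to follow; the third call fails because the approval has lapsed and must be requested again.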

Deployed Variations

These deployed variations are smaller scale vignettes for setting up individual components or groups of components and are supposed to provide more tactical guidance than the approaches above.

Device Agent/Gateway-Based

This style of deployment splits the PEP into two agents, one on the requesting side and one on the resource side, which communicate with one another at the time of the request. The agent on the requesting asset is responsible for routing the request to the appropriate gateway; the gateway on the resource side communicates with the PDP to evaluate the claims of the asset. This deployment often works well with the microsegmentation and software-defined perimeter approaches listed above.

Enclave-Based

This deployment model is similar to the device agent/gateway model we just discussed, except that the gateway protects a resource enclave, a grouping of similar resources or functions. This approach represents a sort of compromise of zero trust principles (A Little Bit of Trust Architecture?), since it theoretically makes it possible for an actor who has permissions within the enclave to act on any of the resources within that enclave. This represents a way to implement zero trust on systems that are not amenable to some of the more granular approaches, but it places an extra burden on the permissions and role-based access control, which must be both accurate and precise.
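The trade-off is easy to see in a small sketch where the gateway decides per enclave rather than per resource. The enclave names, resources, and grants here are made up for the example.

```python
# Hypothetical enclaves: groupings of similar resources behind one gateway.
ENCLAVES = {
    "finance": {"ledger", "payroll"},
    "build": {"ci-runner", "artifact-store"},
}

# Hypothetical grants: subject -> enclaves the subject may enter.
ENCLAVE_GRANTS = {"alice": {"finance"}, "bob": {"build"}}


def enclave_of(resource):
    """Return the name of the enclave containing the resource, or None."""
    for name, members in ENCLAVES.items():
        if resource in members:
            return name
    return None


def gateway_allows(subject, resource):
    """The gateway checks enclave membership only, not the individual resource."""
    enclave = enclave_of(resource)
    return enclave is not None and enclave in ENCLAVE_GRANTS.get(subject, set())


print(gateway_allows("alice", "ledger"))     # True
print(gateway_allows("alice", "payroll"))    # True, even if she only needs the ledger
print(gateway_allows("alice", "ci-runner"))  # False
```

Because the decision stops at the enclave boundary, anything finer than that has to come from the permissions inside the enclave, which is the extra burden described above.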

Resource Portal

This deployment is comparatively centralized, with one single system representing the PEP for all assets (or a large group of them). This approach has the advantage of flexibility, since it does not require agents on all client assets, but it also limits the visibility and control over user postures and actions compared with other approaches.

Device Application Sandboxing

This approach uses virtualization, such as virtual machines or containers, to isolate the application from the asset it is running on. Whereas virtualization has largely been seen as a way to protect servers from workloads, in a zero trust architecture this approach protects the workload from the untrusted asset on which it needs to run.

Scenarios

NIST’s scenarios are the most strategic, business-level examples they provide, and are useful for helping business stakeholders understand what zero trust means in practical terms.

Enterprise with Satellite Facilities

In this scenario, an organization with a single primary facility might need to grant access to secondary facilities or remote staff. While access to some resources is necessary for the satellite workers, more critical assets might be restricted to on-site access where other controls can contribute to the overall posture. In such a scenario, the PDP is most likely to be a cloud service, and the PEP will either manifest as agents on user endpoints or as a resource portal such as a webtop. Many organizations have already implemented the architecture necessary to accomplish this, and only need to extend their authentication processes a little further to achieve a true ZTA.

Multi-Cloud/Cloud-to-Cloud Enterprise

In this scenario, an organization’s resources might be hosted in two or more separate cloud environments, which makes it undesirable to route all traffic through a centralized, on-premises access point. As a result, each application, service, or data source would have a PEP controlling access, and users would connect to each PEP, which would communicate with a cloud-based PDP.

Enterprise with Contracted Services/Nonemployee Access

This is a specific scenario in which staff from an external organization requires access to certain resources (potentially as simple as basic access to the Internet) but should not have access to all assets in a corporate LAN. Either a resource portal or installed agents on vetted assets would serve as PEPs, and those users lacking either the right credentials at the portal or the right agent would have access to the Internet and any public-facing resources, but not to corporate assets.

Collaboration across Enterprise Boundaries

This represents a situation in which workers from one organization need access to specific resources under the control of a partner organization, but the partner needs to control the risk associated with opening up their environment. A cloud-based PDP, paired either with local agents or a web portal for the PEP, would allow organizations to grant access to specific resources without having to change their network or enterprise architecture.

Enterprise with Public/Customer-Facing Services

In such a situation, enterprises need to grant access to users on assets that are completely beyond the control of the enterprise. If the resources are completely public and not access controlled, there is no access decision to make and therefore little need for zero trust, but once users or customers are authenticating to access specific resources, user management in decentralized environments becomes laborious. Given the difficulties of controlling the security posture of the user assets, a ZTA in such a scenario would make heavy use of behavioral metrics to assess whether the requests are innocuous. In practice this would look like a resource portal or webtop, but with enhanced PEP functionality behind the scenes.

The Federal Zero Trust Mandate

In some recent discussions between F5 Labs researchers and U.S. Government security architects and practitioners, one of the main topics of conversation (and significant concern) was the recent Memorandum for the Heads of Executive Departments and Agencies (M-22-09), “Moving the U.S. Government Toward Zero Trust Cybersecurity Principles,” which requires all agencies to meet specific cybersecurity standards and objectives by the end of FY 2024. At the time of writing, that gives American government organizations approximately a year and a half to scope, design, and implement a ZTA that not only fits those principles, but also serves the agencies’ missions in a performant way.

Hopefully this article has clarified some of the confusion surrounding zero trust and the specific elements necessary to turn principles into architectures, and architecture into operations. Perhaps the key to understanding the concept of ZTA is to focus on PEPs, PDPs, their degree of centralization, and the fundamental objective of authenticating and authorizing requests instead of users. Zero trust can be a transformative approach to controlling risk, or it can become another trend without much substance, and our ability to understand the differences between its theory and practice will determine which way it goes.