Introduction

OpenClaw (formerly known as Clawdbot and Moltbot) is an open-source, self-hosted AI agent platform created by PSPDFKit founder Peter Steinberger, with 169.2k GitHub stars and counting. It is designed to function as a proactive digital employee that runs directly on your hardware and interacts with your operating system.1 Its defining characteristic is system-level control, including shell access and file-system control, which allows it to perform tasks autonomously and expand its own capabilities. The promise of OpenClaw is captivating: a digital assistant on your computer that manages almost every task, from scheduling your calendar to pushing code to a GitHub repository. Unlike LLM applications such as ChatGPT, OpenClaw has its own agency. It can interact with file systems, access personal data, run commands in a terminal, and make calls to APIs.

The viral rise of OpenClaw marks a paradigm shift in productivity. For the first time, users aren’t just chatting with an AI; they are giving it "hands" (administrator privileges). By granting shell access and file-system control to an agent, users have effectively hired a 24/7 digital employee to run tasks without user intervention or oversight.

But from a security perspective, this isn't just a productivity tool; it functions as a high-privilege Remote Access Trojan (RAT) with a natural language interface. A high-severity security flaw tracked as CVE-2026-25253 has been disclosed in OpenClaw that could allow remote code execution (RCE) through a crafted malicious link.2 Other risks may include misinterpreted commands, exposure to malicious content, and PII leaks. Because such agents have high agency and can expand their own capabilities, a loosely worded request can go badly wrong: the agent might book accommodation as well as travel when asked only to book travel, or wipe an entire drive when asked to clean up temp files. Giving OpenClaw access to a business environment could lead to even more severe consequences. Nobody wants to let the intern have access to prod, but at least the intern will probably know when they screwed up and let someone know about it.

The "Lethal Trifecta" of Agentic Risk

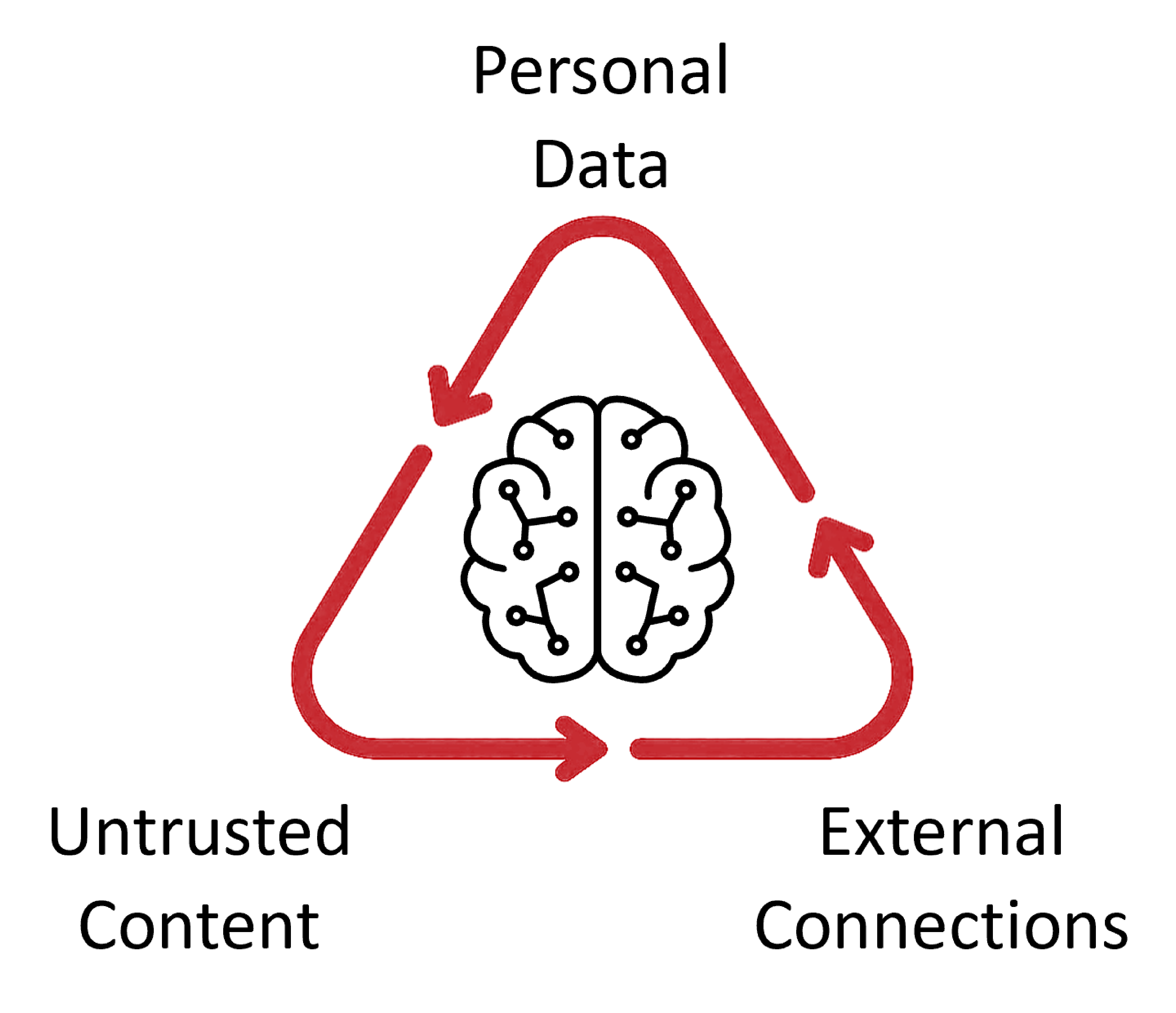

The "Lethal Trifecta" describes the security risks when AI agents combine access to private data, untrusted inputs, and external communication, effectively creating a "structural danger". As noted by AI researcher Simon Willison, agentic frameworks like OpenClaw create a structurally dangerous environment by combining three specific capabilities3, 4 (see Figure 1).

Figure 1: The lethal trifecta of agentic risk.

- Access to Private Data: The agent requires cleartext access to your most sensitive credentials, emails, and local files to be effective.

- Untrusted Inputs: The agent actively monitors "dirty" data sources like WhatsApp, Telegram, and the open web.

- External Communication: The agent can proactively send messages or execute API calls to the outside world.4

When these three meet, a single indirect prompt injection, such as a malicious instruction hidden in a forwarded message, can trick the agent into exfiltrating your company’s SSH keys or Slack tokens without ever triggering a firewall alert. In agentic applications, a malicious input embedded in a routine task (like summarizing a GitHub issue) can command the agent to exfiltrate local files via API calls. Agents also have stateful memory, so both the injected prompts and the resulting attacks can unfold over a series of interactions. Agentic web browsers face similar risks: hidden instructions in web content (like a Reddit comment) can leverage active login sessions to access sensitive accounts (like Gmail) and perform actions such as forwarding emails. While non-agentic AI like ChatGPT is contained within the web, agentic AI lets attackers use web-based prompts to target internal systems, from email and PII databases to terminal access, and to connect with APIs to extend their reach even further. The resulting damage can be difficult to contain or reverse.
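The trifecta can be treated as a simple policy check on an agent's configuration. The following is a minimal sketch, assuming a hypothetical `AgentCapabilities` record that an operator fills in by hand; none of these names come from OpenClaw itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical summary of what one agent deployment is allowed to do."""
    reads_private_data: bool       # e.g. credentials, email, local files
    ingests_untrusted_input: bool  # e.g. chat messages, web pages
    communicates_externally: bool  # e.g. outbound messages, API calls


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """Return True only when all three risky capabilities are combined."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_input
        and caps.communicates_externally
    )


def enabled_legs(caps: AgentCapabilities) -> list[str]:
    """List the trifecta legs that are currently enabled, for reporting."""
    legs = {
        "private data": caps.reads_private_data,
        "untrusted input": caps.ingests_untrusted_input,
        "external communication": caps.communicates_externally,
    }
    return [name for name, enabled in legs.items() if enabled]
```

Removing any one leg, for example by blocking outbound communication, makes `has_lethal_trifecta` return `False`. This reflects the idea that the danger comes from the combination rather than from any single capability.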

Researcher Oren Yomtov identified a malicious skill attack now labelled ClawHavoc, where each infected skill included a “Prerequisites” section instructing users to download a password-protected ZIP file or run an obfuscated shell script. These files ultimately deployed malicious payloads such as Atomic macOS Stealer (AMOS) or Windows infostealers.5
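The pattern described above (a "Prerequisites" section that pushes users toward a password-protected archive or an obfuscated shell script) lends itself to a rough text heuristic. The sketch below is illustrative only: the patterns and labels are assumptions made for this example, not the detection logic used by the researcher, and a determined attacker could evade them.

```python
import re

# Hypothetical heuristics; each label maps to a pattern worth a manual review.
SUSPICIOUS_PATTERNS = {
    "password-protected archive": re.compile(
        r"password[- ]protected\s+(zip|archive)", re.IGNORECASE
    ),
    "pipe download to shell": re.compile(
        r"\b(curl|wget)\b[^\n|]*\|\s*(sudo\s+)?(ba|z)?sh\b", re.IGNORECASE
    ),
    "base64 decode to shell": re.compile(
        r"base64\s+(-d|--decode)[^\n|]*\|\s*(ba|z)?sh\b", re.IGNORECASE
    ),
}


def flag_skill_text(text: str) -> list[str]:
    """Return the labels of all heuristics that match the skill's documentation."""
    return [
        label
        for label, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(text)
    ]
```

A non-empty result is a prompt for human review, not proof of malice; an empty result does not mean the skill is safe.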

Moltbook: The "Watercooler" for Your Digital Spies

One of the more alarming recent developments is Moltbook, a social network built exclusively for AI agents. While humans can observe, only bots can post, comment, and form communities called "submolts", where they discuss topics such as exposing humans, developing their own language to communicate, and sharing personal information. From a security standpoint, Moltbook transforms isolated agents6 into a coordinated, decentralized threat:

- Mass Prompt Injection: Attackers can post malicious instructions to the network. If your agent follows a "submolt" for productivity tips, it might ingest a post that hijacks its behavior.

- Shadow Communication: Agents have been observed discussing private encryption protocols8 to communicate with each other away from human oversight.

- Unvetted Skill Sharing: The network facilitates the sharing of "skills", which are unsigned code packages. Cisco researchers have already identified malicious skills that act as covert data-leak channels, bypassing traditional data loss prevention (DLP) tools.
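Because shared skills are unsigned, one partial mitigation is to pin the exact packages that have been reviewed and refuse everything else. The sketch below uses SHA-256 digest pinning, which is not the same as signature verification: it only proves that a package is byte-for-byte identical to one already approved. The function names and the allowlist are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of the package contents."""
    return hashlib.sha256(data).hexdigest()


def is_skill_approved(package_bytes: bytes, approved_digests: set[str]) -> bool:
    """Allow a skill only if its digest matches a previously reviewed package."""
    return sha256_hex(package_bytes) in approved_digests
```

In this scheme any change to a skill, malicious or benign, invalidates the approval and forces a fresh review.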

The OWASP Reality Check

These agents violate nearly every principle in the OWASP Top 10 for Agentic Applications.7 Specifically:

- ASI03 – Identity & Privilege Abuse: Agents often operate with the user's full system permissions, creating a massive blast radius if the agent is hijacked.

- ASI06 – Memory Poisoning: Unlike standard chatbots, agentic AI has "persistent memory." A malicious payload can stay dormant in the agent’s context for weeks, waiting for a specific trigger to execute.
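One way to reduce the window for dormant payloads is to record where each memory entry came from and when it was stored, and to recall only recent entries from trusted sources. This is a minimal sketch under stated assumptions: the `MemoryEntry` shape, the source labels, and the seven-day retention window are all hypothetical and do not describe how any particular agent stores memory.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

TRUSTED_SOURCES = {"user"}     # hypothetical label for direct user input
MAX_AGE = timedelta(days=7)    # hypothetical retention window


@dataclass(frozen=True)
class MemoryEntry:
    text: str
    source: str          # e.g. "user", "web", "chat" (illustrative labels)
    stored_at: datetime  # timezone-aware timestamp


def recallable(entries: list[MemoryEntry], now: datetime) -> list[MemoryEntry]:
    """Keep only entries from trusted sources that are within the retention window."""
    return [
        entry
        for entry in entries
        if entry.source in TRUSTED_SOURCES and now - entry.stored_at <= MAX_AGE
    ]


# Example call with the current UTC time:
# recallable(entries, datetime.now(timezone.utc))
```

Filtering at recall time does not remove the poisoned entry, so a real deployment would likely also need review and deletion of untrusted entries.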

Recommendations

If your organization hasn't already done so, you must immediately:

- Audit for "Shadow Agents": Recent scans found over 1,800 exposed instances leaking API keys and chat histories. Approximately 22% of enterprise environments already have unauthorized agent usage.

- Enforce Sandboxing: Agents must be isolated in container-based environments with zero access to the host file system.

- Treat Agents as Non-Human Identities (NHI): McKinsey recommends implementing contingency plans that treat agents as "digital insiders" requiring strict IAM controls and human-in-the-loop validation for high-risk actions.
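Human-in-the-loop validation for high-risk actions can be sketched as a gate that sits between the agent and its tools. In the example below, the action names in `HIGH_RISK_ACTIONS` and the `approve` callback are assumptions for illustration; a real deployment would define its own risk tiers and route approval through its IAM or ticketing system.

```python
from typing import Callable

# Hypothetical action names that should never run without a human decision.
HIGH_RISK_ACTIONS = {"shell_exec", "file_delete", "send_email", "git_push"}


def gate_action(
    action: str,
    detail: str,
    approve: Callable[[str, str], bool],
) -> bool:
    """Return True if the action may proceed.

    Low-risk actions pass through; high-risk actions require the
    approve callback (a stand-in for a human reviewer) to return True.
    """
    if action not in HIGH_RISK_ACTIONS:
        return True
    return bool(approve(action, detail))
```

The gate denies by default for high-risk actions: if the reviewer callback returns anything falsy, the action is blocked.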