Automating the deployment and configuration management of application delivery and security devices has become a near-mandatory practice. In the 2017 IDG FutureScape report, automation and multi-cloud management ranked among the key initiatives that will impact businesses by 2021 [1]. Automation brings scale, reliability, and integration to the deployment of the essential security, optimization, and availability services that applications need, and makes their delivery part of the orchestrated build, test, and deploy workflows that are emerging as the dominant model of application deployment.

Even simple automation of basic tasks like adding new virtual servers or pool members can enable operations to provide self-service capability to application owners or other automated systems—and free time for more productive work, such as building the next wave of automation tools.

The need to automate takes on even greater significance when an organization begins to adopt multiple cloud platforms to deliver IT services. When you are trying to deploy services into multiple locations with different platform characteristics, automation can help reduce the impact of increased operational overhead and decrease errors due to unfamiliarity with new platforms.

But how and what to automate? With different operations models, interfaces, and languages, automation software can work at a single device layer, or as more complex, multi-system tools. Infrastructure-as-a-Service (IaaS) cloud platforms offer their own native tools to deploy virtual infrastructure and services. In addition, F5 offers a range of interfaces and orchestration options. While this breadth of tools and options gives you the opportunity to automate in a way that best suits your organization, choosing the right tool can be a daunting task—and the risk of complexity and tool proliferation is real.

In this paper, we will provide an overview of ways you can automate the deployment, management, and configuration of F5 BIG-IP appliances (both physical and virtual) along with some advice about how to choose the path that’s right for your business.

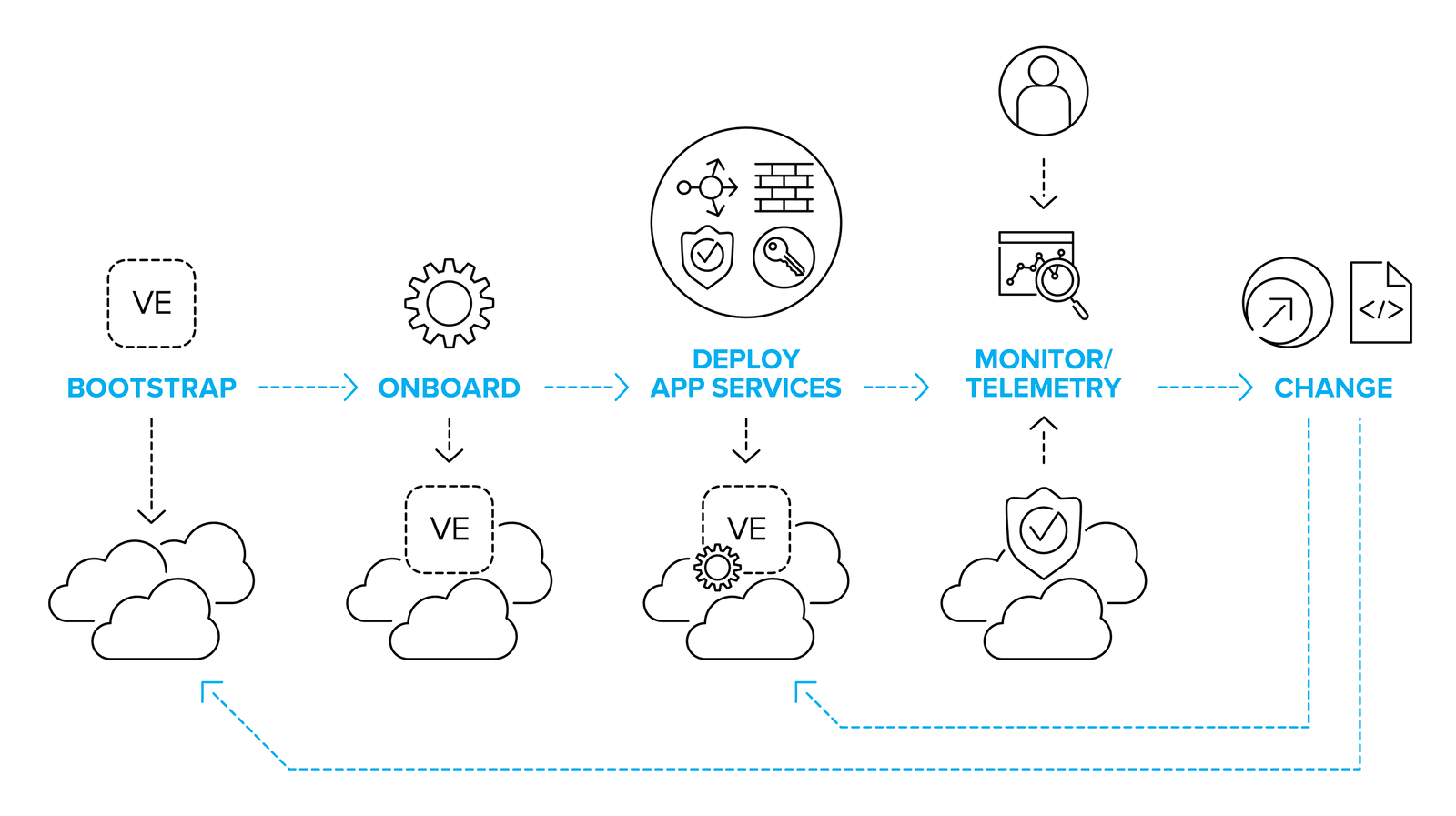

There are a number of key automation points in the lifecycle of delivering application services. Depending on your infrastructure model and application deployment methods, you might need to develop all, or only a few of them.

If you’re deploying services onto a high-capacity, multi-tenant physical or virtual BIG-IP, then bootstrapping and onboarding activities won’t be a high priority compared to deploying, monitoring, and updating the application services configurations for the hundreds or thousands of applications it supports.

If you’re looking at a deployment model where every application has dedicated BIG-IP instances in multiple environments – including potentially ephemeral instances created on demand for test and QA – then automating the bootstrapping and onboarding processes is as much a part of the critical path as deploying the app services themselves.

Whatever your environment and however many processes you need to automate, there are some key topics you need to consider.

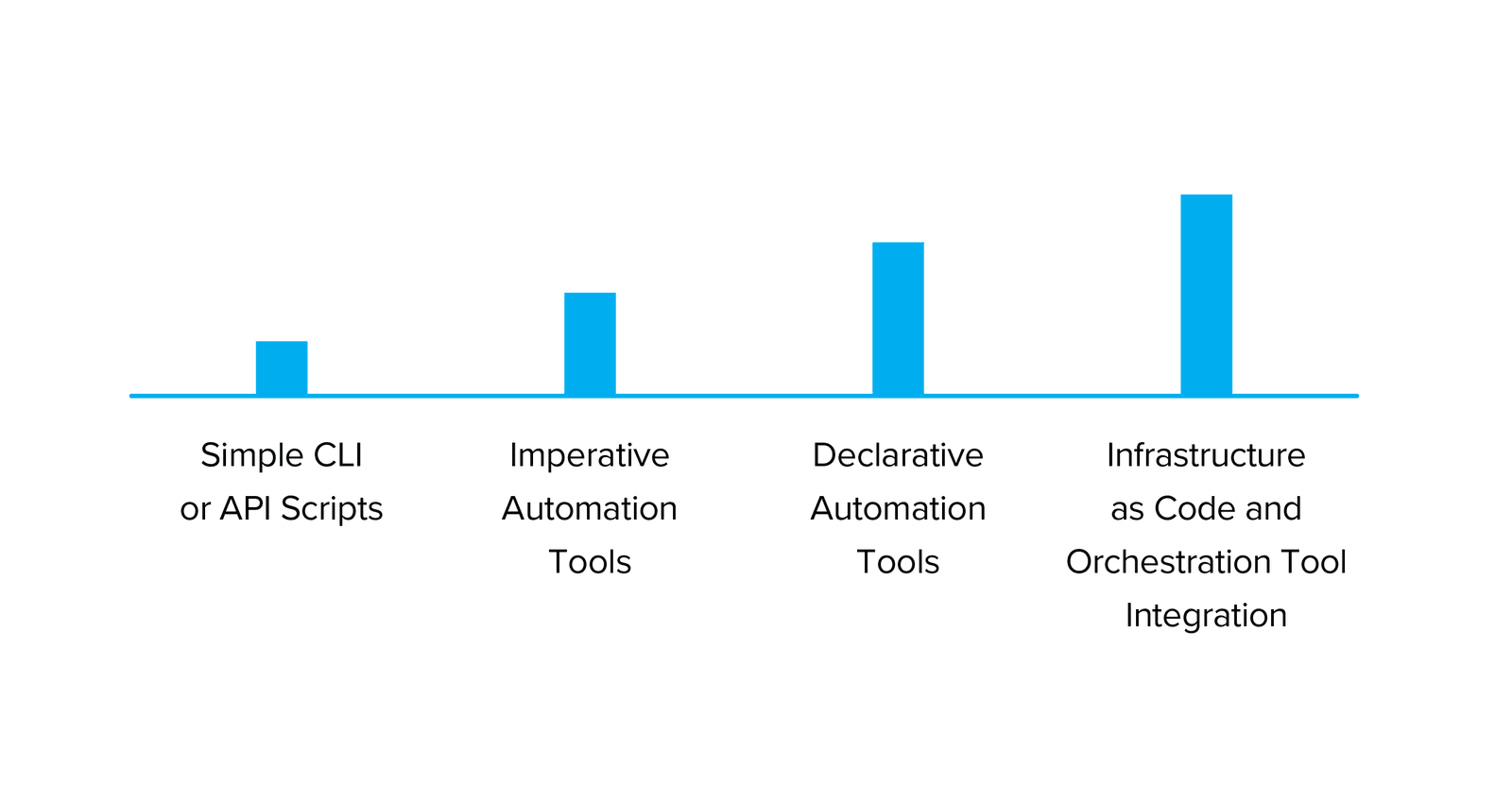

Automation covers a range of activities. At one end of the spectrum is the development of simple scripts written in Bash, TMSH, Python, or other languages that might be run locally to speed up manual configuration activities. At the other end of the spectrum lies a full “infrastructure-as-code” system that combines source code management, workflow orchestrators, and (potentially) multiple automation tools to create a system where the configuration of the infrastructure is defined by, and changed with, text files contained within a repository. Between these two extremes lie a number of different options to help you manage the deployment and configuration of a BIG-IP platform.

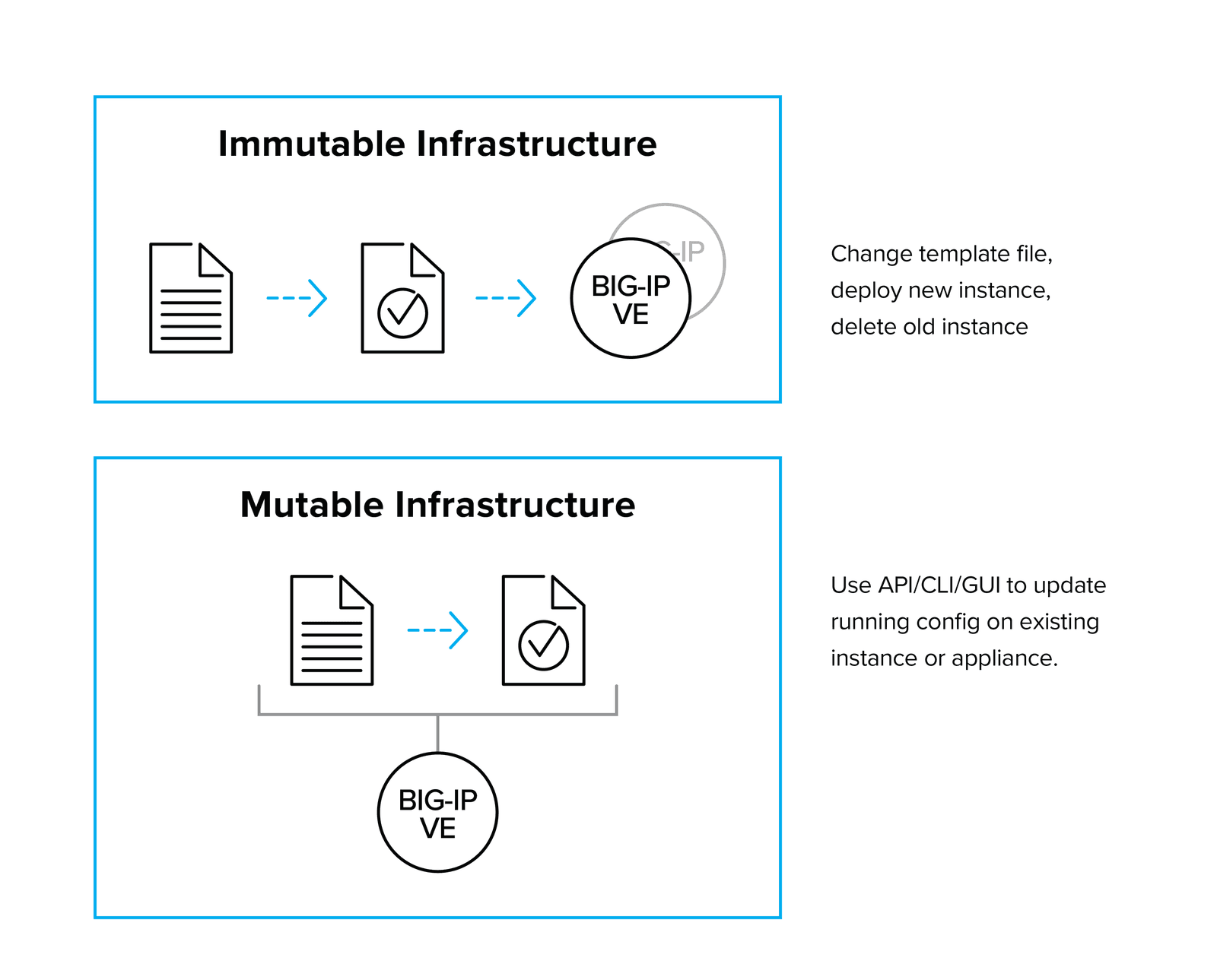

Most current BIG-IP deployments could be considered mutable, which means that we can expect their configuration to change over time. This is because the BIG-IP platform is mostly deployed as a multi-tenant device that supplies services to multiple applications. As new applications are deployed—or the existing applications scale or require additional services—the configuration of the BIG-IP will be updated to correspond. This method of deployment enables infrastructure teams to manage a set of centralized infrastructure, which supplies services to applications from a common platform.

However, sometimes BIG-IP platforms are deployed as part of a discrete application stack, where the services of a particular BIG-IP are tied to a specific application or service. In this situation, we could treat the BIG-IP configuration as immutable; that is, the configuration is installed at boot, or as part of the software image, and is not changed during the lifecycle of the BIG-IP instance. Configuration changes are effected by altering the software image or startup agent script contents, and then redeploying. This model is often referred to as “nuke and pave.” While this model is less common overall, new BIG-IP licensing models that support per-app instances, enhanced licensing tools, and tools like F5 cloud libs (https://github.com/F5Networks/f5-cloud-libs), a set of Node.js scripts and libraries designed to help you onboard a BIG-IP in a cloud, are making it a viable option for organizations that require an application to have a tightly bound, isolated stack of both code and infrastructure.

There are two conceptual models for how automation interfaces are exposed to consumers. The most common “first wave” automation schemas tend toward an imperative model. In imperative automation models, the automation consumer usually needs to know both what they want to achieve and the explicit steps (usually API calls) needed to achieve it. This often places the burden of understanding the configuration details of advanced services on the consumer, along with the additional complexity and effort of integrating the services with automation tools. It’s akin to asking for a sandwich by specifying every single operation required to make it, rather than just asking for a sandwich with the expectation that the sandwich maker will know the operations (and order of operations) needed to make one.

In contrast, a declarative interface allows consumers (human or machine) to create services by asking for what they want. Detailed knowledge of all the steps required is not necessary as the automation target has the pre-configured workflows or service templates to create the configuration based on the required outcomes. While a declarative interface involves a slightly more complex initial setup, that complexity is offset by the simplicity of operation once suitable service templates are built. That makes it, in general, the preferred mechanism with which to build automation systems.
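The difference between the two models can be sketched in a few lines of Python. This is an illustration only: `FakeDevice`, `imperative_consumer`, and `apply_declaration` are hypothetical names invented here, and the in-memory device stands in for a real automation target rather than any F5 API.

```python
class FakeDevice:
    """In-memory stand-in for an automation target (hypothetical, not an F5 API)."""

    def __init__(self):
        self.pools = {}     # pool name -> list of member addresses
        self.virtuals = {}  # virtual server name -> pool name

    def create_pool(self, name):
        self.pools[name] = []

    def add_member(self, pool, address):
        if pool not in self.pools:
            raise KeyError(f"pool {pool!r} must exist before adding members")
        self.pools[pool].append(address)

    def create_virtual(self, name, pool):
        if pool not in self.pools:
            raise KeyError(f"pool {pool!r} must exist before the virtual server")
        self.virtuals[name] = pool


def imperative_consumer(device):
    """Imperative: the consumer owns every step and the order of the steps."""
    device.create_pool("web_pool")
    device.add_member("web_pool", "192.0.2.10:80")
    device.add_member("web_pool", "192.0.2.11:80")
    device.create_virtual("web_vs", "web_pool")


def apply_declaration(device, declaration):
    """Declarative: the target owns the steps; the consumer states the outcome."""
    for name, service in declaration.items():
        pool = f"{name}_pool"
        device.create_pool(pool)
        for address in service["members"]:
            device.add_member(pool, address)
        device.create_virtual(f"{name}_vs", pool)


imperative = FakeDevice()
imperative_consumer(imperative)

declarative = FakeDevice()
apply_declaration(declarative, {"web": {"members": ["192.0.2.10:80", "192.0.2.11:80"]}})
```

Both devices end up in the same state, but only the imperative consumer had to know that the pool must exist before its members and its virtual server.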

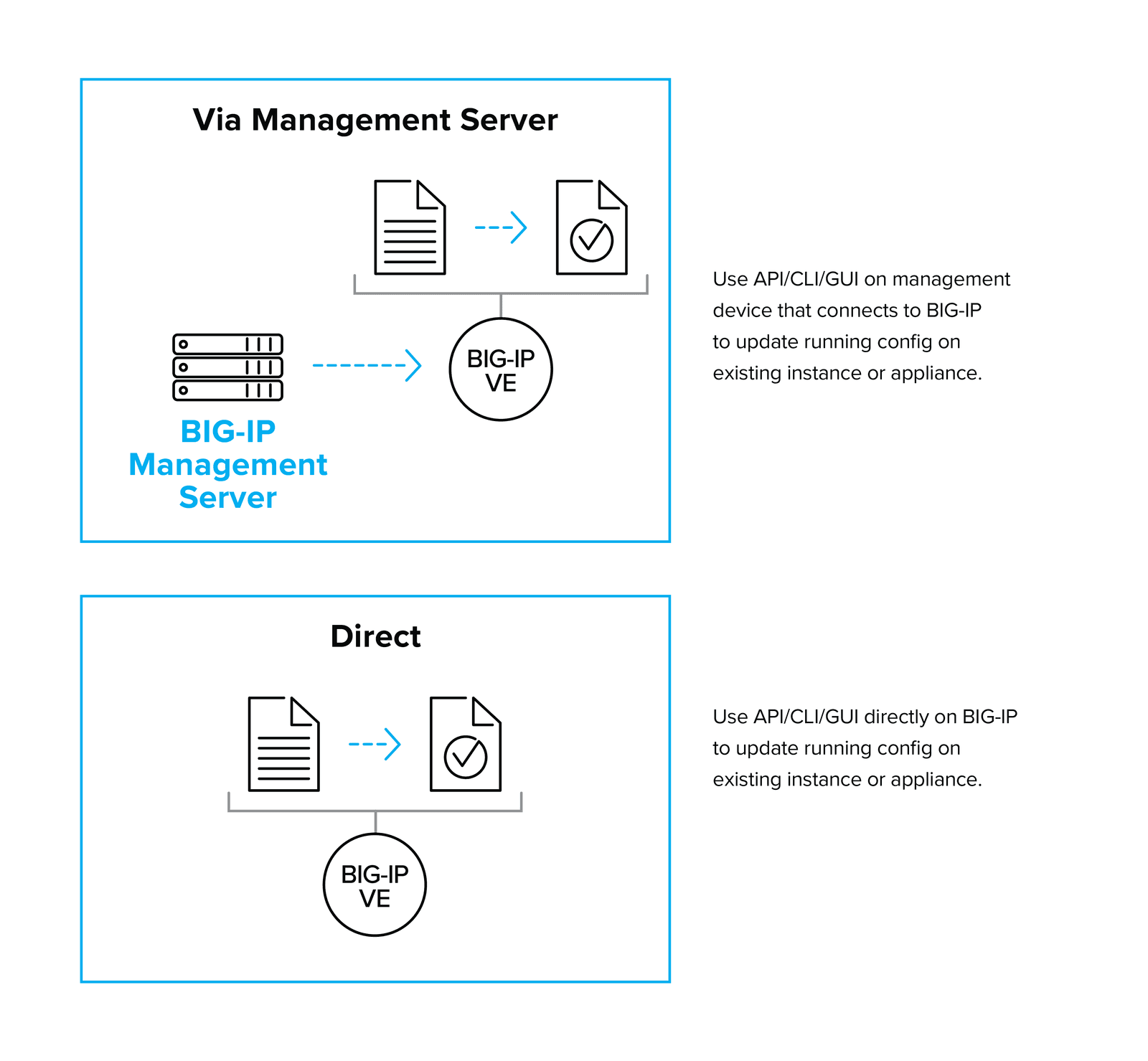

Another decision you’ll need to consider is whether automation API calls should be made from third-party tools directly to the device that needs to be altered or via an additional management tool. Management tools can abstract and simplify operations and may offer additional layers of control and logging versus a direct connection to the managed entity. However, you’ll need to ensure that your management layers are highly available in situations in which the ability to make changes quickly is critical.

BIG-IP devices are most commonly automated via the REST API, which exposes the majority of BIG-IP functionality via a documented schema. The F5-supplied modules for automation tools such as Ansible make extensive use of the REST API. The addition of the iControl LX capability also enables the creation of a user-defined API endpoint that can perform a multi-step operation from a single API call.
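As a rough illustration of a direct REST call, the sketch below builds (but does not send) a request that adds a member to a pool. The URL layout and JSON body follow the commonly documented iControl REST convention as recalled here, and the use of HTTP basic authentication is an assumption; the host name, pool name, and credentials are placeholders. Check the path and body against the API reference for your BIG-IP version before relying on them.

```python
import base64
import json
import urllib.request


def build_add_member_request(host, pool, member, user, password):
    """Build (but do not send) a request that adds a member to an LTM pool."""
    # Assumed convention: objects are addressed as ~partition~name in the URL.
    url = f"https://{host}/mgmt/tm/ltm/pool/~Common~{pool}/members"
    body = json.dumps({"name": member}).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",  # assumption: basic auth is enabled
        },
    )


# Placeholder values for illustration only.
request = build_add_member_request(
    "bigip.example.com", "web_pool", "192.0.2.10:80", "admin", "example-password"
)
```

Passing `request` to `urllib.request.urlopen` would send it; that step is left out so the sketch runs without a device.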

Another common way to automate BIG-IP configuration is to use startup agents, which run at startup and can fetch external information to configure the BIG-IP platform. Startup agents are often used to perform initial configuration to “onboard” devices and can fetch additional scripts and configuration files from third-party sites such as GitHub or your own repository. Startup agents can also be used to completely configure a BIG-IP platform, especially if you’ve chosen a fixed, per-application configuration.

The most common startup agent is cloud-init, which is enabled in all BIG-IP VE images (except in Microsoft Azure) and is most suitable for use in AWS and OpenStack deployments. Alongside cloud-init, F5 supplies a series of cloud startup libraries to help configure BIG-IP on boot.

If you choose to use startup agents to configure a platform post-boot, pay careful attention to managing failure. This is especially important if external sources are used, particularly where an instance might be launched as part of a scaling event. If the external resources are unavailable, how will the system behave? Will additional “zombie” devices be created to try and keep up with demand?
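One way to reason about these failure cases is to make the startup logic retry a bounded number of times and then fail visibly, rather than leave a half-configured instance in service. The sketch below is a generic pattern, not part of cloud-init or F5 cloud libs; `fetch_with_retry`, `onboard`, and the callables passed to them are hypothetical names.

```python
import time


class OnboardingError(Exception):
    """Raised when external configuration cannot be retrieved."""


def fetch_with_retry(fetch, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it succeeds or the attempts are exhausted."""
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch()
        except OSError as error:  # network errors such as ConnectionError derive from OSError
            last_error = error
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # exponential backoff between tries
    raise OnboardingError(f"gave up after {attempts} attempts") from last_error


def onboard(fetch, apply_config, report_unhealthy, **retry_options):
    """Apply fetched configuration, or mark the instance unhealthy and stop."""
    try:
        config = fetch_with_retry(fetch, **retry_options)
    except OnboardingError:
        # Fail visibly so the platform can replace or alert on this instance.
        report_unhealthy()
        return False
    apply_config(config)
    return True
```

Pairing a bounded retry with an explicit unhealthy signal gives the scaling system something to act on, so it can cap replacement attempts instead of launching instances indefinitely.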

In some cases, automation systems can behave as users and execute CLI commands. While this can occasionally solve some problems where API calls may not be complete, in general the difficulty of support and fragility of the solution make this the method of last resort.

Templates and playbooks can create automated deployments and build infrastructures that have a degree of standardization. The appropriate level of standardization makes your infrastructure more robust and supportable. Well-created templates offer a declarative interface, where the requesting entity (user or machine) needs only to know the properties they require, and not the implementation details. Deploying strictly through templates and remediating only by correcting templates can lead to a higher quality service, as problems generally only need to be fixed once. Services are re-deployed from the new template, and the updates made to the template prevent the same problem from arising a second time.
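A service template can be thought of as a function from a few requested properties to a complete, standardized configuration. The sketch below illustrates that idea with a hypothetical `http_service_template` function; the output keys and default values are invented for the example and do not correspond to a real BIG-IP or iApp schema.

```python
def http_service_template(name, virtual_address, members, port=80):
    """Expand a few requested properties into a full, standardized configuration."""
    if not members:
        raise ValueError("a service needs at least one pool member")
    return {
        "virtual_server": {
            "name": f"{name}_vs",
            "destination": f"{virtual_address}:{port}",
            "pool": f"{name}_pool",
            "profiles": ["http", "tcp"],      # fixed by the template
        },
        "pool": {
            "name": f"{name}_pool",
            "monitor": "http",                # fixed by the template
            "load_balancing": "round-robin",  # fixed by the template
            "members": [f"{address}:{port}" for address in members],
        },
    }


# The requester supplies three properties; everything else is standardized.
shop = http_service_template("shop", "203.0.113.10", ["192.0.2.10", "192.0.2.11"])
```

Because the monitor, profiles, and load-balancing method live in one place, correcting a problem in the template corrects it for every service redeployed from it.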

Platform integration tools tie the configuration of BIG-IP services into platforms such as private cloud or container management systems. The mechanisms vary between platforms and implementations but generally fall into three models:

While not a full automation solution, service discovery is a simple and powerful way to integrate BIG-IP configurations with changes in the environment. Service discovery works through periodically polling the cloud system via API to retrieve a list of resources, and modifying the BIG-IP configuration accordingly. This is especially useful in environments where resources are configured into auto-scale groups, because scaling the back-end compute resource requires the load balancer to be aware of the new resources. Service discovery components are supplied with F5 cloud auto-scale solutions for AWS and Azure.
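The polling behavior described above reduces to a simple reconciliation step: compare the members currently configured with the resources the platform reports, then add and remove the difference. The sketch below assumes a hypothetical `list_instances` callable in place of a real cloud API, and a plain Python list in place of a BIG-IP pool.

```python
def reconcile_members(current, discovered):
    """Return the members to add and to remove so the pool matches discovery."""
    current, discovered = set(current), set(discovered)
    return sorted(discovered - current), sorted(current - discovered)


def poll_once(list_instances, pool):
    """One polling cycle: query the platform, then update the pool in place."""
    to_add, to_remove = reconcile_members(pool, list_instances())
    for member in to_remove:
        pool.remove(member)
    pool.extend(to_add)
    return to_add, to_remove


# list_instances is a stand-in for a cloud API call (hypothetical).
pool = ["10.0.0.1:80", "10.0.0.2:80"]
changes = poll_once(lambda: ["10.0.0.2:80", "10.0.0.3:80"], pool)
```

In a real deployment `poll_once` would run on a timer, and the polling interval sets how long a newly scaled instance waits before it receives traffic.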

So, is there an F5-recommended automation scheme? Yes and no. There are so many variables to consider that totally prescriptive advice is unhelpful. We have, however, gathered the key building blocks we think you will need into a coordinated product family.

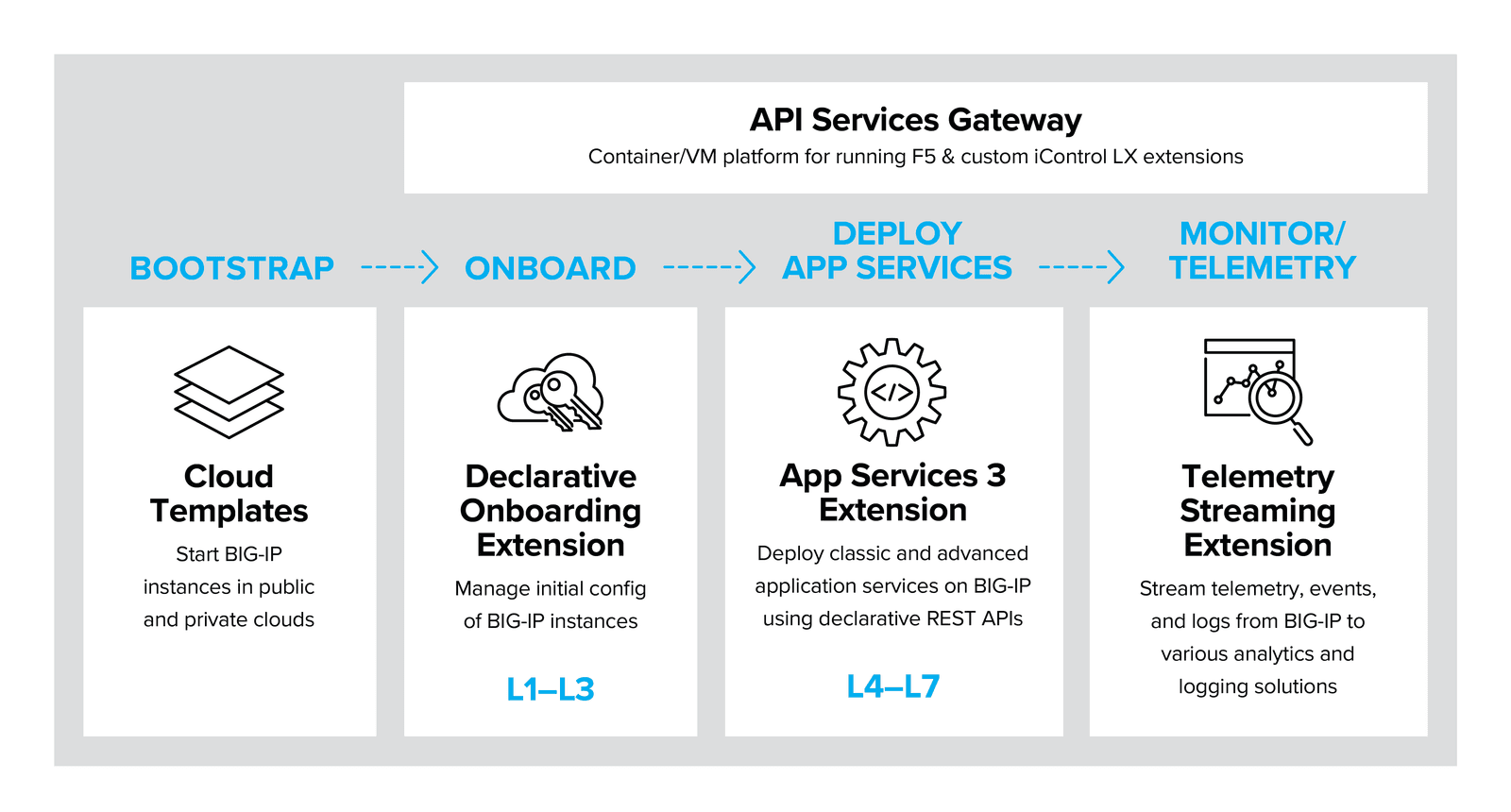

The F5 Automation Toolchain product family comprises the fundamental automation and orchestration building blocks that enable you to integrate F5 BIG-IP platforms into common automation patterns such as CI/CD toolchains.

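The F5 Automation Toolchain contains the following key components, each described below: the Application Services 3 Extension (AS3), Declarative Onboarding, Telemetry Streaming, the API Services Gateway, and cloud templates.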

The Automation Toolchain family is designed to manage the delivery lifecycle of application services, from platform deployment (via cloud templates) through onboarding, service configuration, and advanced telemetry and logging for in-life application performance assurance.

This growing family of supported tools represents the future direction for automating F5 application services. That does not mean other integrations don’t exist or aren’t supported; see the “Other automation tools” section of this document.

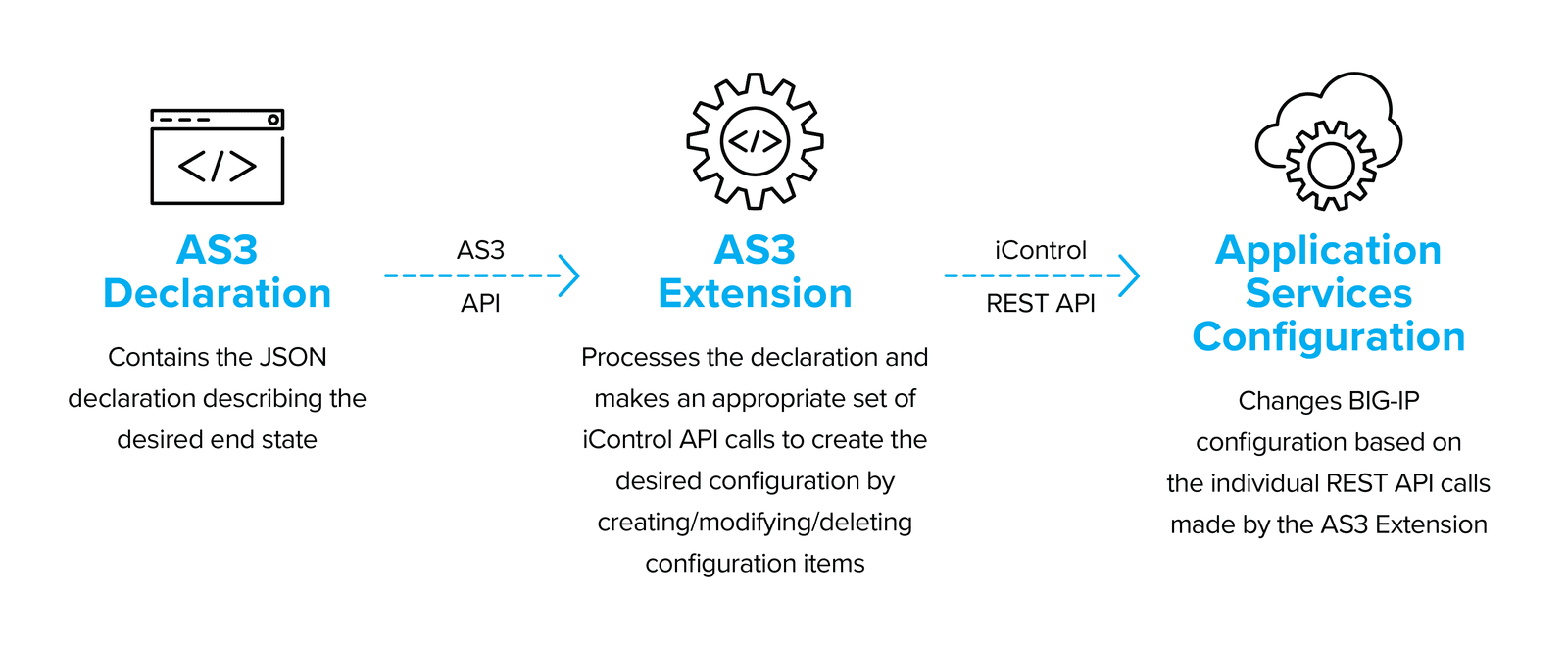

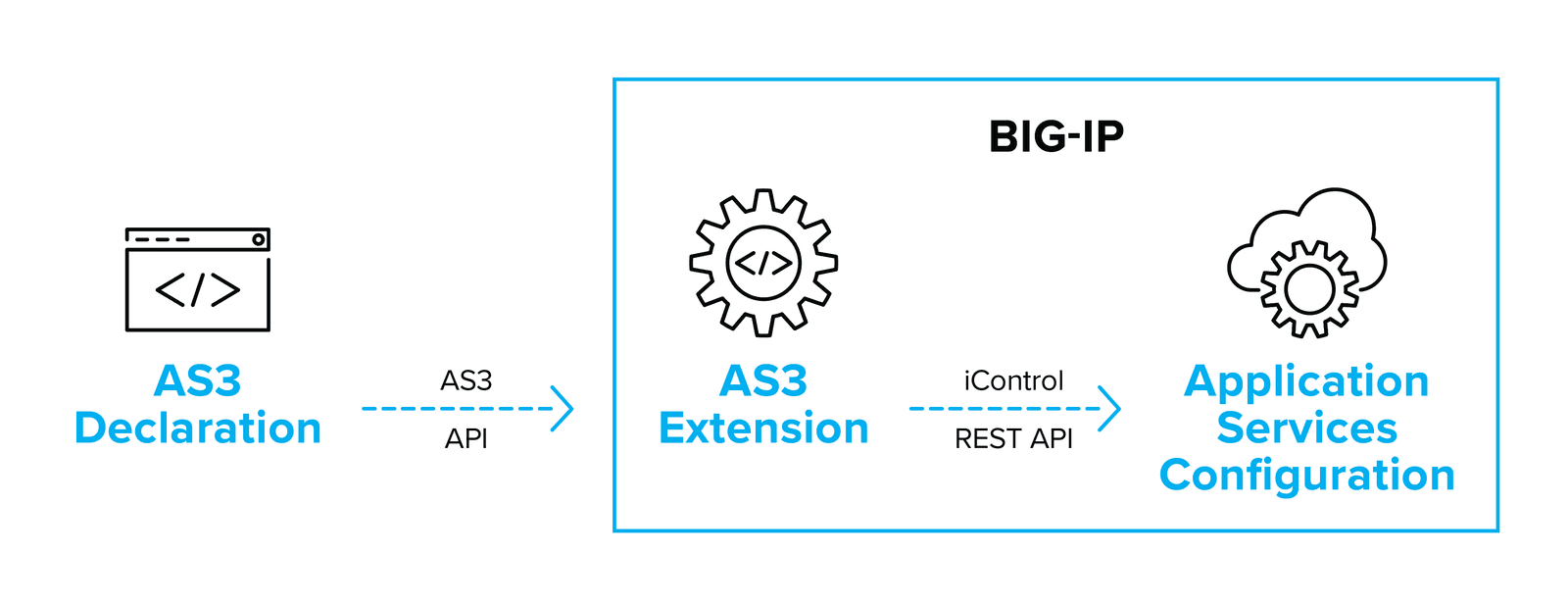

The F5 Application Services 3 Extension (AS3) provides a simple and consistent way to automate layer 4-7 application services deployment on the BIG-IP platform via a declarative REST API. AS3 uses a well-defined object model represented as a JSON document. The declarative interface makes managing F5 application service deployments easy and reliable.

Diagram: The AS3 Architecture

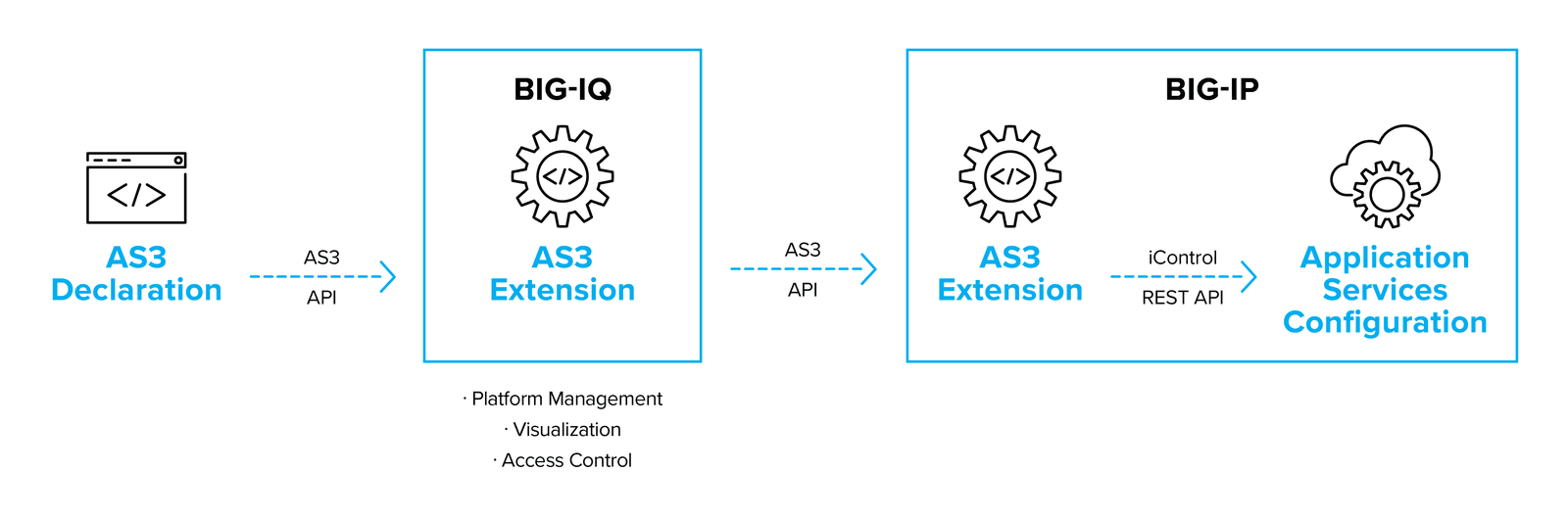

The AS3 extension ingests and analyzes the declarations and makes the appropriate iControl API calls to create the desired end state on the target BIG-IP. The extension can run either on the BIG-IP instance or via AS3 Container – a separate container/VM that runs the AS3 Extension, and then makes external API calls to the BIG-IP.
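To give a sense of what such a declaration looks like, the sketch below builds a minimal one as a Python dictionary and serializes it to JSON. The class names (`AS3`, `ADC`, `Tenant`, `Application`, `Service_HTTP`, `Pool`) follow the publicly documented AS3 schema as recalled here and should be validated against the schema for your installed version; the tenant name, application name, and addresses are placeholders, and `as3_http_declaration` is a hypothetical helper.

```python
import json


def as3_http_declaration(tenant, app, virtual_address, members):
    """Build a minimal AS3-style declaration for one HTTP service."""
    return {
        "class": "AS3",
        "action": "deploy",
        "declaration": {
            "class": "ADC",
            "schemaVersion": "3.0.0",
            tenant: {
                "class": "Tenant",
                app: {
                    "class": "Application",
                    "template": "http",
                    "serviceMain": {
                        "class": "Service_HTTP",
                        "virtualAddresses": [virtual_address],
                        "pool": "web_pool",
                    },
                    "web_pool": {
                        "class": "Pool",
                        "monitors": ["http"],
                        "members": [
                            {"servicePort": 80, "serverAddresses": list(members)}
                        ],
                    },
                },
            },
        },
    }


# Placeholder tenant, application, and addresses for illustration only.
declaration = as3_http_declaration(
    "Sample_Tenant", "Shop", "203.0.113.10", ["192.0.2.10", "192.0.2.11"]
)
payload = json.dumps(declaration, indent=2)  # JSON body to submit to AS3
```

The declaration states the desired end state only; it contains no ordering of steps, which is left to the extension.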

AS3 calls can also be made via BIG-IQ, which provides licensing, management, visualization, and access control for BIG-IP platforms, and powers the F5 Cloud Edition, which brings on-demand, per app instances of BIG-IP combined with powerful analytics.

The Declarative Onboarding extension provides a simple interface to take an F5 BIG-IP platform from just after initial boot to ready to deploy security and traffic management for applications. This includes system settings such as licensing and provisioning, network settings such as VLANs and Self IPs, and clustering settings if you are using more than one BIG-IP system.

Once the onboarding process has completed, application services can be deployed using whatever automation (or manual) process you select.

Declarative Onboarding uses a JSON schema consistent with the AS3 schema and has a similar architecture. Declarative Onboarding is supplied as a TMOS-independent RPM that is installed on a newly booted BIG-IP as the first step in the onboarding phase.
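A minimal onboarding declaration might look like the sketch below, which covers the licensing, provisioning, VLAN, and self IP settings mentioned above. The class names (`Device`, `Tenant`, `License`, `Provision`, `VLAN`, `SelfIp`) follow the published Declarative Onboarding schema as recalled here and should be checked against the version you install; the host name, registration key, interface, and address are placeholders, and `onboarding_declaration` is a hypothetical helper.

```python
import json


def onboarding_declaration(hostname, reg_key, vlan_name, self_ip):
    """Build a minimal Declarative Onboarding-style declaration."""
    return {
        "schemaVersion": "1.0.0",
        "class": "Device",
        "Common": {
            "class": "Tenant",
            "hostname": hostname,
            "myLicense": {
                "class": "License",
                "licenseType": "regKey",
                "regKey": reg_key,
            },
            "myProvisioning": {"class": "Provision", "ltm": "nominal"},
            vlan_name: {
                "class": "VLAN",
                "interfaces": [{"name": "1.1", "tagged": False}],
            },
            f"{vlan_name}-self": {
                "class": "SelfIp",
                "address": self_ip,
                "vlan": vlan_name,
            },
        },
    }


# Placeholder values for illustration only.
onboarding = onboarding_declaration(
    "bigip.example.com", "EXAMPLE-REG-KEY", "external", "10.10.10.10/24"
)
onboarding_json = json.dumps(onboarding, indent=2)
```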

The BIG-IP is a powerful generator of application, security, and network telemetry. The Telemetry Streaming extension provides a declarative interface to configure streaming of statistics and events to third party consumers such as:

As with the other members of the Automation Toolchain family, configuration is managed through a declarative interface using a simple, consistent JSON schema.
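As an illustration of that consistency, a Telemetry Streaming declaration has the same shape as the other toolchain declarations: named objects, each tagged with a `class`. The sketch below assumes the `Telemetry`, `Telemetry_System`, and `Telemetry_Consumer` classes and a `Generic_HTTP` consumer type as recalled from the published schema; the consumer host and path are placeholders, and `telemetry_declaration` is a hypothetical helper.

```python
import json


def telemetry_declaration(consumer_host, interval_seconds=60):
    """Build a minimal Telemetry Streaming-style declaration."""
    return {
        "class": "Telemetry",
        "My_System": {
            "class": "Telemetry_System",
            "systemPoller": {"interval": interval_seconds},  # seconds between polls
        },
        "My_Consumer": {
            "class": "Telemetry_Consumer",
            "type": "Generic_HTTP",  # assumed consumer type; others exist
            "host": consumer_host,
            "protocol": "https",
            "port": 443,
            "path": "/ingest",       # placeholder path
            "method": "POST",
        },
    }


telemetry = telemetry_declaration("collector.example.com")
telemetry_json = json.dumps(telemetry, indent=2)
```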

The API Services Gateway is a Docker container image that allows custom iControl LX extensions to run on a TMOS-independent platform. This can help you scale and manage your F5 deployments by abstracting management operations away from the individual BIG-IP platforms.

Cloud templates use the deployment automation functions of public and private clouds to provision and boot BIG-IP virtual appliances.

F5 currently has supported templates for the following clouds:

F5 is actively developing the cloud templates to cover a wider range of deployment scenarios – please submit issues or pull requests via the relevant GitHub repository.

Application services are just one layer in the stack of technology that creates a functioning application. Integrating, building and deploying all the components to take an application from code commit to operational deployment and monitoring involves a large number of dependent steps, which need to be executed in the correct order. Automating this task is the job of orchestration tools which create workflow pipelines of coordinated tasks and tools.

Most pipelines begin with a source code repository which holds the code for the application, the testing, and the infrastructure configuration.

With tools like AS3, cloud templates, and Declarative Onboarding, you can store all the configuration information needed to build and configure application services as part of a deployment pipeline.

In architectures using multi-tenant long-lived BIG-IP hardware or software platforms, you will only need the AS3 configuration managed as part of an application’s repository of code. In contrast, in scenarios where you want to start dedicated instances on-demand as part of the deployment process, managing templates and Declarative Onboarding declarations should be part of your application repository.
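The two cases can be expressed as a small planning step in a pipeline: the deployment model determines which stages run and therefore which files the application repository must contain. The sketch below is illustrative only; the stage names, the `plan_pipeline` function, and the file paths are hypothetical and not tied to any particular orchestration tool.

```python
# Stages each deployment model needs, in execution order (hypothetical names).
STAGES = {
    "shared": ["configure_services"],
    "per_app": ["deploy_template", "onboard", "configure_services"],
}

# The artifact each stage consumes from the application repository.
ARTIFACTS = {
    "deploy_template": "cloud template",
    "onboard": "Declarative Onboarding declaration",
    "configure_services": "AS3 declaration",
}


def plan_pipeline(model, repository):
    """Return ordered (stage, file) pairs, failing early if a file is missing."""
    plan = []
    for stage in STAGES[model]:
        artifact = ARTIFACTS[stage]
        if artifact not in repository:
            raise FileNotFoundError(f"{stage} needs a {artifact} in the repository")
        plan.append((stage, repository[artifact]))
    return plan


# Placeholder repository layout for illustration only.
repo = {
    "cloud template": "infra/bigip-template.json",
    "Declarative Onboarding declaration": "infra/onboarding.json",
    "AS3 declaration": "infra/services.json",
}
shared_plan = plan_pipeline("shared", repo)
per_app_plan = plan_pipeline("per_app", repo)
```

Checking for the required files before any stage runs keeps a missing declaration from surfacing halfway through a deployment.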

While we can’t cover every conceivable automation or orchestration tool, below is a list of the most common tools, use cases, and features used by F5 customers.

---------------

Language Integrations

| Language | Status | Examples and Source |
| --- | --- | --- |
| Python | F5 Contributed | https://github.com/F5Networks/f5-common-python |
| Go | User Contributed | https://github.com/f5devcentral/go-bigip |
| PowerShell | F5 Supported | https://devcentral.f5.com/wiki/icontrol.powershell.ashx |

Configuration Management and Infrastructure Automation Tools

| Tool | Status | Examples and Source |
| --- | --- | --- |
| Ansible | F5 Contributed | https://github.com/F5Networks/f5-ansible |
| Terraform | F5 Contributed | https://github.com/f5devcentral/terraform-provider-bigip |
| Puppet | F5 Contributed | https://github.com/f5devcentral/f5-puppet |
| Chef | User Contributed | https://github.com/target/f5-bigip-cookbook |
| SaltStack | Third-Party | https://docs.saltstack.com/en/latest/ref/runners/all/salt.runners.f5.html |

Infrastructure Template Systems

| Platform | Status | Examples and Source |
| --- | --- | --- |
| AWS | F5 Supported | https://github.com/F5Networks/f5-aws-cloudformation |
| Azure | F5 Supported | https://github.com/F5Networks/f5-azure-arm-templates |
| Google Cloud | F5 Supported | https://github.com/F5Networks/f5-google-gdm-templates |
| OpenStack | F5 Supported | https://github.com/F5Networks/f5-openstack-hot |

------------------

Startup Agents & Cloud Scripts

Cloud-init

Cloud libs

https://github.com/F5Networks/f5-cloud-libs

--------------------

Platform Integrations

Container Management Platforms

| Platform | Status | Examples and Source |
| --- | --- | --- |
| Kubernetes | F5 Supported | https://github.com/F5Networks/k8s-bigip-ctlr |
| Pivotal Cloud Foundry | F5 Supported | https://github.com/F5Networks/cf-bigip-ctlr |
| Marathon | F5 Supported | https://github.com/F5Networks/marathon-bigip-ctlr |
| Red Hat OpenShift | F5 Supported | https://hub.docker.com/r/f5networks/k8s-bigip-ctlr or https://access.redhat.com/containers/?tab=tags#/registry.connect.redhat.com/f5networks/k8s-bigip-ctlr |

Private Cloud Platforms

| Platform | Status | Examples and Source |
| --- | --- | --- |
| OpenStack (LBaaS) | F5 Supported | https://github.com/F5Networks/f5-openstack-lbaasv2-driver |
| OpenStack (Heat) | F5 Supported | https://github.com/F5Networks/f5-openstack-hot |
| VMware (vRO) | Third-Party | https://bluemedora.com/products/f5/big-ip-for-vrealize-operations/ |

-----------------------

The tools and integrations above represent automated ways to deploy and configure the BIG-IP platform to provide application availability, security, and scaling services. These services, essential as they are, make up only one part of a full-stack application deployment. Creating a full application stack with the servers, data, compiled application code, and infrastructure in a coordinated and tested manner requires more than a simple automation tool.

You’ll need a higher-level orchestration tool – with associated workflows and integrations with a number of automation systems. These tools are most commonly used in Continuous Integration/Continuous Delivery (CI/CD) working practices, for which automation is, for all practical implementations, required. Although a number of orchestration tools exist, Jenkins is perhaps the most common, and there are example workflows available that show how you can use Jenkins, F5, and Ansible to incorporate F5 infrastructure-as-code capabilities in a CI/CD workflow. In general, however, the orchestration tool will work through one of the configuration automation tools to actually make changes and deploy services.

BIG-IP platforms require licensing to function, and so it’s helpful to include licensing on the critical path of automation. In highly dynamic environments where BIG-IP virtual devices may need to be quickly scaled up or down, or created for test and development purposes, licensing models should be considered carefully.

In the public cloud, one path is to use utility billing versions of the BIG-IP (available through cloud marketplaces). Utility billing instances will self-license, and costs will be charged via the cloud provider on a pay-as-you-use or time-commitment basis.

Another option is to use pools of reusable licenses purchased through subscription (or perpetually) alongside the F5 BIG-IQ License Manager, which will allow you to assign and revoke licenses from a pool.
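The assign-and-revoke behavior of a reusable license pool can be pictured with a toy model. The `LicensePool` class below is a hypothetical illustration of the bookkeeping involved; it is not the BIG-IQ License Manager API and makes no claims about how that product is implemented.

```python
class LicensePool:
    """Toy model of a reusable license pool (hypothetical, not the BIG-IQ API)."""

    def __init__(self, size):
        self.size = size
        self.assigned = set()  # devices currently holding a license

    def assign(self, device):
        if device in self.assigned:
            return  # assigning twice is harmless
        if len(self.assigned) >= self.size:
            raise RuntimeError("license pool exhausted")
        self.assigned.add(device)

    def revoke(self, device):
        self.assigned.discard(device)  # frees the license for reuse

    @property
    def available(self):
        return self.size - len(self.assigned)


licenses = LicensePool(size=2)
licenses.assign("test-1")
licenses.assign("test-2")
licenses.revoke("test-1")  # an ephemeral test instance is destroyed
licenses.assign("qa-1")    # its license is reused by a new instance
```

The point of the model is that automation must revoke a license when it destroys an instance; otherwise short-lived test and QA instances will exhaust the pool.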

You can automate the licensing steps through startup agents and API calls, which will require outbound Internet access to the F5 license server (even in the case of utility licenses in cloud platforms).

Depending on your organization, choosing the right automation and orchestration tools could be very easy or a tough task. It’s easy if you have already adopted a tool or methodology for other components and just need to integrate BIG-IP into the system. Even without integration into a particular tool, the rich iControl REST API combined with iControl LX capabilities and cloud-init make integrating BIG-IP into an existing automation tool relatively straightforward (especially if combined with iApp templates, which can be used to create even a complex configuration with a single API call).

If you are starting from scratch, however, things can be more complex. Just like selecting any other solution, understanding your requirements should come first. While this paper cannot build your requirements list for you, here is a set of questions and recommendations to help you make your assessment:

Automation model: A declarative model will be far simpler for your orchestration consumers to interact with. Consumers just need to know what they want, rather than all the procedural steps to get there. The F5 Automation Toolchain family will represent the ‘model’ deployment for greenfield sites moving forward.

Potential platforms and environments: It is inevitable that containers and a range of cloud platforms will be a key part of application infrastructure — plan accordingly.

Skills: Do you already have skills in some of the underlying technologies? Keep in mind that these skills may exist outside your department, but within your business as a whole. If so, it might make sense to pick a tool that uses a language your organization has already adopted.

Supportability: Only build systems that you can support. This may seem obvious, but a key to success is picking the level of complexity you can deliver within your organization—so that you maximize the benefits of automation without causing excess operational overhead.

Increasing automation of IT systems is inevitable. Taking a strategic approach to key application delivery and security services will ensure that the applications your organization deploys are kept secure and available. Automation can also help reduce your operational overhead, especially when working in multiple platforms and public clouds.

Choosing the right automation system can be challenging, and ideally should be done as a collaborative and holistic effort with a view to the skill sets available to you, as well as your ability to support the system you build. Whatever solution you choose, you can be confident that the BIG-IP platform and F5 expertise will be available to help you deliver the enterprise-grade services your applications rely on, no matter where they are deployed.

A note on F5 support. F5 provides support for several, but not all, templates and tools. Where software is available on GitHub, the supported code is found only in the “supported” folders in the F5 repositories. Other software will have its support policies defined in the relevant readme files.

PUBLISHED MAY 13, 2019