Cloud computing environments are now a dominant feature of the IT landscape with organizations moving legacy on-premises applications to the cloud with ever-increasing regularity. But migrating an application to the cloud requires more than merely moving the application to a virtual machine at a cloud provider. Different organizations have disparate motivations for cloud migration, but there are key factors every organization should consider, and there are certainly best practices to help ensure a successful cloud migration effort. To gain the most benefits from cloud migration, plan ahead—both in the application architecture and the transition from on premises to cloud.

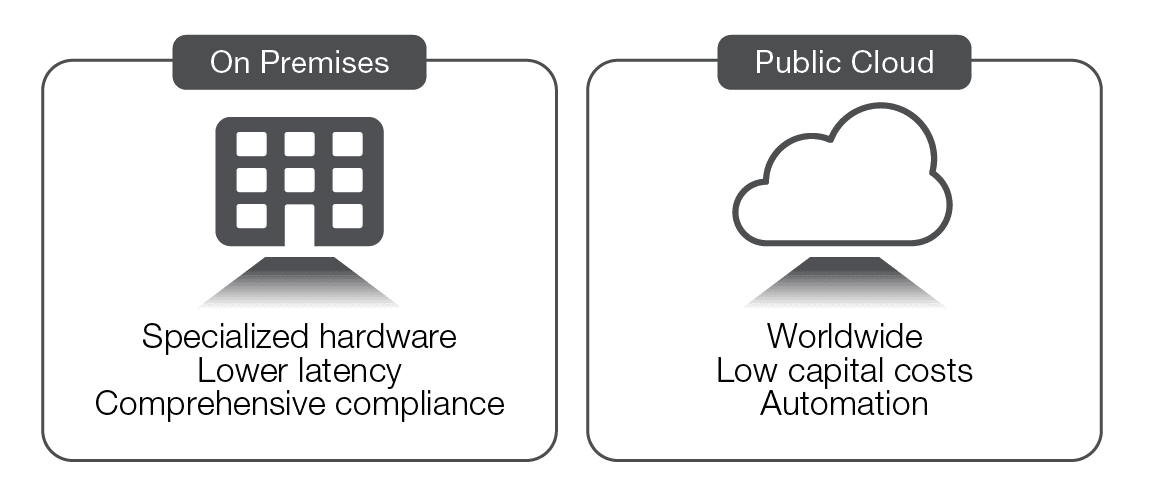

A cloud environment behaves differently from an on-premises environment. Some of the distinctions between the two environments are obvious, such as the fact that compute resources are owned and managed by another firm, instead of being owned and managed internally. Several of the most important differences, however, are not a function of the technology. In general, any piece of technology available from a cloud provider is also available on premises. What the cloud offers is an alternative consumption model, not an alternative technology. Put another way, the advantages of moving to a cloud provider are found in how the compute resources are consumed, not in the compute resources themselves.

Cloud providers necessarily cannot duplicate all on-premises capabilities, such as data-audit compliance policies designed for on-premises hardware. Because cloud components are geographically dispersed, latency is generally worse in cloud deployments. Cloud providers face other limitations as well. Because providers adhere to standards across clients, real limits exist for customization of computing environments. For example, few cloud providers will provide rare and exotic computing equipment.

But if a company can operate within those limits, a cloud provider can deliver consumption capabilities beyond the reach of most firms. A cloud provider may operate data centers across the globe, offering organizations a choice of which data center to locate compute resources in. A cloud provider can also absorb fixed costs, enabling a company to pay only for those resources actually used. Since a cloud provider has economies of scale unmatched by most companies, it can invest heavily in systems to manage and automate deployments. Note that none of these benefits are technical in nature; rather, the advantages of a cloud provider are in the choices and cost structure available to the consumer.

In order to mitigate the limits and leverage the advantages of a cloud provider, an IT department must change the way it deploys applications, altering workflows to make best use of the relative strengths of a cloud provider, while avoiding dependencies on attributes where a cloud environment is at a disadvantage, such as latency.

Cost savings is often mentioned as a primary driver to move to the cloud, but the true reasons tend to be more nuanced. Most motivations fall into three categories: certainty, productivity, and agility.

Public cloud providers increase certainty by packaging up technology to make it more consumable, and in doing so absorb some of the IT operational risk. Since the providers maintain and take responsibility for the infrastructure, an organization can ignore those concerns. Similarly, cloud providers generally make tools available to gain insight into IT operations, increasing visibility. Since public cloud costs are generally a function of consumption, an organization can more closely tie the benefits of an application (such as revenue) to the operational costs of that application. As the application is more used, the costs increase, but so do the benefits. By moving expenses from capital to operational, there is less need to provide room for future growth in purchasing decisions. Managing these uncertainties at the cloud provider level delivers measurable benefits.

Another reason to adopt the public cloud is increased productivity. Collaboration and automation tools from providers are some of the most advanced available in the marketplace. Moreover, not managing infrastructure frees IT staff to focus on business-strategic efforts, while streamlined operations that can be managed from anywhere raise IT staff productivity.

The final reason to adopt public cloud is perhaps the most compelling: agility. Because the cloud provider creates the illusion of infinite resources available at any time, the speed and direction of business efforts are no longer tied to IT provisioning constraints. Resources can be deployed in seconds or minutes. A project can consume a complex computing environment for a short period of time without concern for decommissioning the hardware when the project sunsets. Applications can be deployed, scaled, and removed quickly without concern for hardware assets.

While there are many benefits to a cloud migration, there are several challenges associated with a migration effort. The barriers and risks vary depending on where they occur in the migration process: before the migration, during the migration, and post-migration. Addressing these barriers and risks upfront increases the likelihood of a successful migration effort.

Planning before the migration is perhaps the most critical part of the migration process. Migration efforts may fail because the application was not re-architected to take advantage of cloud capabilities. This is also a good time to take stock of, and begin to manage, the application’s complexity. IT training should take place at the beginning of the effort as well. Cloud deployments tend toward siloed execution and tool proliferation, and planning in the early phases can help smooth the migration process.

Migrating applications to the cloud tends to take longer and be more complex than initially planned. To help minimize problems, start with a small and relatively independent application so that problems encountered will have minimal impact. Lessons learned from the first migration of a small application can be applied to future migrations.

Long-term issues with migrations become apparent after the migration is complete. Security and compliance concerns can arise, often due to the security perimeter being more difficult to define in a cloud setting. Support for legacy systems might be more difficult when old and new systems are not only in different environments, but developed with different cultures. Some organizations have experienced concern with cloud lock-in, especially with the rise of containers. Finally, budget concerns can be an issue, since variable cloud costs can be difficult to project each quarter.

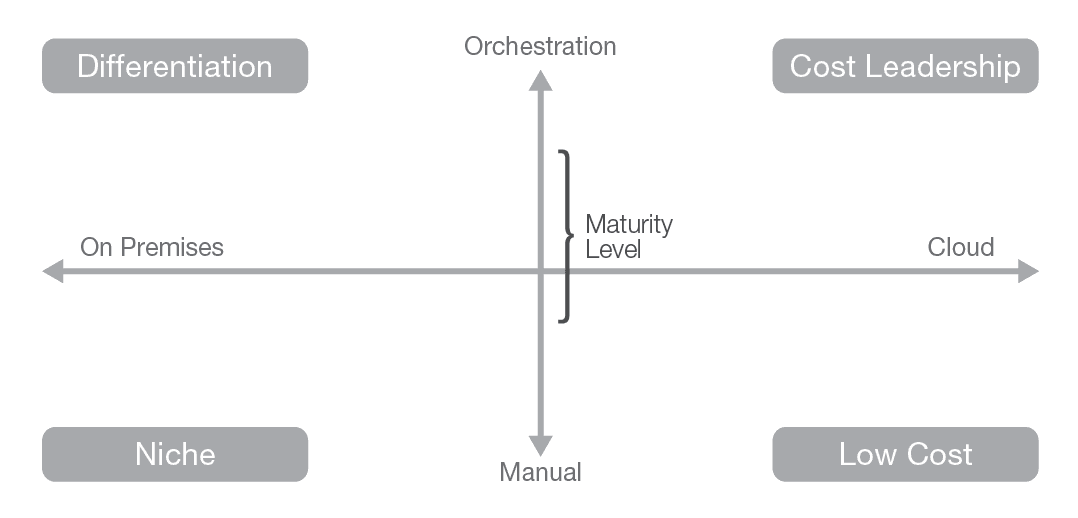

One technique for evaluating applications is the maturity vs. location matrix. Examine your applications to determine how much maturity you need. Applications that provide competitive differentiation or are niche might best be served on premises. At the other end of the spectrum, low-cost or cost-leadership applications (particularly in the cost of change) often fit well in a cloud environment. Scalability is another factor to consider. Suppose that a new application has been deployed and might eventually scale to 100 times the current load. To stay ahead of the growth in an on-premises deployment, the infrastructure would need to be significantly over-provisioned, introducing extra capital costs for idle resources. However, if this same application were deployed in a cloud environment, costs would scale with growth and there would be no need for over-provisioning.
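The over-provisioning argument can be made concrete with a little arithmetic. The sketch below is illustrative only: the growth curve, the price, and the function names are all hypothetical, and a real comparison would depend on actual pricing and hardware lifetimes.

```python
from typing import Sequence


def idle_unit_periods(load: Sequence[int], provisioned: int) -> int:
    """Sum of capacity units that sit unused across all periods."""
    return sum(provisioned - used for used in load)


def consumption_cost(load: Sequence[int], price_per_unit_period: float) -> float:
    """Pay-per-use cost: each period is billed only for the units consumed."""
    return sum(used * price_per_unit_period for used in load)


# Hypothetical load growing from 1 unit to 100 units over eight periods.
load = [1, 2, 4, 8, 16, 32, 64, 100]

# On premises, capacity for the eventual peak has to be in place from day one.
provisioned = max(load)

print(idle_unit_periods(load, provisioned))  # unused unit-periods on premises
print(consumption_cost(load, 2.0))           # cloud bill at a made-up price
```

With these made-up numbers, most of the on-premises capacity is idle for most of the application's life, while the consumption-based bill tracks the load curve.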

Network issues can also play a role in the decision of which applications to migrate to a cloud environment. If an application has a large proportion of distributed users, such as an expansive dealer network, or a sizable number of remote email users, then an on-premises deployment funnels all of that traffic to the data center. Because the traffic is already traversing the Internet, the applications are not sensitive to latency. When applications such as these are placed in a cloud environment, the network latency does not change, but the move frees up bandwidth at on-premises data centers that would otherwise be consumed by the distributed users.

At the same time, there are reasons to keep some apps on premises. Applications with shorter life expectancies might not be worth the risks and costs of a migration effort. Some apps depend on low network latency and storage speeds, and could perform much worse in a cloud environment. Compliance and security concerns often arise as reasons to keep apps on premises. Some audit procedures assume physical access to the systems and data in order to verify the data, while others require that the application and operating system perform on bare metal (i.e., they cannot be run on a virtual machine). Over time, compliance procedures will accommodate the cloud environment, but in many cases that has not happened yet. Instead of assuming the risk of failing compliance audits with applications in the cloud, it might make more sense to keep those sensitive apps on premises for now. Some apps need to support legacy systems and processes, and therefore cannot feasibly be re-architected to operate in a cloud. These apps could also be candidates to leave on premises. For these reasons, some apps should have a lower migration priority than apps that have a better fit for a cloud environment.

Applications better left on premises

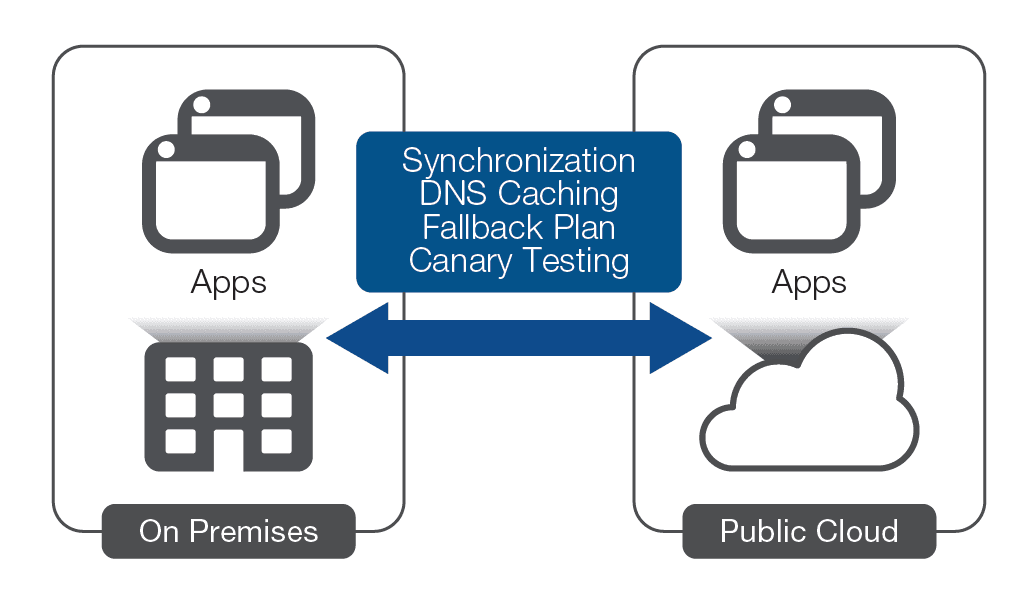

Many organizations must surmount challenges during the migration process. A major question is how to perform the actual cutover. One approach is to use DNS to resolve to the on-premises application until the cloud application has been thoroughly tested, and then change the DNS records to resolve to the cloud application. Each DNS record carries a time-to-live (TTL) value that allows clients to cache the DNS response for a period of time. Before the transition, it might make sense to set a low TTL, perhaps just a few seconds, so that after the cutover clients quickly resolve to the new application. However, this approach does have some risks. Lowering the TTL can significantly increase DNS traffic as well as the burden on the servers. A resolver operated by another organization could ignore the TTL and cache for longer periods than specified, leaving some users resolving to the on-premises application long after the cutover has taken place. Consider testing the robustness of your DNS infrastructure before using this approach.
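The TTL trade-off described above can be estimated before the cutover. The following is a rough back-of-the-envelope sketch, not a DNS implementation: the function names and the resolver counts are hypothetical, and it assumes every caching resolver is under continuous demand and so re-queries once per TTL.

```python
def authoritative_queries_per_second(active_resolvers: int, ttl_seconds: int) -> float:
    """Rough upper bound: each caching resolver re-queries once per TTL."""
    return active_resolvers / ttl_seconds


def worst_case_stale_seconds(ttl_seconds: int, resolver_min_cache_seconds: int = 0) -> int:
    """Longest time a resolver may keep returning the old address after cutover.

    A resolver that enforces its own minimum cache time effectively ignores
    any TTL shorter than that minimum.
    """
    return max(ttl_seconds, resolver_min_cache_seconds)


# Hypothetical population of 50,000 caching resolvers.
for ttl in (3600, 300, 5):
    rate = authoritative_queries_per_second(50_000, ttl)
    print(f"TTL {ttl:>4}s -> about {rate:.0f} queries/s at the authoritative servers")

# A resolver that clamps TTLs to a 300-second minimum defeats a 5-second TTL.
print(worst_case_stale_seconds(5, resolver_min_cache_seconds=300))
```

The first function shows why a very low TTL raises load on the DNS servers; the second shows why a low TTL alone does not guarantee that every user moves to the cloud application promptly.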

The process of transition can also expose problems with the new application that were not found in testing. A way to hedge against a problematic application is a fallback plan that enables processing to revert to the on-premises application in case of issues. Unfortunately, a bidirectional transition complicates data synchronization and so requires far more upfront planning and testing. A more sophisticated approach uses a partial transition called canary testing, which transitions a portion of users to the cloud application while it is monitored. As confidence in the application grows, more, and eventually all, users can be transitioned. Inversely, should a problem surface, the transitioned users can go back to using the on-premises application. Canary testing can be performed by a programmable Application Delivery Controller (ADC) through F5® iRules®, thus minimizing risks while increasing the number of options open to the operations team during the transition period.
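The routing decision behind canary testing can be sketched in a few lines. This is a generic illustration in Python, not an iRule and not any F5 API: the function names, the backend labels, and the choice of a hash-based bucket are assumptions made for the example.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def choose_backend(user_id: str, canary_percent: int) -> str:
    """Send users whose bucket falls below canary_percent to the cloud."""
    return "cloud" if canary_bucket(user_id) < canary_percent else "on_prem"


# Raise canary_percent as confidence grows; set it to 0 to send everyone back.
for percent in (0, 10, 50, 100):
    routed = sum(choose_backend(f"user-{n}", percent) == "cloud" for n in range(1000))
    print(f"{percent:>3}% canary -> {routed} of 1000 sample users on cloud")
```

Hashing the user ID keeps each user on the same side between requests, and a user who is moved to the cloud at a low percentage stays there as the percentage rises. Reverting is a matter of lowering the percentage, although data written in the cloud still has to be synchronized back, as noted above.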

One of the biggest mistakes organizations make when migrating applications is failing to rearchitect them for the cloud. Allocating the time to change the structure of the application so that it takes advantage of the differences in a cloud environment can yield significant benefits.

For example, scaling in a cloud environment is trivial compared to scaling on premises. Instead of designing and testing the application for worst-case load, design it to be slim but scalable. There is no need to maintain overhead for a large footprint when application use will cross a wide spectrum. Instead, build small and be ready to scale. Similarly, certain failures, such as power failure, are less likely in a cloud environment, so there is less need to architect for those.
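"Build small and be ready to scale" amounts to sizing the deployment from current load rather than from the worst case. A minimal sketch of such a sizing rule follows; the function name, the per-instance capacity, and the instance limits are hypothetical, and real autoscaling services apply their own policies.

```python
import math


def desired_instances(current_load: float, capacity_per_instance: float,
                      min_instances: int = 1, max_instances: int = 100) -> int:
    """Return the instance count needed for the current load, within limits."""
    needed = math.ceil(current_load / capacity_per_instance)
    return max(min_instances, min(max_instances, needed))


# Hypothetical instance that handles 100 requests per second.
for load in (0, 250, 25_000):
    print(load, "req/s ->", desired_instances(load, 100), "instances")
```

The lower bound keeps a small footprint running when the application is quiet, and the upper bound caps spending if load spikes unexpectedly.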

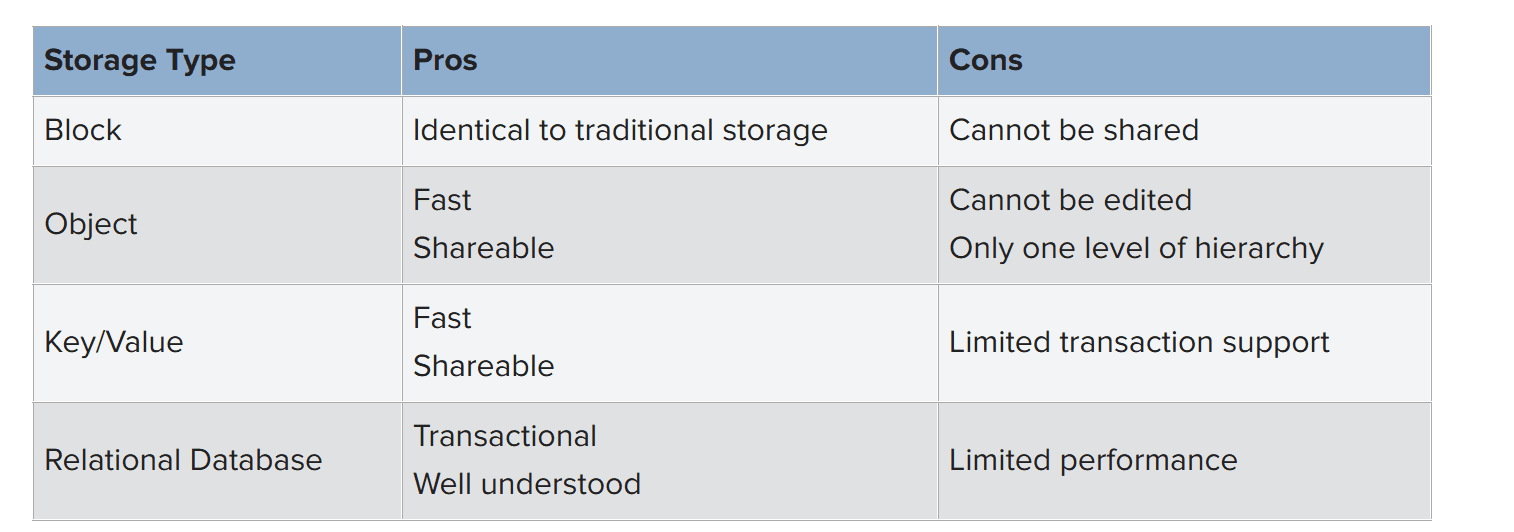

On the other hand, cloud environments introduce new limitations not regularly experienced on premises. Cloud architects note that a design should plan for failure of any VM instance at any time as part of regular processing. Storage is also different in a cloud setting. On-premises designs regularly use local block storage. That same option is available in cloud environments, but it cannot be shared. The combination of local unshared state and instance failure could be a recipe for disaster. Other storage paradigms exist, such as object-based storage or key-value storage services. Hedging against the limitations of a cloud environment can be done through rearchitecting the application.

Some applications or control systems make sense to keep on premises. Many organizations will retain Active Directory on premises while employing federation to extend identity into a cloud environment. Similarly, an ADC provides many security services to support application delivery, such as firewalls, web application firewalls, DDoS protection, and SSL/TLS intercept along with service chaining. An ADC also provides advanced traffic management and a programmable proxy, as well as identity and access management.

Organizations have typically consolidated these application services externally to the application because the services are not part of the business logic, but instead are operational concerns. To enable operational changes independent of application release schedules, many enterprises concentrate domain expertise that can be applied to all applications using an ADC as a strategic point of control. However, cloud environments change this model.

An early approach to application delivery in cloud environments was to replicate ADC services using native tools in each cloud environment. Soon it became apparent that cloud providers did not offer the same level of native capabilities, which left organizations with a choice: either maintain heterogeneous application delivery standards between cloud and on-premises environments, or redesign all applications to the lowest common denominator.

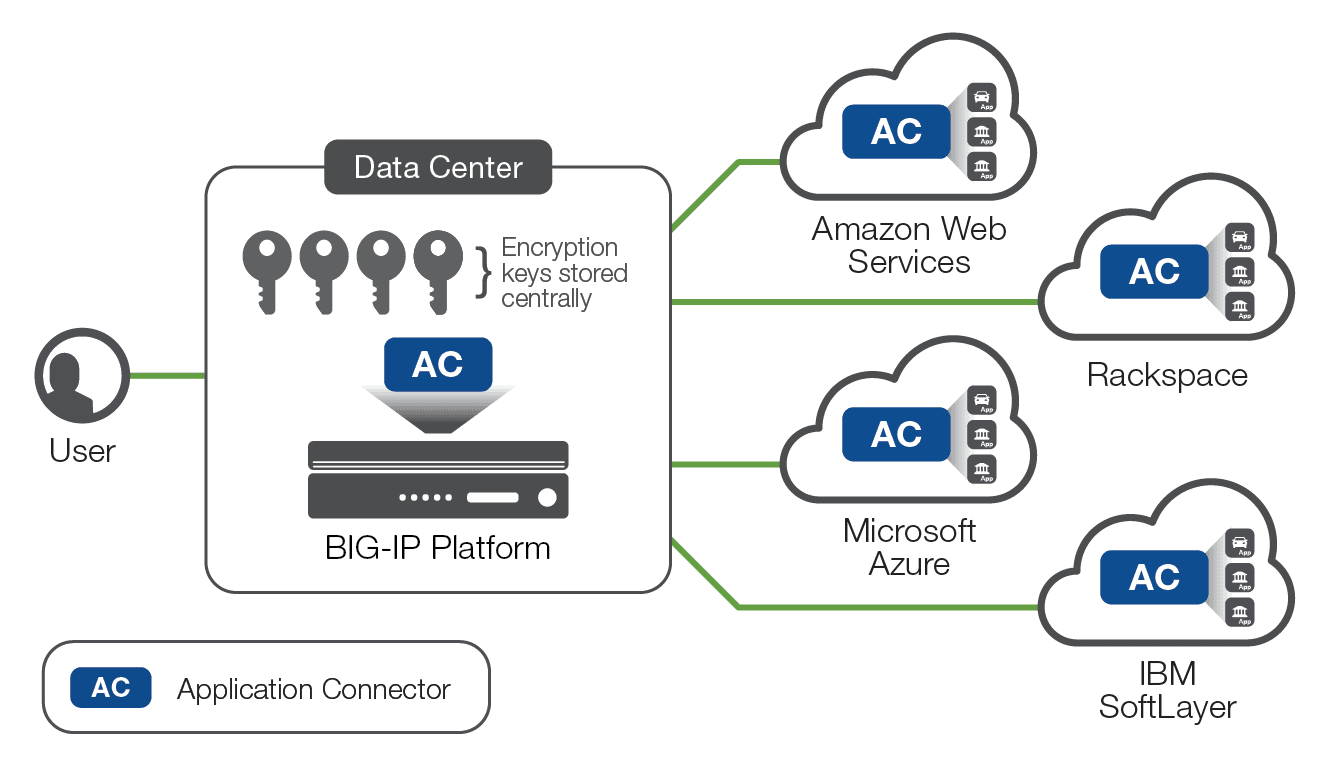

As enterprises expanded into multiple cloud environments, both options became less palatable. Suppose an organization leverages three cloud providers and also maintains an on-premises data center. The organization either had to maintain four sets of SSL/TLS expertise, four sets of DDoS expertise, and so on, or it had to standardize on an increasingly disjointed (and limited) set of basic services. Deploying just one new application in an additional cloud environment carried a significant marginal cost of either another set of domain experts, or a new set of standards to apply to all existing applications.

But there is a third choice for delivering consistent services across all environments. F5 Application Connector enables one set of domain experts to manage all applications, regardless of where those applications live. By using existing BIG-IP® hardware or software appliances, an organization can inject complete advanced application services in front of any application wherever it is located, giving all applications a strategic point of control, managed by a single set of domain experts. In addition, Application Connector boosts security by eliminating all visible public IP addressing, thus reducing attack surfaces. By keeping encryption keys stored centrally and not in the cloud instances, organizations can be confident that their critical cryptographic information remains private. Finally, Application Connector increases the efficiency of your cloud deployment by allowing new workload nodes to be discovered automatically by the proxy instance.

One of the largest differences between on-premises applications and cloud applications is storage for application state. Overwhelmingly, on-premises designs use local block storage, such as a hard disk or solid-state drive. A cloud application can use block storage as well, but the storage is local to the instance and designs should expect instances to fail. If organizations retool nothing else in application design when moving an app to the cloud, they should change how state is handled.

A common cloud storage type is object storage where each object is essentially a file but addressed using a name. Generally, the storage has at most one level of hierarchy (i.e., folder or directory), so mapping a traditional file system to objects can be problematic. Because objects cannot be edited—only created, read, and deleted—object storage is a good choice for static content, such as images, but not a good choice for state. Many applications assume that a file can be modified so these applications will not work with objects.
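The create, read, and delete model can be illustrated with a small in-memory stand-in. This is a simplified sketch of the model as described here, not any provider's API: the class, its methods, and the refusal to overwrite an existing name are assumptions made for the example.

```python
class ObjectStore:
    """In-memory stand-in with a create/read/delete model (no in-place edits)."""

    def __init__(self) -> None:
        self._objects = {}

    def create(self, name: str, data: bytes) -> None:
        if name in self._objects:
            raise KeyError(f"object already exists: {name}")
        self._objects[name] = data

    def read(self, name: str) -> bytes:
        return self._objects[name]

    def delete(self, name: str) -> None:
        del self._objects[name]


def append_bytes(store: ObjectStore, name: str, extra: bytes) -> None:
    """Emulate a file append by replacing the whole object."""
    current = store.read(name)
    store.delete(name)
    store.create(name, current + extra)


store = ObjectStore()
# The slash is part of a flat name, not a real directory.
store.create("logs/app.log", b"started\n")
append_bytes(store, "logs/app.log", b"ready\n")
print(store.read("logs/app.log"))
```

Even a one-line append rewrites the entire object, which is why applications that modify files in place fit this kind of storage poorly.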

Other storage choices include key-value stores and traditional transactional databases. These are not storage like a file system, but they do store data and are provided as an additional service. With an effective design, state can be stored in a key-value or database service.
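One way to picture state held in a key-value service is an application instance that keeps nothing locally. The sketch below is a minimal illustration under stated assumptions: `StateStore`, `InMemoryStore`, and `CartInstance` are hypothetical names, the in-memory class merely stands in for a managed service, and the read-modify-write in `add_item` is not atomic, which a real design would need to address.

```python
import json
from typing import Dict, List, Optional, Protocol


class StateStore(Protocol):
    """The minimal interface the application needs from a key-value service."""

    def get(self, key: str) -> Optional[str]: ...
    def put(self, key: str, value: str) -> None: ...


class InMemoryStore:
    """Stand-in for a managed key-value service; for local experiments only."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def put(self, key: str, value: str) -> None:
        self._data[key] = value


class CartInstance:
    """An application instance that holds no session state of its own."""

    def __init__(self, store: StateStore) -> None:
        self._store = store

    def items(self, session_id: str) -> List[str]:
        raw = self._store.get(session_id)
        return json.loads(raw) if raw else []

    def add_item(self, session_id: str, item: str) -> None:
        items = self.items(session_id)
        items.append(item)
        self._store.put(session_id, json.dumps(items))


store = InMemoryStore()
first = CartInstance(store)
first.add_item("session-1", "book")
del first  # simulate the instance failing

second = CartInstance(store)  # a replacement instance picks up the same state
second.add_item("session-1", "pen")
print(second.items("session-1"))
```

Because the state lives in the external store, losing an instance loses no session data, and any number of instances can serve the same session.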

Cloud computing environments offer benefits, but only if key applications are re-architected to take advantage of the cloud features while mitigating the impact of cloud limitations. Cloud environments have different failure scenarios and often have increased network latency, but allow for rapid scaling and choice of data centers across the globe. Considerations for cloud migration include determining which applications to migrate and which to keep on premises.

Careful thought and planning should also go into the transition process itself, along with a backout plan in case the cloud application is not behaving as expected. As part of the planning process, some organizations keep an ADC on premises as a strategic point of control for all of the applications regardless of where they are hosted. Finally, carefully plan how the cloud application will store data and state. However you choose to move your applications—and your business—to the cloud, consider bringing an experienced cloud partner with you.

PUBLISHED AUGUST 16, 2017