F5’s completion of its acquisition of Volterra marks the beginning of the next phase of edge computing, ushering in the Edge 2.0 era. We envision that in the Edge 2.0 era, digital businesses in every industry sector will adopt edge computing platforms to deliver applications and to process and analyze data. The edge platform will be an essential component of the user experience for all digital services.

In this article, I’ll explain the evolution of edge architecture and discuss F5’s technology vision for the Edge 2.0 paradigm.

Edge 1.0

Edge technologies have existed in an embryonic form for many years, but with a different focus. In the early days of the Internet, the edge focused on static content and was known as the Content Delivery Network (CDN). Tim Berners-Lee, the inventor of the World Wide Web, foresaw the congestion that Internet users would face as large amounts of web content passed over slow links: he called this issue the "World Wide Wait." Intrigued by this challenge, MIT professor Tom Leighton explored the problem with scholarly research. He and his student Danny Lewin then co-founded Akamai Technologies in 1998, which created the Content Delivery Network architecture paradigm.

The focus of the CDN paradigm was, appropriately, on distributing the relatively static web content or web applications to get it closer to users to address the need for speed and redundancy. That need led to a set of key architecture tenets including physical Point of Presence (PoP) close to end users, content caching, location prediction, congestion avoidance, distributed routing algorithms, and more. Although networks and devices have changed, these design principles still dominate the fundamental CDN architectures today.

Edge 1.5

In the meantime, the Internet "content" ecosystem has evolved. Applications have become the primary form of content on the Internet. As such, the distributed edge could not persist in its nascent form: it had to evolve along with the application architectures it delivered while under increasing pressure to secure a growing digital economy. With so much of the global economy now highly dependent on commerce-centric applications, security services quickly became an add-on staple of CDN providers, whose existing presence around the globe stretched closer to the user (and thus resolved threats earlier) than the cloud and the traditional data center. These services were built atop the infrastructure put in place to distribute content and therefore represent closed, proprietary environments. The services offered by one CDN vendor are neither compatible with nor portable to those of another.

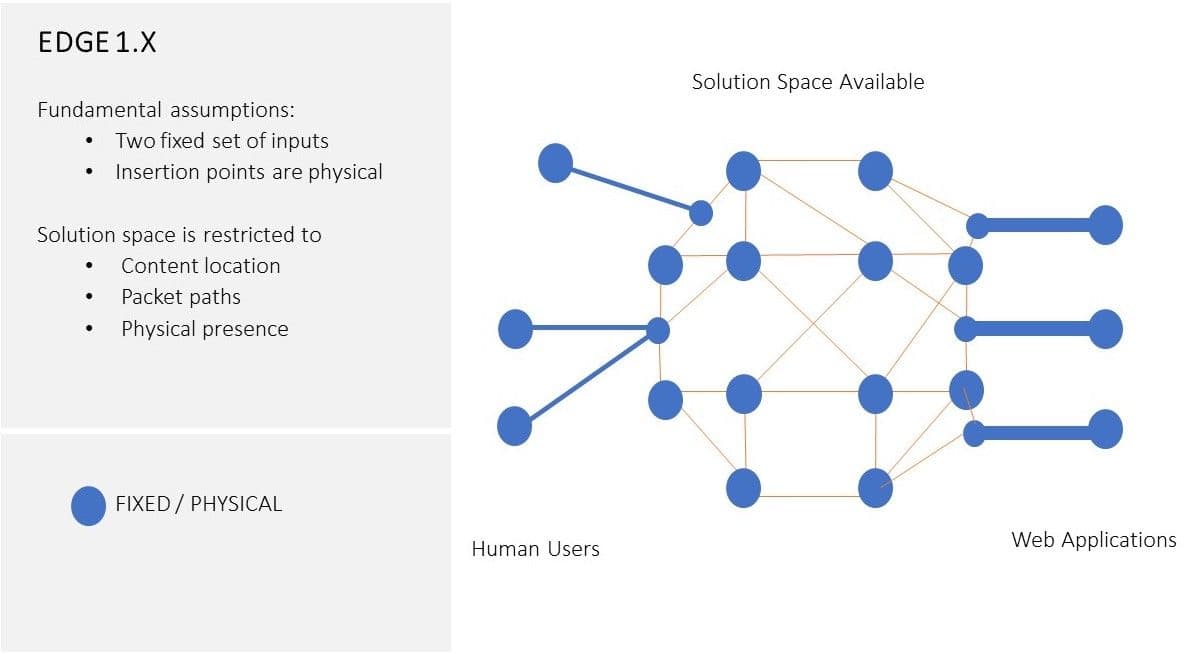

Furthermore, the original CDN architecture paradigm's fundamental design principles, aimed at solving Tim Berners-Lee’s original Internet "World Wide Wait" challenge, assumed that the two sets of endpoints (the users and the content they access) are relatively passive entities, and relegated the solution to the problem primarily to some middle tier: in this case, a CDN. The evolution of the Internet ecosystem, especially the shift to container-based microservices applications and intelligent end-user computing, has utterly broken that assumption. See Figure 1 for a conceptual view of the Edge 1.x architectural sphere. We will elaborate on both factors in more detail in the next section.

Catalysts of Edge Evolution

While companies still need to distribute static content, they are also looking to the Edge to play a more significant role in their application architecture. The latest research conducted by F5 shows that 76% of enterprises surveyed are planning to use Edge for various use cases, including improving performance, speeding data collection and analytics, supporting IoT, and engaging capabilities for real-time or near-real-time processing. This includes 25% of companies that foresee no role in their infrastructure for the services of a simple CDN function. These organizations are creating highly dynamic, globally distributed applications with secure and optimal user experiences. They look to Edge to provide the presence and flexibility of multi-location application services and the consistent building blocks they need to do it successfully.

Today, we see services offered by Edge providers saddled with CDN-centric architecture (the likes of Akamai, Fastly, and Cloudflare) as lacking the basic characteristics needed to provide these application-centric capabilities. For example, with Kubernetes-based distributed applications, the application logic, packed inside a container, can dynamically move to any appropriate compute location with a supporting Kubernetes stack. It can do this to optimize the user experience whether the compute location is an IaaS instance from a public cloud, a physical server the enterprise owns, or a virtual machine in the Edge provider’s PoP. As such, applications are no longer the "passive" routing destinations of the delivery network but are instead active participants in the Edge solution. This is in direct contrast to the architecture principles upon which these CDN providers’ Edge solutions were built. Rooted in a time when content (or applications) were static entities tied to physical locations, their edge solutions presumed that the content delivery network alone functions as the "intelligent platform" connecting users to applications, while the applications (and users) remain passive "endpoints" of that platform. This approach is no longer the best architectural way to connect users to content or applications.
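To make the idea of application logic moving to "any appropriate compute location" more concrete, here is a minimal Python sketch. It is illustrative only: the `ComputeLocation` type, the location names, and the latency figures are all hypothetical and do not represent any F5, Volterra, or Kubernetes API. It also assumes that "appropriate" simply means the lowest measured latency to a user region among locations that run a Kubernetes stack; a real platform would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class ComputeLocation:
    """A hypothetical place where a containerized workload could run."""
    name: str
    kind: str             # "public-cloud", "enterprise-server", or "edge-pop"
    has_kubernetes: bool  # only locations with a Kubernetes stack are eligible
    latency_ms: dict      # user region -> measured latency (made-up numbers)


def pick_location(locations, user_region):
    """Return the eligible location with the lowest latency to user_region."""
    candidates = [
        loc for loc in locations
        if loc.has_kubernetes and user_region in loc.latency_ms
    ]
    if not candidates:
        raise LookupError(f"no eligible location serves region {user_region!r}")
    return min(candidates, key=lambda loc: loc.latency_ms[user_region])


# Hypothetical inventory spanning the three kinds of location named in the text.
LOCATIONS = [
    ComputeLocation("cloud-us-east", "public-cloud", True, {"us": 40, "eu": 110}),
    ComputeLocation("factory-server", "enterprise-server", True, {"eu": 8}),
    ComputeLocation("pop-frankfurt", "edge-pop", True, {"eu": 15, "us": 95}),
    ComputeLocation("legacy-dc", "enterprise-server", False, {"eu": 5, "us": 5}),
]

if __name__ == "__main__":
    for region in ("eu", "us"):
        chosen = pick_location(LOCATIONS, region)
        print(f"{region}: run on {chosen.name} ({chosen.kind})")
```

In this sketch the kind of location (public cloud, enterprise server, or PoP) plays no role in the decision, which is the point of the paragraph above: the workload goes wherever it serves the user best.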

It’s not just applications. Users, as well, have evolved. Not only are their digital sophistication and appetite for digital engagement light years ahead of where they were when Akamai started in 1998, but technology has forced a change in the definition of what they are. Today, a "user" might well be a machine, a script, or an automated service acting on behalf of a human. It might be a sensor collecting critical data from a manufacturing plant or a farm field. On one hand, these "users" continue to carry their human counterparts' desires for speed, security, and privacy. On the other hand, these new "users" (intelligent IoT endpoints with embedded application stacks, for example) often participate in the dynamic processing of application logic and data analytics to deliver secure and optimal digital experiences. They have themselves become hosts to certain application functions. For example, with WebAssembly running on an intelligent end-user device, it has become possible for the endpoint to participate more fully in application security functions (e.g., application firewall) or application data analytics.

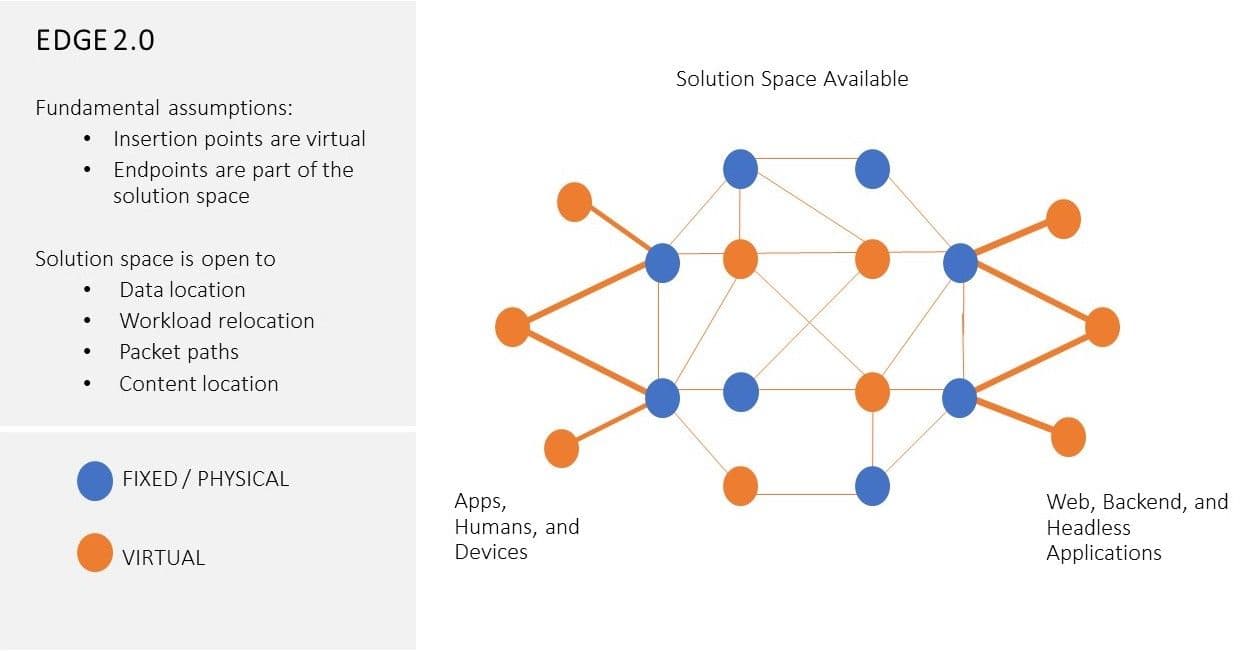

These two industry-level mega changes, modern container-based distributed applications and intelligent endpoints, are quickly making themselves part of advanced Edge solutions, in place of the old Content Delivery Network alone. The architecture principles of CDN or content-centric Edge 1.x solutions, rooted in solving the content delivery challenges associated with an Internet ecosystem circa 2000, are no longer suitable for solving the challenges of globally distributed applications and digital experiences of the future. The industry needs a new Edge paradigm: an Edge 2.0 paradigm. See Figure 2 for a conceptual view of the Edge 2.0 architectural sphere.

Put in business-oriented terms, today’s enterprise IT and digital business leaders would like to see Edge application distribution and security become an integral part of their digital pipeline and production process. Doing so will allow their applications to be "built once, delivered everywhere" globally with the same seamless, secure, and optimized user experience. The CDN-centric "application services" from existing Edge providers—Akamai, Fastly, and Cloudflare—require enterprises to painfully rearchitect their applications and retrofit to the CDN-centric Edge provider’s design, locations, services, and tools. The resulting application architectures are not easily integrated into the enterprise’s DevOps and IT workflows that drive its workloads' deployment and operation. As such, these "application services" rooted in the closed CDN-centric systems and services introduce yet another operational obstacle in the enterprise's quest for a seamless multi-cloud solution to proper and effective application distribution.

Edge 2.0

The core application challenges that the Edge emerged to address, speed and then security, still exist today. What has changed is the definition of application (from a static instance residing in a fixed location to "movable" container units), user (from a human user to an intelligent "thing"), and location (from an IP address to a logical identification). Business digitization, which has been significantly accelerated by COVID and is sweeping through every industry sector, gives rise to a new class of digital experience that cannot be addressed merely by moving content closer to the user. It requires a new Edge paradigm, one centered around holistic application distribution and based on a different set of technology design principles: the Edge 2.0 paradigm.

Edge 2.0 is designed with modern "users" and applications in mind. It combines the resources available in public clouds, customers’ on-prem private clouds or data centers, and even bare metal machines or intelligent devices in remote locations to virtually extend its elastic presence on demand. It embraces modern development and deployment methodologies to offer integrated application life-cycle management and enables DevOps with global observability. Where the security of applications is concerned, Edge 2.0 rejects the traditional perimeter-based defense approaches of the past and instead adopts a system that integrates security into the Edge platform itself and provides embedded tools to protect privacy. It separates the location for data processing and analytics from that of the application logic, while allowing all of it to be governed by enterprise policies. Edge 2.0 also recognizes when workloads need specific processing and targets them at special hardware to obtain optimum efficiency. All of this is commanded by a unified control plane.

The Edge 2.0 platform is based on the following key design principles:

- Unified control plane

The Edge can include any environment, including user endpoints and public clouds. A unified control plane ensures the common definition of security policies, data location policies, and user identity management across different environments and enforces execution via integration with automation and orchestration tools.

- Application-oriented

The Edge 2.0 platform integrates fully with application life cycle management tools. Application security policies, data location policies, identity management, and resource orchestration are "declared" via the unified control plane and enforced in any environment the platform runs inside. The Edge becomes a "declared property" of the target application (resulting in every application having its own "personalized" Edge) and is "executed" by the platform without requiring manual provisioning. Developers can simply focus on the application logic, application interactions (APIs), and business workflows without worrying about managing infrastructure or locations.

- Distributed security embedded in Edge platform

In the Edge 2.0 platform, application security policies are defined in a common way via the unified control plane. They are distributed for enforcement in every environment the application runs inside. Security capabilities embedded directly in the platform (e.g., encryption, best-of-breed bot detection) allow these security functions to move with the application by default.

- Distributed data processing and embedded analytics

The Edge 2.0 platform becomes a global fabric for application logic and a global fabric for data processing and analytics. Any digital service requires both data and application logic, but the location for storing, processing, and transforming data should not be required to be the same as where the application logic resides. The data location should be specified independently as a set of platform-level policies determined by factors such as data gravity, regulations (PCI, GDPR, and others), and the relative price/performance of processing. Similar to security policies, data location policies should be "declared" by the users via the unified control plane and enforced by the platform in any environment. The Edge 2.0 platform also has a role to play in other data management policies, such as data lineage (storing these details on data as embedded attributes), in addition to having a set of built-in operational capabilities for observability, telemetry streaming, ML tools, and ETL services.

- Software-defined elastic edge

In Edge 2.0, "Edge" is no longer defined by physical PoPs in specific locations. Instead, it is defined dynamically by the Edge 2.0 control plane over resources that exist anywhere a customer may desire: in public clouds, hypervisors, data centers or private clouds, or even physical machines in "remote" locations unique to their business. The connection network capabilities are also delivered in a software-defined way, overlaid atop private or public WAN infrastructure without herculean effort to assemble and configure. The platform will respond to the target application's "declaration of intent" by delivering a customized, software-defined elastic Edge at the application’s request. "Edge," and all that it establishes for the application as part of that declaration, will become a simple, easy-to-use property of the application.

- Hardware-optimized compute

Advances in processor and chipset technologies (specifically GPUs, DPUs, TPUs, and FPGAs, which are growing in capability and capacity) have made it feasible for specialized compute to significantly optimize resource use for specific workload types. An Edge 2.0 platform will interface with systems that possess this special hardware to target, land, and run specific application workloads that would benefit from this assistance. For example, it will find and configure GPU resources for AI/ML-intensive workloads or locate and integrate a DPU for special application security and networking services that an application needs. The hardware awareness of Edge 2.0 offers intriguing benefits for the creation of special-purpose, application-facing intelligent "industrial systems" and thus boundless possibilities for establishing attractive IoT solutions where real-time processing is needed locally. For example, an EV charging station can serve as a data aggregation point of presence for the copious amounts of data generated from the EV sensors, or an autonomous vehicle powered by Android OS can behave like a mobile data center running continuous hardware-assisted self-diagnostics. Our vision is that the Edge 2.0 platform will be able to support all these special-purpose intelligent systems and help deliver and improve upon our customers’ global digital experiences.
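The design principles above describe policies that are "declared" once and then enforced by the platform wherever the application runs. The following Python sketch shows one way that idea could look. Everything in it is hypothetical: `Site`, `AppIntent`, `Plan`, `resolve`, and the site names are invented for illustration and are not part of any F5 or Volterra product. The sketch assumes three things drawn from the text: data location is resolved independently of application-logic location, a workload may require a specific accelerator, and the security functions travel with the application.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Site:
    """A hypothetical resource the control plane can place work on."""
    name: str
    region: str                            # e.g. "eu" or "us"
    accelerators: frozenset = frozenset()  # e.g. {"gpu"} or {"dpu"}


@dataclass
class AppIntent:
    """What the application declares; it names no specific site."""
    app: str
    data_regions: frozenset            # regions where data may be stored/processed
    accelerator: Optional[str] = None  # special hardware the workload needs, if any
    security: tuple = ("encryption", "bot-detection")


@dataclass
class Plan:
    """The resolved, per-application edge."""
    app: str
    logic_sites: list  # where application logic may run
    data_sites: list   # where data may live (decided independently)
    security: tuple    # functions enforced at every logic site


def resolve(intent, sites):
    """Turn a declared intent into a placement plan (illustrative logic only)."""
    logic_sites = [
        s.name for s in sites
        if intent.accelerator is None or intent.accelerator in s.accelerators
    ]
    data_sites = [s.name for s in sites if s.region in intent.data_regions]
    if not logic_sites or not data_sites:
        raise ValueError(f"intent for {intent.app!r} cannot be satisfied")
    return Plan(intent.app, logic_sites, data_sites, intent.security)


SITES = [
    Site("public-cloud-us", "us", frozenset({"gpu"})),
    Site("private-dc-eu", "eu"),
    Site("remote-plant-eu", "eu", frozenset({"gpu", "dpu"})),
]

intent = AppIntent("vision-inspection", data_regions=frozenset({"eu"}), accelerator="gpu")
plan = resolve(intent, SITES)

if __name__ == "__main__":
    print(plan)
```

Here the GPU requirement makes both GPU-equipped sites eligible for application logic, while the EU-only data policy restricts data to the two EU sites. The two decisions are made separately, mirroring the separation of data location from application logic described above.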

Conclusion

Edge 2.0 is built to solve the challenges facing tomorrow’s distributed applications, with seamless global digital experiences in mind. It’s not 1998 anymore. The Internet ecosystem, cloud computing, and digital transformation have now evolved significantly beyond what one could imagine when the CDN architecture model was first conceived. By recognizing that this is not only a multi-cloud world but also a ubiquitous digital one, the Edge 2.0 paradigm seeks to solve the challenges of the future by disposing of the limiting assumptions of the past. It promises to enable true portability of applications across any environment along with the services they need to operate successfully, securely, at speed, and with seamless user experiences.

With the recent acquisition of Volterra, F5 is in the perfect position to lead the creation of this application-centric Edge 2.0 paradigm.
