The destination of digital transformation has always been a "digital as default" operating model. I can’t remember the last time someone left a phone book on my front porch or handed me a DVD of a new movie to watch. When I want to play a new game, I use the store on my console. I certainly don’t get up and drive to a physical game store and browse cartridges anymore. And updates? They’re automatically delivered. Seriously, even my favorite tabletop RPGs are digital today. I don’t have to drag thirteen books and several pounds of dice to someone’s house to play. I just need my laptop.

Not that I’m giving up my dice. ’Cause that’s just crazy talk.

But I am using more digital because business is giving us more digital.

In the past year, every industry has progressed rapidly – motivated by necessity – to the second and third phases of digital transformation.

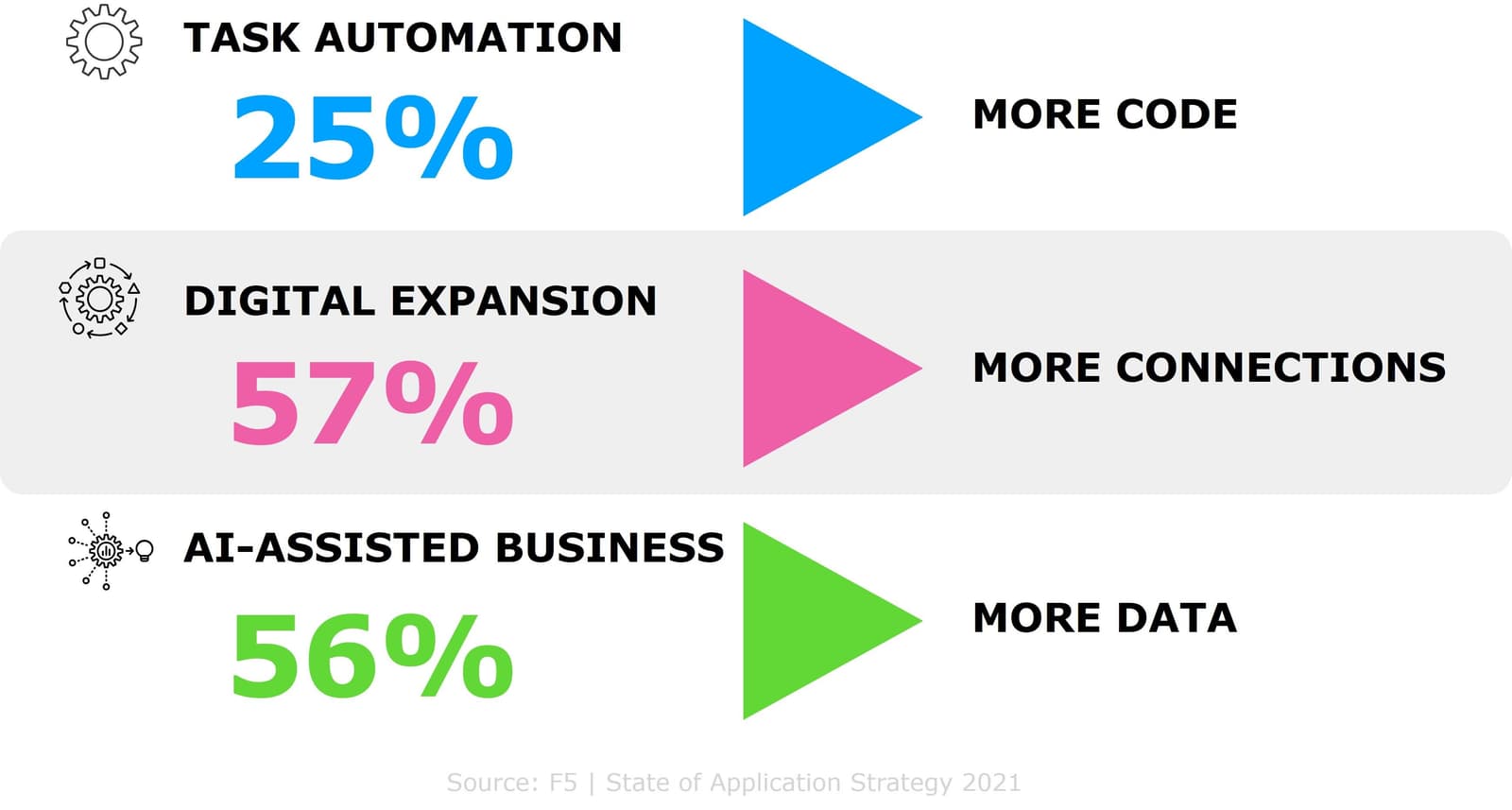

Phase 1: Task Automation

In this stage, digitalization leads businesses to turn human-oriented business tasks into various forms of "automation," which means more applications are introduced or created as part of the business flow. This began with automating well-defined, individual tasks to improve efficiency. A common example is interactive voice response (IVR) systems that answer common questions about a product or service but may need to hand off to a human representative. In this phase, individual tasks are automated but not consistently integrated.

The Unintended Consequence: More Code

It’s not just more code resulting from more applications; it’s also more code resulting from new application architectures. The average iPhone app has fewer than 50,000 lines of code. Google? Some 2 billion. Most apps fall somewhere in between. All that code needs to be maintained, updated, and secured, and organizations have been expanding their code bases across architectures for years. Now they’re operating five distinct architectures and three to four different languages, from COBOL to C to JS to Go.

And that doesn’t count the growing use of JSON, YAML, and Python as organizations adopt "infrastructure as code." More than half of organizations (52%) are doing so, based on our annual research, and that share is only going to keep growing as organizations dipping their toes into AI and ML begin to adopt operational practices that treat "models and algorithms" as code, too.

Phase 2: Digital Expansion

As businesses start taking advantage of cloud-native infrastructure and driving automation through their own software development, a new generation of applications emerges to support the scaling and further expansion of their digital model. The driver behind this phase is business leaders who become involved in application decisions designed to differentiate or provide unique customer engagement. For example, healthcare providers are increasingly integrating patient records and billing with admission, discharge, and scheduling systems. Automated appointment reminders can then eliminate manual processes. Focusing on end-to-end business process improvement is the common theme in this phase.

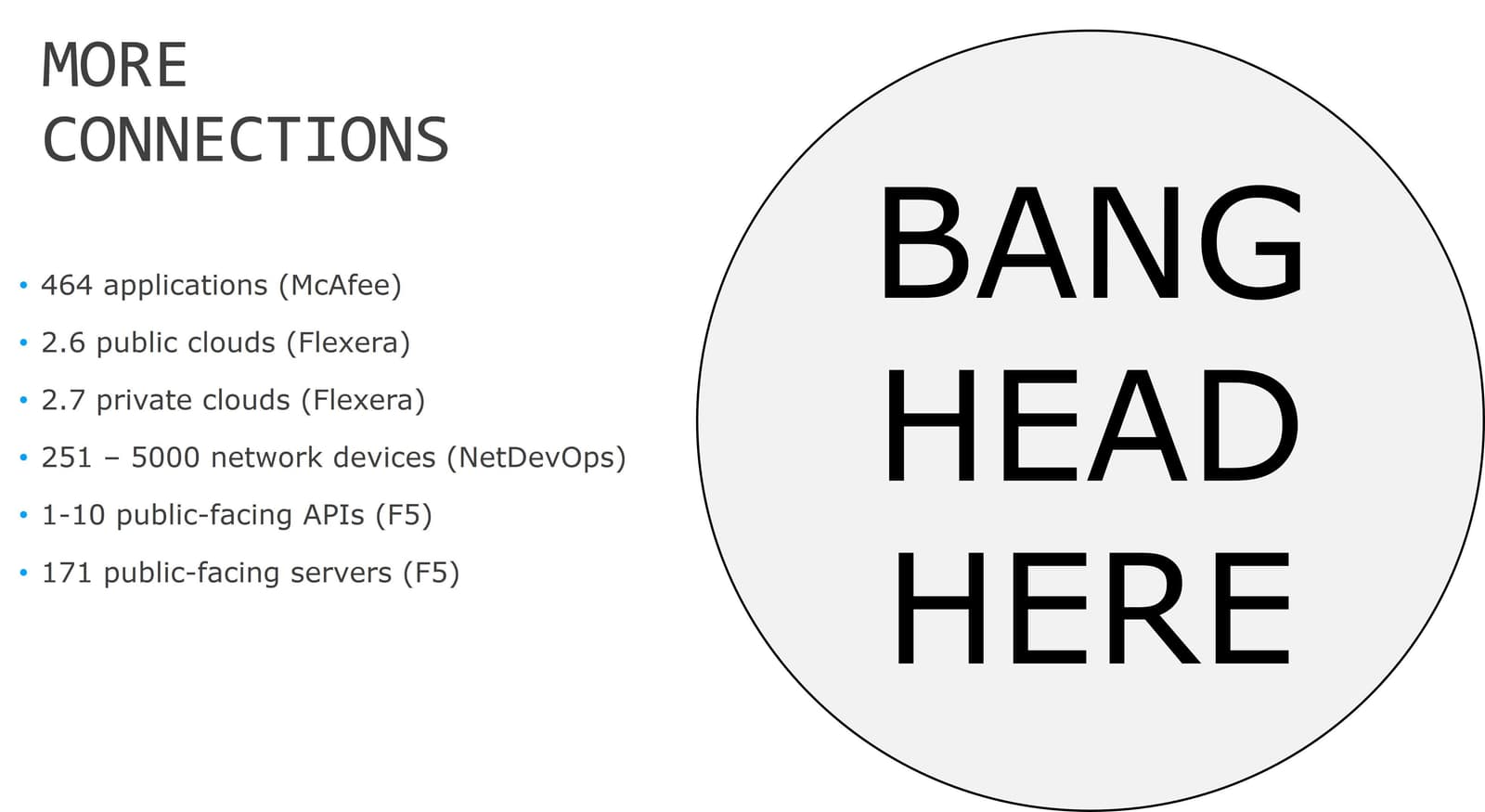

The Unintended Consequence: More Connections

But wait, there’s more. Digital as default and the modernization of IT means more connections—between applications, systems, devices, consumers, partners, APIs. Every one of them is a potential entry point, one that could ultimately result in a significant breach or compromise of systems.

The stakes here are high. Malware. Ransomware. Fraud. Lost revenue. The cost of failing to secure everything that could be attacked is overwhelming, and so is the question of how you’re going to do it.

Phase 3: AI-Assisted Business

As businesses advance further on their digital journey and adopt more advanced capabilities in application platforms, business telemetry and data analytics, and ML/AI technologies, they become AI-assisted. This phase opens areas of business productivity gains that were previously unavailable. For example, a retailer found that 10% to 20% of its failed login attempts came from legitimate users struggling with the validation process. Denying access by default represented a potentially significant revenue loss. Behavioral analysis can be used to distinguish legitimate users from bots attempting to gain access. Technology and analytics enabled AI-assisted identification of those users so they could be let in, boosting revenue and improving customer retention.
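To make the retailer example more concrete, here is a minimal sketch of behavior-based login scoring. It assumes three hypothetical signals (keystroke rhythm, time on page, and pointer activity) and hand-picked weights passed through a logistic function; a real AI-assisted system would learn its features and weights from labeled traffic rather than hard-coding them. Likely humans skip the extra validation step, clearly automated sessions are blocked, and everything in between still gets the usual challenge.

```python
import math
from dataclasses import dataclass


@dataclass
class LoginAttempt:
    # Hypothetical behavioral signals collected during a login attempt.
    keystroke_interval_stdev_ms: float  # humans type with an irregular rhythm
    seconds_on_page: float              # scripts often submit almost instantly
    pointer_events: int                 # mouse/touch movements before submit


# Illustrative, hand-picked weights; a trained model would learn these.
WEIGHTS = {"rhythm": 0.04, "dwell": 0.5, "pointer": 0.2}
BIAS = -4.0


def human_probability(attempt: LoginAttempt) -> float:
    """Logistic score in (0, 1); higher means more human-like behavior."""
    z = (
        BIAS
        + WEIGHTS["rhythm"] * attempt.keystroke_interval_stdev_ms
        + WEIGHTS["dwell"] * min(attempt.seconds_on_page, 10.0)  # cap long idle sessions
        + WEIGHTS["pointer"] * min(attempt.pointer_events, 20)
    )
    return 1.0 / (1.0 + math.exp(-z))


def decide(attempt: LoginAttempt, allow_at: float = 0.8, block_below: float = 0.3) -> str:
    """Map the score to an action instead of denying everyone by default."""
    score = human_probability(attempt)
    if score >= allow_at:
        return "allow"      # skip the extra validation step for likely humans
    if score < block_below:
        return "block"      # behavior looks automated
    return "challenge"      # uncertain: fall back to the usual validation
```

The three-way outcome is the design point: instead of a binary allow/deny, uncertain sessions keep the existing friction while confident calls remove it.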

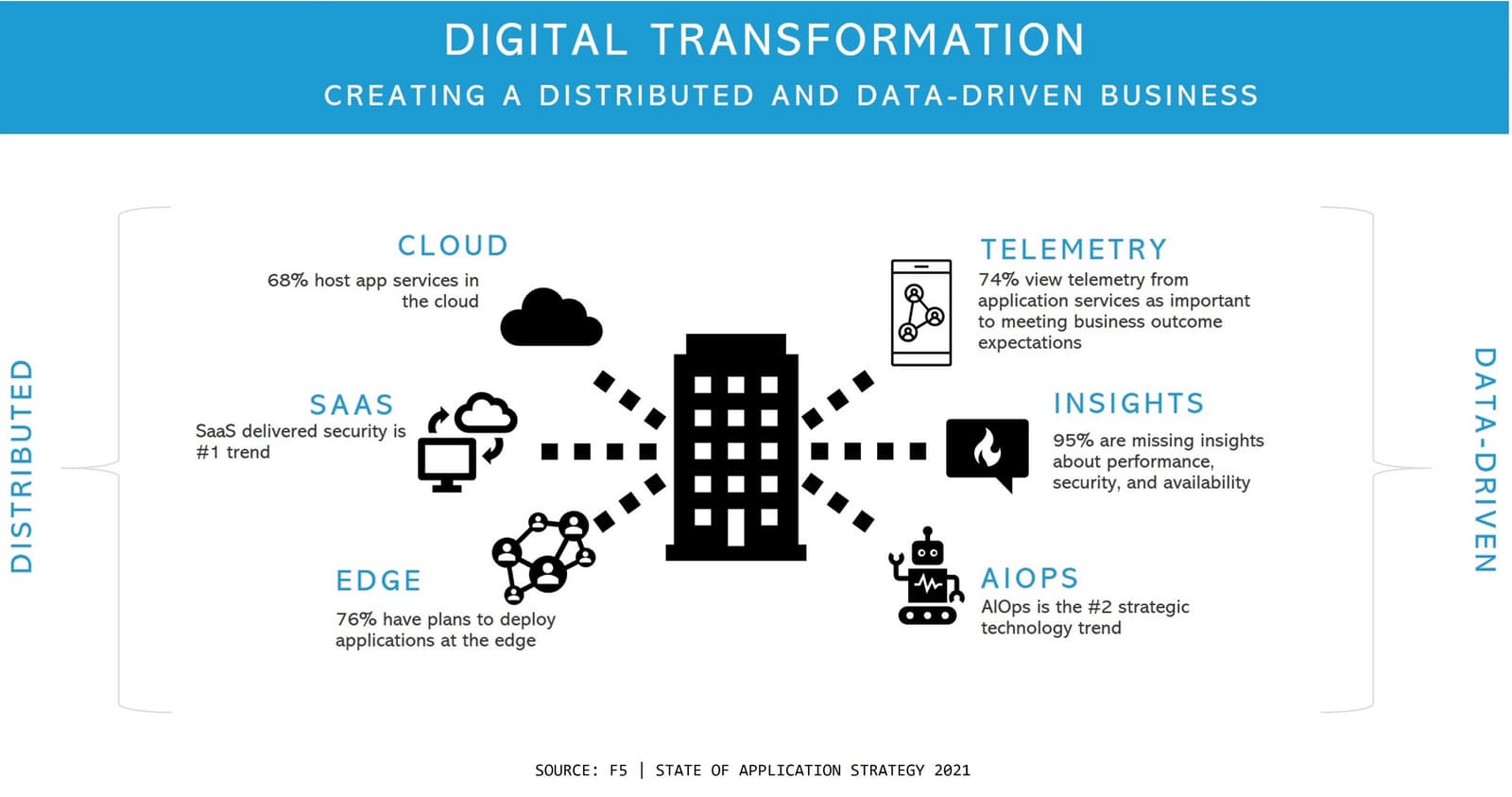

The Unintended Consequence: More Data

Lastly, digital as default necessarily results in more data. Not just customer data – orders, products, addresses, payment details – but operational data like metrics and logs. A digital business needs telemetry to understand visitors, engagement patterns, performance, unusual flows, and anomalous behavior. That telemetry isn’t something that can be analyzed and thrown out, at least not right away. Days, if not weeks or months, of telemetry can be required to properly establish operational baselines and then uncover patterns that feed into business decisions as well as anomalies indicative of an attack.
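Establishing a baseline can be as simple as summarizing historical telemetry and flagging values that stray too far from it. This sketch assumes a single metric (requests per minute, chosen purely for illustration), uses the mean and standard deviation of past samples as the baseline, and applies a z-score threshold of 3. Production systems typically also account for seasonality, such as weekdays versus weekends or peak hours, which is part of why longer windows of data help.

```python
import statistics
from typing import Sequence


def build_baseline(history: Sequence[float]) -> tuple[float, float]:
    """Return (mean, population standard deviation) of historical samples."""
    if len(history) < 2:
        raise ValueError("need at least two samples to establish a baseline")
    return statistics.mean(history), statistics.pstdev(history)


def is_anomalous(value: float, baseline: tuple[float, float], z_threshold: float = 3.0) -> bool:
    """Flag a new observation whose z-score exceeds the threshold."""
    mean, stdev = baseline
    if stdev == 0:
        # A perfectly flat history: any deviation at all is unusual.
        return value != mean
    return abs(value - mean) / stdev > z_threshold


# Example: requests per minute observed during a quiet period.
history = [100, 102, 98, 101, 99, 100, 103, 97]
baseline = build_baseline(history)
```

With that history, a reading of 104 falls within normal variation, while a sudden 250 would be flagged for a closer look.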

All that data needs attention. It needs to be normalized, stored, processed, analyzed, and curated. And it needs security, because some of it may contain protected customer information subject to compliance and regulatory oversight.
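One common step before telemetry is stored is masking obvious personal data. This sketch assumes records are flat dictionaries and looks only for email addresses and card numbers that pass the Luhn checksum; the patterns are illustrative, far from complete, and no substitute for a proper data classification effort.

```python
import re

# Illustrative patterns only; real PII detection needs much broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,16}\b")


def _luhn_ok(digits: str) -> bool:
    """Luhn checksum, used to avoid masking arbitrary long numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def _mask_card(match: re.Match) -> str:
    digits = re.sub(r"\D", "", match.group())
    return "[CARD]" if _luhn_ok(digits) else match.group()


def redact(record: dict) -> dict:
    """Return a copy of a telemetry record with likely PII masked in string fields."""
    clean = {}
    for key, value in record.items():
        if isinstance(value, str):
            value = EMAIL.sub("[EMAIL]", value)
            value = CARD_CANDIDATE.sub(_mask_card, value)
        clean[key] = value
    return clean
```

The checksum step matters for telemetry in particular: a 13-digit millisecond timestamp would match the raw pattern but is left alone because it fails the Luhn check.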

The Unintended Result of Digital Transformation: More Complexity

No matter how fast or slowly an organization progresses through these phases, the result is the same: more complexity.

And we all know that complexity is the enemy of security.

For security professionals, digital as default means new challenges. One way to deal with them is to break them down into more manageable categories.

Simplify to Keep Your Sanity

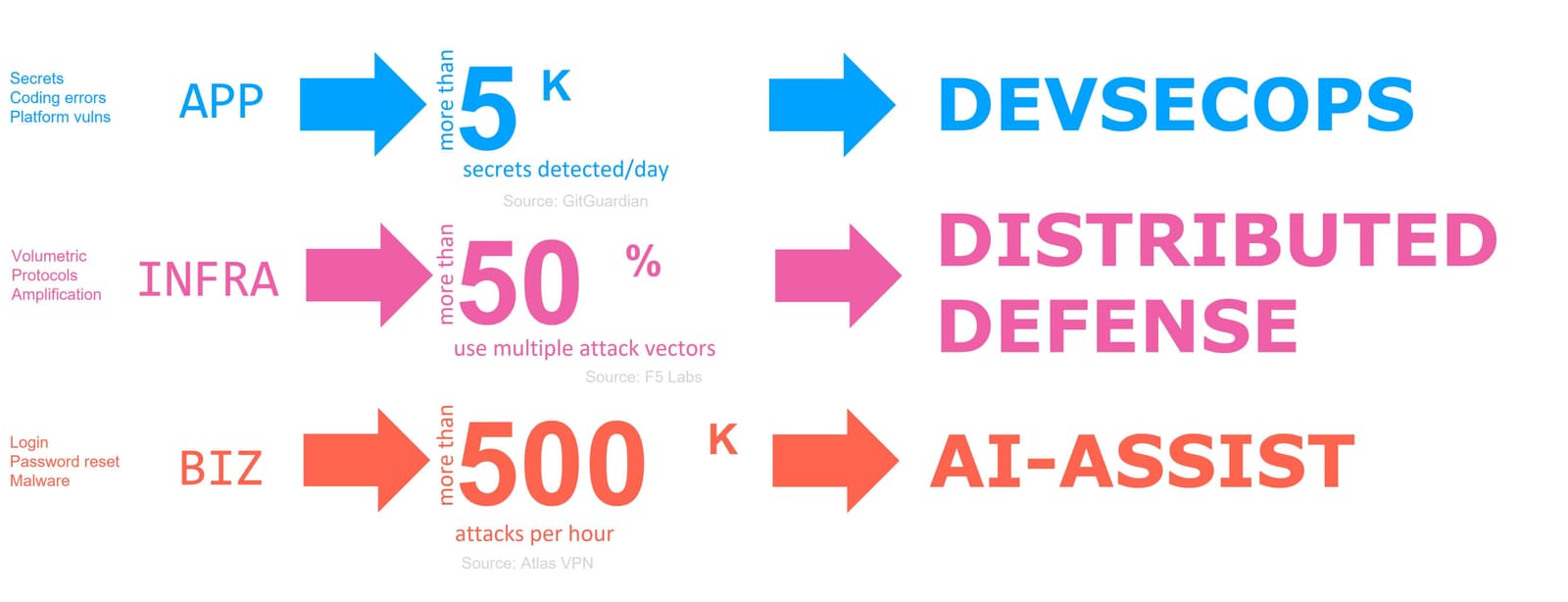

Most of the security challenges can be broadly grouped into three categories: application, infrastructure, and business. These higher-level categories are good for managing up when you need funding or executive support. They’re also good for triage when determining the best approach to mitigate them.

App Layer → DevSecOps

App layer vulnerabilities can be addressed with a shift-left approach, that is, making security a part of every pipeline, from development to deployment to operation. These are almost always inadvertent vulnerabilities, introduced through a human action that may itself have been intentional or accidental. They span the stack, from secrets shared through personal developer repositories to misconfigured S3 buckets. Tools can identify vulnerabilities in third-party components and other dependencies to make sure you’re using the latest, greatest, and hopefully safest version of that script.
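As a rough illustration of what dependency scanning does, this sketch compares pinned versions against a table of minimum fixed versions. The package names and advisory data are hypothetical, and the version parser handles only plain dotted numbers; real scanners pull advisories from vulnerability databases and understand full versioning schemes such as semver or PEP 440.

```python
# Hypothetical advisory data: package name -> first version containing the fix.
MIN_SAFE = {"example-http": "2.4.1", "example-yaml": "5.4.0"}


def parse_version(version: str) -> tuple[int, ...]:
    """Parse a simple dotted version like '2.4.1' into a comparable tuple."""
    return tuple(int(part) for part in version.split("."))


def vulnerable_dependencies(pinned: dict[str, str]) -> list[str]:
    """List pinned packages older than their first fixed version."""
    findings = []
    for name, version in sorted(pinned.items()):
        fixed = MIN_SAFE.get(name)
        if fixed and parse_version(version) < parse_version(fixed):
            findings.append(f"{name} {version} < {fixed}")
    return findings
```

Tuple comparison does the heavy lifting here: (2, 3, 9) sorts before (2, 4, 1), so a pin at 2.3.9 is reported while 5.4.0 is not.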

From WAF to DAST to RASP to SAST, tools abound to help scan and secure code, and most of them are fully capable of integrating with the development pipeline. By automating scans, you effectively eliminate a hand-off and the time sink that comes with it. The industry calls it DevSecOps, but you could also call it parallel processing or multi-tasking. It means the timeline doesn’t stop when a team or individual is unavailable or overbooked. It also means more thorough analysis and the ability to catch errors earlier in the process.
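Automating the hand-off usually comes down to a gate: the pipeline runs the scanners, then fails the build if any finding meets a severity threshold. This sketch assumes a hypothetical JSON report of the form `{"findings": [{"id": ..., "severity": ..., "file": ...}]}`; real scanners each have their own output formats, so an adapter would normalize them first.

```python
import json

# Severity ordering used to compare findings against the threshold.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings: list[dict], fail_at: str = "high") -> int:
    """Return 1 (fail the build) if any finding is at or above `fail_at`, else 0."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [
        f for f in findings
        if SEVERITY_RANK.get(str(f.get("severity", "")).lower(), 0) >= threshold
    ]
    for f in blocking:
        print(f"BLOCKING: {f.get('id', '?')} ({f['severity']}) in {f.get('file', '?')}")
    return 1 if blocking else 0


def gate_from_report(report_json: str, fail_at: str = "high") -> int:
    """Parse a (hypothetical) scanner report and apply the gate."""
    report = json.loads(report_json)
    return gate(report.get("findings", []), fail_at)
```

In a CI job, the return value would be handed to `sys.exit` so that a non-zero result stops the pipeline without anyone having to read the report by hand first.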

Infrastructure Vulnerabilities → Distributed Defense

More traditional vulnerabilities like volumetric DDoS and DNS amplification live in the infrastructure layer. You really can’t shift left to mitigate these and you definitely can’t eliminate them, because you don’t control attackers. You can only control your response.

Infrastructure layer vulnerabilities need more of a shield-right approach, in which security services defend against live attacks, because there’s no way to "process" them out.

The app is the perimeter today, and organizations have apps all over the globe. Ignoring SaaS, organizations use, on average, 2.7 different public clouds that extend existing data centers. That’s plural.

They also have a lot of distributed endpoints—like my corporate laptop. Even before work from home became a more or less permanent thing, people traveled—and that meant mobile distributed endpoints.

This is driving the need for distributed, app- and identity-centric solutions to defend infrastructure and applications. That means Secure Access Service Edge (SASE) and Zero Trust, and using the edge to move defensive infrastructure services closer to where attacks originate. SASE and Zero Trust Network Access (ZTNA) shift policy from IP addresses and networks to users and devices, and require proof of identity to access applications and resources.
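The shift from networks to identities is easiest to see in the shape of an access decision. In this sketch, the entitlement table and the device posture flag are hypothetical stand-ins for an identity provider and a device management service. The point is that the source IP is recorded but never consulted, so being "inside" the corporate network grants nothing by itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_verified: bool      # identity proven, e.g. via MFA at the identity provider
    device_id: str
    device_compliant: bool  # hypothetical posture check (patched, encrypted, managed)
    source_ip: str          # logged for auditing, deliberately NOT used for the decision
    resource: str


# Hypothetical entitlements: which users may reach which applications.
ENTITLEMENTS = {"alice": {"payroll", "wiki"}, "bob": {"wiki"}}


def authorize(req: AccessRequest) -> tuple[bool, str]:
    """Decide per request based on user, device, and resource, never on network location."""
    if not req.mfa_verified:
        return False, "identity not proven"
    if not req.device_compliant:
        return False, "device fails posture check"
    if req.resource not in ENTITLEMENTS.get(req.user, set()):
        return False, "user not entitled to resource"
    return True, "allowed"
```

A request from a home network can succeed while one from an internal 10.x address is refused; the outcome depends only on who is asking, from what device, and for what.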

Business Vulnerabilities → AI-Assistance

Finally, there are business layer vulnerabilities. Like infrastructure layer vulnerabilities, these are inherent; you can’t process them out. You can’t really eliminate a login page or a password reset process, so you’re stuck defending against attacks that will invariably batter your defenses.

And batter them they will. F5 Labs research notes that the average DDoS attack size increased by 55% over the past year, with education one of the most targeted industries in early 2021. Credential stuffing attacks were launched against video gamers in 2020 to the tune of more than 500,000 per hour. These must be dealt with in real time.
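More than 500,000 attempts per hour works out to roughly 139 attempts every second, far beyond what anyone can review by hand. One real-time signal for credential stuffing is a single source failing logins across many different usernames in a short period. This sketch tracks that with a sliding window; "source" is an assumption and could be an IP address or a device fingerprint, and the window and threshold values are illustrative. Because attackers often rotate sources, a real deployment would combine a signal like this with others.

```python
from collections import defaultdict, deque


class StuffingDetector:
    """Flags sources whose failed logins span many distinct usernames within a sliding window."""

    def __init__(self, window_s: float = 60.0, max_distinct_users: int = 10):
        self.window_s = window_s
        self.max_distinct_users = max_distinct_users
        # source -> deque of (timestamp, username); timestamps assumed non-decreasing
        self._events = defaultdict(deque)

    def record_failure(self, source: str, username: str, ts: float) -> bool:
        """Record one failed login; return True if the source now looks like stuffing."""
        events = self._events[source]
        events.append((ts, username))
        # Evict failures that have fallen out of the window.
        while events and ts - events[0][0] > self.window_s:
            events.popleft()
        distinct_users = {user for _, user in events}
        return len(distinct_users) > self.max_distinct_users
```

Counting distinct usernames rather than raw failures is the design choice here: a real customer retrying their own password repeatedly never trips the detector, while a script cycling through a stolen credential list does.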

So it’s no surprise that AI-assisted security is being adopted at a frenetic pace, to keep up with the crazy rate at which new attacks, and new ways to execute old ones, are developed and launched.

Remember, science tells us that human beings can only process about 50-60 bits per second. That’s why it’s so hard to multi-task. Data is flowing in from systems, devices, applications, clients, and the network at a much higher rate than we, as human beings, can process. That’s why we have dashboards and visualizations, but those don’t actually tell us what’s really going on. They are snapshots of a moment in time, too often based solely on binary metrics: up/down, fast/slow. In our annual research, 45% of respondents cited the ability to accurately process data and predict potential attacks as "missing" from their current monitoring solutions. AI is one answer to that, with the promise of real-time analysis of data via trained models that can detect a possible attack and alert us to it.

Digital as Default is the New Normal

Ultimately, all this digitization is creating a distributed and data-driven world. It’s digital as default. And that means more ways for attackers to gain access, exfiltrate data, and generally make a mess of things. In a digital-as-default world, security needs a digital stack, and that means DevSecOps, a distributed defense model, and AI-assisted security.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
