Since its inception, the Internet has evolved in ever-faster cycles, improving the application delivery process and increasing interactivity, driven by advances in technology and by market forces. Enterprises and governments must work to keep up with user expectations and competitive pressures. Older technologies that impeded the user experience (e.g., Adobe Flash, HTTP/1.x) have fallen by the wayside, and newer technologies more attuned to the user experience (e.g., HTML5) have taken over.

In this paper, we’ll quantify some of the more recent changes affecting user experience, which IT groups should understand for operational and planning purposes in 2017 and beyond:

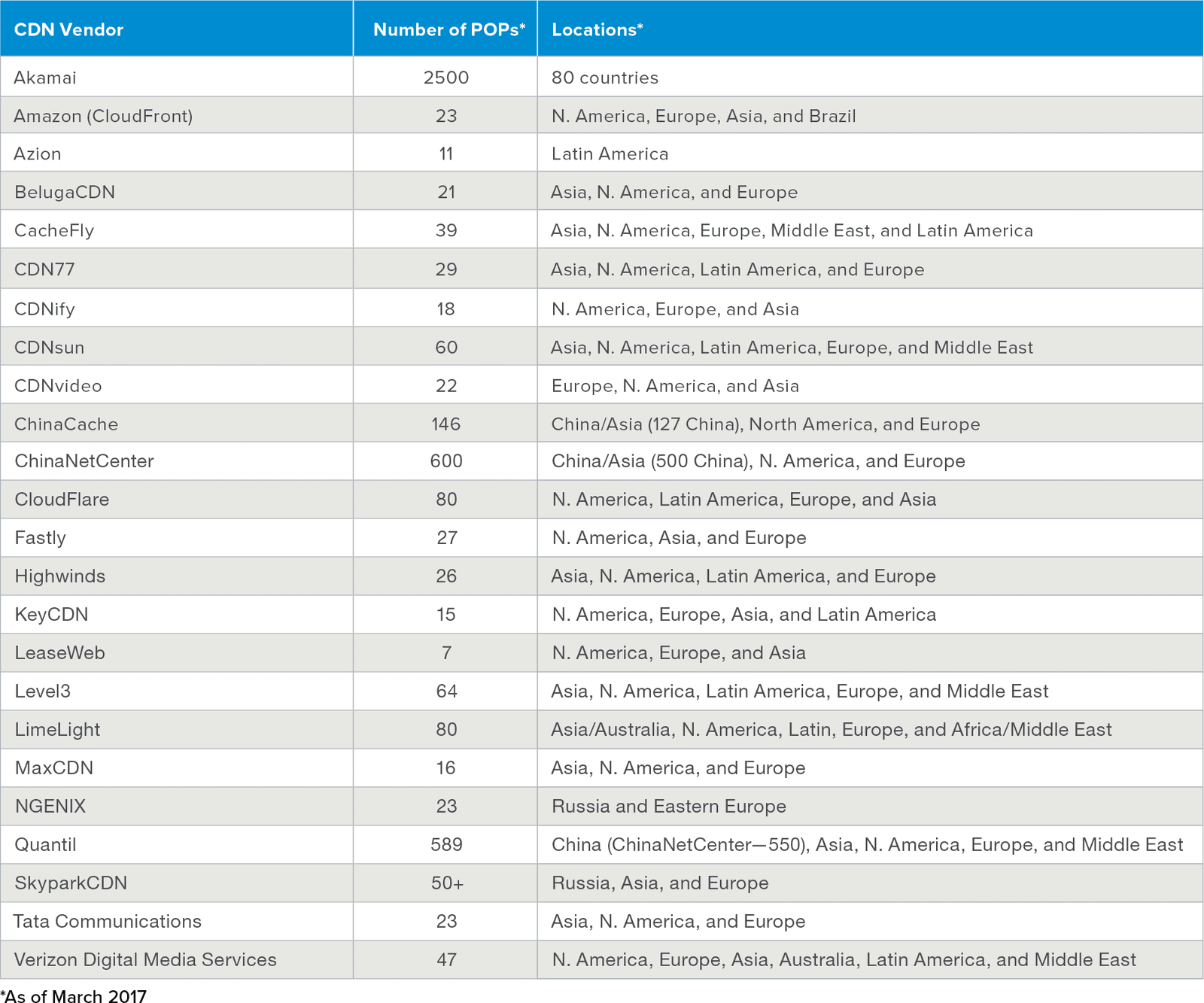

CDN vendors have rapidly expanded the number of points of presence (PoPs) they operate, mostly near population centers, to reduce latency in delivering content to users (~30 ms in many cases). Below is a list of the most popular CDNs that have built out infrastructure across the world to ensure fast delivery of content.1

As CDNs have boosted the number of PoPs they operate, and their proximity to users, and as the user base for applications has gone global, websites have increased their reliance on CDNs. In March 2015, 11% of the Internet’s available HTML content was hosted on CDNs.2 By 2016, that figure had risen to 17%, and as of March 2017 it had reached 20%. In two years, the share of HTML content hosted on CDNs nearly doubled, a trend that has greatly reduced latency for content delivery to users.
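As a quick check on the “nearly doubled” figure, the cited shares can be turned into an overall growth factor and an implied compound annual rate. This is plain arithmetic on the 11% (March 2015) and 20% (March 2017) values above and assumes nothing beyond them:

```python
# Share of HTML content hosted on CDNs (HTTP Archive figures cited above).
share_mar_2015 = 0.11
share_mar_2017 = 0.20
years = 2  # March 2015 to March 2017

# How many times larger the share became over the period.
growth_factor = share_mar_2017 / share_mar_2015
# Constant yearly rate that would produce the same overall growth.
annual_rate = growth_factor ** (1 / years) - 1

print(f"growth factor: {growth_factor:.2f}x")       # 1.82x
print(f"implied annual growth: {annual_rate:.1%}")  # 34.8%
```

In other words, the CDN-hosted share grew by roughly 82% over the two years, or about 35% per year.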

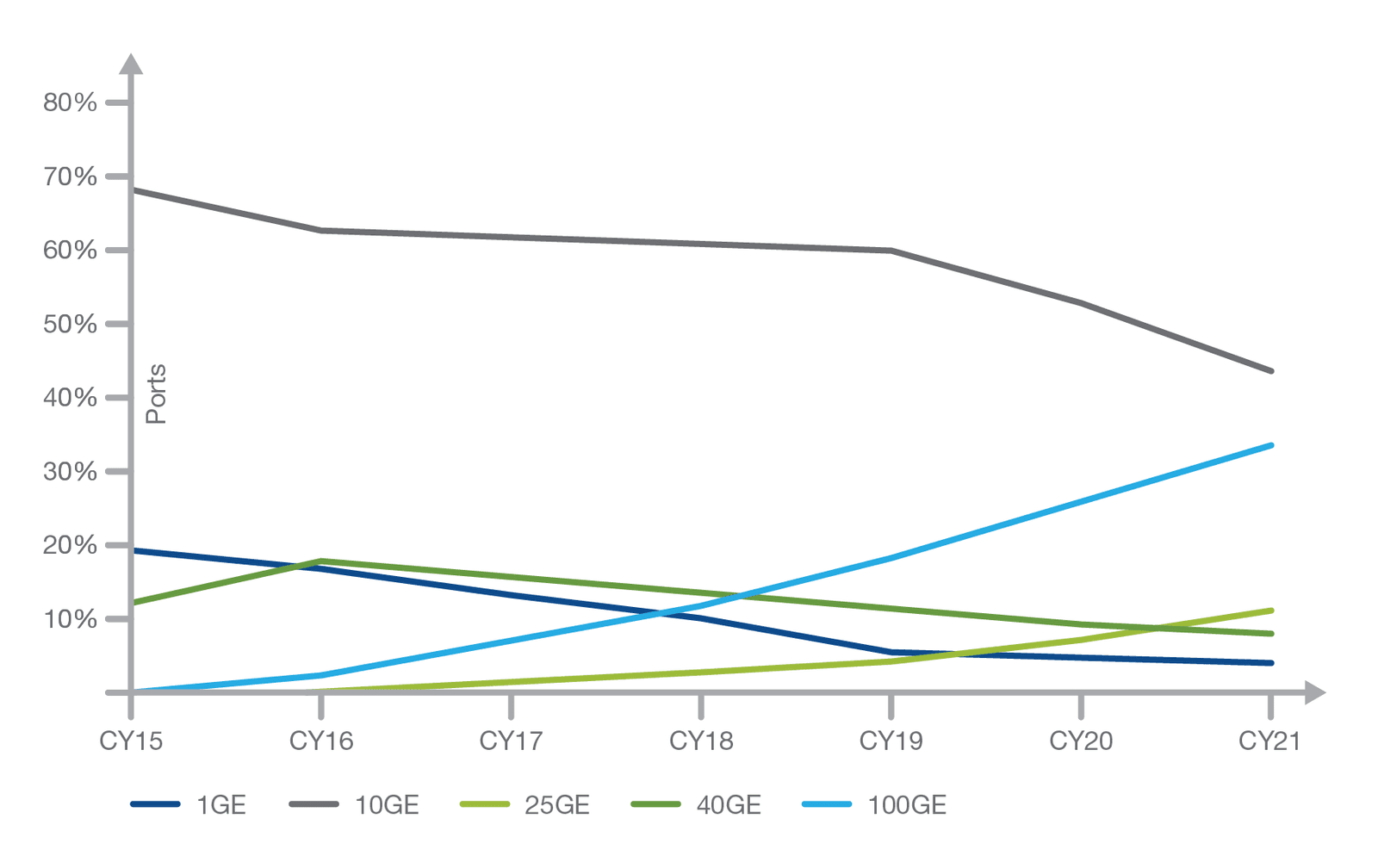
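Much of the latency benefit of PoPs near population centers follows from physics. As a rough sketch, assuming light in optical fiber travels at about 200,000 km/s (roughly two-thirds of its speed in a vacuum) and ignoring routing detours, queuing, and processing delays, the minimum round-trip time grows linearly with distance. The distances in the example are hypothetical:

```python
# Assumed speed of light in optical fiber: ~200,000 km/s, i.e. ~200 km per millisecond.
# Real paths add routing detours, queuing, and processing delay on top of this bound.
FIBER_KM_PER_MS = 200.0


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time (ms) over a straight fiber path of distance_km."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    # A round trip covers the distance twice.
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical distances: a nearby PoP versus progressively more distant origins.
    for label, km in [("nearby PoP", 300), ("cross-continent origin", 4000),
                      ("intercontinental origin", 9000)]:
        print(f"{label:>24}: >= {min_rtt_ms(km):.0f} ms RTT")
```

Because connection setup and each dependent request cost at least one round trip, serving content from a PoP a few hundred kilometers away instead of an origin thousands of kilometers away saves tens of milliseconds per round trip, independent of available bandwidth.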

Implementation of 10G/40G/100G Ethernet ports (and corresponding higher-capacity switches and routers) dramatically improved Internet performance for corporate networks, WAN connections, and remotely located endpoints. Over the next four years, as enterprise and service provider networks continue upgrading to 25G and 100G connections, bandwidth capacity is expected to reach nearly 5x its 2015 level.3

Investment in upgrading data center and edge infrastructure has helped keep service provider networks and enterprise data centers from becoming bottlenecks for application traffic heading out to clients.

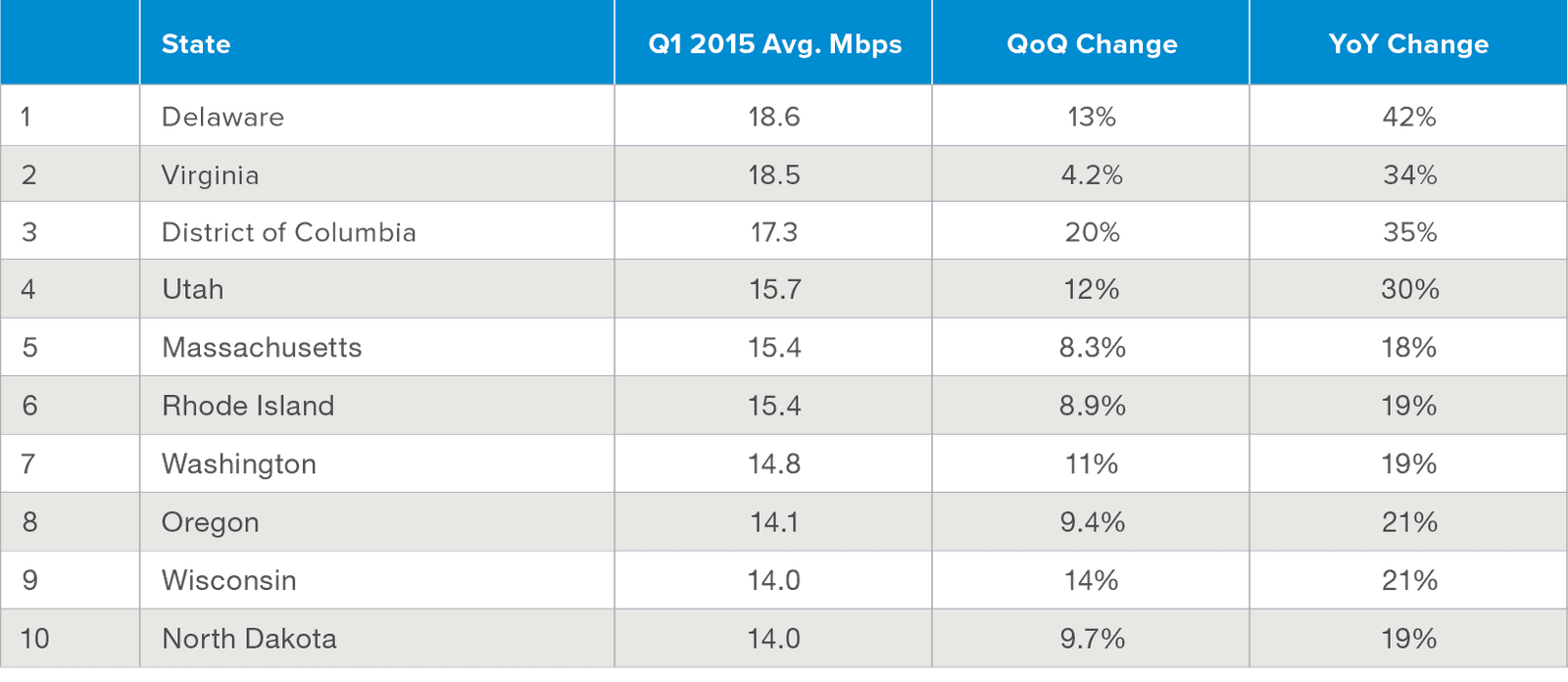

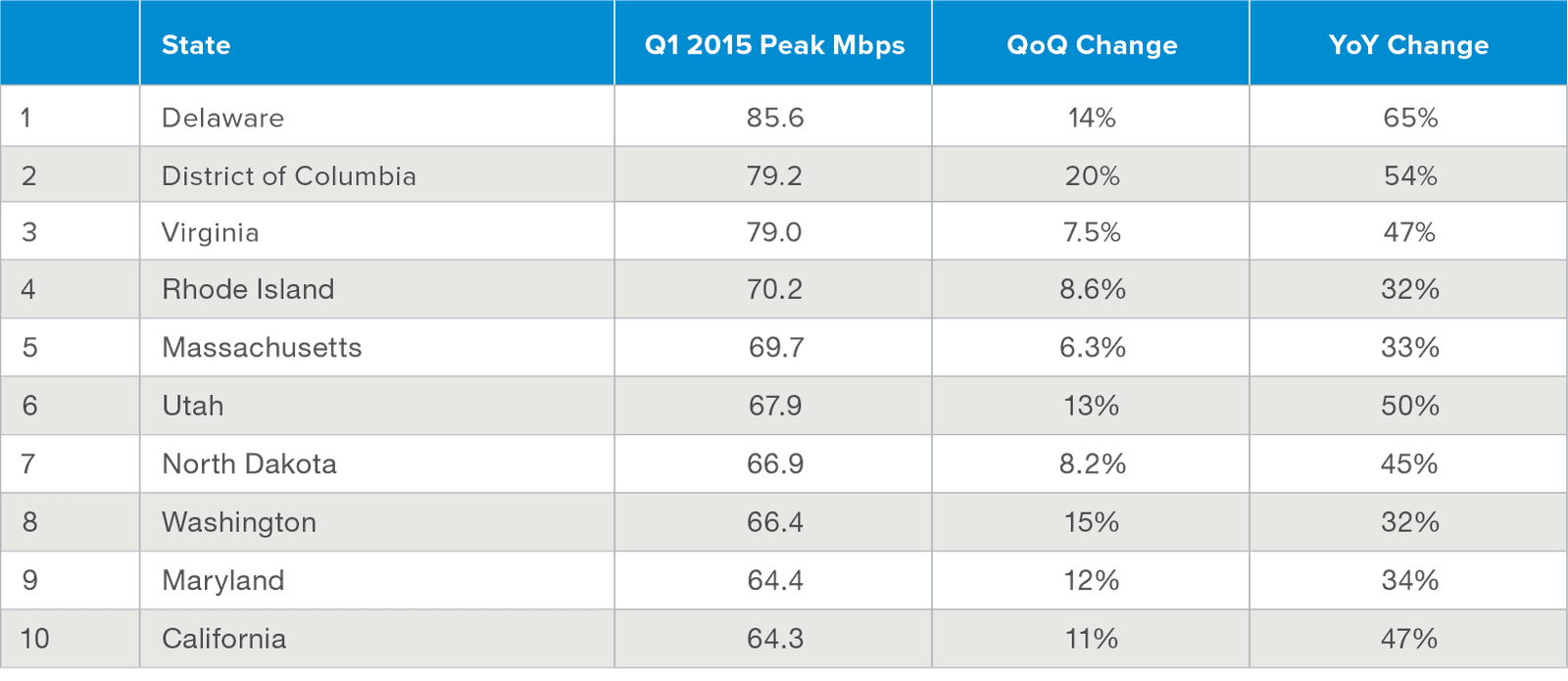

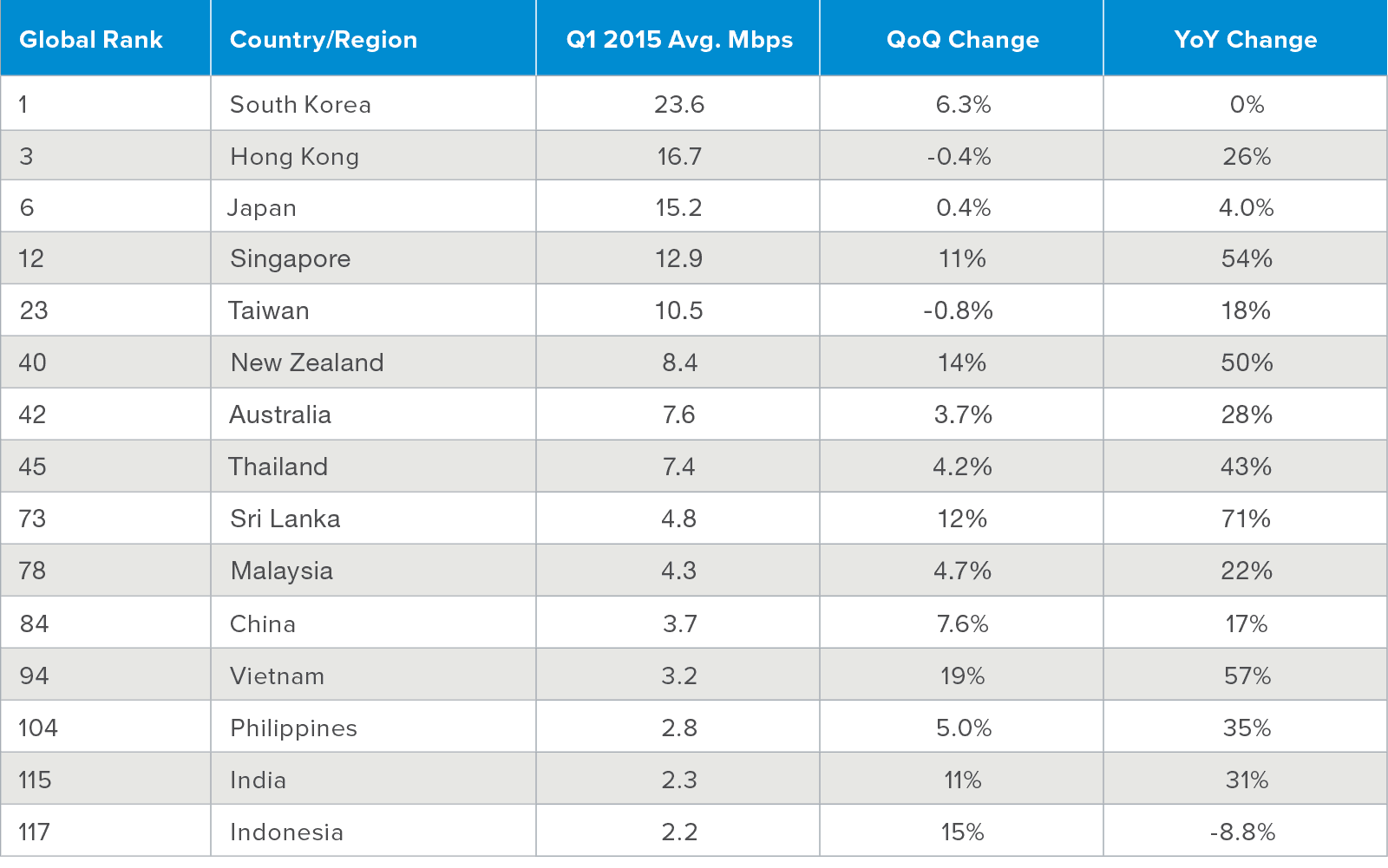

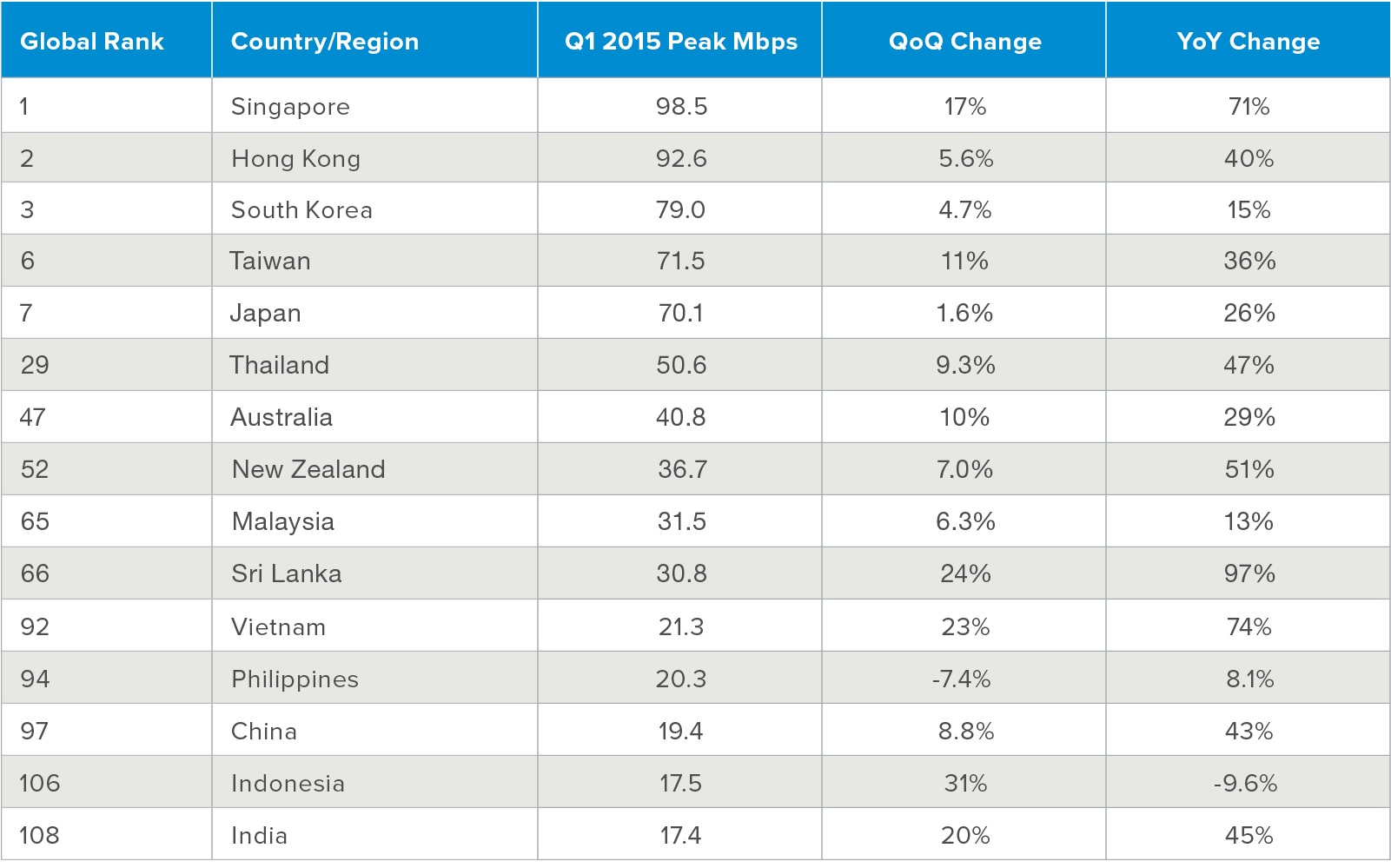

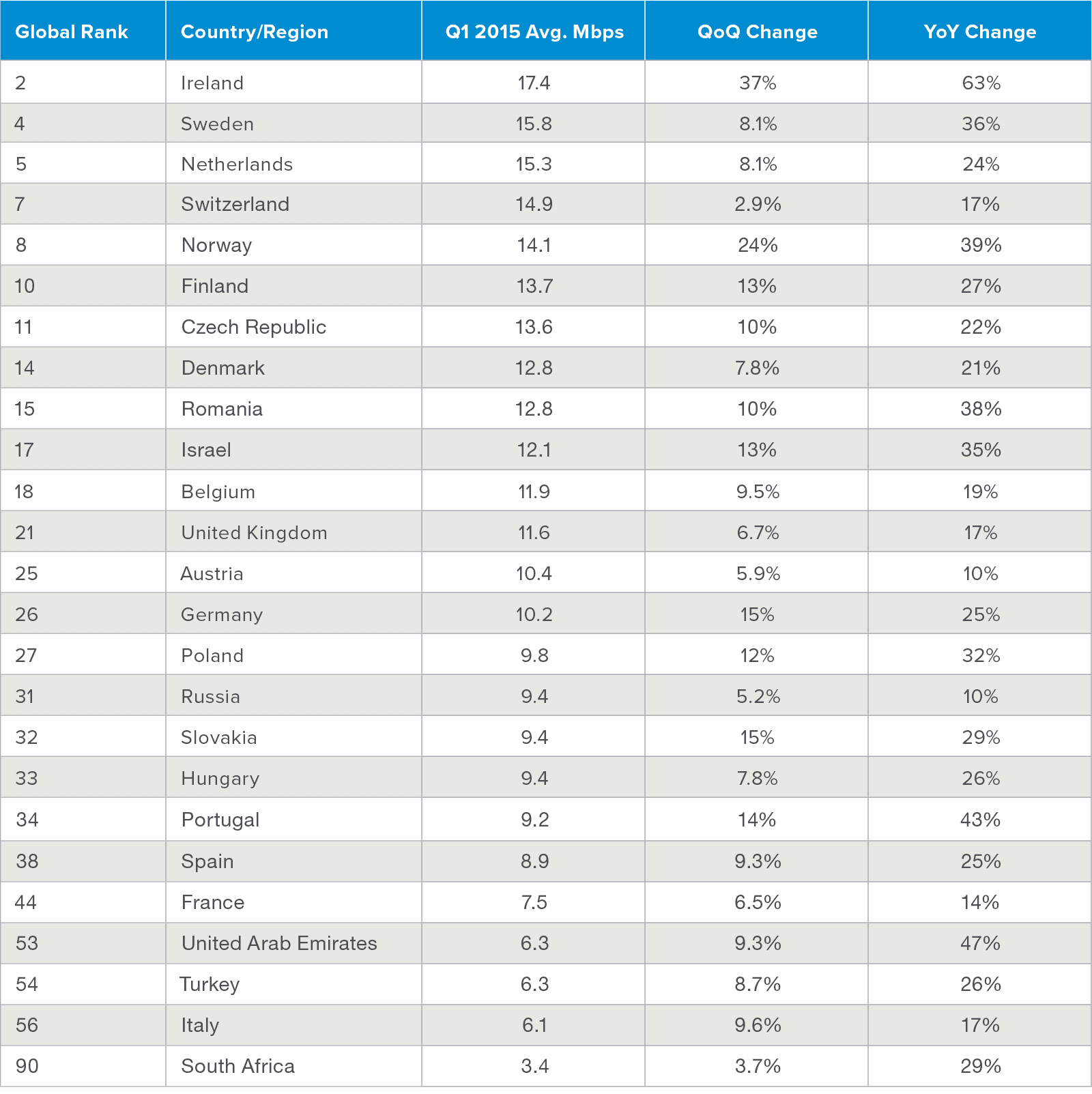

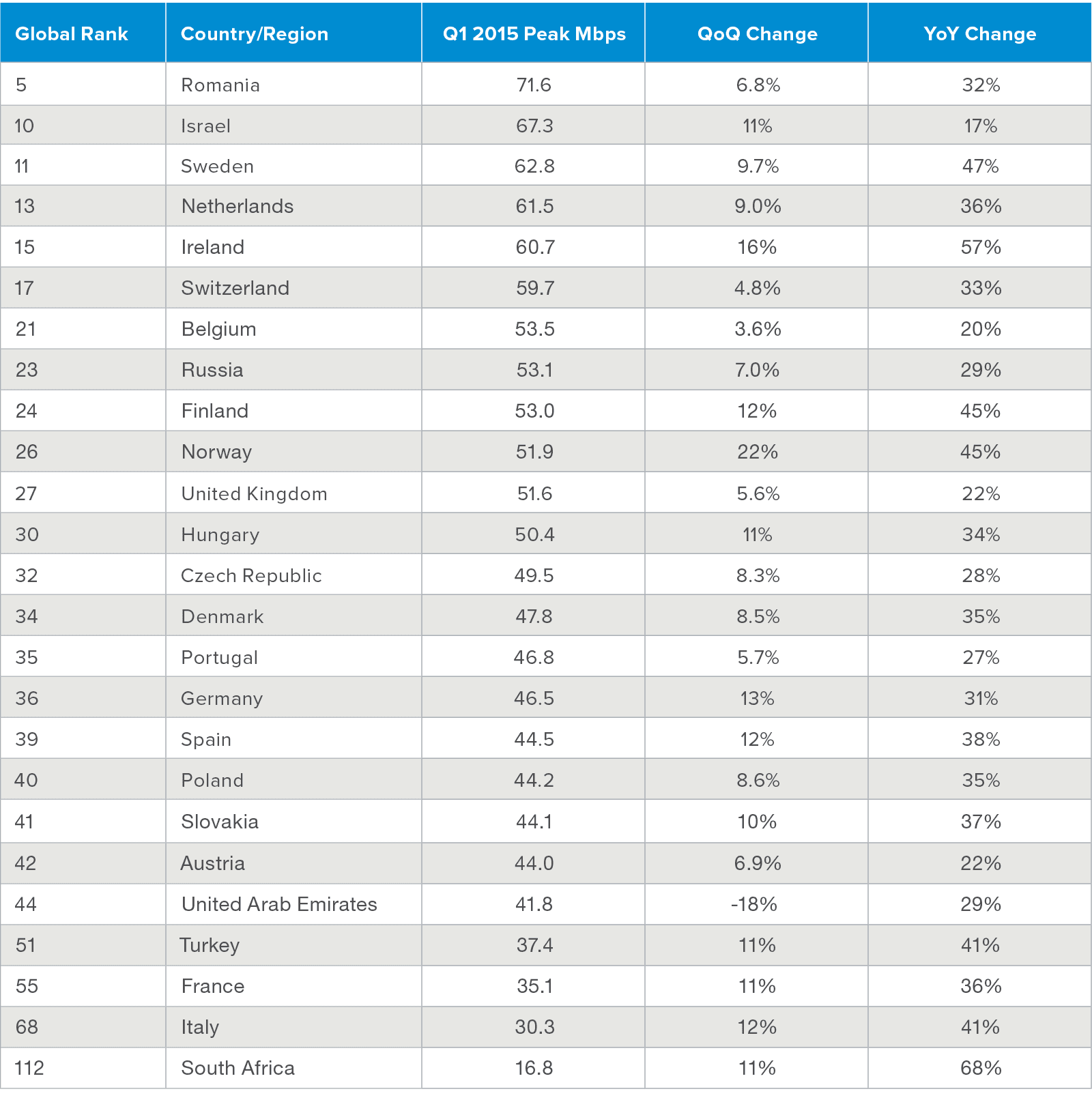

Driven by increased competition, Internet Service Providers (ISPs) and mobile carriers worldwide have increased download speeds on the “last mile” (the final leg of telecom networks, which delivers connectivity to customers) and for mobile users. Below are charts showing 2015 download performance for ISPs in the U.S., Asia, and Europe.4

Worldwide, “last mile” Internet connectivity has become much faster, and the bandwidth available today helps deliver a better user experience with web applications.

The development of the HTTP/2 specification was driven primarily by performance issues with HTTP/1.x. Google developed SPDY (first announced in 2009) and by 2012 had propagated it widely to optimize delivery of web content and overcome the inefficiencies of the earlier HTTP/1.1 protocol design. HTTP/2 builds on SPDY, focusing primarily on reducing latency and the number of TCP connections, while keeping the semantics of HTTP/1.1 (methods, status codes, and header fields) intact.

To improve performance, the HTTP/2 protocol introduces the following changes:

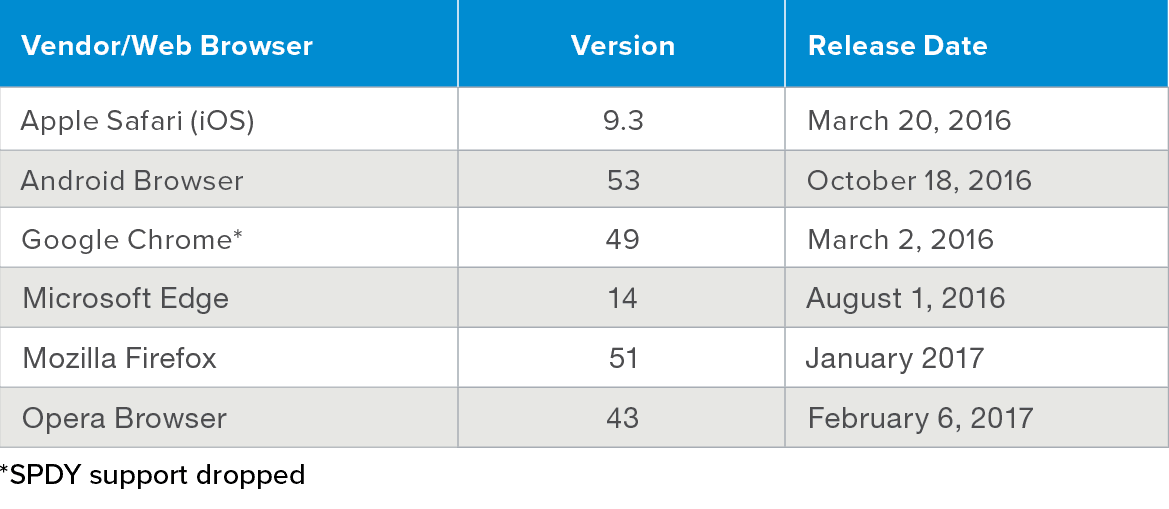
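One change that underlies several others is HTTP/2’s binary framing layer: every frame begins with a fixed 9-octet header (RFC 7540, Section 4.1) carrying a 24-bit payload length, an 8-bit type, 8 bits of flags, one reserved bit, and a 31-bit stream identifier. The stream identifier is what lets many requests share a single TCP connection. A minimal parser, offered as a sketch rather than a complete HTTP/2 implementation:

```python
import struct
from typing import NamedTuple

# Frame type codes from RFC 7540, Section 6.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


class FrameHeader(NamedTuple):
    length: int      # payload length in octets (24-bit field)
    frame_type: str  # frame type name, e.g. "HEADERS"
    flags: int       # type-specific flag bits
    stream_id: int   # 31-bit stream identifier; 0 refers to the whole connection


def parse_frame_header(data: bytes) -> FrameHeader:
    """Decode the fixed 9-octet header that begins every HTTP/2 frame."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 octets long")
    # Network byte order: 16 + 8 bits of length, type, flags, reserved bit + stream id.
    len_hi, len_lo, ftype, flags, stream = struct.unpack("!HBBBI", data[:9])
    length = (len_hi << 8) | len_lo
    stream_id = stream & 0x7FFFFFFF  # ignore the reserved high bit
    name = FRAME_TYPES.get(ftype, f"UNKNOWN(0x{ftype:02x})")
    return FrameHeader(length, name, flags, stream_id)


if __name__ == "__main__":
    # HEADERS frame on stream 1, flags END_STREAM | END_HEADERS (0x1 | 0x4), 13-octet payload.
    print(parse_frame_header(bytes.fromhex("00000d010500000001")))
    # FrameHeader(length=13, frame_type='HEADERS', flags=5, stream_id=1)
```

Because each frame names its stream, frames belonging to different requests can be interleaved on one connection and reassembled by stream ID; this multiplexing is what replaces HTTP/1.x’s practice of opening several parallel connections.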

Major web browser vendors announced schedules (see table below) for adding HTTP/2 support after the IETF ratified the protocol specification in mid-2015 (published as RFC 7540 in May 2015).

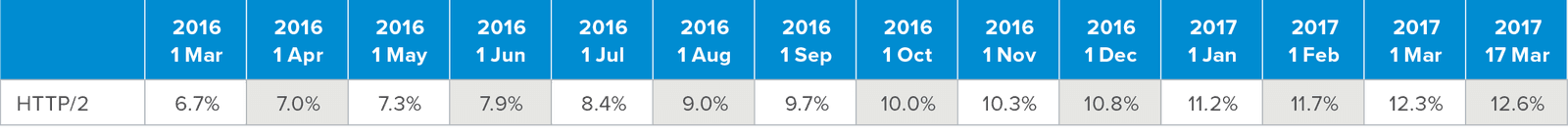

HTTP/2 requests from browsers to web servers began in early 2016 and have grown with the release and adoption of browsers that support the new specification. Updated browsers attempt HTTP/2 first and fall back to HTTP/1.x when the server does not support it. In the figure below, we detail the growth of HTTP/2 use (between server and endpoint) across websites worldwide.5
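Over HTTPS, browsers and servers typically settle on HTTP/2 during the TLS handshake using ALPN (Application-Layer Protocol Negotiation, RFC 7301): the client lists the protocols it supports, such as "h2" and "http/1.1", and the server picks one. The function below is a simplified model of that choice, assuming the server applies its own preference order; it is not a TLS implementation:

```python
from typing import Optional, Sequence


def select_protocol(client_offers: Sequence[str],
                    server_prefs: Sequence[str]) -> Optional[str]:
    """Simplified ALPN model: return the first protocol in the server's
    preference order that the client also offered, or None if none overlap."""
    offered = set(client_offers)
    for proto in server_prefs:
        if proto in offered:
            return proto
    return None


# An HTTP/2-capable browser offers both; the server's support decides the outcome.
browser = ["h2", "http/1.1"]
print(select_protocol(browser, ["h2", "http/1.1"]))       # h2 (upgraded server)
print(select_protocol(browser, ["http/1.1"]))             # http/1.1 (legacy server)
print(select_protocol(["http/1.1"], ["h2", "http/1.1"]))  # http/1.1 (older browser)
```

The last two cases are the fallback paths described above: either side lacking HTTP/2 support leaves the connection on HTTP/1.1.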

The enhancements in the HTTP/2 specification can deliver performance 80% to 1000% faster than HTTP/1.x–based infrastructure. To get a feel for how these changes affect real-world performance, run Anthum’s simple “HTTP vs HTTPS” test, which loads the same resources over plain HTTP/1.1 and over HTTPS with HTTP/2 (browsers support HTTP/2 only over TLS), making the difference between the two protocol versions visible.

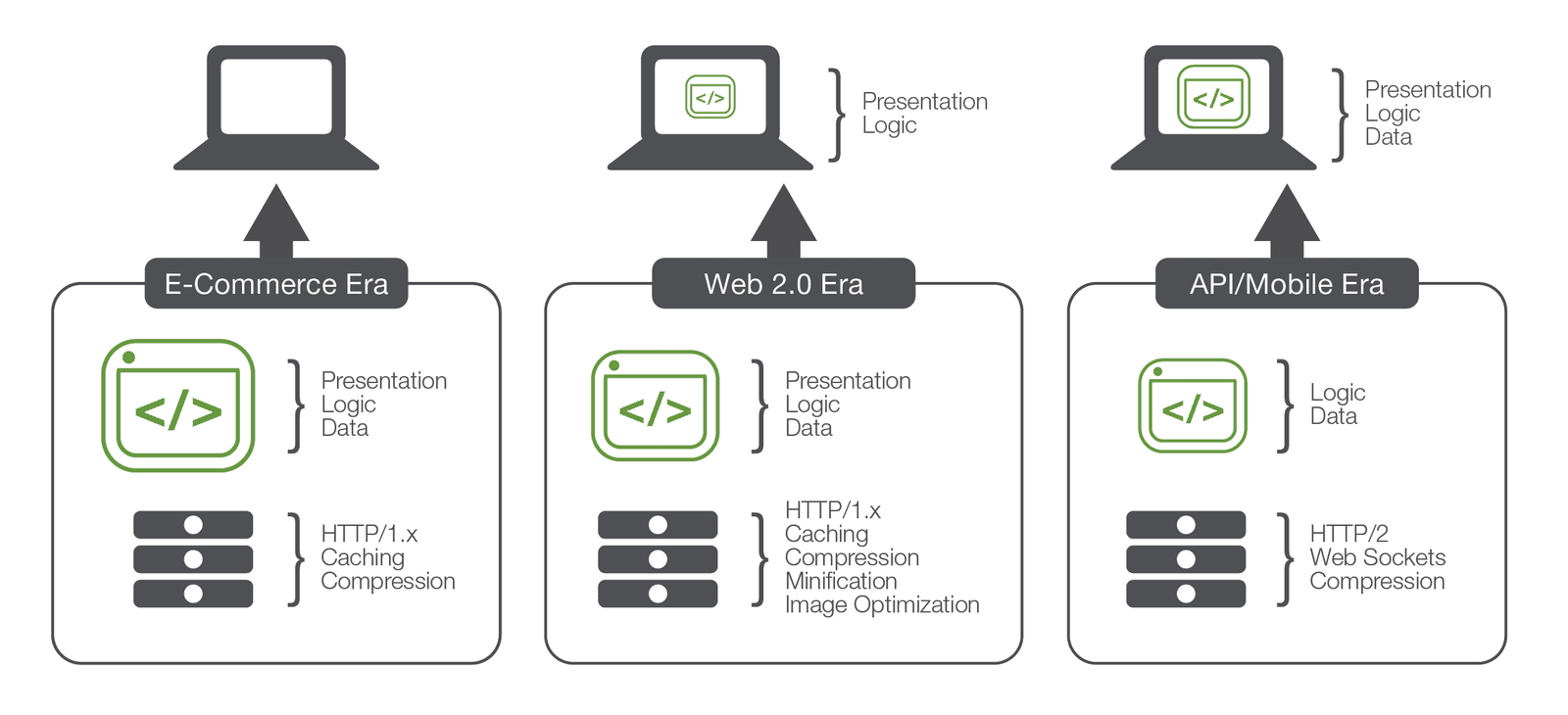
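Much of HTTP/2’s speed advantage on pages with many small resources comes from multiplexing. As a deliberately simplified model, assume HTTP/1.1 browsers open at most six connections per host (a common browser default), each carrying one request per round trip, while HTTP/2 sends all requests concurrently as streams on one connection. Bandwidth, handshakes, and server processing time are ignored, and the 50 ms round-trip time is hypothetical:

```python
import math


def http1_fetch_ms(num_resources: int, rtt_ms: float, connections: int = 6) -> float:
    """HTTP/1.1 without pipelining: each connection carries one request per round trip."""
    rounds = math.ceil(num_resources / connections)
    return rounds * rtt_ms


def http2_fetch_ms(num_resources: int, rtt_ms: float) -> float:
    """HTTP/2: all requests are multiplexed on one connection in a single round trip."""
    return rtt_ms if num_resources > 0 else 0.0


if __name__ == "__main__":
    rtt = 50.0  # hypothetical round-trip time in ms
    for n in (6, 30, 100):
        print(f"{n:>3} resources: HTTP/1.1 ~{http1_fetch_ms(n, rtt):.0f} ms, "
              f"HTTP/2 ~{http2_fetch_ms(n, rtt):.0f} ms")
```

The model overstates the gap (real HTTP/2 transfers remain bounded by bandwidth and TCP congestion control), but it shows why the largest speedups appear on resource-heavy pages over high-latency links.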

In addition to these infrastructure and protocol improvements, the actual composition of applications is also changing. While “front end” acceleration was once necessary because older applications “pushed” everything to the client, the modern app is distributed across the two primary components (client and server) to the point that 90% of the exchanges with an application (either via API or from a browser) involve small chunks of data.

Very little “content” is delivered beyond the first load; most of the presentation layer (the UI) can be manipulated on the client via JavaScript libraries (jQuery, Angular, etc.). That means responses, the primary target of front-end acceleration technologies, have shrunk dramatically and now consist primarily of JSON or XML rather than HTML.
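To make the contrast concrete, the sketch below renders the same hypothetical set of records once as a server-generated HTML table and once as the JSON an API might return to a single-page client. The records and markup are illustrative assumptions, not measurements:

```python
import html
import json

# Hypothetical records an application might display.
orders = [
    {"id": 1001, "status": "shipped", "total": 42.50},
    {"id": 1002, "status": "pending", "total": 17.99},
    {"id": 1003, "status": "delivered", "total": 105.00},
]


def render_html(rows: list) -> str:
    """Server-side rendering: markup and data travel together in every response."""
    body = "".join(
        f"<tr><td>{r['id']}</td><td>{html.escape(r['status'])}</td>"
        f"<td>${r['total']:.2f}</td></tr>"
        for r in rows
    )
    return ('<table class="orders"><thead><tr><th>Order</th><th>Status</th>'
            f"<th>Total</th></tr></thead><tbody>{body}</tbody></table>")


def render_json(rows: list) -> str:
    """API response: data only; client-side JavaScript builds the UI."""
    return json.dumps(rows, separators=(",", ":"))


if __name__ == "__main__":
    print("HTML bytes:", len(render_html(orders).encode()))
    print("JSON bytes:", len(render_json(orders).encode()))
```

In a single-page design, only the JSON form travels on subsequent interactions, because the markup and scripts were loaded once up front.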

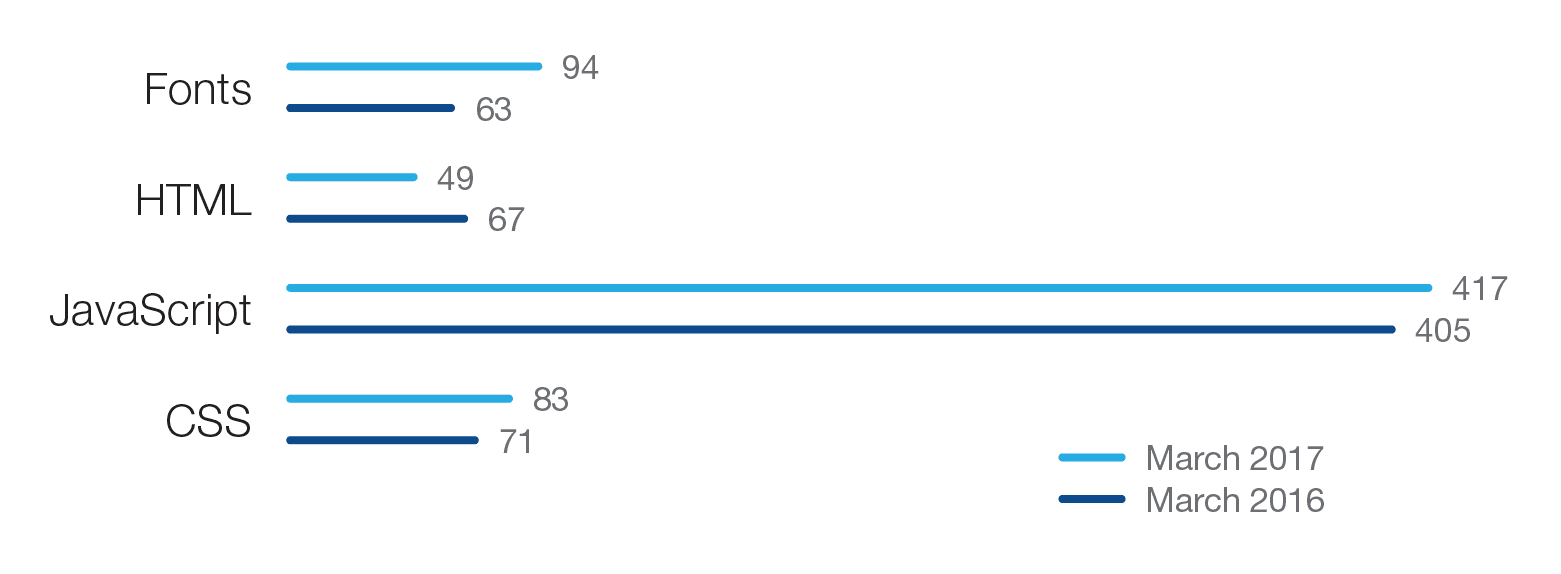

Because of this architectural shift, the percentage of an app today that is HTML is decreasing, while other compositional types are increasing (see figure below).6

Because a significant amount of JavaScript (and HTML) is delivered via CDNs, as developers include scripts hosted on the Internet rather than serving them locally, front-end acceleration techniques targeting this content matter less for modern applications.

With the rise of mobile devices and the migration to HTML5 from earlier technologies (e.g., Adobe Flash), IT application groups began developing single-page web applications for delivery to the browser. These applications typically do not reload the page as the user navigates between different parts of the application. The results are faster navigation, more efficient network transfers, and a better end-user experience.7 Today, most web apps are developed with a single-page design in mind.

To create and maintain the ideal end-user experience for web-based applications within the control of IT operations, employ the following technologies and practices.

Standardize web servers on the HTTP/2 protocol.

Upgrading web infrastructure to the HTTP/2 standard will address the performance issues that plagued HTTP/1.0 and HTTP/1.1. In addition to better connection management, HTTP/2 lets web application designers prioritize resources, so that the content a browser needs to build a page is delivered first.
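In HTTP/2 as originally specified (RFC 7540, Section 5.3), prioritization is expressed per stream: a stream may depend on another stream and carry a weight from 1 to 256, and servers may use these hints to send render-critical resources first. As an illustrative sketch, the function below encodes a PRIORITY frame (type 0x2, Section 6.3). The stream numbers and weight in the example are hypothetical, and endpoints are free to treat priorities as advice only (later revisions of HTTP/2 deprecated this particular signaling scheme):

```python
import struct

PRIORITY_FRAME_TYPE = 0x2


def encode_priority_frame(stream_id: int, depends_on: int, weight: int,
                          exclusive: bool = False) -> bytes:
    """Encode an HTTP/2 PRIORITY frame (RFC 7540, Section 6.3)."""
    if not 0 < stream_id < 2**31:
        raise ValueError("PRIORITY frames must target a non-zero 31-bit stream")
    if not 0 <= depends_on < 2**31 or depends_on == stream_id:
        raise ValueError("dependency must be a 31-bit id other than the stream itself")
    if not 1 <= weight <= 256:
        raise ValueError("weight must be between 1 and 256")
    # Payload: exclusive bit + 31-bit stream dependency, then weight stored as weight - 1.
    dependency = depends_on | (0x80000000 if exclusive else 0)
    payload = struct.pack("!IB", dependency, weight - 1)
    # 9-octet frame header: 24-bit length, type, flags (none defined), stream id.
    length = len(payload)
    header = struct.pack("!HBBBI", length >> 8, length & 0xFF,
                         PRIORITY_FRAME_TYPE, 0, stream_id)
    return header + payload


if __name__ == "__main__":
    # Hypothetical: stream 3 (a stylesheet) depends on stream 1 (the HTML) with a high weight.
    print(encode_priority_frame(stream_id=3, depends_on=1, weight=220).hex())
```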

Set a minimum standard for web browsers to ensure that requests are made only over HTTP/2.

Pushing internal users (e.g., employees and contractors) and external users (e.g., customers and partners) to upgrade to browser versions that support HTTP/2 will eliminate auto-negotiation back to HTTP/1.1 or 1.0 and the less efficient data transfer that comes with it.

Validate that SaaS vendors have implemented HTTP/2 across all web applications.

Again, avoiding auto-negotiation back to HTTP/1.1 and 1.0 will help drive improved performance and better user experience.
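One way to spot-check whether a vendor endpoint negotiates HTTP/2 is to open a TLS connection that offers "h2" via ALPN and see which protocol the server selects. A minimal sketch using Python’s standard ssl module; the hostname in the usage comment is a hypothetical placeholder, and the check covers only the TLS/ALPN layer of the endpoint you point it at, not every application a vendor serves:

```python
import socket
import ssl
import sys
from typing import Optional


def make_alpn_context() -> ssl.SSLContext:
    """TLS client context that offers HTTP/2 first, then HTTP/1.1."""
    ctx = ssl.create_default_context()  # verifies certificates and hostnames
    ctx.set_alpn_protocols(["h2", "http/1.1"])
    return ctx


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Return the ALPN protocol the server selected (e.g. 'h2'), or None if none."""
    ctx = make_alpn_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()


def supports_h2(host: str, port: int = 443) -> bool:
    return negotiated_protocol(host, port) == "h2"


if __name__ == "__main__":
    # Usage (hypothetical host): python check_h2.py app.saas-vendor.example
    for host in sys.argv[1:]:
        try:
            print(f"{host}: {negotiated_protocol(host) or 'no ALPN (likely HTTP/1.1)'}")
        except OSError as exc:  # DNS, connection, timeout, or TLS failures
            print(f"{host}: check failed ({exc})")
```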

Ensure your CDN vendor has PoPs geographically aligned to where your users and customers are located.

Utilizing a CDN vendor that has a network of PoPs aligned geographically to employees, contractors, and customers will reduce latency in the interactions between web browsers and CDN PoPs.
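A first-pass check of geographic alignment can be scripted: given where users are concentrated and where a vendor’s PoPs are, compute great-circle distances with the haversine formula and find each user region’s nearest PoP. The PoP list and user locations below are hypothetical placeholders, and distance is only a proxy, since actual routing and peering determine real latency:

```python
import math

EARTH_RADIUS_KM = 6371.0

# Hypothetical PoP locations as (latitude, longitude) in degrees.
POPS = {
    "London": (51.51, -0.13),
    "New York": (40.71, -74.01),
    "Singapore": (1.35, 103.82),
}


def haversine_km(a: tuple, b: tuple) -> float:
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_pop(user: tuple, pops: dict = POPS) -> tuple:
    """Return (pop_name, distance_km) for the PoP closest to the user location."""
    name = min(pops, key=lambda p: haversine_km(user, pops[p]))
    return name, haversine_km(user, pops[name])


if __name__ == "__main__":
    # Hypothetical user concentrations.
    users = {"Paris": (48.86, 2.35), "Chicago": (41.88, -87.63), "Sydney": (-33.87, 151.21)}
    for city, loc in users.items():
        pop, km = nearest_pop(loc)
        print(f"{city}: nearest PoP {pop} (~{km:,.0f} km)")
```

A user region whose nearest PoP is thousands of kilometers away (Sydney in this made-up list) is the kind of gap worth raising with a CDN vendor.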

Consider caching technology on network-based appliances.

Leveraging the on-board caching of network appliances placed in front of applications will help improve performance for the static content that web pages require.
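Conceptually, such a cache keeps recently served static objects close to the client path and answers repeat requests until they expire. The class below is a minimal illustration of that idea (an LRU-bounded cache with a per-entry time-to-live); it is not how any particular appliance or open-source cache is implemented, and the origin fetch function in the example is hypothetical:

```python
import time
from collections import OrderedDict
from typing import Callable


class TTLCache:
    """Size-bounded LRU cache whose entries expire after ttl seconds."""

    def __init__(self, max_items: int = 1024, ttl: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_items, self.ttl, self.clock = max_items, ttl, clock
        self._store: "OrderedDict[str, tuple]" = OrderedDict()  # key -> (expires_at, body)
        self.hits = self.misses = 0

    def get(self, key: str, fetch: Callable[[str], bytes]) -> bytes:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            self._store.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return entry[1]
        # Miss or expired entry: fetch from the origin and store a fresh copy.
        self.misses += 1
        body = fetch(key)
        self._store[key] = (now + self.ttl, body)
        self._store.move_to_end(key)
        if len(self._store) > self.max_items:
            self._store.popitem(last=False)  # evict the least recently used entry
        return body


if __name__ == "__main__":
    fake_now = [0.0]  # controllable clock for the demo
    cache = TTLCache(max_items=2, ttl=60, clock=lambda: fake_now[0])
    origin = lambda path: f"contents of {path}".encode()  # hypothetical origin fetch
    cache.get("/static/app.css", origin)  # miss: fetched from origin
    cache.get("/static/app.css", origin)  # hit: served from cache
    fake_now[0] = 61
    cache.get("/static/app.css", origin)  # expired: fetched again
    print(cache.hits, cache.misses)  # 1 2
```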

Evaluate low-cost open-source web caches.

If caching is required, consider a low-cost, high-performance open-source web cache such as Varnish, deployed on servers with SSD storage.

Business requirements and the movement to mobile and cloud computing put ever-increasing pressure on IT groups to optimize user experience while delivering critical applications. Three factors are currently altering how users experience web content. First, the capacity of CDNs has greatly expanded, and the proximity of PoPs to core user bases reduces latency. Second, Internet protocols have been updated to improve overall web server-to-client performance. Third, the relationship between server and client has changed: transactions between them are less one-sided than before, and the data delivered is more dynamic and specific to the user. In light of these changes, strategies created 5–7 years ago to optimize front-end application delivery are rapidly being devalued by market forces and the natural evolution of web technology.

Enterprise web application infrastructure must evolve with market changes or be left with declining performance and return on IT investment. The key for IT departments will be ensuring that the infrastructure that delivers the user experience (e.g., web servers, load balancers, CDNs, and SaaS) is upgraded to HTTP/2, which was designed to improve web performance.

1 http://www.cdnplanet.com/cdns

2 http://httparchive.org

3 https://technology.ihs.com/589721/data-center-network-equipment-market-tracker-regional-q2-2017

4 https://www.stateoftheinternet.com/resources-connectivity-2015-q1-state-of-the-internet-report.html

5 https://w3techs.com/technologies/details/ce-HTTP/2/all/all

6 http://httparchive.org

7 https://techcrunch.com/2012/11/30/why-enterprise-apps-are-moving-to-single-page-design

PUBLISHED AUGUST 29, 2017