As an industry, we are willing to debate just about any aspect of technology. Our forte, however, seems to be arguing over terminology. Jargon. Words.

Cloud. DevOps. SDN. Open. Controversy still clings to the definitions of these terms like dog hair to a black couch.

You use your similes and I'll use mine, thanks much.

Today we're here to talk about a term that's bound to set off arguments for another decade or so: telemetry. Isn't it just data, after all? Are we just using telemetry because it sounds sexier than data?

No. Not at all.

Ultimately both data and telemetry are organized bits of information. To use them interchangeably is not a crime. But the reality is that if you want to be accurate, there is a difference. And that difference will become increasingly important as organizations march into the data economy.

Telemetry is derived from two Greek words: "tele" and "metron," which mean "remote" and "measure". According to Wikipedia, "telemetry is the collection of measurements or other data at remote or inaccessible points and their automatic transmission to receiving equipment for monitoring."

This is the reason we see so much operational data referred to as telemetry—because it's being collected (remotely) and transmitted to a different system. The existence of telemetry data is not new. It's been an innate byproduct of every network and application service for as long as they've existed. Network and application monitoring have used agents and protocols to collect telemetry for decades. Its value has mainly been in troubleshooting issues in the data path.

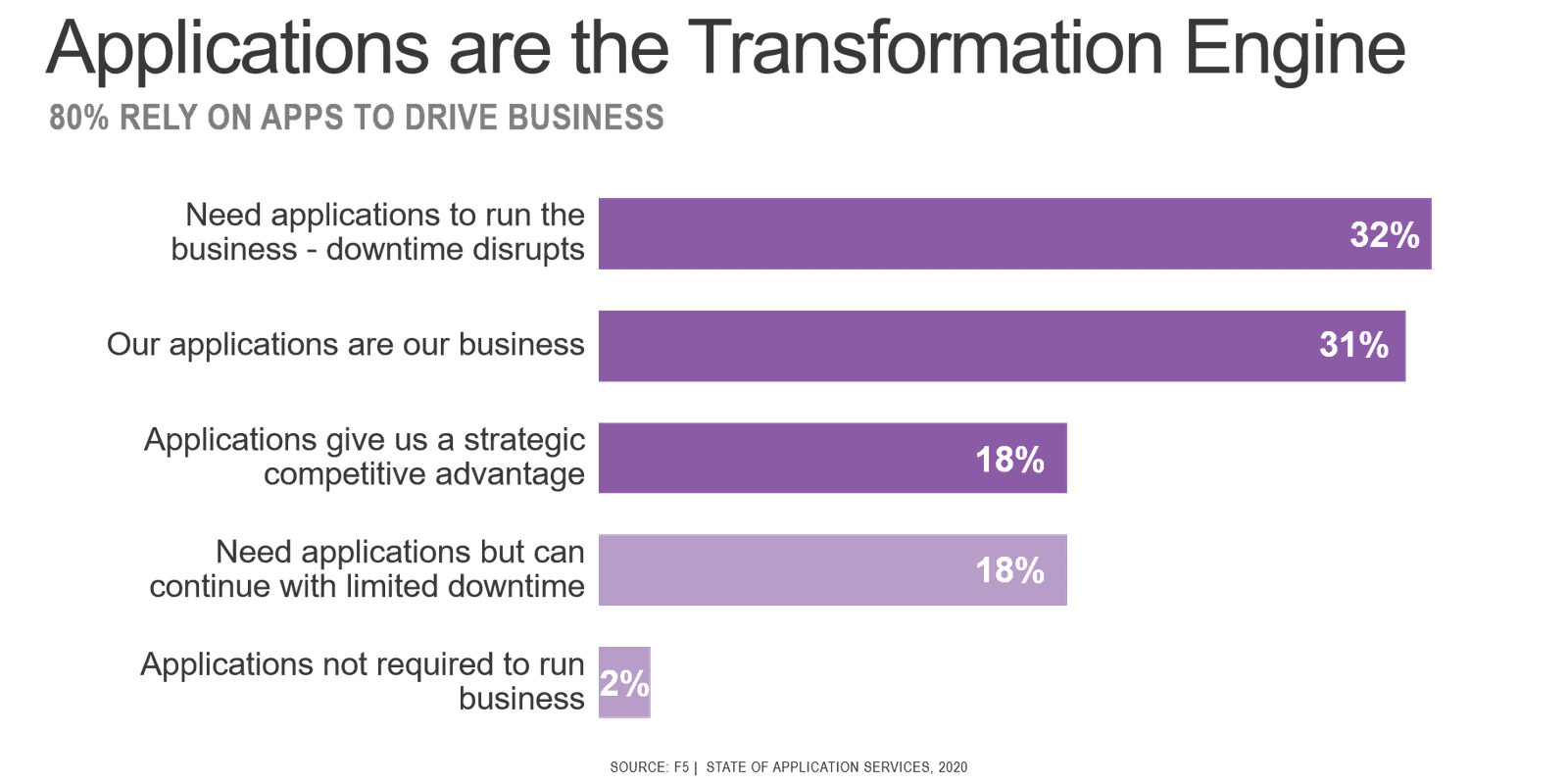

But as business progresses through digital transformation and the line between business process and technology continues to blur, telemetry from across the data path will provide insights into both technical and business problems. As organizations rely more and more on applications to conduct business (internally and externally, with customers and partners), the telemetry of greatest value will be the telemetry generated by the application services that make up the data path.

If you look at that path, you will find at least one application service, and more likely closer to ten, providing for scale and security.

Each application service, along with the platform on which it is deployed, holds valuable information about the state of a given customer experience. Everything from characteristics of the user platform (device type, location, network) to time spent at every individual "hop" along the data path can be used to troubleshoot incidents, identify malicious actors, and detail performance problems. This is not "customer" data or "corporate" data; it is operational data. It is telemetry.
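To make the idea of per-hop telemetry concrete, here is a minimal sketch of what a single request's operational record might look like. The class names, field names, and service names (`HopTiming`, `RequestTelemetry`, "waf", "app-server", and so on) are hypothetical and chosen only for illustration; real application services emit telemetry in their own formats. The sketch simply shows how user platform details and per-hop timings, taken together, point at where a slow experience is coming from.

```python
from dataclasses import dataclass, field


@dataclass
class HopTiming:
    """Time spent at one application service ("hop") on the data path."""
    service: str       # hypothetical service name, e.g. "waf" or "app-server"
    elapsed_ms: float  # time spent inside this hop, in milliseconds


@dataclass
class RequestTelemetry:
    """Operational data for one request: who made it and where the time went."""
    device_type: str
    location: str
    network: str
    hops: list[HopTiming] = field(default_factory=list)

    def total_ms(self) -> float:
        # In this simplified model, end-to-end time is the sum of per-hop times.
        return sum(h.elapsed_ms for h in self.hops)

    def slowest_hop(self) -> HopTiming:
        # The hop contributing the most time is a natural first suspect.
        return max(self.hops, key=lambda h: h.elapsed_ms)


record = RequestTelemetry(
    device_type="mobile",
    location="EU-West",
    network="4G",
    hops=[
        HopTiming("cdn", 12.0),
        HopTiming("waf", 4.5),
        HopTiming("load-balancer", 1.5),
        HopTiming("app-server", 88.0),
    ],
)
print(record.slowest_hop().service)  # app-server
print(record.total_ms())             # 106.0
```

A single record like this helps troubleshoot one incident; the same records gathered across many users and many hops are what make broader patterns visible.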

Truly taking advantage of that data, however, requires a way to capture and then analyze the massive volume of telemetry that can come from application services in the data path. That is where cloud comes in.

Why Cloud Is Critical to Leveraging Telemetry

Today, only some telemetry is captured, because keeping all of it would require more storage space than is available.

The amount of telemetry that is (and could be) emitted is overwhelming. Most systems cannot store more than a few weeks, or even days, of telemetry. Often it is sliced into time series to save space, but even that cannot relieve the incredible burden on storage. Eventually, it must be deleted to make room for newer, more relevant telemetry.
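One common way to slice telemetry into time series is to roll raw samples up into fixed-width time buckets, keeping only a summary per bucket. The sketch below assumes raw telemetry arrives as `(timestamp_seconds, value)` pairs and uses a hypothetical `rollup` function with 60-second buckets; it is an illustration of the general technique, not how any particular monitoring product stores data. It shows both the space savings and the cost: individual samples are gone, leaving only count, min, max, and mean.

```python
from collections import defaultdict
from statistics import mean


def rollup(samples, bucket_seconds=60):
    """Collapse raw (timestamp, value) samples into one summary per time bucket.

    Returns {bucket_start: (count, min, max, mean)}, trading per-sample detail
    for a much smaller storage footprint.
    """
    buckets = defaultdict(list)
    for ts, value in samples:
        # Align each timestamp to the start of the bucket it falls into.
        buckets[ts - ts % bucket_seconds].append(value)
    return {
        start: (len(vals), min(vals), max(vals), mean(vals))
        for start, vals in sorted(buckets.items())
    }


# Hypothetical input: one latency sample per second for two minutes (120 points).
raw = [(t, 10.0 + (t % 7)) for t in range(120)]
summary = rollup(raw)
print(len(raw), "->", len(summary))  # 120 -> 2
```

Even at a 60:1 reduction, summaries keep accumulating as long as telemetry keeps arriving, which is why retention windows eventually force older data to be discarded.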

This is why you tend to find advanced analytics services hosted in a public cloud. The capacity of cloud compute and storage coupled with machine learning provides the technological foundations needed to collect, store, and process massive quantities of telemetry. With a robust enough set of telemetry, advanced analytics will be able to provide actionable insights to organizations by discovering patterns and relationships between seemingly disparate data points.

But to get there, application services need to emit as much telemetry as a cloud-based repository can ingest, and it needs to come from as many points across the data path as possible. The more information gathered from across a customer experience (the data path), the more valuable it will be to a system searching for patterns and relationships, and the better that system's chances of uncovering actionable insights that improve both the customer experience and business performance.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
