In just about every webinar about Ingress controllers and service meshes that we’ve delivered over the course of 2021, we’ve heard some variation of the questions “How is this tool different from an API gateway?” or “Do I need both an API gateway and an Ingress controller (or service mesh) in Kubernetes?”

The confusion is totally understandable for two reasons:

- Ingress controllers and service meshes can fulfill many API gateway use cases.

- Some vendors position their API gateway tool as an alternative to using an Ingress controller or service mesh – or they roll all three capabilities into one tool.

In this blog we tackle how these tools differ and which to use for Kubernetes‑specific API gateway use cases. For a deeper dive, including demos, watch the webinar API Gateway Use Cases for Kubernetes.

Definitions

At their core, API gateways, Ingress controllers, and service meshes are each a type of proxy, designed to get traffic into and around your environments.

What Is an API Gateway?

An API gateway routes API requests from a client to the appropriate services. But a big misunderstanding about this simple definition is the idea that an API gateway is a unique piece of technology. It’s not. Rather, “API gateway” describes a set of use cases that can be implemented via different types of proxies – most commonly an application delivery controller (ADC) or load balancer and reverse proxy, and increasingly an Ingress controller or service mesh.

There isn’t a lot of agreement in the industry about what capabilities are “must haves” for a tool to serve as an API gateway. We typically see customers requiring the following abilities (grouped by use case):

Resilience Use Cases

- A/B testing, canary deployments, and blue‑green deployments

- Protocol transformation (between JSON and XML, for example)

- Rate limiting

- Service discovery

Traffic Management Use Cases

- Method‑based routing and matching

- Request/response header and body manipulation

- Request routing at Layer 7

Security Use Cases

- API schema enforcement

- Client authentication and authorization

- Custom responses

- Fine‑grained access control

- TLS termination

Almost all these use cases are commonly used in Kubernetes. Protocol transformation and request/response header and body manipulation are less common since they’re generally tied to legacy APIs that aren’t well‑suited for Kubernetes and microservices environments.

Learn more about API gateway use cases in Deploying NGINX as an API Gateway, Part 1 on our blog.

What Is an Ingress Controller?

An Ingress controller (also called a Kubernetes Ingress Controller – KIC for short) is a specialized Layer 4 and Layer 7 proxy that gets traffic into Kubernetes, to the services, and back out again (referred to as ingress‑egress or north‑south traffic). In addition to traffic management, Ingress controllers can also be used for visibility and troubleshooting, security and identity, and all but the most advanced API gateway use cases.
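To make the definition concrete, here is a minimal sketch of the standard Kubernetes Ingress resource that an Ingress controller watches and turns into proxy configuration. The resource name, hostname, Secret, Service, and `ingressClassName` value are illustrative assumptions; substitute the ones from your own cluster.

```yaml
# Minimal Ingress sketch: terminate TLS for one host and route /tea to a Service.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cafe-ingress            # assumed name
spec:
  ingressClassName: nginx       # assumed class; must match your Ingress controller
  tls:
  - hosts:
    - cafe.example.com
    secretName: cafe-secret     # assumed Secret holding the TLS certificate and key
  rules:
  - host: cafe.example.com
    http:
      paths:
      - path: /tea
        pathType: Prefix
        backend:
          service:
            name: tea-svc       # assumed Service name
            port:
              number: 80
```

The Ingress resource covers only basic host and path routing plus TLS termination. The more advanced API gateway use cases generally rely on controller-specific annotations or custom resources.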

Learn more about how you can use an Ingress controller for more than just basic traffic management in A Guide to Choosing an Ingress Controller, Part 1: Identify Your Requirements on our blog.

What Is a Service Mesh?

A service mesh handles traffic flowing between Kubernetes services (referred to as service-to-service or east‑west traffic) and is commonly used to achieve end-to-end encryption (E2EE). Service mesh adoption is small but growing as more organizations launch advanced deployments or have requirements for E2EE. A service mesh can be used as a distributed (lightweight) API gateway very close to the apps, made possible on the data plane level by service mesh sidecars.

Learn more about service mesh – and when you’ll be ready for one – in How to Choose a Service Mesh on our blog.

Use Kubernetes-Native Tools for Kubernetes Environments

As we heard from Mark Church in his NGINX Sprint 2.0 keynote on Kubernetes and the Future of Application Networking, “API gateways, load balancers, and service meshes will continue to look more and more similar to each other and provide similar capabilities”. We wholeheartedly agree with this statement and further add that it’s all about picking the right tool for the job based on where (and how) you’re going to use it. After all, both a machete and a butter knife are used for cutting, but you’re probably not going to use the former on your morning toast.

So how do you decide which tool is right for you? We’ll make it simple: if you need API gateway functionality inside Kubernetes, it’s usually best to choose a tool that can be configured using native Kubernetes config tooling such as YAML. Typically, that’s an Ingress controller or service mesh. But we hear you saying, “My API gateway tool has so many more features than my Ingress controller (or service mesh) – aren’t I missing out?” No! More features don’t make a better tool, especially within Kubernetes where tool complexity can be a killer.

Note: Kubernetes‑native (not the same as Knative) refers to tools that were designed and built for Kubernetes. Typically, they work with the Kubernetes CLI, can be installed using Helm, and integrate with Kubernetes features.

Most Kubernetes users prefer tools they can configure in a Kubernetes‑native way because that avoids changes to the development or GitOps experience. A YAML‑friendly tool provides three major benefits:

- YAML is a familiar language to Kubernetes teams, so the learning curve is small, or even non‑existent, if you’re using an existing Kubernetes tool for API gateway functionality. This helps your teams work within their existing skill set without the need to learn how to configure a new tool that they might only use occasionally.

- You can automate a YAML‑friendly tool in the same fashion as your other Kubernetes tools. Anything that cleanly fits into your workflows will be popular with your team – increasing the probability that they use it.

- You can shrink your Kubernetes traffic‑management tool stack by using your Ingress controller, service mesh, or both. After all, every extra hop matters and there’s no reason to add unnecessary latency or single points of failure. And of course, reducing the number of technologies deployed within Kubernetes is also good for your budget and overall security.

North-South API Gateway Use Cases: Use an Ingress Controller

Ingress controllers have the potential to enable many API gateway use cases. In addition to the ones outlined in Definitions, we find organizations most value an Ingress controller that can implement:

- Offload of authentication and authorization

- Authorization‑based routing

- Layer 7 level routing and matching (HTTP, HTTP/S, headers, cookies, methods)

- Protocol compatibility (HTTP, HTTP/2, WebSocket, gRPC)

- Rate limiting

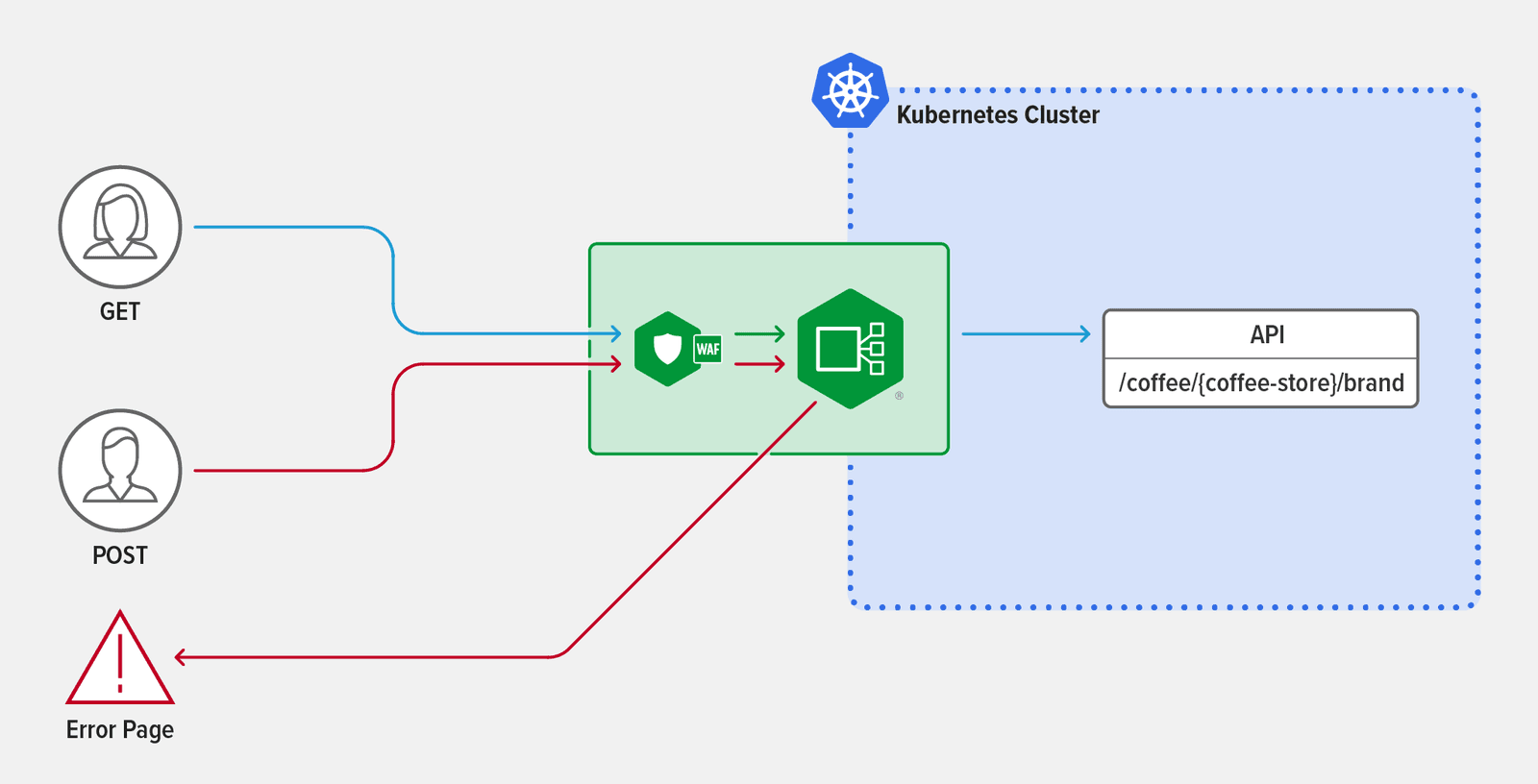
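As an illustration of the last item in the list, rate limiting with NGINX Ingress Controller can be expressed as a Policy resource attached to a VirtualServer. This is a sketch based on our reading of the NGINX Ingress Controller custom resources. The resource names, the threshold of 10 requests per second, and the zone size are assumptions, and field availability can vary by release, so check the docs for the version you run.

```yaml
# Hypothetical Policy: allow each client IP address 10 requests per second.
apiVersion: k8s.nginx.org/v1
kind: Policy
metadata:
  name: rate-limit-policy        # assumed name
spec:
  rateLimit:
    rate: 10r/s                  # assumed threshold
    key: ${binary_remote_addr}   # one counter per client IP address
    zoneSize: 10M                # shared memory used to track the keys
---
# Hypothetical VirtualServer that applies the policy to all of its routes.
apiVersion: k8s.nginx.org/v1
kind: VirtualServer
metadata:
  name: cafe
spec:
  host: cafe.example.com
  policies:
  - name: rate-limit-policy
  upstreams:
  - name: coffee
    service: coffee-svc
    port: 80
  routes:
  - path: /coffee
    action:
      pass: coffee
```

Listing the policy under `spec.policies` applies it to every route of the host. Listing it under a single route instead would narrow the limit to that one API.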

Sample Scenario: Method-Level Routing

You want to implement method‑level matching and routing, using the Ingress controller to reject the POST method in API requests.

Some attackers look for vulnerabilities in APIs by sending request types that don’t comply with an API definition – for example, sending POST requests to an API that is defined to accept only GET requests. Web application firewalls (WAF) can’t detect these kinds of attacks – they examine only request strings and bodies for attacks – so it’s best practice to use an API gateway at the Ingress layer to block bad requests.

As an example, suppose the new API /coffee/{coffee-store}/brand was just added to your cluster. The first step is to expose the API using NGINX Ingress Controller – simply by adding the API to the upstreams field.

```yaml
apiVersion: k8s.nginx.org/v1
kind: VirtualServer
metadata:
  name: cafe
spec:
  host: cafe.example.com
  tls:
    secret: cafe-secret
  upstreams:
  - name: tea
    service: tea-svc
    port: 80
  - name: coffee
    service: coffee-svc
    port: 80
```

To enable method‑level matching, you add a /coffee/{coffee-store}/brand path to the routes field and add two conditions that use the $request_method variable to distinguish between GET and POST requests. Any traffic using the HTTP GET method is passed automatically to the coffee service. Traffic using the POST method is directed to an error page with the message "You are rejected!" And just like that, you’ve protected the new API from unwanted POST traffic.

```yaml
  routes:
  - path: /coffee/{coffee-store}/brand
    matches:
    - conditions:
      - variable: $request_method
        value: POST
      action:
        return:
          code: 403
          type: text/plain
          body: "You are rejected!"
    - conditions:
      - variable: $request_method
        value: GET
      action:
        pass: coffee
  - path: /tea
    action:
      pass: tea
```

For more details on how you can use method‑level routing and matching with error pages, check out the NGINX Ingress Controller docs. For a security‑related example of using an Ingress controller for API gateway functionality, read Implementing OpenID Connect Authentication for Kubernetes with Okta and NGINX Ingress Controller on our blog.
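The routes snippet in this scenario names only GET and POST, which leaves open what happens to other methods such as PUT or DELETE. As we understand the VirtualServer resource, a route that uses `matches` also takes a default `action` for requests that match none of the conditions. The variant below is a sketch under that assumption: it passes GET and rejects everything else. The 405 status code and the response body are our own choices, not part of the original example. The `{coffee-store}` segment is kept as the article's placeholder. To our understanding a deployable prefix path can't contain braces, so substitute a concrete or regular‑expression path.

```yaml
  routes:
  - path: /coffee/{coffee-store}/brand   # placeholder path copied from the example above
    matches:
    - conditions:
      - variable: $request_method
        value: GET
      action:
        pass: coffee                     # only GET reaches the coffee service
    action:                              # default for every request that is not a GET
      return:
        code: 405                        # assumed status code
        type: text/plain
        body: "Method not allowed\n"     # assumed message
```

Allowing one method and denying the rest means you don't have to list each unwanted method as it comes up.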

East-West API Gateway Use Cases: Use a Service Mesh

A service mesh is not required – or even initially helpful – for most API gateway use cases because most of what you might want to accomplish can, and ought to, happen at the Ingress layer. But as your architecture increases in complexity, you’re more likely to get value from using a service mesh. The use cases we find most beneficial are related to E2EE and traffic splitting – such as A/B testing, canary deployments, and blue‑green deployments.

Sample Scenario: Canary Deployment

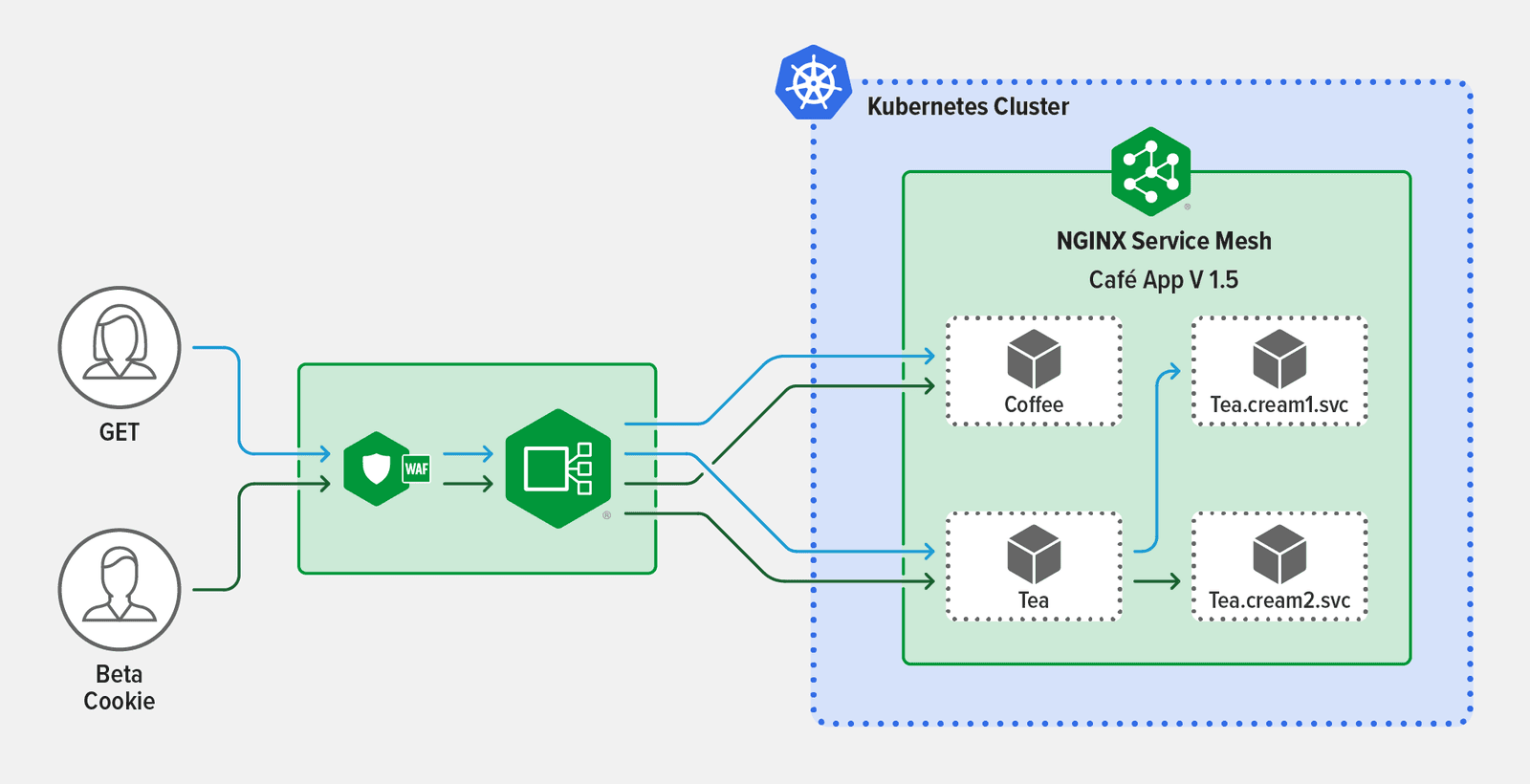

You want to set up a canary deployment between services with conditional routing based on HTTP/S criteria.

The advantage is that you can gradually roll out API changes – such as new functions or versions – without impacting most of your production traffic.

Currently, your NGINX Ingress Controller routes traffic between two services managed by NGINX Service Mesh: Coffee.frontdoor.svc and Tea.frontdoor.svc. These services receive traffic from NGINX Ingress Controller and route it to the appropriate app functions, including Tea.cream1.svc. You decide to refactor Tea.cream1.svc, calling the new version Tea.cream2.svc. You want your beta testers to provide feedback on the new functionality so you configure a canary traffic split based on the beta testers’ unique session cookie, ensuring your regular users only experience Tea.cream1.svc.

Using NGINX Service Mesh, you begin by creating a traffic split between all services fronted by Tea.frontdoor.svc, including Tea.cream1.svc and Tea.cream2.svc. To enable the conditional routing, you create an HTTPRouteGroup resource (named tea-hrg) and associate it with the traffic split, the result being that only requests from your beta users (requests with the session cookie set to version=beta) are routed from Tea.frontdoor.svc to Tea.cream2.svc. Your regular users continue to experience only version 1 services behind Tea.frontdoor.svc.

```yaml
apiVersion: split.smi-spec.io/v1alpha3
kind: TrafficSplit
metadata:
  name: tea-svc
spec:
  service: tea.1
  backends:
  - service: tea.1
    weight: 0
  - service: tea.2
    weight: 100
  matches:
  - kind: HTTPRouteGroup
    name: tea-hrg
---
apiVersion: specs.smi-spec.io/v1alpha3
kind: HTTPRouteGroup
metadata:
  name: tea-hrg
  namespace: default
spec:
  matches:
  - name: beta-session-cookie
    headers:
    - cookie: "version=beta"
```

This example starts your canary deployment with a 0‑100 split, meaning all your beta testers experience Tea.cream2.svc, but of course you could start with whatever ratio aligns to your beta testing strategy. Once your beta testing is complete, you can use a simple canary deployment (without the cookie routing) to test the resilience of Tea.cream2.svc.

Check out our docs for more details on traffic splits with NGINX Service Mesh. The above traffic split configuration is self‑referential, as the root service is also listed as a backend service. This configuration is not currently supported by the Service Mesh Interface specification (smi-spec); however, the spec is currently in alpha and subject to change.
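The simple canary mentioned above (without the cookie routing) could be sketched by dropping the `matches` section and shifting the weights. The 90/10 ratio is an assumption chosen for illustration. The service names follow the example manifest, and the split is self‑referential in the same way.

```yaml
# Sketch of a weight-only canary: no HTTPRouteGroup, so the split applies to all requests.
apiVersion: split.smi-spec.io/v1alpha3
kind: TrafficSplit
metadata:
  name: tea-svc
spec:
  service: tea.1
  backends:
  - service: tea.1
    weight: 90      # assumed: most traffic stays on version 1
  - service: tea.2
    weight: 10      # assumed: a small share exercises version 2
```

Raising the second weight in steps while you watch error rates and latency lets you complete the rollout gradually, and setting it back to 0 rolls the change back.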

When (and How) to Use an API Gateway Tool for Kubernetes Apps

Though most API gateway use cases for Kubernetes can (and should) be addressed by an Ingress controller or service mesh, there are some specialized situations where an API gateway tool – such as NGINX Plus – is suitable.

Business Requirements

While multiple teams or projects can share a set of Ingress controllers, or Ingress controllers can be specialized on a per‑environment basis, there are reasons you might choose to deploy a dedicated API gateway inside Kubernetes rather than leveraging the existing Ingress controller. Using both an Ingress controller and an API gateway inside Kubernetes can provide flexibility for organizations to achieve business requirements. Some scenarios include:

- Your API gateway team isn’t familiar with Kubernetes and doesn’t use YAML. For example, if they’re comfortable with NGINX config, then it eases friction and lessens the learning curve if they deploy NGINX Plus as an API gateway in Kubernetes.

- Your Platform Ops team prefers to dedicate the Ingress controller to app traffic management only.

- You have an API gateway use case that only applies to one of the services in your cluster. Rather than using an Ingress controller to apply a policy to all your north‑south traffic, you can deploy an API gateway to apply the policy only where it’s needed.

Migrating APIs into Kubernetes Environments

When migrating existing APIs into Kubernetes environments, you can publish those APIs to an API gateway tool that’s deployed outside of Kubernetes. In this scenario, API traffic is typically routed through an external load balancer (for load balancing between clusters), then to a load balancer configured to serve as an API gateway, and finally to the Ingress controller within your Kubernetes cluster.

Supporting SOAP APIs in Kubernetes

Most modern APIs are created using REST, in part because RESTful or gRPC services and APIs can take full advantage of the Kubernetes platform, but you may still have some SOAP APIs that haven’t been rearchitected. SOAP APIs aren’t recommended for Kubernetes because they aren’t optimized for microservices, yet you may need to deploy one in Kubernetes until it can be rearchitected. The API likely needs to communicate with REST‑based API clients, in which case you need a way to translate between the SOAP and REST protocols. You can perform this translation with an Ingress controller, but we don’t recommend it because it’s extremely resource intensive. Instead, we recommend deploying an API gateway tool as a per‑pod or per‑service proxy to translate between SOAP and REST.

API Traffic Management Both Inside and Outside Kubernetes

A relatively small number of our customers are interested in managing APIs spanning inside and outside Kubernetes environments. If an API management strategy is a higher priority than selecting Kubernetes‑native tools, then a “Kubernetes‑friendly” API gateway (that can integrate with an API management solution) deployed in Kubernetes might be the right choice.

Note: Unlike Kubernetes‑native tools, Kubernetes‑friendly tools (also sometimes called Kubernetes‑accommodative) weren’t designed for Kubernetes and can’t be managed using Kubernetes configs. However, they are nimble and light, allowing them to perform in Kubernetes without adding significant latency or requiring extensive workarounds.

Getting Started with NGINX

NGINX offers options for all three types of deployment scenarios.

Kubernetes‑native tools:

- NGINX Ingress Controller – NGINX Plus-based Ingress controller for Kubernetes that handles advanced traffic control and shaping, monitoring and visibility, and authentication and single sign-on (SSO).

- NGINX Service Mesh – Lightweight, turnkey, and developer‑friendly service mesh featuring NGINX Plus as an enterprise sidecar.

Get started by requesting your free 30-day trial of NGINX Ingress Controller with NGINX App Protect WAF and DoS, and download the always‑free NGINX Service Mesh.

For a Kubernetes‑friendly API gateway inside or outside your Kubernetes environments:

- NGINX Plus – The all-in-one load balancer, reverse proxy, web server, and API gateway with enterprise‑grade features like high availability, active health checks, DNS system discovery, session persistence, and a RESTful API. Integrates with NGINX Controller [now F5 NGINX Management Suite] for a full API lifecycle solution.

To learn more about using NGINX Plus as an API gateway, request your free 30-day trial and see Deploying NGINX as an API Gateway.
