The Challenge? Deploying Great Apps Quickly and Safely

Applications are the modern organization’s new form of capital, which makes them critical to an organization’s success. With this new application-centric push comes a divide between apps and infrastructure. On one hand, you have developers who are focused on moving fast and deploying often in an effort to create greater value for users. But moving fast is at odds with the priorities of operations teams, who care about ensuring reliability, security, and performance to make sure those apps meet customer expectations.

Here's What You Can Do

To meet the wants and needs of both development and operations teams, you need a way to empower your DevOps teams to manage load balancers closer to the apps they develop and maintain while letting the NetOps teams retain control of the F5 appliance sitting at the frontend. That way, you gain the agility and time-to-market benefits that your app team needs without sacrificing the reliability and security control your network teams require.

How F5 Can Help

F5’s cloud-native NGINX software load balancers help close the gap between DevOps and NetOps. With this solution, you can augment your enterprise-wide BIG-IP load balancer by deploying lightweight, portable NGINX load balancers closer to the apps themselves.

Solution Guide

Trends

According to Forrester, 50% of organizations are implementing DevOps practices to speed time to market (high feature velocity) and improve stability (lower incidence of outages and faster issue resolution).

Alongside the growth of DevOps practices, enterprises are modernizing apps using microservices architectures, where different applications are broken up into discrete, packaged services. Nearly 10% of apps are built net‑new as microservices, while another 25% are hybrid applications (monolithic with attached microservices, sometimes referred to as “miniservices”).

The move toward DevOps principles and adoption of microservices architectures are having a profound impact on all aspects of application development and infrastructure. Download the NGINX Solution Guide to get all the details.

DevOps Transformation

These trends are changing the way we think about—and develop—applications.

- People

Control shifts from infrastructure teams to application teams. To achieve speed to market, DevOps wants to have control over the infrastructure that supports the apps they develop and maintain.

- Process

DevOps speeds up provisioning time. Modern app infrastructure must be automated and provisioned orders of magnitude faster—or you risk delaying the deployment of crucial fixes and enhancements.

- Technology

Infrastructure decouples software from hardware. Software‑defined infrastructure, infrastructure as code, and composable infrastructure all describe new deployment architectures where programmable software runs on commodity hardware or public cloud computing resources.

Challenge

While DevOps and microservices affect all aspects of application infrastructure, they specifically change the way enterprises deploy load balancer technology, because the load balancer is the intelligent point of control that sits in front of all your apps.

However, different teams in your organization need to access load balancing technology in different ways.

- Enterprise

Enterprises employ a central load balancer with advanced features to manage all application traffic, improving deployment throughput and stability. The F5 appliance sitting at the front door of your environment does the heavy lifting, providing advanced application services like local traffic management, global traffic management, DNS management, bot protection, DDoS mitigation, SSL offload, and identity and access management.

- DevOps

DevOps teams often need to implement changes to the load balancer in order to introduce new apps, add new features to existing apps, or improve scale. In traditional processes, DevOps has to rely on infrastructure and operations (I&O) teams to modify the configuration of the load balancer and redeploy it in production.

- Operations

I&O teams typically take a cautious approach as they have to support hundreds or possibly thousands of applications using a centralized load balancer. Any errors could have disastrous performance and security implications across the enterprise’s entire app landscape. So, the I&O team makes changes in test environments first—and then eventually rolls them out in production. While these operations procedures help ensure that changes don’t negatively affect your application portfolio, following them can slow the pace of development and innovation.

Solution

You can improve the velocity of software delivery and operational performance by deploying lightweight, flexible load balancers closer to your apps, where they can be easily integrated with your application code.

F5’s cloud-native application delivery controller (ADC) solution, NGINX, is a software load balancer that can help you bridge the divide between DevOps and NetOps.

- How It Works

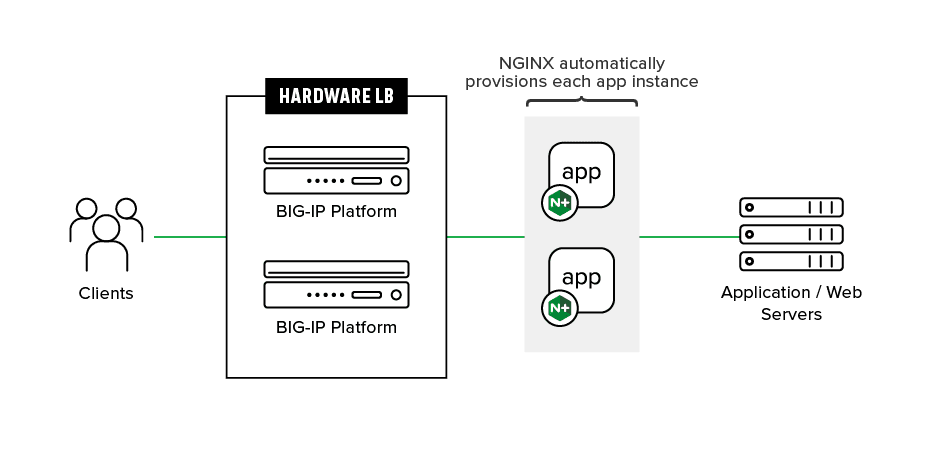

There are three common deployment models for augmenting your F5 BIG-IP infrastructure with NGINX:

- Deploy NGINX behind the F5 appliance to act as a DevOps‑friendly abstraction layer.

- Provision an NGINX instance for each of your apps, or even for each of your customers.

- Run NGINX as your multi‑cloud application load balancer for cloud‑native apps.

Because the programmable NGINX load balancer is lightweight, it consumes very few compute resources and imposes little to no additional strain on your infrastructure.
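To make the per-app model more concrete, here is a minimal sketch of how an app team might generate the configuration for its own NGINX instance from a short app description. The `App` class, the `render_app_config` function, the `orders` app, and all hostnames, addresses, and ports are hypothetical names chosen for illustration. The rendered text uses only the standard `upstream`, `server`, `listen`, `server_name`, `location`, and `proxy_pass` directives, and it is assumed to be included inside the `http` context of an existing NGINX configuration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class App:
    """One application owned by an app team (hypothetical model)."""
    name: str            # used to build the upstream name
    hostname: str        # value for server_name
    backends: List[str]  # "host:port" addresses of the app instances


def render_app_config(app: App, listen_port: int = 8080) -> str:
    """Render a minimal NGINX reverse-proxy fragment for a single app."""
    if not app.backends:
        raise ValueError(f"app {app.name!r} has no backends")
    # One "server" line per backend inside the upstream block.
    servers = "\n".join(f"    server {b};" for b in app.backends)
    return (
        f"upstream {app.name}_backend {{\n"
        f"{servers}\n"
        f"}}\n"
        f"\n"
        f"server {{\n"
        f"    listen {listen_port};\n"
        f"    server_name {app.hostname};\n"
        f"\n"
        f"    location / {{\n"
        f"        proxy_pass http://{app.name}_backend;\n"
        f"    }}\n"
        f"}}\n"
    )


if __name__ == "__main__":
    # Example values are assumptions, not taken from any real deployment.
    orders = App(
        name="orders",
        hostname="orders.example.internal",
        backends=["10.0.1.11:8000", "10.0.1.12:8000"],
    )
    print(render_app_config(orders))
```

In a layered setup like the one described above, the F5 appliance would forward traffic to the port this fragment listens on, and the app team could change the backend list without touching the frontend configuration.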

Conclusion

By layering your F5 and NGINX load balancers, you can boost speed to market without sacrificing security or reliability.

With this approach, I&O teams retain the frontend F5 infrastructure, which provides advanced application services to the large number of mission‑critical apps that must be protected and scaled. At the same time, you empower your DevOps and application teams to directly manage configuration changes on the software load balancer, often automating them as part of a CI/CD framework.

The combined solution enables you to achieve the agility and time-to-market benefits that your app teams need—without sacrificing the reliability and security controls your network teams require.
