Understanding Observability

In the rapidly evolving artificial intelligence (AI) ecosystem, large language models (LLMs) have emerged as some of the most powerful and transformative tools in the AI toolkit. Designed to process and comprehend human language, these models are revolutionizing fields such as customer service, technology, pharmaceuticals, finance, and retail, and are also changing the face of Software as a Service (SaaS).

As these AI models grow in capability and complexity, they present unprecedented potential for productivity and innovation. Their deployment in business environments also introduces unique challenges, the most serious of which is a vastly expanded attack surface, closely followed by siloed visibility.

Simply put, when multiple models, including multimodal models, are deployed in an organization, they operate in parallel rather than as an integrated whole, and each brings its own set of vulnerabilities. This means that security teams have a fragmented view of the system they must protect and defend, when what they really need is full, seamless visibility into and across all models in operation.

In an enterprise, these could conceivably include big external models such as ChatGPT; targeted models such as BloombergGPT; models embedded in SaaS applications such as Salesforce; retrieval-augmented generation (RAG) models; and small, internal models fine-tuned on company data. Together, these models can number in the dozens or even the hundreds, and that growth is not slowing down any time soon.

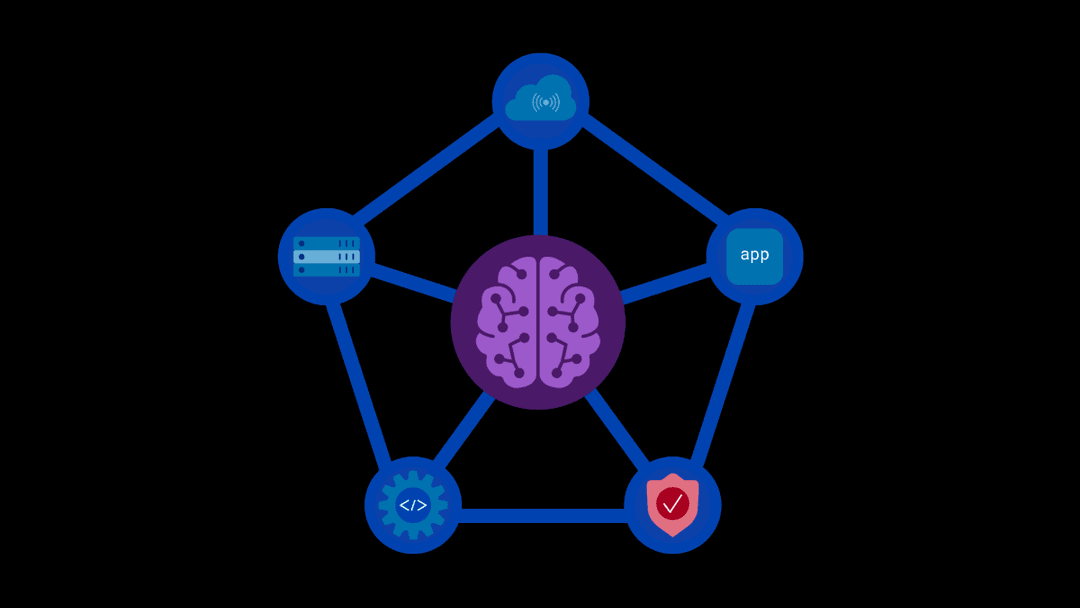

Enter the concept of Observability. Observability addresses the imperative of being able to see, evaluate, and synthesize insights about models’ performance and behavior; for instance, decision-making processes, inherent limitations, reliability, efficiency, and effectiveness. Integrating an Observability layer into the model deployment framework establishes a strong foundation for the successful use, security, and maintenance of AI-powered applications.

The Benefits of Model Observability

The ability to observe model behavior can provide information about possible attack trends and patterns, as well as more granular analytics related to finance, operations, and potentially even personnel issues. Visibility into the following key focus areas can translate into enhanced resource allocation, productivity, innovation, and competitive advantage.

- Performance Assurance: Observing models in action—from the API connection to the model’s query response—allows organizations to continuously monitor and compare vital performance metrics, such as latency, throughput, and accuracy (within and across the models). This real-time oversight facilitates the immediate identification and mitigation of bottlenecks, ensuring consistent user experiences of AI systems and adherence to performance criteria.

- Error Management: A robust Observability framework will capture and log detailed metrics to provide administrators with an in-depth view of models’ decision-making processes, facilitating rapid error identification and resolution, and promoting trust in model performance.

- Usage Analytics: Observability tools enable review of real-world engagement scenarios, from tracking response times and API calls to analyzing traffic patterns and identifying recurring use cases. Real-time monitoring of model functionality (error rates, token usage, etc.) and user interactions (scanning prompts and responses for sensitive or prohibited content, attempted attacks, etc.) can provide insights to help organizations continually refine and realign models based on actual usage.

- Model Drift: Clear visibility into AI model performance can allow administrators and developers to identify degradation, or ‘model drift’, due to evolving data landscapes. Knowing when this has occurred is critical to making sure models are retrained or fine-tuned in a timely manner to ensure trust in the model’s output.

- Resource Optimization and Scaling: Deploying LLMs across an organization can entail high computational demands, such as memory and CPU/GPU usage, making resource utilization insights important data points when considering cost optimization or scaling for an increase in users or workload surges.

- Compliance: Knowing the state of system and user compliance with internal acceptable use, privacy, and other policies—as well as industry standards and governmental regulations—is critical in every organization, and especially so in regulated sectors such as healthcare and finance. Utilizing solutions that enable visibility into and auditability of detailed logs and other metrics can ensure that model deployments meet data privacy, security, and other compliance benchmarks.
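
To make the focus areas above more concrete, here is a minimal Python sketch of how logged model calls might be rolled up into per-model metrics (latency, error rate, token usage, accuracy) and how a drop in accuracy could be flagged as possible drift. The `CallRecord` schema, the model names, and the 0.05 tolerance are assumptions made up for illustration; they do not describe any particular product's data model or API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CallRecord:
    """One logged model call (hypothetical schema for this sketch)."""
    model: str
    latency_ms: float
    tokens: int
    error: bool
    correct: bool  # whether an evaluation judged the response correct


def summarize(records):
    """Group records by model and compute basic performance metrics."""
    by_model = {}
    for r in records:
        by_model.setdefault(r.model, []).append(r)
    summary = {}
    for model, recs in by_model.items():
        summary[model] = {
            "calls": len(recs),
            "mean_latency_ms": mean(r.latency_ms for r in recs),
            "error_rate": sum(r.error for r in recs) / len(recs),
            "total_tokens": sum(r.tokens for r in recs),
            "accuracy": sum(r.correct for r in recs) / len(recs),
        }
    return summary


def drifted(baseline_accuracy, recent_accuracy, tolerance=0.05):
    """Flag possible drift when accuracy falls more than `tolerance` below baseline."""
    return (baseline_accuracy - recent_accuracy) > tolerance


# Illustrative data only; model names are placeholders.
records = [
    CallRecord("model-a", 120.0, 300, False, True),
    CallRecord("model-a", 180.0, 500, True, False),
    CallRecord("model-b", 90.0, 200, False, True),
]
summary = summarize(records)
needs_review = drifted(baseline_accuracy=0.9,
                       recent_accuracy=summary["model-a"]["accuracy"])
```

In practice, the same roll-up would typically run over a sliding time window, so that a bottleneck or an accuracy drop surfaces while it is still recent enough to act on.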

The Importance of Observability in the Enterprise

Innovation will drive the need for greater Observability. LLMs and other generative AI models will continue to become more complex and automated, and real-time monitoring of all aspects of performance, usage, and security will become increasingly important.

At the same time, governmental bodies have stepped up their game, passing regulations into law, such as the European Union’s General Data Protection Regulation (GDPR); issuing draft regulation, such as the EU AI Act; and providing direction, such as the Biden administration’s Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence and the National Institute of Standards and Technology (NIST) AI Risk Management Framework (AI RMF). In addition to those mandates and guidelines, regulated industries have their own labyrinthine rules, non-regulated industries have standards that must be met, and organizations have policies that reflect and reinforce their own corporate values.

As more entities issue directives addressing the deployment and use of LLMs in the business ecosystem, enabling, enforcing, monitoring, and reporting compliance with those directives will become extremely important. Being out of compliance could result in significant financial and reputational consequences, with downstream fallout (shareholder confidence, competitive advantage, customer trust, etc.). Knowing how, how well, and how securely models are operating in the production environment will be ever more critical.

AI Security With Full Observability

Clearly, observing, analyzing, and evaluating AI model performance and behavior is crucial for improving performance and producing reliable and trustworthy output. Integrating a platform that seamlessly enables full Observability across the organization’s digital security infrastructure sounds like the very definition of a game-changing decision. Our AI runtime security and enablement solution is that game-changer.

Our solution serves as more than just a trust layer that insulates models from both internal and external threats with its first-to-market features, such as more than a dozen customizable content scanners and detailed user and usage auditability. It has a clear, intuitive, easy-to-use interface designed to appeal to a wide audience of users, but it also provides equally easy-to-use API accessibility for streamlined use by developers and other technical users.

The use of APIs, including those established for specific individuals and identified teams, provides additional means of gaining insight into the system and monitoring and analyzing security status, model performance, and user analytics. Administrators can track API calls and review endpoints to identify those most commonly used, track latency and error rates, monitor version compatibility, identify emerging or unique behavior patterns, monitor dependencies, and analyze traffic to identify potential bottlenecks and ensure routing rules are intact.
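
As a rough illustration of the kind of API-level analysis described above, the Python sketch below tallies calls per endpoint, ranks endpoints by usage, and reports each one's error rate and worst-case latency. The log format (endpoint, status code, latency in milliseconds) and the endpoint paths are hypothetical, chosen only for this example; they are not taken from our platform's API.

```python
from collections import Counter, defaultdict


def endpoint_report(call_log):
    """Return (endpoint, calls, error_rate, max_latency_ms) tuples, busiest first.

    `call_log` is an iterable of (endpoint, status_code, latency_ms) tuples,
    a hypothetical log format assumed for this sketch.
    """
    calls = Counter()
    errors = Counter()
    max_latency = defaultdict(float)
    for endpoint, status, latency_ms in call_log:
        calls[endpoint] += 1
        if status >= 400:  # treat any 4xx/5xx response as an error
            errors[endpoint] += 1
        max_latency[endpoint] = max(max_latency[endpoint], latency_ms)
    return [
        (ep, n, errors[ep] / n, max_latency[ep])
        for ep, n in calls.most_common()
    ]


# Illustrative data only; endpoint paths are placeholders.
log = [
    ("/v1/chat", 200, 210.0),
    ("/v1/chat", 500, 950.0),
    ("/v1/embed", 200, 40.0),
    ("/v1/chat", 200, 190.0),
]
report = endpoint_report(log)
```

An endpoint that is both heavily used and slow at the tail is a likely bottleneck candidate, which is why the report keeps call volume and maximum latency side by side.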

Our platform works as a virtually invisible, bi-directional security superstructure that encompasses your entire AI system configuration without adding “weight” or latency to model response times. Our solution is scalable, model-agnostic, and customizable, and provides policy-based access controls that can be applied at the individual, team, or organizational levels. This is the robust, flexible, responsive component every AI security framework needs to meet the present and future challenges of GenAI model deployment.
