As organizations increasingly integrate generative AI (GenAI) models and large language models (LLMs) into their digital systems, they face new and heightened risks. Unsurprisingly, the risks most often at the top of a security team’s list are those associated with data exfiltration—the unauthorized transfer of data from within an organization to an external destination. Understanding and mitigating these risks are critical tasks for cybersecurity decision-makers to ensure their organization’s sensitive information and system integrity remain secure.

Data exfiltration poses a significant threat to organizations using GenAI and LLMs. These sophisticated models often handle vast amounts of sensitive data, making them attractive targets for cybercriminals, and the consequences of an attack can be severe, ranging from financial losses and reputational damage to legal liabilities and regulatory penalties. High-profile data breaches at healthcare and financial services companies highlight the critical need for robust security measures. Exfiltration can originate inside or outside the organization, from people or from automated systems, and can also exploit threats and vulnerabilities in the supply chain:

- Insider Threats: Employees with access to sensitive data can intentionally or unintentionally cause data breaches. Insider threats are particularly challenging to mitigate due to the trusted nature of the individuals involved.

- External Threats: Cyberattacks targeting AI infrastructure are becoming increasingly sophisticated. Attackers may exploit vulnerabilities in AI models, software, or hardware to gain unauthorized access to data.

- Supply Chain Vulnerabilities: Risks from third-party vendors and partners can introduce weaknesses in the security of AI systems. Ensuring the security of the entire supply chain is essential to prevent data exfiltration.

Thankfully, help is at hand—strategies that can be effective in combating data exfiltration incidents include:

- Access Control and Authentication: Implementing robust access controls and multi-factor authentication (MFA) is crucial for managing who can access AI systems and data. Policy-based access controls (PBAC) ensure that employees have only the permissions their roles require, reducing the risk of unauthorized access.

- Monitoring and Anomaly Detection: Continuous monitoring of AI systems for unusual activity is essential. Leveraging AI and machine learning for real-time anomaly detection can help identify and respond to potential data exfiltration attempts before they cause significant harm.

- Regular Audits and Penetration Testing: Conducting regular security audits helps identify vulnerabilities within AI systems. Penetration testing, in which ethical hackers attempt to breach a system, provides valuable insights into potential weaknesses and areas for improvement.

- Supply Chain Security: Assessing and mitigating risks from third-party vendors is crucial for maintaining the security of AI systems. Organizations should implement security measures throughout the supply chain, including rigorously vetting vendors and continually monitoring their security practices.

- Employee Training and Awareness: Cybersecurity training for employees is essential to create a culture of security awareness. Educating staff about the risks of data exfiltration and best practices for preventing it can significantly reduce the likelihood of insider threats and human error.
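At its core, policy-based access control is a deny-by-default check: a request to use a model is allowed only if some policy explicitly grants it. The Python sketch below illustrates that principle under simplifying assumptions. The `Policy` and `User` classes, the `team:` prefix for team-wide grants, and the model and action names are all hypothetical; they do not describe any particular product's policy format.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical access policy: which principals may perform which actions on which models."""
    name: str
    principals: frozenset[str]  # user IDs, or "team:<name>" for whole teams
    models: frozenset[str]      # model identifiers the policy covers
    actions: frozenset[str] = frozenset({"invoke"})


@dataclass
class User:
    user_id: str
    teams: set[str] = field(default_factory=set)

    def principals(self) -> set[str]:
        # A user matches policies naming them directly or naming any team they belong to.
        return {self.user_id} | {f"team:{t}" for t in self.teams}


def is_allowed(user: User, model: str, action: str, policies: list[Policy]) -> bool:
    """Deny by default: allow only if at least one policy explicitly grants this request."""
    who = user.principals()
    return any(
        model in p.models and action in p.actions and not who.isdisjoint(p.principals)
        for p in policies
    )


# Example policies (hypothetical names): the support team may only call the support model,
# while one researcher may also invoke and fine-tune a research model.
policies = [
    Policy("support-chat", frozenset({"team:support"}), frozenset({"support-llm"})),
    Policy(
        "research",
        frozenset({"alice"}),
        frozenset({"support-llm", "research-llm"}),
        frozenset({"invoke", "fine_tune"}),
    ),
]

bob = User("bob", {"support"})
alice = User("alice")
print(is_allowed(bob, "support-llm", "invoke", policies))        # True
print(is_allowed(bob, "research-llm", "invoke", policies))       # False
print(is_allowed(alice, "research-llm", "fine_tune", policies))  # True
```

A production policy engine would also need explicit deny rules and richer conditions; this sketch keeps only allow rules so that the deny-by-default principle stays visible.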

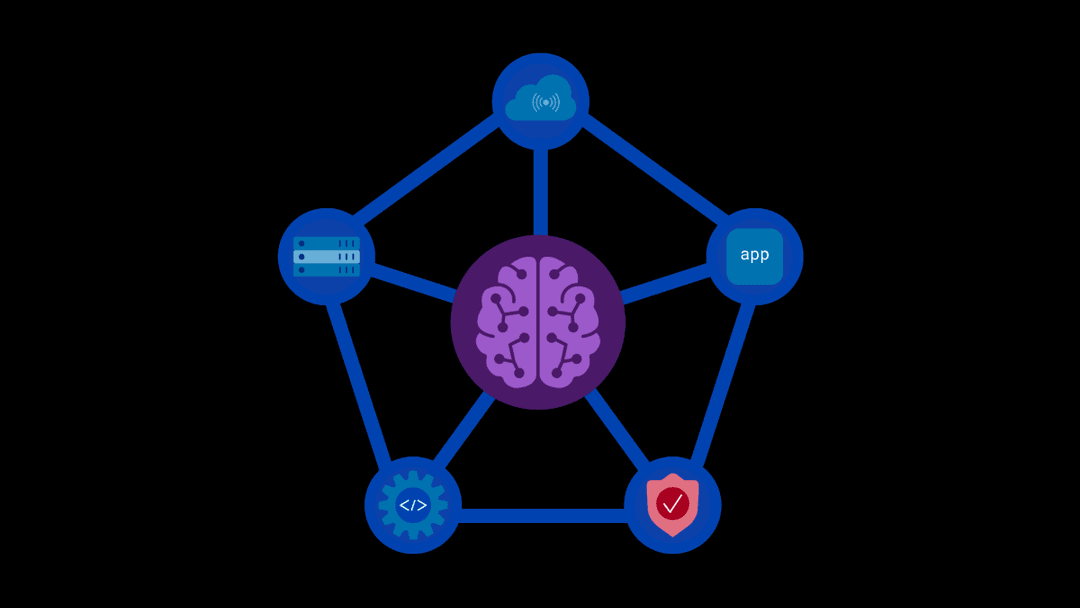

Our AI Runtime Security solutions address these challenges and embrace these strategies, enabling organizations to harness the power of GenAI while maintaining the highest standards of security. Our model-agnostic, “weightless” trust layer sits between your organization’s digital infrastructure and your models to provide 360° protection without introducing latency. A broad set of customizable scanners reviews every prompt to ensure that private information does not leave the system and that malicious content, such as embedded code or links, does not enter it. PBAC can be applied to individuals, teams, and models, so only personnel who need access to a given model have it.
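To make the idea of prompt scanners concrete, here is a minimal Python sketch assuming simple regular-expression scanners. The scanner names, the split into outbound checks (private data leaving in a prompt) and inbound checks (links and embedded code entering the system), and the specific patterns are illustrative assumptions, not a description of how F5's scanners work. Regexes like these produce false positives and false negatives; the sketch shows the structure of a scanner pipeline, not a production detector.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    scanner: str  # name of the pattern that fired
    match: str    # the matched text


# Hypothetical outbound scanners: private data that should not leave the system in a prompt.
OUTBOUND_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical inbound scanners: links and embedded code that should not enter the system.
INBOUND_PATTERNS = {
    "url": re.compile(r"https?://\S+", re.IGNORECASE),
    "script_tag": re.compile(r"<\s*script\b", re.IGNORECASE),
    "code_fence": re.compile(r"`{3}"),
}


def scan(text: str, patterns: dict[str, re.Pattern[str]]) -> list[Finding]:
    """Run every pattern over the text and collect all matches, in pattern order."""
    return [
        Finding(name, m.group(0))
        for name, pattern in patterns.items()
        for m in pattern.finditer(text)
    ]


def outbound_findings(prompt: str) -> list[Finding]:
    return scan(prompt, OUTBOUND_PATTERNS)


def inbound_findings(text: str) -> list[Finding]:
    return scan(text, INBOUND_PATTERNS)


prompt = "Summarize the account notes for alice@example.com, SSN 123-45-6789."
print([f.scanner for f in outbound_findings(prompt)])  # ['email', 'us_ssn']

reply = "See https://evil.example/x and <script>alert(1)</script>"
print([f.scanner for f in inbound_findings(reply)])  # ['url', 'script_tag']
```

Keeping each scanner as a named entry in a dictionary is what makes the set customizable in this sketch: adding, removing, or tuning a check is a one-line change, and each finding records which scanner fired for later auditing.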

Because it sits outside the models it protects, our solution gives security teams observability into every AI tool in use, enabling real-time detection of anomalous activity that could indicate a threat or attack. Every interaction with every model, and every administrator interaction with the F5 solution, is tracked for review and auditing, providing detailed insight into user behavior and model usage.

The key to striking the right balance between data security, model performance, and operational functionality lies in selecting the right tools. Adding F5 to your organization’s AI security apparatus ensures your GenAI deployments are, and remain, transparent, secure, and stable. If you are attending the Black Hat conference in Las Vegas, August 6-8, click here to contact us and find out how our AI Runtime Security solutions can transform your organization’s security profile.
