One emerging trend in the continuously evolving AI landscape is the move toward smaller, more specialized large language models (LLMs). It is important to understand what is driving this shift and what it means for organizations. Here’s an overview of the benefits, challenges, and use cases of these smaller models and some insight into how model-agnostic tools are facilitating their integration into the digital workplace.

Benefits of Smaller Models

- Efficiency and Cost-Effectiveness: Smaller models require less compute power and storage, making them more cost-effective to deploy and maintain, especially for organizations operating at scale. Smaller does not have to mean less capable. For example, Microsoft reports that Phi-2, a 2.7-billion-parameter language model, matches or outperforms significantly larger models on several key benchmarks.

- Faster Training and Inference: Because of their reduced size, smaller models can be trained and fine-tuned more quickly. This shortens the development cycle and lets businesses iterate and deploy updates faster. They also run inference faster, which reduces latency when serving requests. Together, these speed gains can be a competitive advantage, enabling quicker adaptation and responsiveness to new data and requirements.

- Domain Specialization: Fine-tuning smaller models on specific datasets allows for highly specialized applications. When focused on niche areas, these models can offer more precise and contextually relevant outputs compared to their larger, more generalized counterparts.
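To make the compute and storage point concrete, a common rule of thumb is that the memory needed to hold a model's weights is roughly its parameter count multiplied by the bytes used per parameter. The sketch below applies that rule to Phi-2's 2.7 billion parameters and, for comparison, to a hypothetical 70-billion-parameter model. It is a rough estimate under stated assumptions: it counts weights only and ignores activations, the KV cache, and runtime overhead, so real deployments need more memory than it reports.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed just to hold a model's weights, in GB (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Common numeric precisions and the bytes each one uses per parameter.
PRECISION_BYTES = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

if __name__ == "__main__":
    # The 70B entry is a hypothetical comparison point, not a specific product.
    models = {"Phi-2 (2.7B)": 2.7e9, "hypothetical 70B model": 70e9}
    for name, params in models.items():
        for precision, nbytes in PRECISION_BYTES.items():
            print(f"{name:>24} @ {precision}: {weight_memory_gb(params, nbytes):7.1f} GB")
```

At fp16, Phi-2's weights come to about 5.4 GB, which fits on a single consumer-grade GPU, while the hypothetical 70B model needs about 140 GB and typically has to be split across several accelerators.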

Challenges of Smaller Models

- Data Quality and Bias: Ensuring the quality of the training data is critical, especially whether it accurately represents the real-world data the model will encounter. Smaller models are particularly sensitive to biases present in their datasets. As McKinsey and others have noted, models trained on non-diverse data can perpetuate existing biases, leading to flawed outputs.

- Security and Privacy Concerns: Smaller models are not inherently more secure than large models and face significant security risks, including data leaks and susceptibility to adversarial attacks. Effective governance and robust security measures are necessary to mitigate these risks.

- Integration Complexity: Integrating smaller models into existing systems can be challenging, requiring careful orchestration and compatibility checks. Model-agnostic tools can help alleviate some of these issues by providing a standardized interface for deploying various models.
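One way to picture the "standardized interface" idea is the adapter pattern: every backend implements the same small interface, and shared concerns such as policy checks wrap that interface once instead of being reimplemented per model. The sketch below is a minimal illustration under that assumption. `TextModel`, `EchoModel`, and `GuardedModel` are hypothetical names, not the API of F5 or any other product, and the keyword filter is a deliberately naive stand-in for real runtime security controls.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical common interface that every model backend must satisfy."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend for illustration; a real adapter would call a model server or SDK."""

    def __init__(self, name: str) -> None:
        self.name = name

    def generate(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class GuardedModel:
    """Wraps any TextModel and applies the same policy check regardless of the backend."""

    def __init__(self, backend: TextModel, blocked_terms: set[str]) -> None:
        self.backend = backend
        # Store terms lowercased so matching is case-insensitive.
        self.blocked_terms = {term.lower() for term in blocked_terms}

    def generate(self, prompt: str) -> str:
        lowered = prompt.lower()
        if any(term in lowered for term in self.blocked_terms):
            raise ValueError("prompt rejected by policy")
        return self.backend.generate(prompt)


if __name__ == "__main__":
    support_bot = GuardedModel(EchoModel("small-support-model"), {"password"})
    print(support_bot.generate("Where is my order?"))
```

Because `GuardedModel` depends only on the `generate` method, swapping in a different small model means writing one new adapter; the policy layer and the calling code stay unchanged.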

Use Cases for Smaller Models

- Customer Support and Service Automation: Fine-tuned models are being used to enhance customer service by providing accurate and timely responses. McKinsey reports that companies have seen improvements in customer satisfaction and efficiency when they have used smaller, fine-tuned models to automate routine inquiries, freeing up human representatives for more complex tasks.

- Healthcare Applications: Smaller and fine-tuned models are particularly useful in healthcare applications. By leveraging specific medical data, these models can provide valuable insights that support diagnostic assistance, personalized treatment plans, and clinical decision-making.

- Drug Discovery: Smaller multimodal models are used in the pharmaceutical industry to analyze complex datasets, such as microscopy images, to accelerate drug discovery. This approach shortens research cycles and improves the accuracy of predictions and outcomes.

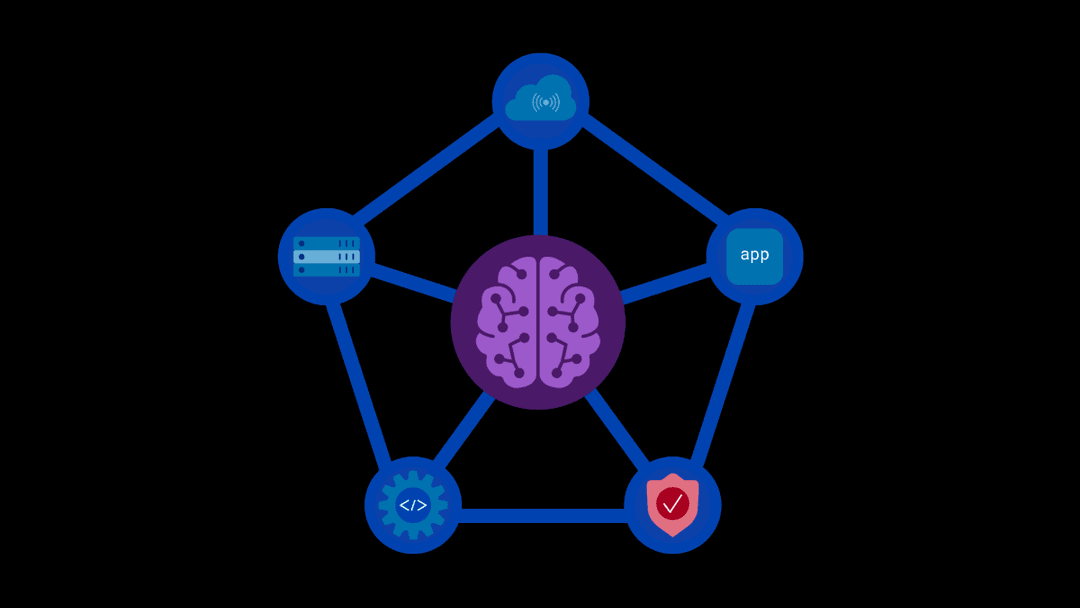

Facilitating Integration with Model-Agnostic Tools

Model-agnostic tools, such as F5’s AI Runtime Security solutions, play a pivotal role in integrating these smaller models into the digital workplace. By providing a unified platform that supports multiple models, they simplify integration and deployment. For instance, platforms like IBM’s Watsonx.ai allow organizations to train, tune, and deploy various models efficiently, so they can use the best model for each specific task without being locked into a single vendor or technology stack.
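The idea of using the best model for each task without vendor lock-in can be sketched as a small task-based router: each task is mapped to whichever model serves it best, and anything unregistered falls back to a general-purpose model. This is a minimal sketch under stated assumptions. `ModelRouter`, `make_stub`, and model names such as `support-slm` are hypothetical placeholders, not the API of Watsonx.ai, F5, or any other platform, and each stub stands in for a call to a real provider or self-hosted model.

```python
from typing import Callable

# A model is anything that maps a prompt to a response.
ModelFn = Callable[[str], str]


def make_stub(name: str) -> ModelFn:
    """Hypothetical stand-in for a real model client; it just echoes with its name."""
    return lambda prompt: f"{name}: {prompt}"


class ModelRouter:
    """Routes each request to the model registered for its task, with a default fallback."""

    def __init__(self, default: ModelFn) -> None:
        self._routes: dict[str, ModelFn] = {}
        self._default = default

    def register(self, task: str, model: ModelFn) -> None:
        # Re-registering a task replaces its model, e.g. when switching vendors.
        self._routes[task] = model

    def route(self, task: str, prompt: str) -> str:
        model = self._routes.get(task, self._default)
        return model(prompt)


if __name__ == "__main__":
    router = ModelRouter(default=make_stub("general-llm"))
    router.register("support", make_stub("support-slm"))
    router.register("clinical-notes", make_stub("clinical-slm"))
    print(router.route("support", "Reset my password"))
    print(router.route("translation", "Hola"))
```

The design choice here is that callers name a task, not a model. Replacing one specialized model with another, or moving it to a different vendor, changes a single `register` call rather than every piece of code that sends requests.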

Conclusion

The move toward smaller models represents a significant shift in the AI landscape, offering clear benefits in efficiency, cost, and specialization. However, it also presents challenges that must be addressed through careful data management, strong security measures, and robust integration strategies. By understanding and embracing these smaller models and leveraging model-agnostic tools, such as F5 AI Runtime Security, organizations can successfully integrate these models into their operations and position themselves at the leading edge of AI innovation. Contact us to find out more about our comprehensive AI Runtime Security solutions.
