Multi‑cloud deployments are here to stay. According to F5’s State of Application Strategy in 2022 report, 77% of enterprises operate applications across multiple clouds. The adoption of multi‑cloud and hybrid architectures unlocks important benefits, like improved efficiency, reduced risk of outages, and avoidance of vendor lock‑in. But these complex architectures also present unique challenges.

The software and IT leaders surveyed by F5 named these as their top multi‑cloud challenges:

- Visibility (45% of respondents)

- Security (44%)

- Migrating apps (41%)

- Optimizing performance (40%)

Managing APIs for microservices in multi‑cloud environments is especially complex. Without a holistic API strategy in place, APIs proliferate across public cloud, on‑premises, and edge environments faster than Platform Ops teams can secure and manage them. We call this problem API sprawl, and in an earlier post we explained why it’s such a significant threat.

A multi‑cloud API strategy gives you a deliberate way to unify your microservices, which are now distributed across multiple clouds, and to ensure end-to-end connectivity. Two of the common scenarios for multi‑cloud and hybrid deployments are:

- Different services in multi‑cloud/hybrid environments – You need to operate different applications and APIs in different locations, perhaps for cost efficiency or because different services are relevant to different groups of users.

- Same services in multi‑cloud/hybrid environments – You need to ensure high availability for the same applications deployed in different locations.

In the following tutorial we show step-by-step how to use API Connectivity Manager, part of F5 NGINX Management Suite, in the second scenario: deploying the same services in multiple environments for high availability. This eliminates a single point of failure in your multi‑cloud or hybrid production environment: if one gateway instance fails, another gateway instance takes over and your customers don’t experience an outage, even if one cloud goes down.

API Connectivity Manager is a cloud‑native, run‑time–agnostic solution for deploying, managing, and securing APIs. From a single pane of glass, you can manage all your API operations for NGINX Plus API gateways and developer portals deployed across public cloud, on‑premises, and edge environments. This gives your Platform Ops teams full visibility into API traffic and makes it easy to apply consistent governance and security policies for every environment.

Enabling High Availability for API Gateways in a Multi-Cloud Deployment

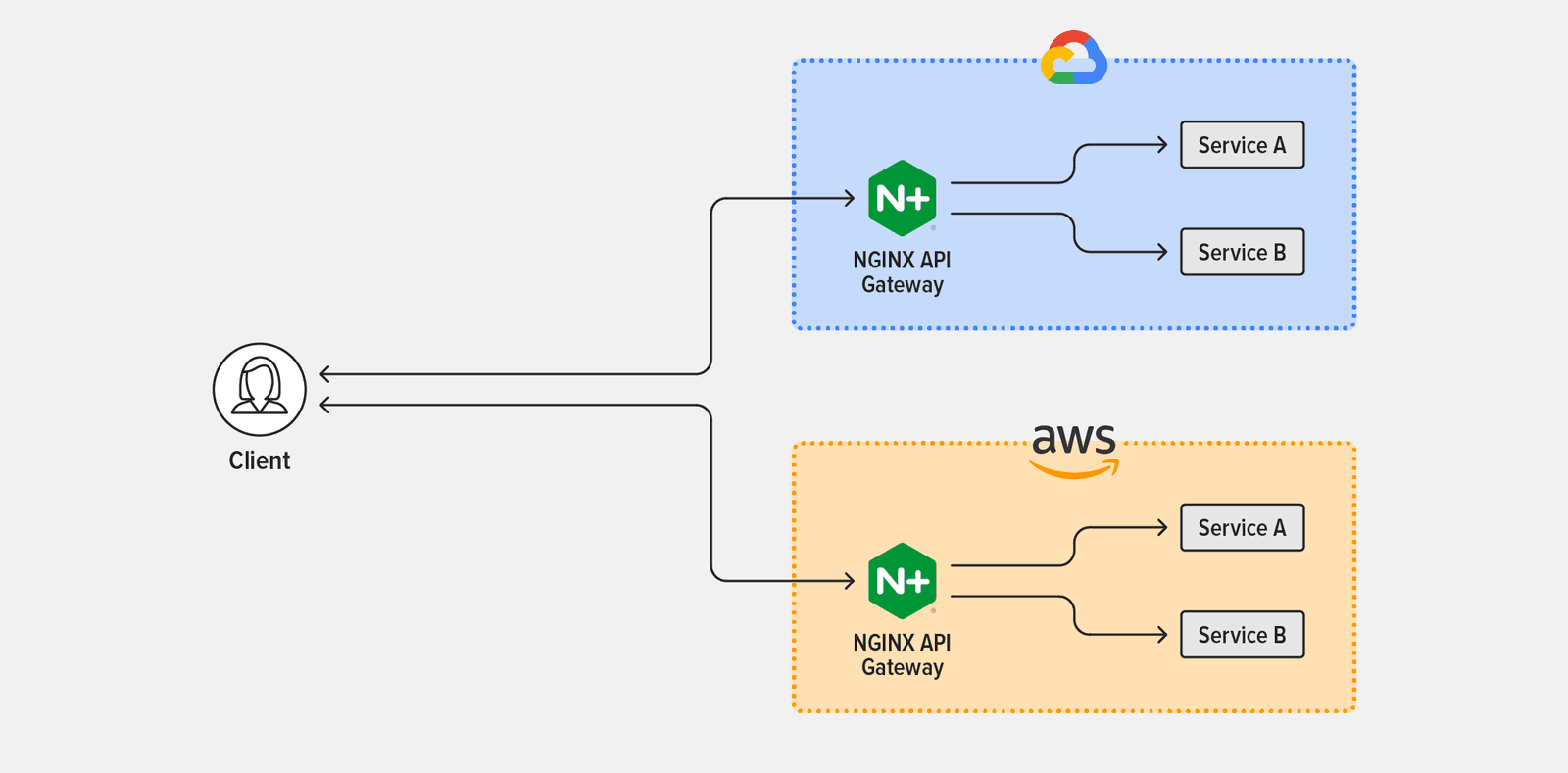

As mentioned in the introduction, in this tutorial we’re configuring API Connectivity Manager for high availability of services running in multiple deployment environments. Specifically, we’re deploying NGINX Plus as an API gateway routing traffic to two services, Service A and Service B, which are running in two public clouds, Google Cloud Platform (GCP) and Amazon Web Services (AWS). (The setup applies equally to any mix of deployment environments, including Microsoft Azure and on‑premises data centers.)

Figure 1 depicts the topology used in the tutorial.

Follow the steps in these sections to complete the tutorial:

- Install and Configure API Connectivity Manager

- Deploy NGINX Plus Instances as API Gateways

- Set Up an Infrastructure Workspace

- Create an Environment and API Gateway Clusters

- Apply Global Policies

Install and Configure API Connectivity Manager

- Obtain a trial or paid subscription for NGINX Management Suite, which includes Instance Manager and API Connectivity Manager along with NGINX Plus as an API gateway and NGINX App Protect to secure your APIs. A free 30‑day trial of NGINX Management Suite is available.

- Install NGINX Management Suite. In the Install Management Suite Modules section, follow the instructions for API Connectivity Manager (and optionally other modules).

- Add the license for each installed module.

- (Optional.) Set up TLS termination and mTLS, to secure client connections to NGINX Management Suite and traffic between API Connectivity Manager and NGINX Plus instances on the data plane, respectively.

Deploy NGINX Plus Instances as API Gateways

Select the environments that make up your multi‑cloud or hybrid infrastructure. For the tutorial we’ve chosen AWS and GCP and are installing one NGINX Plus instance in each cloud. In each environment perform these steps on each data‑plane host that will act as an API gateway:

- Install NGINX Plus on a supported operating system.

- Install the NGINX JavaScript module (njs).

- Add the following directives in the main (top‑level) context in /etc/nginx/nginx.conf:

    load_module modules/ngx_http_js_module.so;
    load_module modules/ngx_stream_js_module.so;

- Restart NGINX Plus, for example by running this command:

    $ nginx -s reload

Set Up an Infrastructure Workspace

You can create multiple Infrastructure Workspaces (up to 10 at the time of writing) in API Connectivity Manager. With segregated Workspaces you can apply policies and authentication/authorization requirements that are specific to different lines of business, teams of developers, external partners, clouds, and so on.

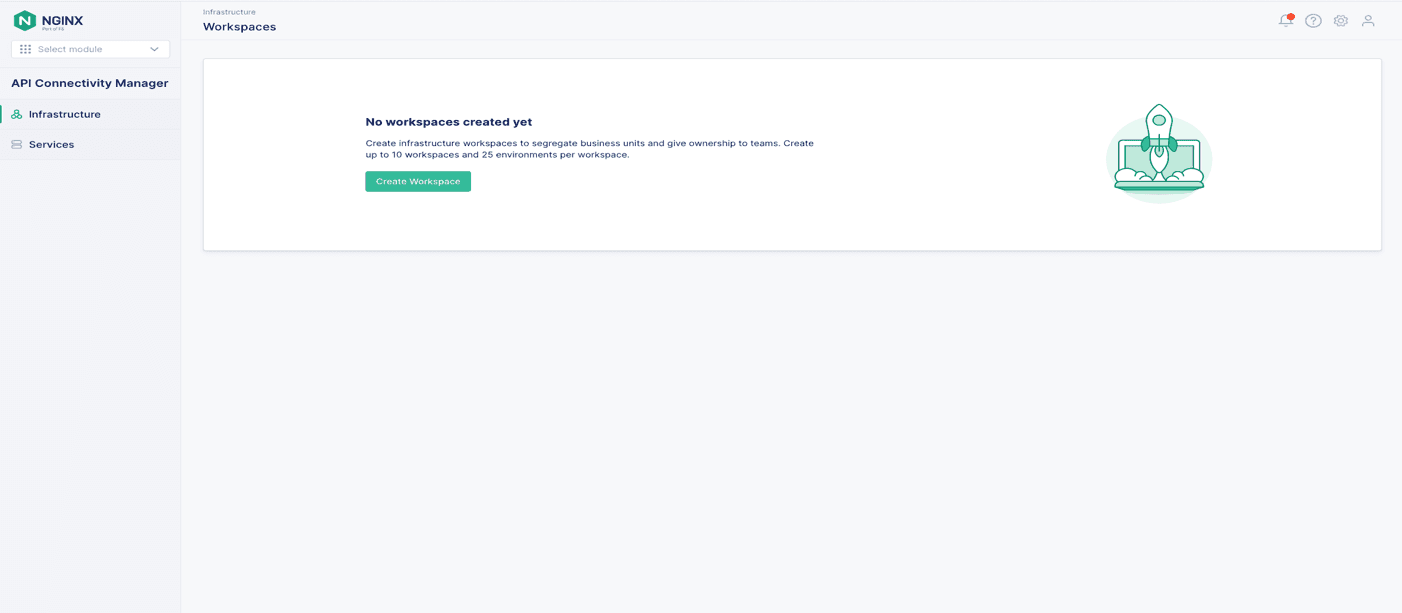

Working in the API Connectivity Manager GUI, create a new Workspace:

- Click Infrastructure in the left navigation column.

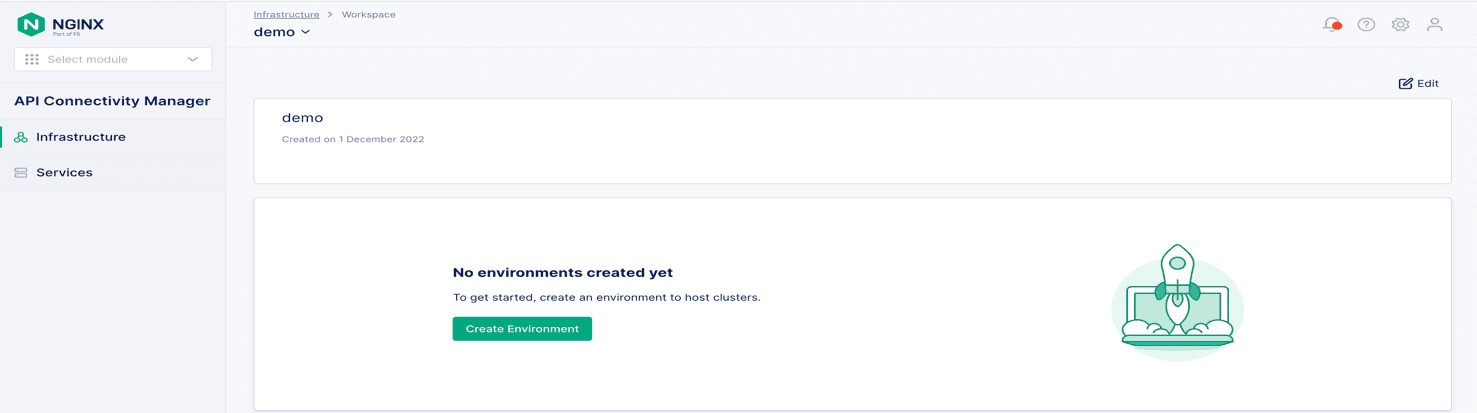

- Click the + Create button to create a new workspace, as shown in Figure 2.

Figure 2: Creating a new Infrastructure Workspace

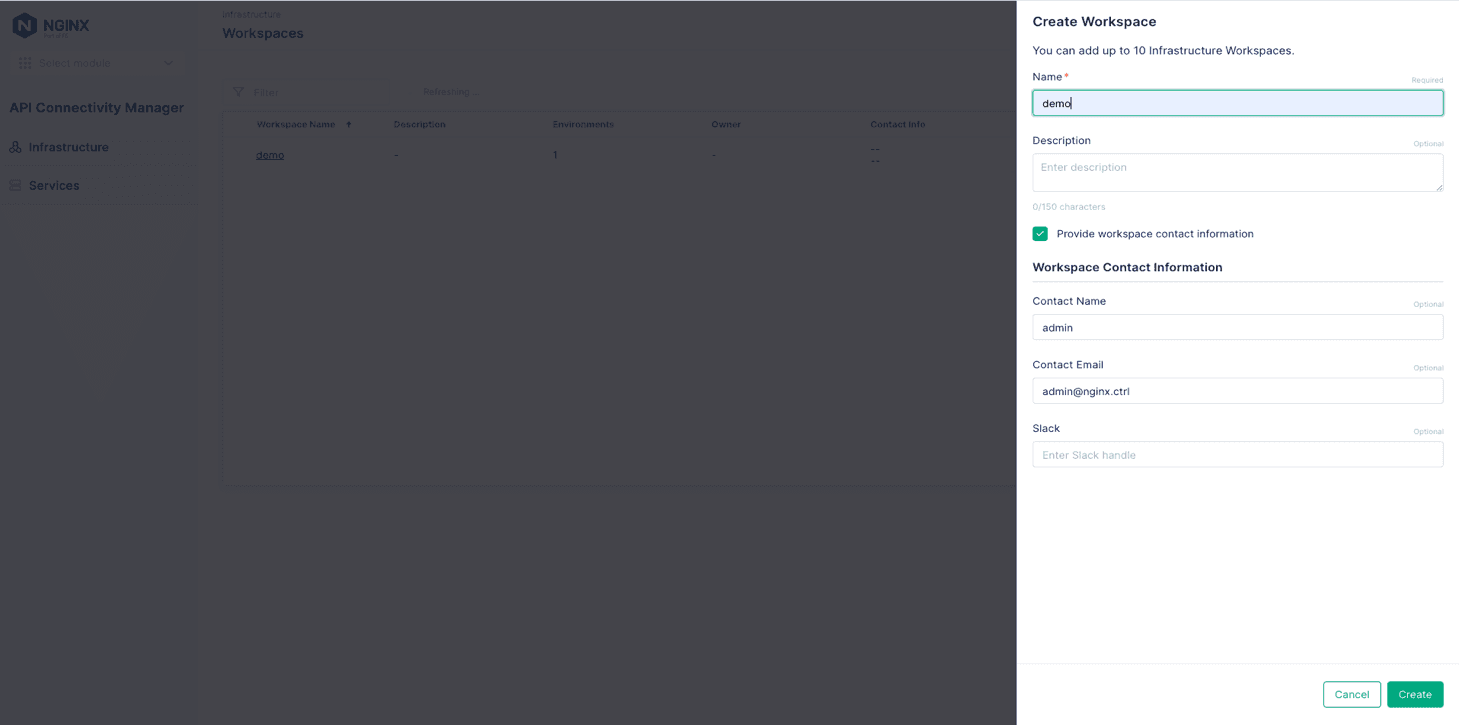

- In the Create Workspace panel that opens, fill in the Name field (demo in Figure 3). Optionally, fill in the Description field and the fields in the Workspace Contact Information section. The infrastructure admin (your Platform Ops team, for example) can use the contact information to provide updates about status or issues to the users of the Workspace.

Figure 3: Naming a new Infrastructure Workspace and adding contact information

- Click the Create button.

Create an Environment and API Gateway Clusters

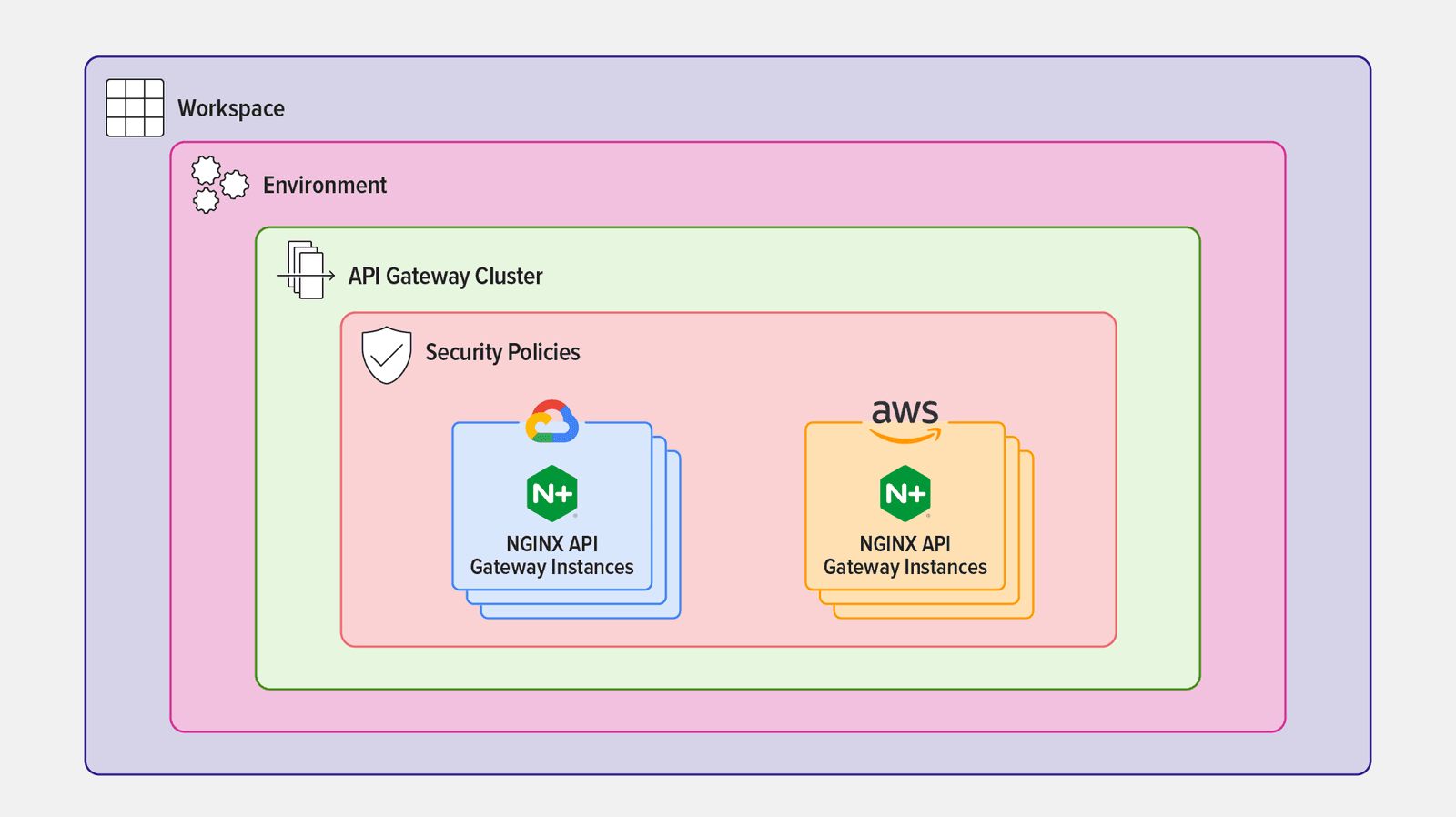

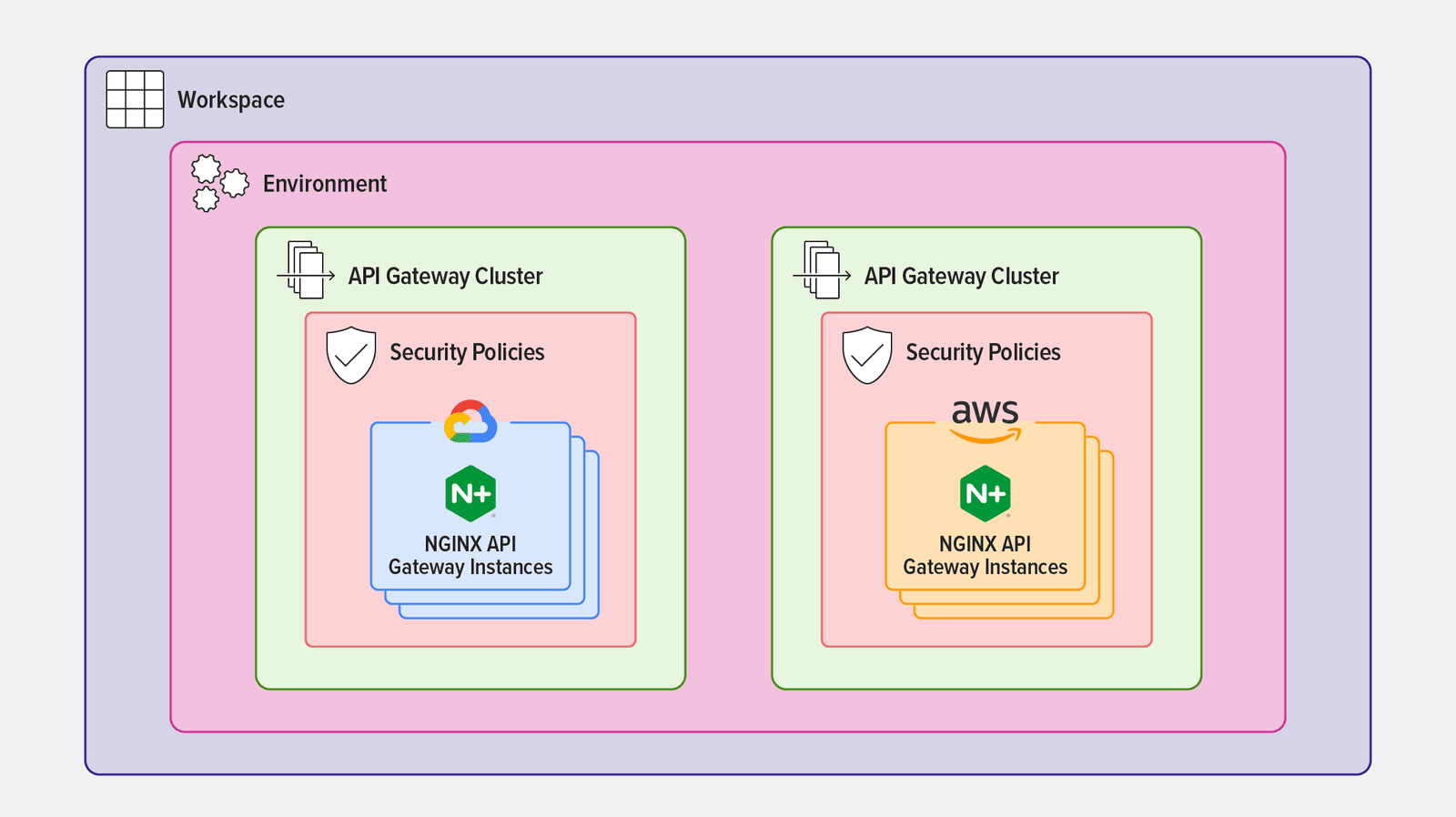

In API Connectivity Manager, an Environment is a logical grouping of dedicated resources (such as API gateways or API developer portals). You can create multiple Environments per Workspace (up to 25 at the time of writing); they usually correspond to different stages of app development and deployment such as coding, testing, and production, but they can serve any purpose you want.

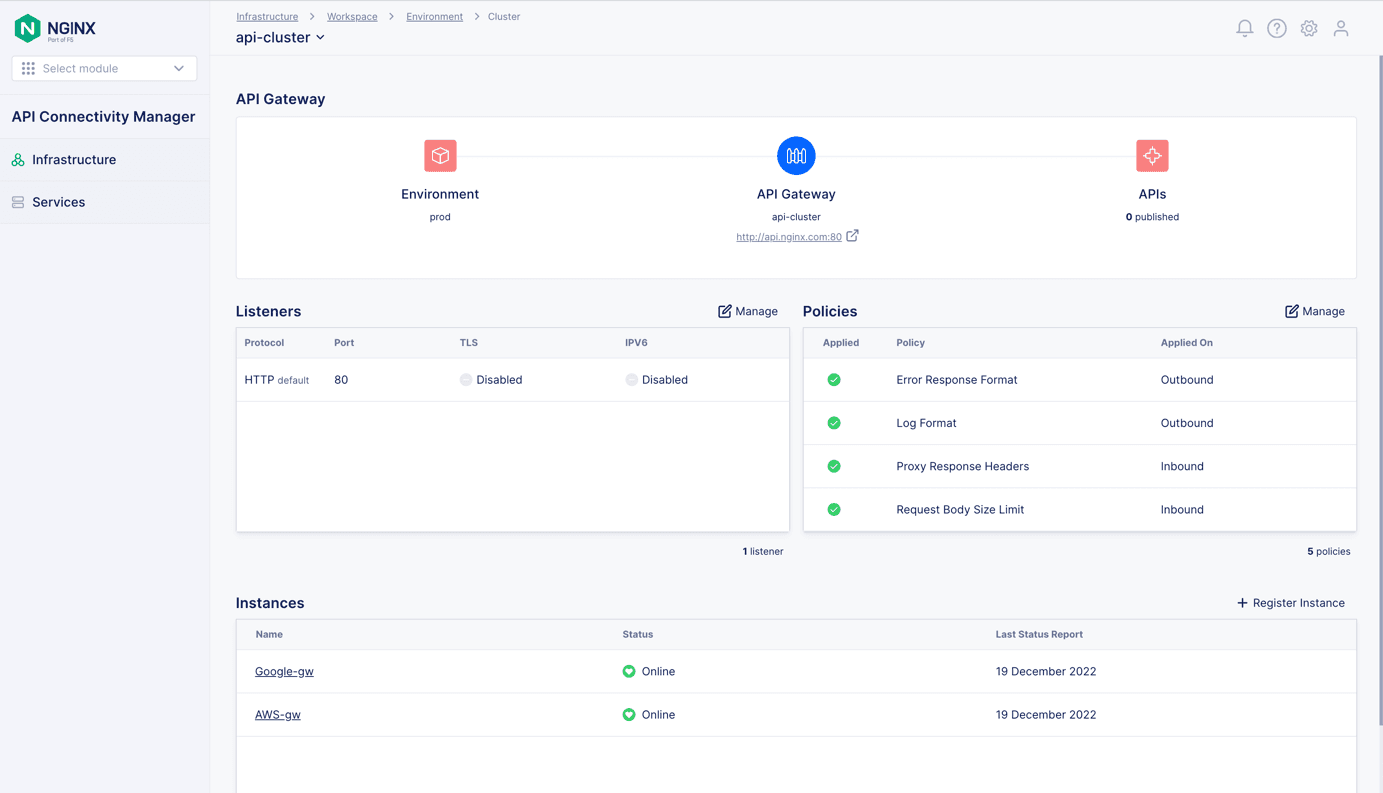

Within an Environment, an API Gateway Cluster is a logical grouping of NGINX Plus instances acting as API gateways. A single Environment can have multiple API Gateway Clusters which share the same hostname (for example, api.nginx.com, as in this tutorial). The NGINX Plus instances in an API Gateway Cluster can be located in more than one type of infrastructure, for example in multiple clouds.

There are two ways to configure an Environment in API Connectivity Manager for active‑active high availability of API gateways:

- With one API Gateway Cluster

- With multiple API Gateway Clusters

The primary reason to deploy multiple API Gateway Clusters is so that you can apply a different set of security policies to each cluster.

In Deploy NGINX Plus Instances as API Gateways, we deployed two NGINX Plus instances – one in AWS and the other in GCP. The tutorial uses the same instances to illustrate both Environment types (with a single API Gateway Cluster or with multiple API Gateway Clusters); if you want to deploy both Environment types in a single Workspace you would need to create additional NGINX Plus instances for the second Environment.
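Because every gateway instance in the Environment answers for the same hostname (api.nginx.com in this tutorial), it can be useful to check each instance individually once it has joined a cluster. The following is a minimal sketch, not part of the API Connectivity Manager tooling: it only prints curl commands that pin the hostname to one instance at a time with curl's --resolve option. The function name probe_cmd is hypothetical, the two IP addresses are placeholders from documentation-reserved ranges, and HTTPS on port 443 with the path / is an assumption about how your gateways are exposed.

```shell
#!/bin/sh
# Dry-run helper: prints one curl probe per gateway instance.
# Each probe pins the shared hostname to a single instance's IP address
# with curl's --resolve option, so instances can be checked one by one.
# Assumptions: placeholder IP addresses, HTTPS on port 443, path "/".

API_HOSTNAME="api.nginx.com"

# probe_cmd GATEWAY_IP
# Prints a curl command that reports only the HTTP status code returned
# by the instance at GATEWAY_IP (response body is discarded).
probe_cmd() {
    echo "curl -s -o /dev/null -w '%{http_code}' --max-time 5 --resolve ${API_HOSTNAME}:443:$1 https://${API_HOSTNAME}/"
}

# Placeholder addresses standing in for the AWS and GCP instances.
for gateway_ip in 192.0.2.10 198.51.100.20; do
    probe_cmd "$gateway_ip"
done
```

Running one of the printed commands against a live gateway prints the HTTP status code returned by that instance. Which code counts as healthy depends on the API proxies you have published.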

Deploy an Environment with One API Gateway Cluster

For an Environment with one API Gateway Cluster, the same security policies apply to all NGINX Plus API gateway instances, as shown in Figure 4.

Create an Environment and API Gateway Cluster

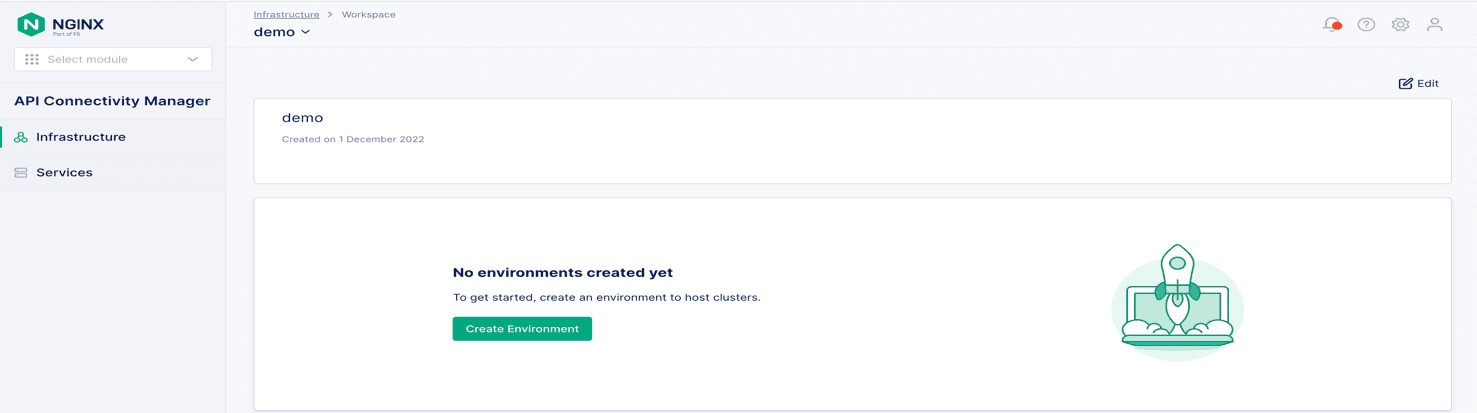

- Navigate to your Workspace and click the Create Environment button, as shown in Figure 5.

Figure 5: Creating a new Environment in an Infrastructure Workspace

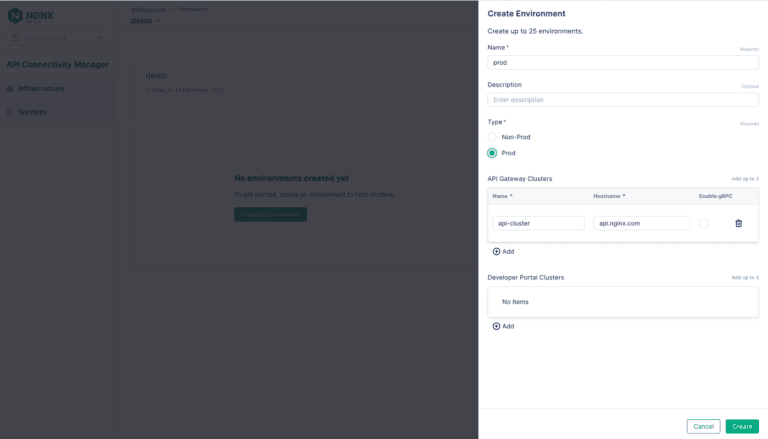

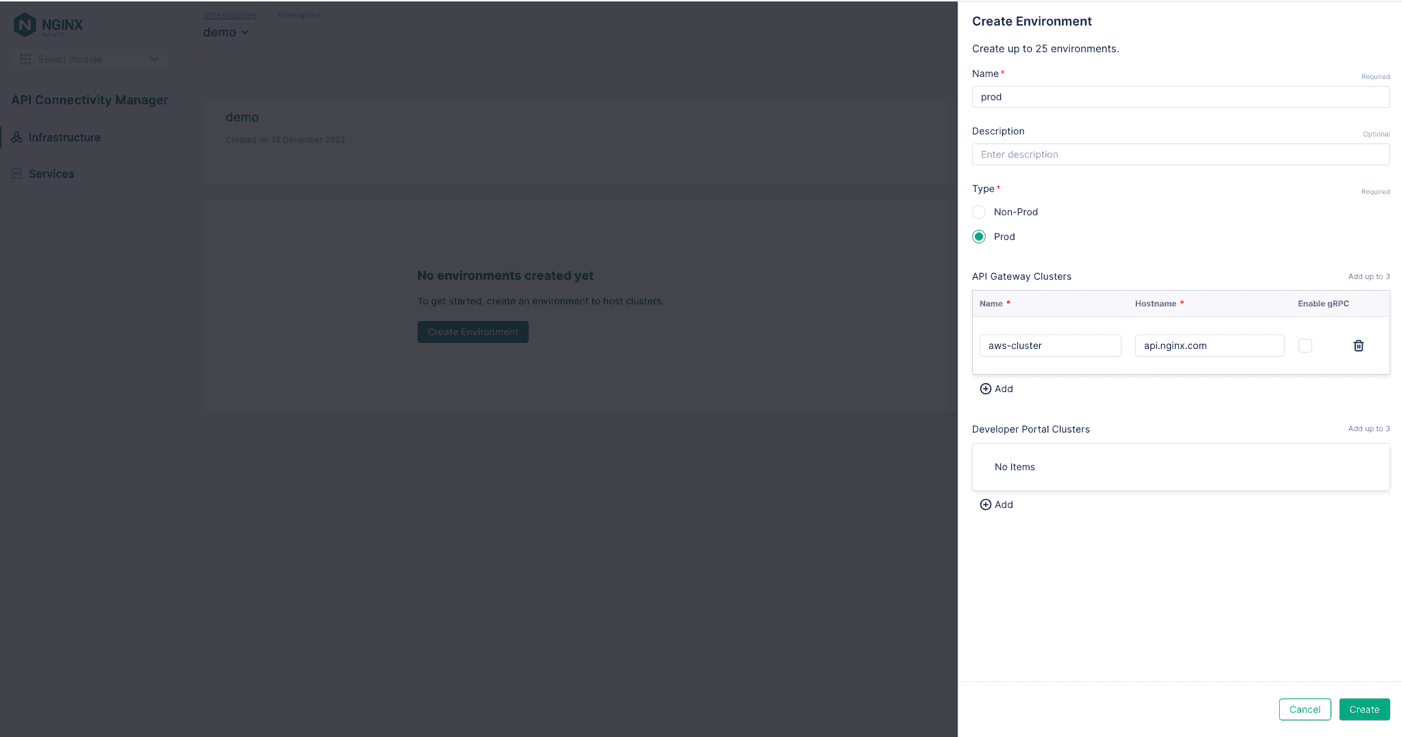

- In the Create Environment panel that opens, fill in the Name field (prod in Figure 6) and optionally the Description field, and select the Environment type (here we’re choosing Prod).

Figure 6: Naming a new Environment and assigning an API Gateway Cluster to it

- In the API Gateway Clusters section, fill in the Name and Hostname fields (api-cluster and api.nginx.com in Figure 6).

- Click the Create button. The Environment Created panel opens to display the command you need to run on each NGINX Plus instance to assign it to the API Gateway Cluster. For convenience, the commands also appear in the next section, Assign API Gateway Instances to an API Gateway Cluster.

Assign API Gateway Instances to an API Gateway Cluster

Repeat on each NGINX Plus instance:

- Use

sshto connect and log in to the instance. - If NGINX Agent is already running, stop it:

$ systemctl stop nginx-agent- Run the command of your choice (either

curlorwget) to download and install the NGINX Agent package:

$ curl [-k] https://<NMS_FQDN>/install/nginx-agent > install.sh && sudo sh -install.sh -g <cluster_name> && sudo systemctl start nginx-agent- or

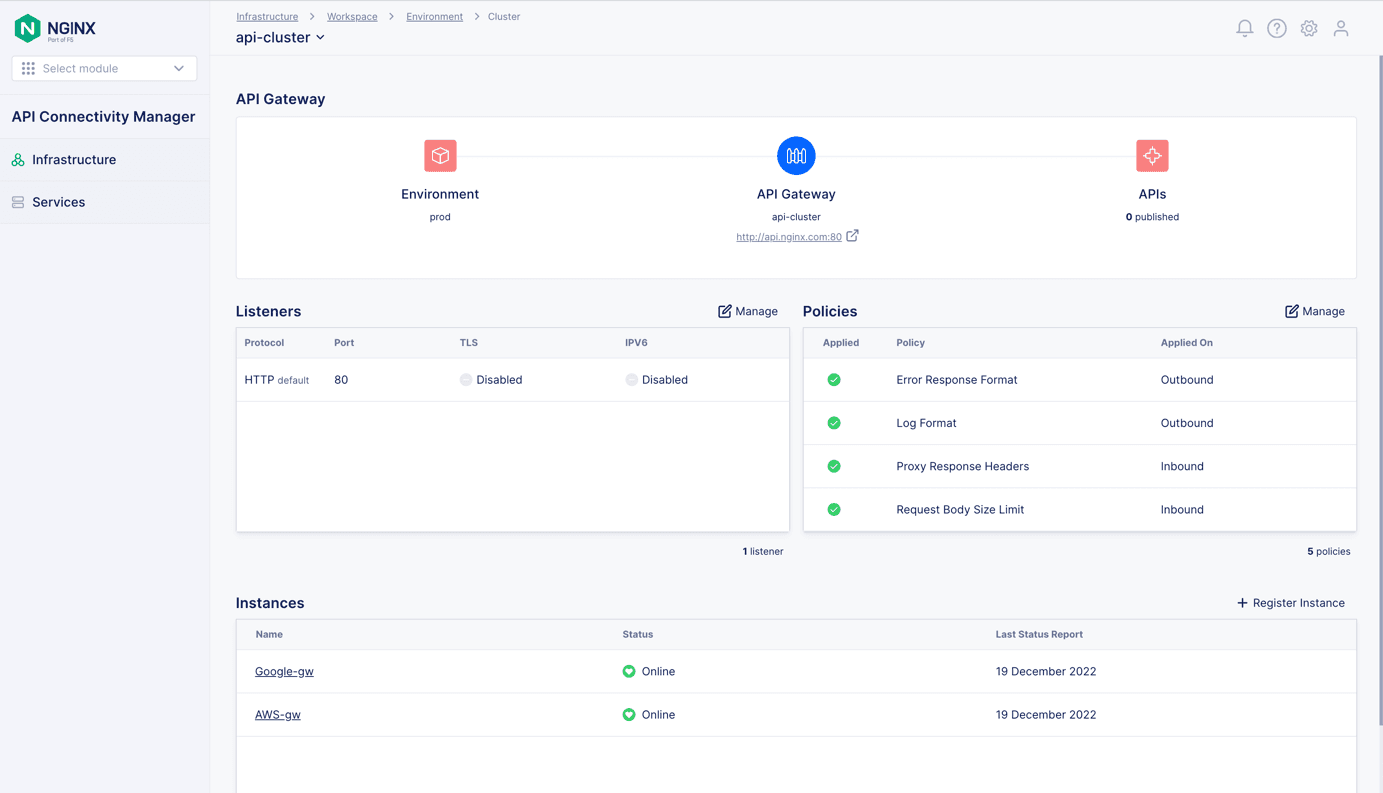

$ wget [--no-check-certificate] https://<NMS_FQDN>/install/nginx-agent --no-check-certificate -O install.sh && sudo sh install.sh -g <cluster_name> && sudo systemctl start nginx-agent- The NGINX Plus instances now appear in the Instances section of the Cluster window for api-cluster, as shown in Figure 7. Figure 7: A single API Gateway Cluster groups NGINX Plus instances deployed in multiple clouds

- If you didn’t enable mTLS in Install and Configure API Connectivity Manager, add:

- The

‑kflag to thecurlcommand - The

--no-check-certificateflag to thewgetcommand

- The

- For

<NMS_FQDN>, substitute the IP address or fully qualified domain name of your NGINX Management Suite server. - For

<cluster_name>, substitute the name of the API Gateway Cluster (api-clusterin this tutorial).

- If you didn’t enable mTLS in Install and Configure API Connectivity Manager, add:

- Proceed to Apply Global Policies.
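The install command differs between hosts only in the NGINX Management Suite address, the cluster name, and whether the -k flag is needed. As a convenience, the sketch below wraps the curl variant in a small shell function that prints the command rather than running it, so you can review it before pasting it on each instance. The function name agent_install_cmd and the host nms.example.com are hypothetical, chosen only for illustration.

```shell
#!/bin/sh
# Dry-run helper: prints (does not execute) the NGINX Agent install command
# for a given NGINX Management Suite host and API Gateway Cluster.
# Assumption: nms.example.com is a placeholder, not a real server.

# agent_install_cmd NMS_FQDN CLUSTER_NAME [insecure]
# Pass "insecure" as the third argument to add curl's -k flag, which
# skips certificate verification.
agent_install_cmd() {
    nms_fqdn=$1
    cluster_name=$2
    curl_flags=""
    if [ "${3:-}" = "insecure" ]; then
        curl_flags="-k "
    fi
    echo "curl ${curl_flags}https://${nms_fqdn}/install/nginx-agent > install.sh && sudo sh install.sh -g ${cluster_name} && sudo systemctl start nginx-agent"
}

# Print the command for the tutorial's single cluster.
agent_install_cmd "nms.example.com" "api-cluster"
```

To print the variant that skips certificate verification, pass insecure as a third argument, for example agent_install_cmd nms.example.com api-cluster insecure.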

Deploy an Environment with Multiple API Gateway Clusters

For an Environment with multiple API Gateway Clusters, different security policies can apply to different NGINX Plus API gateway instances, as shown in Figure 8.


Create an Environment and API Gateway Cluster

- Navigate to your Workspace and click the Create Environment button, as shown in Figure 9.

Figure 9: Creating a new Environment in an Infrastructure Workspace

- In the Create Environment panel that opens, fill in the Name field (prod in Figure 10) and optionally the Description field, and select the Environment type (here we’re choosing Prod).

Figure 10: Naming a new Environment and assigning the first API Gateway Cluster to it

- In the API Gateway Clusters section, fill in the Name and Hostname fields (in Figure 10, they are aws-cluster and api.nginx.com).

- Click the Create button. The Environment Created panel opens to display the command you need to run on each NGINX Plus instance to assign it to the API Gateway Cluster. For convenience, the commands also appear in the next section, Assign API Gateway Instances to an API Gateway Cluster.

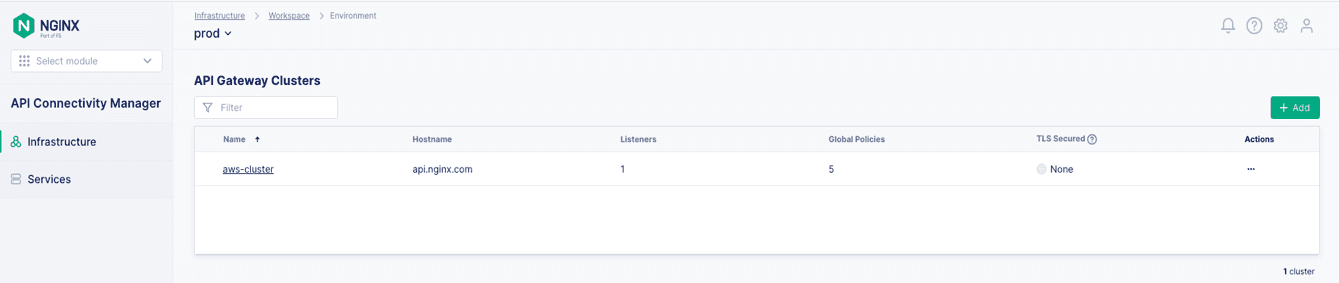

- Navigate back to the Environment tab and click the + Add button in the upper right corner of the API Gateway Clusters section, as shown in Figure 11.

Figure 11: Adding another API Gateway Cluster to an Environment

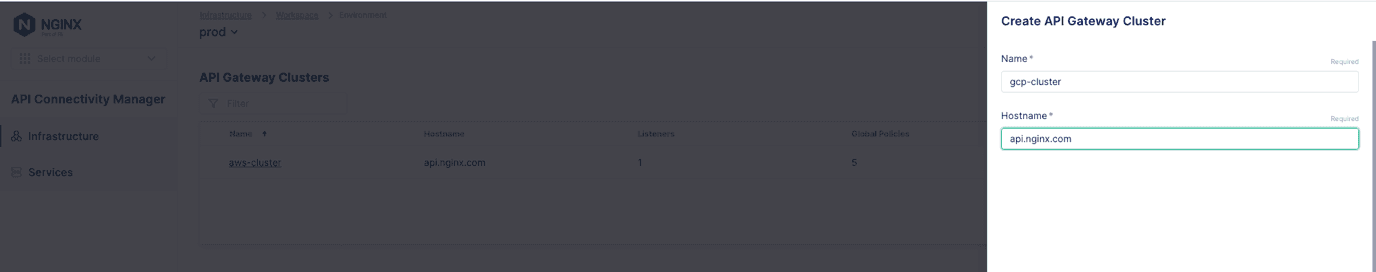

- On the Create API Gateway Cluster panel, fill in the Name field with the second cluster name (gcp-cluster in Figure 12) and the Hostname field with the same hostname as for the first cluster (api.nginx.com).

Figure 12: Adding the second API Gateway Cluster to an Environment

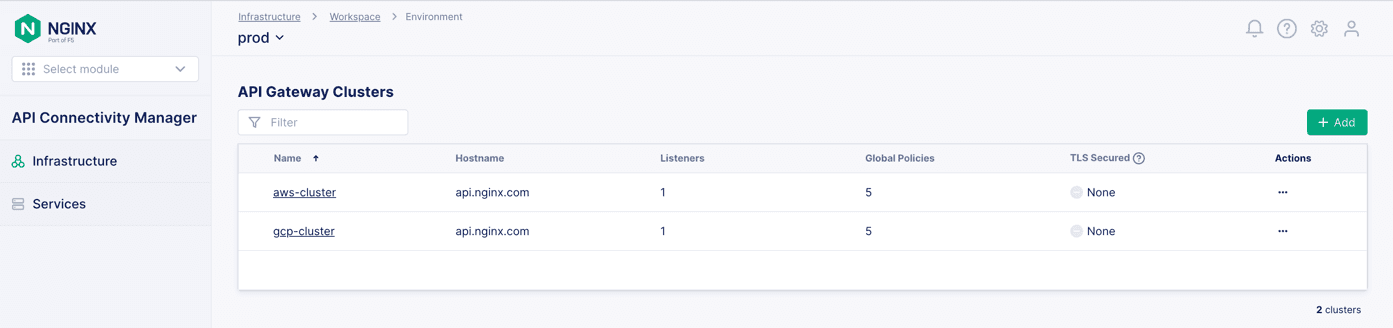

The two API Gateway Clusters now appear in the API Gateway Clusters section for the Prod Environment, as shown in Figure 13.

Assign API Gateway Instances to an API Gateway Cluster

Repeat on each NGINX Plus instance:

- Use

sshto connect and log in to the instance. - If NGINX Agent is already running, stop it:

$ systemctl stop nginx-agent- Run the command of your choice (either

curlorwget) to download and install the NGINX Agent package:

$ curl [-k] https://<NMS_FQDN>/install/nginx-agent > install.sh && sudo sh -install.sh -g <cluster_name> && sudo systemctl start nginx-agent- or

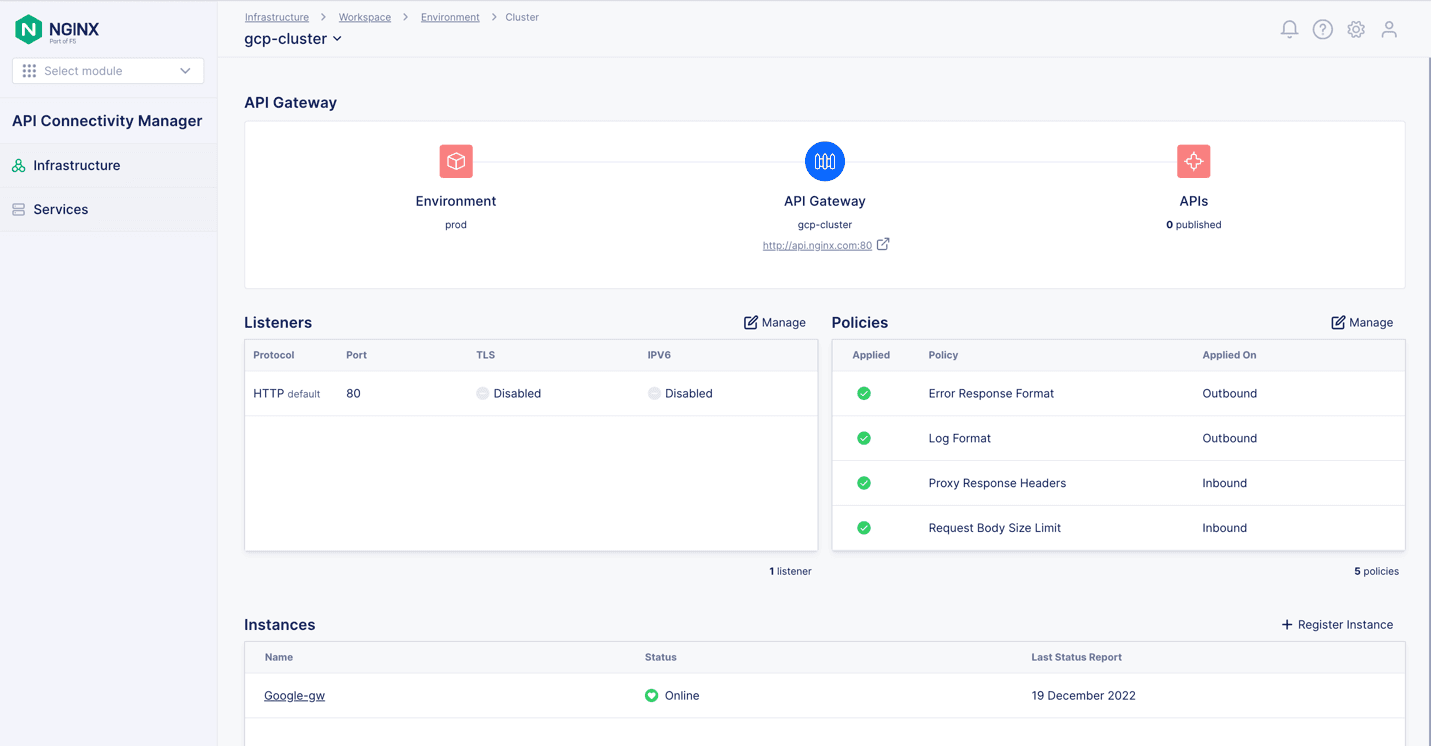

$ wget [--no-check-certificate] https://<NMS_FQDN>/install/nginx-agent --no-check-certificate -O install.sh && sudo sh install.sh -g <cluster_name> && sudo systemctl start nginx-agent- The appropriate NGINX Plus instance now appears in the Instances section of the Cluster windows for aws‑cluster (Figure 14) and gcp‑cluster (Figure 15). Figure 14: The first of two API Gateway Clusters in an Environment that spans multiple clouds

Figure 15: The second of two API Gateway Clusters in an Environment that spans multiple clouds Apply Global Policies Now you can add global policies which apply to all the NGINX Plus instances in an API Gateway Cluster. For example, to secure client access to your APIs you can apply the OpenID Connect Relying Party or TLS Inbound policy. To secure the connection between an API gateway and the backend service which exposes the API, apply the TLS Backend policy. For more information about TLS policies, see the API Connectivity Manager documentation. Conclusion Managing multi‑cloud and hybrid architectures is no easy task. They are complex environments with fast‑changing applications that are often difficult to observe and secure. With the right tools, however, you can avoid vendor lock‑in while retaining the agility and flexibility you need for delivering new capabilities to market faster. As a cloud‑native tool, API Connectivity Manager from NGINX gives you the scalability, visibility, governance, and security you need to manage APIs in multi‑cloud and hybrid environment. Start a 30‑day free trial of NGINX Management Suite, which includes access to API Connectivity Manager, NGINX Plus as an API gateway, and NGINX App Protect to secure your APIs.

Figure 15: The second of two API Gateway Clusters in an Environment that spans multiple clouds Apply Global Policies Now you can add global policies which apply to all the NGINX Plus instances in an API Gateway Cluster. For example, to secure client access to your APIs you can apply the OpenID Connect Relying Party or TLS Inbound policy. To secure the connection between an API gateway and the backend service which exposes the API, apply the TLS Backend policy. For more information about TLS policies, see the API Connectivity Manager documentation. Conclusion Managing multi‑cloud and hybrid architectures is no easy task. They are complex environments with fast‑changing applications that are often difficult to observe and secure. With the right tools, however, you can avoid vendor lock‑in while retaining the agility and flexibility you need for delivering new capabilities to market faster. As a cloud‑native tool, API Connectivity Manager from NGINX gives you the scalability, visibility, governance, and security you need to manage APIs in multi‑cloud and hybrid environment. Start a 30‑day free trial of NGINX Management Suite, which includes access to API Connectivity Manager, NGINX Plus as an API gateway, and NGINX App Protect to secure your APIs.

- If you didn’t enable mTLS in Install and Configure API Connectivity Manager, add:

- The

‑kflag to thecurlcommand - The

--no-check-certificateflag to thewgetcommand

- The

- For

<NMS_FQDN>, substitute the IP address or fully qualified domain name of your NGINX Management Suite server. - For

<cluster_name>, substitute the name of the appropriate API Gateway Cluster (in this tutorial,aws‑clusterfor the instance deployed in AWS andgcp‑clusterfor the instance deployed in GCP).

- If you didn’t enable mTLS in Install and Configure API Connectivity Manager, add:

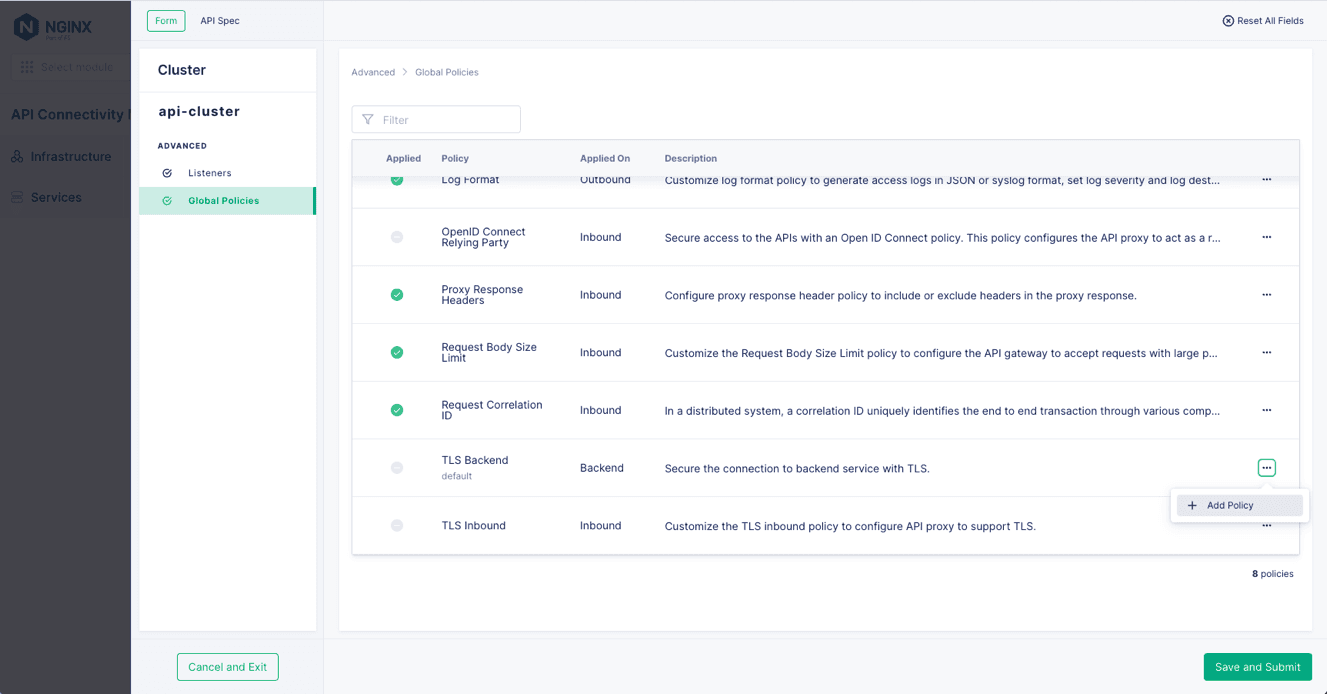

- Navigate to the Cluster tab for the API Gateway where you want to apply a policy (api-cluster in Figure 16). Click the Manage button that’s above the right corner of the Policies table. Figure 16: Managing policies for an API Gateway Cluster

- Click Global Policies in the left navigation column, and then the … icon in the rightmost column of the row for the policy (TLS Backend in Figure 17.) Select + Add Policy from the drop‑down menu. Figure 17: Adding a global policy to an API Gateway Cluster
