5 things CISOs should know about AI

Explore 2026 trends in AI inferencing and multi-model AI to plan for their infrastructure and security implications.

Self-managed inferencing and multi-model AI are exploding, as shown in survey results from more than 1,100 global IT decision makers. Consider these five infrastructure implications that security leaders can’t afford to ignore:

Organizations today use an average of seven AI models, and 52% chain or orchestrate multiple models. That brings new security risks such as routing manipulation, data exfiltration through model chains, and inconsistent policy enforcement across models. Model routing is infrastructure in its own right, and it requires the same kind of observability and controls used for routing application traffic.

Machine-triggered automation, such as API calls, policy updates, configurations, and even infrastructure changes, is about to exceed human-triggered operational traffic. But most environments are not prepared to monitor or control those requests at machine speeds and volumes. A new category of system activity—automation traffic—requires new management solutions.

More than half of organizations already use shared infrastructure, such as gateways and load balancers, to deliver and protect AI inference. Another 37% plan to do so within a year. Security teams, largely still focused on model governance, must realize that the security boundary has moved to the inference path. When prompts act like requests, responses like app outputs, and models like services, that inference traffic needs to be delivered, inspected, authenticated, and governed like any other critical app interaction.

AI models are no longer experiments or isolated capabilities. Today they are dependencies inside production applications, and inference endpoints are accessed the same way apps call APIs or microservices. Once AI sits in the application path, it must be delivered, observed, and protected like any other runtime dependency.

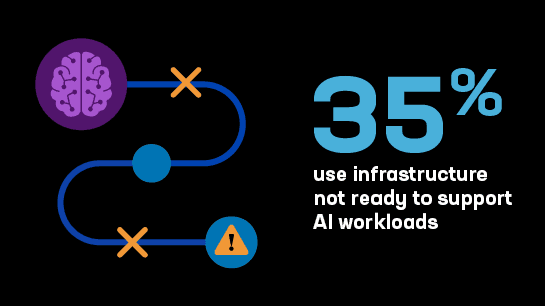

More than a third of organizations, and 40% of senior IT leaders, say their current infrastructure lacks the compute, storage, networking, or other performance capacity needed to support AI at scale. Their security architectures may be even less prepared. Environments not designed to support inference or agent orchestration were also not designed to observe and protect the related traffic. Those blind spots create security risks.

Protect your organization and plan ahead to successfully deliver AI apps at scale. Download these insights as an infographic to share with decision makers in your organization.

Learn more about current AI trends and their security implications in the full 2026 F5 State of Application Strategy Report.