AI Security Trends: Key Insights from Our Recent Webinar

Want to see how far AI has advanced? Just consider the Will Smith Eating Spaghetti test. In less than five years, we’ve gone from limited, text-based AI to increasingly widespread agentic AI usage.

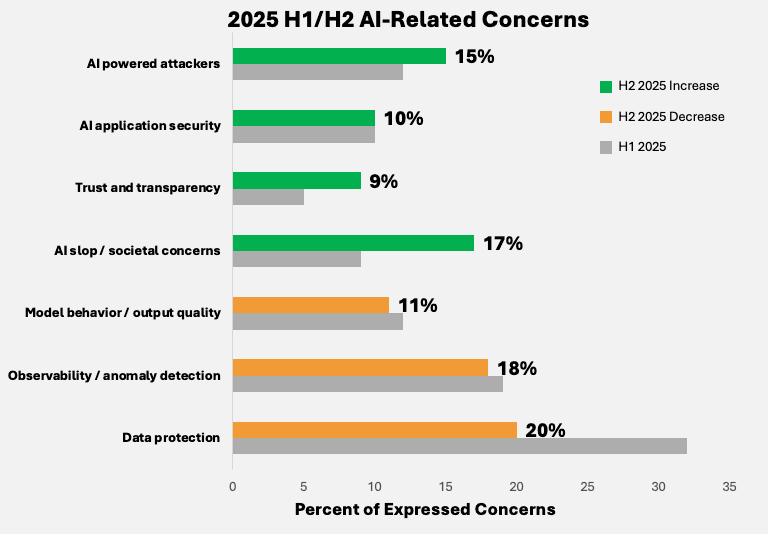

Today, many organizations have incorporated AI in some capacity into their web applications. But how do they adequately protect their AI applications, especially in such a rapidly changing environment? As global AI maturity has increased, AI-related concerns have become more evenly distributed among security practitioners.

During our recent webinar, "AI Security That Keeps Pace," Mark Toler and David Warburton shared groundbreaking insights about the challenges and solutions shaping this new reality. Here’s a summary of the key takeaways from their thought-provoking session.

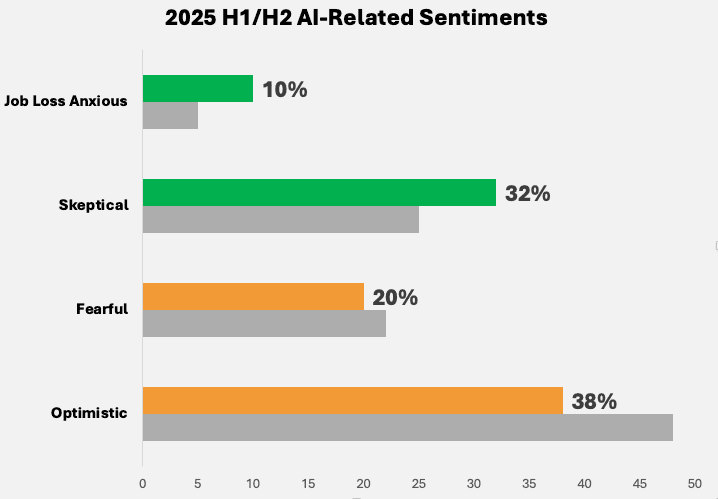

1. The Evolving Sentiment in AI Security

Mark began by exploring the current sentiment among security practitioners regarding AI. Through an ensemble of AI language models trained to analyze user conversations in top cybersecurity forums, his team distilled pressing concerns.

Overall, he noted an industry shift from unmanaged AI deployments to customer-managed solutions, signifying a growing maturity in handling AI integrations. However, concerns like shadow AI and excessive agency in AI systems remain at the forefront.

2. The Rapid Expansion of AI-Induced Threats

The webinar highlighted the evolving threat landscape, drawing attention to how AI systems are now enabling more sophisticated attacks. From using AI to conduct blind SQL injection attacks to automating vulnerability discovery and exploitation at scale, attackers are leveraging these advanced tools in unprecedented ways. This has democratized complex cyberattacks, enabling even lower-skilled attackers to pose significant threats.

3. Practical Solutions: Guardrails and Red Teaming

To counter these threats, solutions such as F5 AI Guardrails and AI Red Team play a crucial role. While traditional penetration testing struggles to keep up with the sheer breadth of AI attack patterns, AI red teaming is emerging as a foundational tool. This AI-driven approach accelerates testing and simulates real-world attack scenarios, helping practitioners bolster their defenses proactively.

Additionally, guardrails serve as a critical tool for preventing AI systems from exceeding their programmed scope. Mark explained the importance of "least privilege access" as an internal practice for AI security, ensuring that systems only have access to what’s necessary for their function. This, combined with customizable AI guardrails, provides a robust defense against prompt injection, jailbreak attacks, and other vulnerabilities.
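To make the least privilege idea concrete, here is a minimal sketch of a deny-by-default tool allowlist for an AI agent. It is an illustration only, not how F5 AI Guardrails works: `AgentPolicy`, `call_tool`, `REGISTRY`, and the tool names are all hypothetical and were not shown in the webinar.

```python
from dataclasses import dataclass, field


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass
class AgentPolicy:
    # Hypothetical policy object: the only tools this agent may call.
    name: str
    allowed_tools: frozenset = field(default_factory=frozenset)


def call_tool(policy, tool_name, registry, **kwargs):
    # Deny by default: anything not explicitly allowlisted is rejected,
    # even if the tool exists in the registry.
    if tool_name not in policy.allowed_tools:
        raise ToolNotPermitted(f"{policy.name} may not call {tool_name!r}")
    return registry[tool_name](**kwargs)


# Illustrative registry of tools the application exposes (assumed names).
REGISTRY = {
    "search_docs": lambda query: f"results for {query}",
    "delete_record": lambda record_id: f"deleted {record_id}",
}

# A support assistant only needs to read documentation, so that is all it gets.
support_bot = AgentPolicy("support_bot", frozenset({"search_docs"}))
```

With this policy, `call_tool(support_bot, "search_docs", REGISTRY, query="vpn")` succeeds, while a request for `delete_record` raises `ToolNotPermitted`, even if a prompt injection convinces the model to ask for it.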

4. The Next Frontier: Distinguishing Human and AI Traffic

Another pressing subject discussed was the challenge of distinguishing between human and AI traffic. As non-human identities become more prevalent, new protocols like Model Context Protocol (MCP) and Agent Gateway Protocol (AGP) are promising to address this growing concern. However, there is still no industry consensus, making this an area to watch as it evolves.

5. Quantifying LLM Security

During the webinar, David highlighted F5’s monthly CASI and ARS scores, which assess dozens of major LLMs for performance, vulnerability, and security efficacy. These scores help organizations compare models on cost, speed, and security features so they can select the models best suited to their use cases. David also walked through various LLMs on the market, emphasizing the importance of balancing affordability with high performance and security.
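As a rough sketch of how a team might weigh such trade-offs, the snippet below ranks candidate models by a weighted sum of normalized metrics. Everything in it is an assumption for illustration: the model names, metric names, weights, and numbers are placeholders, not real CASI or ARS results, and this is not how F5 computes its scores.

```python
def rank_models(models, weights):
    """Return model names sorted by weighted score, best first.

    Each metric is assumed to be pre-normalized to 0..1, higher is better.
    """
    def score(metrics):
        return sum(weights[key] * metrics[key] for key in weights)

    return sorted(models, key=lambda name: score(models[name]), reverse=True)


# Placeholder values for illustration only; not real benchmark data.
candidates = {
    "model-a": {"security": 0.9, "speed": 0.5, "affordability": 0.5},
    "model-b": {"security": 0.6, "speed": 0.8, "affordability": 0.8},
}

# Two hypothetical priorities: a regulated workload vs. a cost-sensitive one.
security_first = {"security": 0.6, "speed": 0.2, "affordability": 0.2}
cost_first = {"security": 0.2, "speed": 0.3, "affordability": 0.5}
```

Under these placeholder numbers, `rank_models(candidates, security_first)` puts `model-a` first, while `rank_models(candidates, cost_first)` puts `model-b` first: the best model depends on the use case.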

Conclusion:

AI holds immense potential but comes with equally significant risks. Security teams need to separate hype from reality when it comes to what both their AI and their AI security tools can actually provide.

By leveraging cutting-edge tools such as AI Guardrails and AI Red Team, and by adopting principles like least privilege access, organizations can stay ahead in this rapidly changing landscape. The future of AI security lies in proactive measures, strong governance, and an evolving set of best practices.

Interested in delving deeper? Check out the full webinar on demand to hear directly from Mark and David.