The State of Application Strategy in 2026

Explore the state of AI and app strategy in 2026, including trends in AI inferencing, multi-model AI, and AI security.

Get the report delivered to your inbox

Self-managed inferencing and multi-model AI are now the norm:

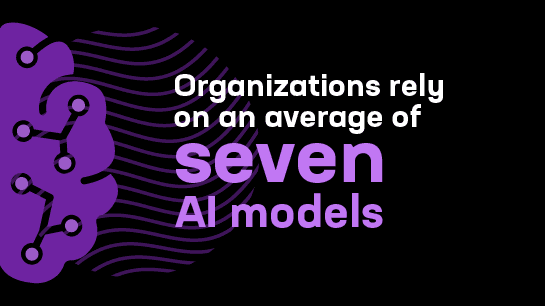

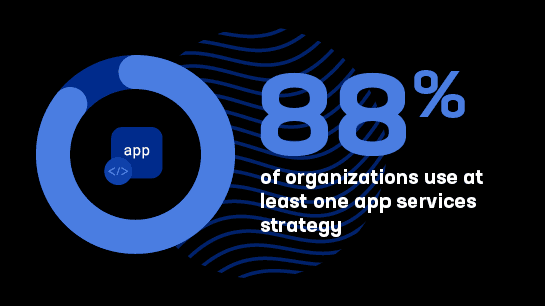

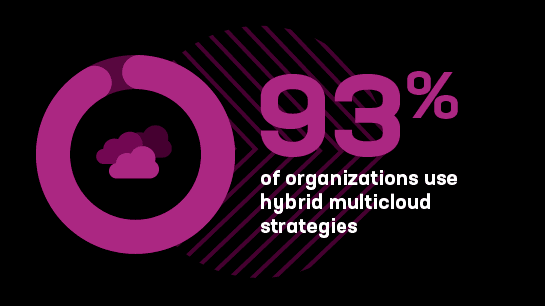

Multi-model AI offers benefits but adds complexity and creates delivery, security, and management challenges. That’s particularly true for the 93% of organizations operating in hybrid multicloud environments. Failing to plan for current trends, or deploying inadequate point solutions, will likely cause AI deployments to stall or run into scalability problems along the AI application journey.

Read the report to learn how to respond as AI becomes a crucial infrastructure layer and agentic AI introduces new security, observability, and management challenges.

More than 1,100 IT decision makers at global organizations responded to our 12th annual survey to share their current progress, most persistent concerns, and plans for the near future as their businesses evolve. Key findings include:

With 52% of organizations chaining or orchestrating multiple AI models, distributed inferencing parallels distributed app deployment as a source of complexity and security risk. As a result, AI has become a new infrastructure layer to be managed.

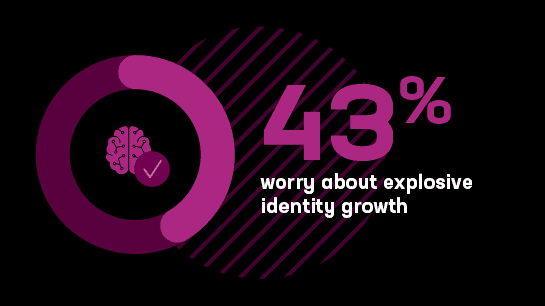

More than nine in 10 respondents report that agentic AI at production levels brings a new set of challenges, which range from credential stuffing and other security issues to auditing the actions of AI agents.

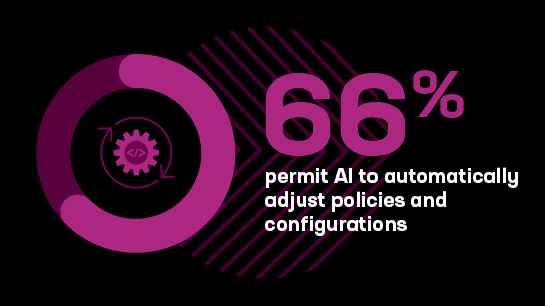

Two-thirds of organizations already use AI in IT operations to automatically adjust policies and configurations. Meanwhile, 67% use it to accelerate automation efforts.

With nearly everyone self-hosting at least some of their inferencing infrastructure, delivering and securing it at scale becomes important. The No. 1 strategy in place today, used by 55% of organizations, is managing authentication for AI services and API access. Observability services rank a close second.

More than nine in 10 organizations operate across at least two of three environment types: public cloud, private cloud, and colocation. Eighty-six percent operate across all three.

Discover the implications of these and other fascinating results on current approaches to app delivery and security in the inferencing age.

Read how 1,100 global IT decision makers are deploying AI at scale

Understand trends and evaluate strategies to keep up with leaders

Identify data-based approaches to reduce management costs and relieve IT burdens

Rapid AI deployments bring critical implications for infrastructure and security. Explore the data to successfully deliver AI apps at scale.

Get the infographic: 2026 State of App Strategy key findings ›

5 things CISOs should know about AI ›

From packets to prompts: Inference adds a new layer to the stack ›

F5 Report 2026: AI inferencing has arrived, complicating an already complex IT landscape ›

Three moves CISOs can't afford to delay ›

2025 State of AI Application Strategy Report: AI Readiness Index ›