Over the past year, chief information security officers (CISOs) have watched the rise of agentic browsing with equal parts curiosity and concern. Unlike traditional browsers, agentic browsers do not just load a webpage; they act on it. They read content, click links, summarize documents, extract data, fill out forms, and even make purchases on users' behalf. In effect, these browsers “surf the web on your behalf,” carrying out autonomous tasks such as sending emails and creating calendar events.

“Agentic browsers blend perception (reading and understanding) with execution (taking actions): what looks like inert content to a human can become an executable instruction to an AI agent.”

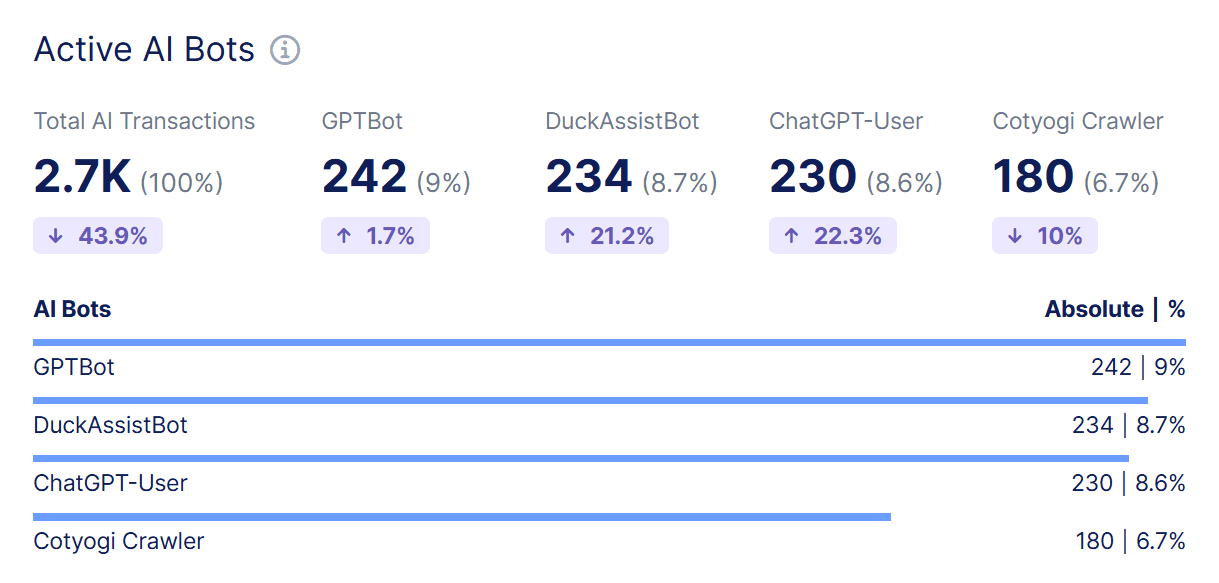

As F5 Distributed Cloud Bot Defense classifies traffic patterns across real applications, one question keeps coming up from security leaders: how is agentic browsing different from traditional browsing, and does it introduce more abuse and risk?

Based on independent, publicly documented research into agentic browsers, the answer appears to be yes: agentic browsing creates new abuse pathways and greater risk, because these tools interpret first- and third-party content as literal instructions. This means they can be hijacked to perform malicious actions with a user’s existing credentials.

How agentic browsing changes the threat model

Traditional browsers render content and rely on human decisions to act. Agentic browsers, by design, blend perception (reading/understanding) with execution (taking actions). That combination changes the trust boundary: what looks like inert content to a human can become an executable instruction to an AI agent. Researchers have shown that attackers can hide instructions in URLs, HTML, comments, and even screenshots. When an agent ingests them, the instructions can be executed inside authenticated sessions.

This is precisely why distinguishing agentic traffic from human traffic matters. Agentic sessions tend to exhibit non-human patterns (machine-speed parsing, autonomous form interactions, non-linear navigation), giving bot mitigation systems the ability to detect and treat them differently, an essential control as the ecosystem hardens.
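
As a rough illustration of the timing signals described above, the sketch below flags a session whose events arrive too quickly or too regularly to be human. The function name `looks_automated` and both thresholds are assumptions made for this example; they are not how Distributed Cloud Bot Defense classifies traffic, and a production system would combine many more signals.

```python
from statistics import mean, pstdev


def looks_automated(timestamps, min_mean_gap=0.25, min_cv=0.15):
    """Return True when event gaps are too fast or too regular for a human.

    timestamps: event times in seconds for one session.
    min_mean_gap and min_cv are hypothetical thresholds for illustration.
    """
    if len(timestamps) < 4:
        return False  # too few events to judge
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap < min_mean_gap:
        return True  # machine-speed interaction
    # Coefficient of variation: low values mean suspiciously even pacing.
    variation = pstdev(gaps) / average_gap
    return variation < min_cv
```

A human-paced session such as `[0.0, 1.3, 4.1, 4.9, 9.2]` is not flagged, while a session firing events every 50 milliseconds, or exactly every two seconds, is.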

Examples of attacks targeting agentic behavior

The following are some of the security and privacy risks introduced by agentic browsers.

CometJacking is a prompt-injection method in which a malicious URL secretly converts a helpful browser assistant into a data thief, using a single URL as a data-exfiltration instruction. The link contains hidden commands instructing the agent to collect data (such as emails and calendar entries), obfuscate the data (as with Base64 encoding), and exfiltrate it to an attacker-controlled endpoint. There’s no need for credential theft, since the agent already has legitimate access.
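
To make the idea concrete, here is a deliberately naive sketch of how a defender might screen a URL's query parameters for instruction-like text before an agent is allowed to act on it. The phrase list and the `flag_url` helper are hypothetical, chosen only for illustration; real payloads can be obfuscated in ways that simple substring matching will miss.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical phrases for illustration only; not a vetted detection list.
SUSPICIOUS_PHRASES = ("ignore previous", "base64", "send to", "exfiltrate")


def flag_url(url):
    """Return (parameter, phrase) pairs where a query value looks like an instruction."""
    hits = []
    query = urlsplit(url).query
    # parse_qsl percent-decodes values, so encoded instructions are checked in the clear.
    for name, value in parse_qsl(query, keep_blank_values=True):
        lowered = value.lower()
        for phrase in SUSPICIOUS_PHRASES:
            if phrase in lowered:
                hits.append((name, phrase))
    return hits
```

An empty result does not mean a link is safe; it only means none of the listed phrases appeared in the decoded query string.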

Hidden instructions embedded in webpages are another security risk. When users ask a browser to “summarize this webpage,” the browser can send content to its LLM without reliably distinguishing untrusted page content—which may contain embedded instructions—from user commands. This allows indirect prompt injections through invisible or hidden text to access email addresses and one-time passwords, and perform unauthorized actions across tabs.
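
One partial countermeasure is to strip content a human would never see before page text reaches the model. The sketch below uses Python's standard `html.parser` to keep only visible text, dropping comments, scripts, and elements hidden with the `hidden` attribute or inline `display:none` / `visibility:hidden` styles. It is a minimal sketch that assumes well-formed HTML and inline styles; it does not catch text hidden through stylesheets, tiny fonts, or matching foreground and background colors, and the class and function names are invented for this example.

```python
from html.parser import HTMLParser

# Elements without a closing tag; they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes that are not inside an obviously hidden element."""

    def __init__(self):
        super().__init__()
        self.hidden_depth = 0  # > 0 while inside a hidden subtree
        self.chunks = []

    @staticmethod
    def _is_hidden(tag, attrs):
        attributes = dict(attrs)
        style = (attributes.get("style") or "").replace(" ", "").lower()
        return (
            tag in ("script", "style")
            or "hidden" in attributes
            or "display:none" in style
            or "visibility:hidden" in style
        )

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.hidden_depth or self._is_hidden(tag, attrs):
            self.hidden_depth += 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.hidden_depth:
            self.hidden_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self.hidden_depth:
            self.chunks.append(text)

    # HTML comments are dropped because handle_comment is left as a no-op.


def visible_text(html):
    """Return the human-visible text of an HTML fragment as one string."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

Even with filtering like this, the remaining page text is still untrusted input and should be kept separate from the user's own command.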

Steganographic screenshot attacks occur when a browser's screenshot optical character recognition (OCR) ability captures hidden, machine-readable text invisible to humans. After extraction through OCR, these “ghost instructions” are interpreted as commands, bypassing traditional browser protections (such as the same-origin policy) and allowing unauthorized access and data theft. A website linking to third-party images might be under an active attack with no perceptible difference in the content it’s serving.

These examples point to systemic, not isolated, issues that are structural to agentic browsing: combining content and command channels invites prompt injection and instruction hijacking unless strict boundaries are in place. Many AI‑native browsers remain insufficiently “battle tested,” and a compromise of the browser could extend to any integrated application or connector.

The key takeaway? Traditional browsing does not treat content as commands; however, agentic browsing does, and recent research indicates that attackers can exploit this difference.

Best practices for mitigating agentic browsing risks

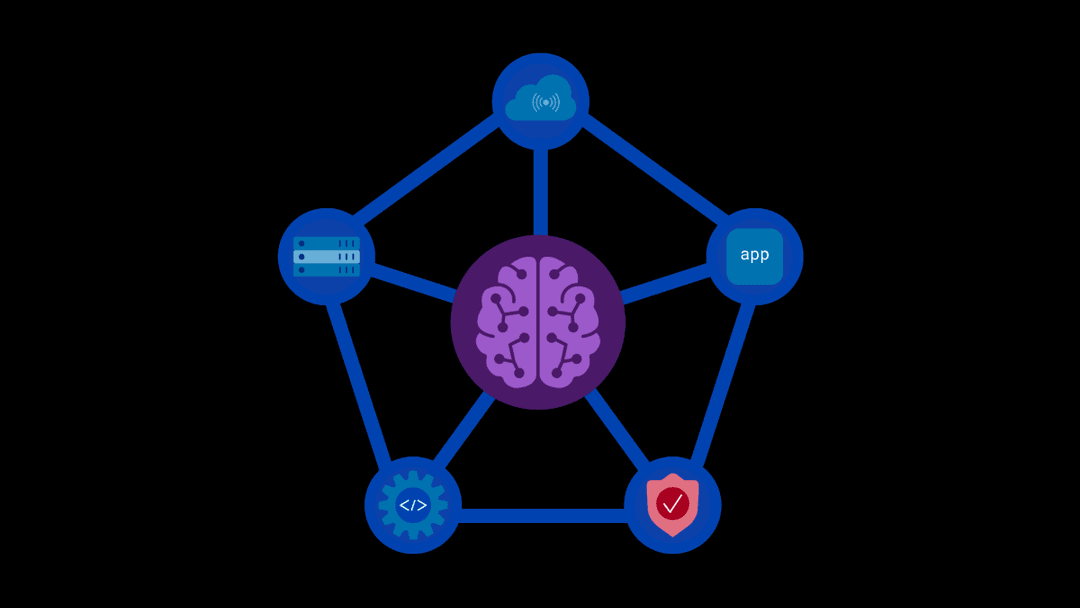

Given the demonstrated exploits against agentic browsers and the likelihood that similar risks exist across other agentic tools, CISOs should adopt an explicit policy and control framework for agentic browsing.

- Enact access and policy controls. This includes restricting or conditionally allowing agentic browsers. Adopt explicit allow/deny lists for AI-driven browsers at the edge, web application firewall (WAF), and application layers. Treat agentic clients as higher risk due to autonomous actions and instruction processing. Also, require step‑up authentication for sensitive operations. Trigger MFA/reauthorization for data exports, profile/credential changes, payment events, and administrative actions to limit the damage if an agent is hijacked in an authenticated session.

- Detect and monitor. Leverage Distributed Cloud Bot Defense to detect and classify agentic traffic, distinguishing human sessions from agentic ones using behavioral and signal-based indicators. Apply adaptive policies to agentic sessions. Also, employ continuous behavioral anomaly monitoring for sequences that are too fast or too consistent for humans. Pair this with risk scoring to drive challenges or temporarily contain sessions.

- Apply application‑level hardening. Be sure to validate all state-changing requests and enforce server-side checks (and never rely solely on client signals). This reduces the success rate of hidden instruction attacks that trick agents into performing harmful actions. Rate‑limit sensitive endpoints to throttle authentication attempts, financial operations, and export/download endpoints to blunt automated abuse at machine speed.

- Minimize sensitive data exposure per view. Design pages so that agents cannot scrape massive quantities of sensitive data in a single request. In addition, inventory AI tools and agentic browsers and maintain an endpoint/application map of which AI tools are installed and which systems they can access. Treat this as part of your attack surface management.

- Use red team tactics for agentic threats. Add test cases for indirect prompt injection, steganographic payloads, malicious URL parameters, and cross-tab data extraction to your offensive security program. This reveals control gaps unique to agentic browsing. Explore F5 AI Red Team to identify attack surfaces and deploy agents that target vulnerabilities in AI models and applications.
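
The access-control and step-up guidance above can be sketched as a small server-side policy function. Everything here is an assumption made for illustration: the operation names, the `RequestContext` fields, the 0-to-1 risk score, and the thresholds are hypothetical and would need to map onto whatever signals your own stack provides.

```python
from dataclasses import dataclass

# Hypothetical operation names mirroring the sensitive actions listed above.
SENSITIVE_OPERATIONS = {"data_export", "credential_change", "payment", "admin_action"}


@dataclass(frozen=True)
class RequestContext:
    operation: str                  # what the request is trying to do
    is_agentic: bool                # classification from a bot-defense layer
    risk_score: float               # assumed scale: 0.0 (benign) to 1.0 (hostile)
    recently_reauthenticated: bool  # True if MFA was completed for this action


def decide(ctx, block_threshold=0.9, challenge_threshold=0.6):
    """Return one of 'block', 'step_up', 'challenge', or 'allow'."""
    # Highest-risk agentic sessions are stopped outright.
    if ctx.is_agentic and ctx.risk_score >= block_threshold:
        return "block"
    # Sensitive operations always need fresh authentication, human or agent.
    if ctx.operation in SENSITIVE_OPERATIONS and not ctx.recently_reauthenticated:
        return "step_up"
    # Moderately risky agentic sessions get an interactive challenge.
    if ctx.is_agentic and ctx.risk_score >= challenge_threshold:
        return "challenge"
    return "allow"
```

Requiring step-up for sensitive operations regardless of client type means a hijacked agent inside an authenticated session still cannot export data or change credentials without the user completing a fresh check.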

Agentic browsing requires a new defensive posture

Because agentic browsing creates distinctive patterns, such as non-human timing, autonomous navigation, and repeated summarization or extraction loops, Distributed Cloud Bot Defense can identify these sessions and respond appropriately through increased authentication, rate limiting, feature downgrades, or outright blocks, based on risk. This visibility is essential as the agentic ecosystem evolves and browser vendors better understand content isolation.
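
Of the responses named above, rate limiting is the simplest to illustrate. The following is a minimal in-memory sliding-window limiter, offered as a sketch rather than a description of how any F5 product enforces limits. The class name, the per-key design, and the explicit `now` argument (passed in so the behavior is easy to test) are all choices made for this example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per key within any window_seconds span."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # key -> timestamps of accepted requests

    def allow(self, key, now):
        """Record and permit the request if the key is under its limit."""
        hits = self._hits[key]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

In practice the key might be a session identifier on an export or payment endpoint, with tighter limits applied to sessions already classified as agentic.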

The referenced exploits against agentic browsers, including weaponized URLs, hidden-page instructions, and steganographic screenshot prompts, show that agentic browsing introduces risks that traditional web security was not designed to handle. In agentic models, seemingly harmless content can become executable instructions, and an authenticated session can turn an AI agent into a powerful insider threat.

Until agentic browsers implement strong boundaries between content and commands, CISOs should treat these clients as high risk by default, enforce clear policies, and rely on advanced bot defense from F5 to detect and manage agentic traffic. Both visibility and enforcement are essential in this new era.

Assess your exposure today—schedule an agentic‑risk review with our security team.
