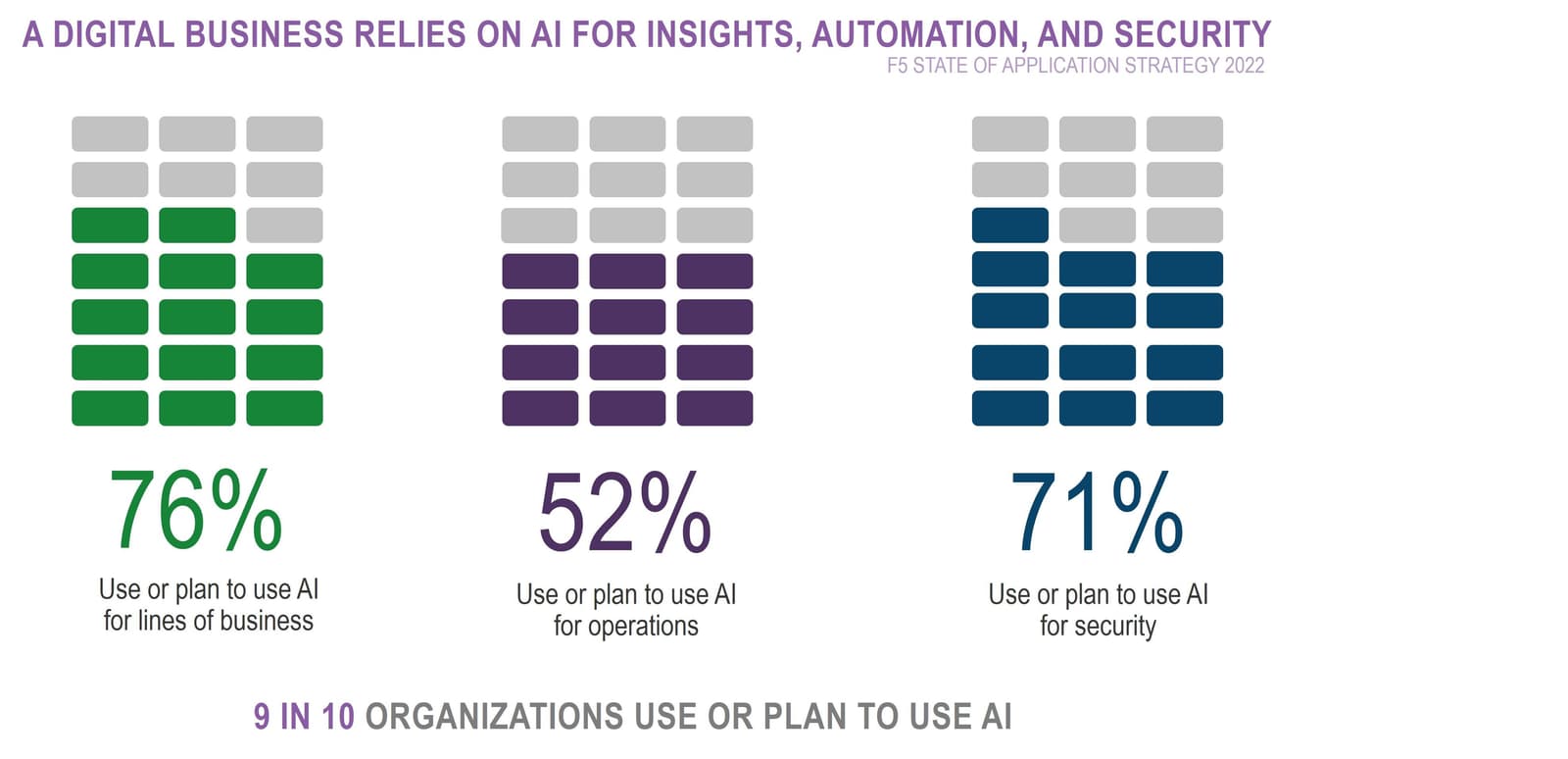

There’s a lot of hype around the use of AI in everything from marketing to recruiting to operations to security. Our annual research indicates that most organizations are eagerly eyeing AI across business, operations, and security.

None of that is a surprise. There is survey upon survey indicating a healthy adoption of AI to support a wide variety of business and IT functions.

What’s not always talked about is how AI is being incorporated into development.

The core premise of AI’s value to the business and security is its ability to recognize patterns and relationships that produce actionable insights. Many don’t consider the “engine” behind that capability, and so never really dig into the details of which AI technologies are being used to uncover those insights.

Machine learning is a specific branch of AI that focuses on data analysis and modeling. Its usage is applicable to security because, given enough time and data, it’s capable of identifying patterns that indicate anomalous behavior in real time. Similarly, it can find obscure relationships in business data that represent opportunities to market products and services.

But machine learning also excels at modeling; that is, executing hundreds of “what if” scenarios to produce a better understanding of the complex relationship between multiple variables. In development (engineering), those variables could be the size of data, memory allocated, speed of I/O, network bandwidth, and virtual machine parameters. Machine learning is quite flexible: once you identify the variables, you can use it to model various combinations of them and discover an “optimal” set.
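To make the “what if” idea concrete, here is a minimal sketch in Python that enumerates every combination of a few variables and keeps the cheapest one. Everything in it is an assumption for illustration: the parameter names, their candidate values, and the `estimated_cost` formula are made up, and a real effort would replace that function with a trained model or an actual performance experiment.

```python
from itertools import product

# Hypothetical search space: names and values are illustrative only.
SEARCH_SPACE = {
    "batch_size_kb": [64, 256, 1024],
    "memory_mb": [512, 1024, 2048],
    "workers": [1, 2, 4],
}


def estimated_cost(batch_size_kb, memory_mb, workers):
    """Toy stand-in for a real measurement or a trained model (made-up formula)."""
    # In this toy model, larger batches and more workers cut processing cost...
    processing = 1000.0 / (batch_size_kb ** 0.5 * workers)
    # ...while memory and workers add an infrastructure charge.
    infrastructure = 0.01 * memory_mb * workers
    return processing + infrastructure


def best_configuration(space, cost_fn):
    """Evaluate every "what if" scenario and return the lowest-cost one."""
    names = list(space)
    scenarios = (dict(zip(names, values)) for values in product(*space.values()))
    return min(scenarios, key=lambda cfg: cost_fn(**cfg))


best = best_configuration(SEARCH_SPACE, estimated_cost)
print(best, estimated_cost(**best))
```

With three values per variable this is only 27 scenarios, so brute force works. The number of combinations grows multiplicatively with each added variable, which is why smarter search strategies become attractive for real systems.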

For example, F5 Distinguished Engineer Laurent Querel and F5 Sr. Architect Sebastien Soudan teamed up and recently published a piece describing how they designed a model to “build an efficient way to get data from PubSub to BigQuery.”

They also explain why machine learning is a better choice for software optimization today, and did it so well I’m just going to quote them:

“Today, software optimization is an iterative and mostly manual process where profilers are used to identify the performance bottlenecks in software code. Profilers measure the software performance and generate reports that developers can review and further optimize the code. The drawback of this manual approach is that the optimization depends on [a] developer's experience and hence is very subjective. It is slow, non-exhaustive, error prone and susceptible to human bias. The distributed nature of cloud native applications further complicates the manual optimization process.

An under-utilized and more global approach is another type of performance engineering that relies on performance experiments and black-box optimization algorithms. More specifically, we aim to optimize the operational cost of a complex system with many parameters.”
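For a feel of what “performance experiments and black-box optimization” can look like, here is a minimal sketch using plain random search in Python. This is not the authors’ method and not how Vertex AI Vizier works internally; random search is simply the easiest black-box strategy to show. The parameter names, their ranges, and the `measure_cost` function are hypothetical placeholders for a real experiment that deploys a configuration and measures its operational cost.

```python
import random

# Hypothetical tunable parameters and their (low, high) ranges.
BOUNDS = {"buffer_mb": (16.0, 512.0), "flush_interval_s": (1.0, 60.0)}


def measure_cost(config):
    """Placeholder experiment: a made-up cost surface, not real measurements."""
    return (((config["buffer_mb"] - 128.0) / 100.0) ** 2
            + ((config["flush_interval_s"] - 10.0) / 10.0) ** 2)


def random_search(measure, bounds, trials, seed=0):
    """Sample configurations at random and keep the cheapest one observed.

    The optimizer only sees inputs and measured costs, never the internals
    of the system, which is what makes it a black-box approach.
    """
    rng = random.Random(seed)  # seeded so repeated runs are reproducible
    best_config, best_cost = None, float("inf")
    for _ in range(trials):
        config = {name: rng.uniform(low, high) for name, (low, high) in bounds.items()}
        cost = measure(config)
        if cost < best_cost:
            best_config, best_cost = config, cost
    return best_config, best_cost


best_config, best_cost = random_search(measure_cost, BOUNDS, trials=200)
print(best_config, best_cost)
```

Each trial stands in for one experiment, so the trial count is effectively the experiment budget. Services built for this purpose aim to find good configurations with far fewer trials than random sampling needs.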

The driving factors behind the use of AI, specifically machine learning, in development are much the same as the factors driving its adoption across IT operations: manual processes are slow, error prone, and susceptible to human bias.

When we talk about the modernization of IT and the steady march toward a fully digital business, that includes development/engineering.

I encourage a read of “Optimize your applications using Google Vertex AI Vizier” even if it’s just to get a feel for the process of designing an appropriate model and what they learned from their experience.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
