Along with the larger container ecosystem, service meshes continue to plow forward toward maturity. We're still in the early days, though, and a variety of approaches are being applied to the problem of managing traffic between containers with service meshes.

We (that's the corporate We) fielded many questions leading up to our official acquisition of NGINX this spring. Several of them focused on areas of "overlap" in technology and solutions. After all, both NGINX and F5 offer proxy-based application delivery solutions. Both NGINX and F5 are building a service mesh. The question was, which one would win?

Because that's the way it often works with acquisitions.

As my counterpart from NGINX and I often reiterated, the technologies pointed to as overlapping were, in our estimation, more complementary than competitive. That's true with our service mesh solutions, as well.

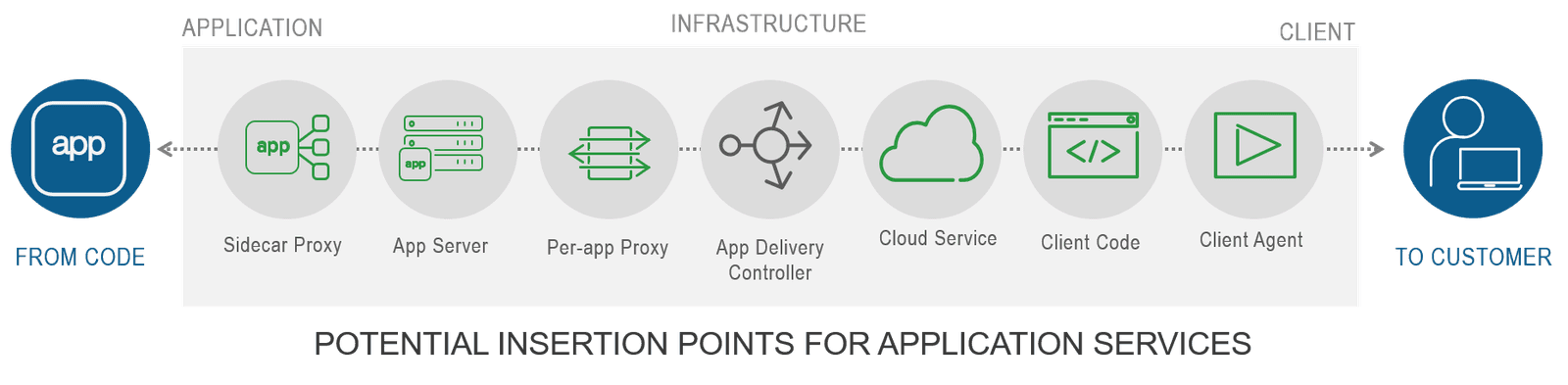

Our logic follows from a shared vision of application delivery. We both see the impact containers and cloud, microservices and a preponderance of security breaches are having on application delivery architectures and models. In the same way there is no longer "one data path to deliver all applications" there is no longer "one application delivery model to deliver all application services." Cloud introduces multiple data paths. Containers introduce a new data path. Both broaden the possible placement of application services from a network-based proxy (an ADC) to a lengthy list of locations ranging from client to network to server to container to cloud.

As we noted in a post focusing on architectures in our Bridging the Divide series, the choice of where and how one delivers application services depends on many factors. It's not just a choice between vendor implementations or "enterprise versus FOSS"; it's a choice that must factor in location (cloud or on-premises), operational model, and even ease of implementation versus required functionality. Considering the breadth of the delivery path, this provides multiple options for inserting application services.

This is why We view our combined F5 and NGINX portfolio as complementary and not competitive: the overall market for application delivery is no longer competing for placement in a single location but is instead competing for placement in multiple locations.

Service meshes are designed to scale, secure, and provide visibility into container environments. Because the technology is nascent and rapidly evolving, multiple models are emerging. One is based on the use of a sidecar proxy (Envoy has emerged as a leading CNCF project and the industry-standard sidecar proxy) and the other takes advantage of per-app proxies, a la NGINX Plus.

We currently plan on supporting both because customers have very strong opinions about their infrastructure choices when it comes to containers. Some prefer Istio and Envoy and others are standardized on everything NGINX.

The number of components that must be operated and managed in a container environment is such that existing expertise in a technology is an important factor in choosing a service mesh. Organizations that have standardized on NGINX for their infrastructure are naturally likely to gravitate toward an NGINX service mesh solution because it comprises all NGINX software, from the NGINX proxy or NGINX Unit to NGINX Controller. Existing operational expertise in NGINX and its open source ecosystem can mean less friction and fewer delays in deployment.

Other organizations have the same views on alternative open source solutions like Istio and Envoy. Aspen Mesh makes use of Envoy and is implemented atop Istio, so it's a more natural fit for organizations with existing investments in the underlying technology. Aspen Mesh is a tested, hardened, packaged, and vetted distribution of Istio. It adds several features on top of Istio, including a simpler user experience through the Aspen Mesh dashboard, a policy framework that allows users to specify, measure, and enforce business goals, and tools such as Istio Vet and Traffic Claim Enforcer. Aspen Mesh, like NGINX, also integrates well with F5 BIG-IP.

Both NGINX and Aspen Mesh offer management and visualization of Kubernetes clusters. Aspen Mesh and NGINX both offer their solution as an on-premises option. Both provide tracing and metrics that are critical to addressing the issue of visibility, a top production challenge noted by 37% of organizations in the State of Kubernetes Report from Replex.

Organizations that prefer a sidecar proxy-based approach to service mesh will prefer Aspen Mesh. Organizations that believe per-app proxy-based service meshes best suit their needs will prefer NGINX.

Your choice depends on a variety of factors and We think this emerging space is important enough to continue to support choices that address different combinations of needs and requirements.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
