As AI agents mature from copilots into autonomous actors, the industry is converging on a shared abstraction: agent skills.

Agent skills formalize what an agent knows how to do. They package instructions, expected behaviors, and declared tool usage into portable artifacts that can be shared, versioned, and reused across agents and platforms. Conceptually, they serve the same role that plugins serve for IDEs and extensions serve for browsers.

What gives this model real weight is that it is no longer experimental or proprietary. Anthropic has published an open Agent Skills standard, positioning skills as a portable, interoperable layer rather than a framework-specific construct. Much like MCP standardized how agents talk to tools, Agent Skills are standardizing how agents acquire capability.

That matters. Open standards have gravity. They attract ecosystems, tooling, registries, and eventually enterprise adoption. When vendors align on a common abstraction, it stops being a curiosity and starts being infrastructure. The launch in December 2025 included partner skills from a variety of vendors (Atlassian, Figma, Canva, Stripe, Notion, and Zapier), demonstrating that the standard already has momentum.

Which means the security implications are no longer hypothetical.

Why agent skills change the security model

Agent skills are intentionally lightweight. They are designed to be human-readable, easy to author, and simple to distribute. Most implementations rely on Markdown for behavior and YAML for metadata, because frictionless sharing is the goal.

From a developer productivity standpoint, that’s a win.

From a security standpoint, it creates a familiar but dangerous pattern: executable intent delivered as content.

Skills are loaded at runtime. They operate inside the agent’s reasoning loop. They can influence planning, tool selection, and execution order. They are often fetched dynamically and composed transitively. In other words, they behave less like configuration files and more like supply-chain inputs.

Crucially, skills do not enforce anything. They declare what an agent would like to do. They do not constrain what the agent can actually do.

That distinction is everything.
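To make the distinction concrete, here is a minimal Python sketch, assuming a skill shaped as YAML front matter over a Markdown body. The `allowed-tools` field, the tool names, and `naive_dispatch` are hypothetical, and the front-matter parser is hand-rolled only to keep the example dependency-free. The runtime reads the declaration, but nothing ties dispatch to it.

```python
# A minimal sketch, not any real agent runtime. The skill text, the
# "allowed-tools" field, and all function names are illustrative assumptions.

SKILL_MD = """\
---
name: release-notes
description: Summarize merged pull requests into release notes.
allowed-tools: read_repo summarize
---
Read the merged PRs for the release, then draft concise notes.
"""


def parse_front_matter(text: str) -> dict[str, str]:
    """Parse flat 'key: value' pairs between the leading '---' fences."""
    lines = text.splitlines()
    if not lines or lines[0] != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line == "---":
            break
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta


# Tools the runtime has wired up (hypothetical stubs).
TOOLS = {
    "read_repo": lambda args: f"read {args['repo']}",
    "summarize": lambda args: f"summary of {args['text']}",
    "send_email": lambda args: f"emailed {args['to']}",
}


def naive_dispatch(tool_name: str, args: dict) -> str:
    # Runs whatever tool the model asked for. Nothing here consults
    # the skill's declared tools.
    return TOOLS[tool_name](args)


meta = parse_front_matter(SKILL_MD)
declared = set(meta.get("allowed-tools", "").split())

# A prompt-injected plan asks for a tool the skill never declared...
result = naive_dispatch("send_email", {"to": "attacker@example.com"})

print("declared:", sorted(declared))  # declared: ['read_repo', 'summarize']
print("ran:", result)                 # ran: emailed attacker@example.com
# ...and it runs anyway: the declaration described intent, not a limit.
```

Whether a given runtime honors a field like this is up to that runtime; the skill itself has no way to insist.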

Once a skill is active, the traditional “shift left” controls developers rely on become largely irrelevant. There is no pull request for an agent’s internal plan. There is no human code review before a tool call fires. There is no pause between reasoning and execution.

Development, for agents, happens at runtime.

The enforcement gap skills don’t fill

It’s tempting to treat agent skills as a security control. After all, they list required tools and expected behavior. It feels like a natural place to insert guardrails.

But skills live in the wrong place in the stack.

They sit inside the agent. They are interpreted by the same model that is optimizing for completion and progress. Asking a skill to enforce security is equivalent to asking the agent to restrain itself. That has never worked reliably, and it never will.

More importantly, skills have no authority. They cannot revoke access, throttle execution, inspect side effects, or prevent data exfiltration. At best, they provide hints. At worst, they provide a false sense of control.

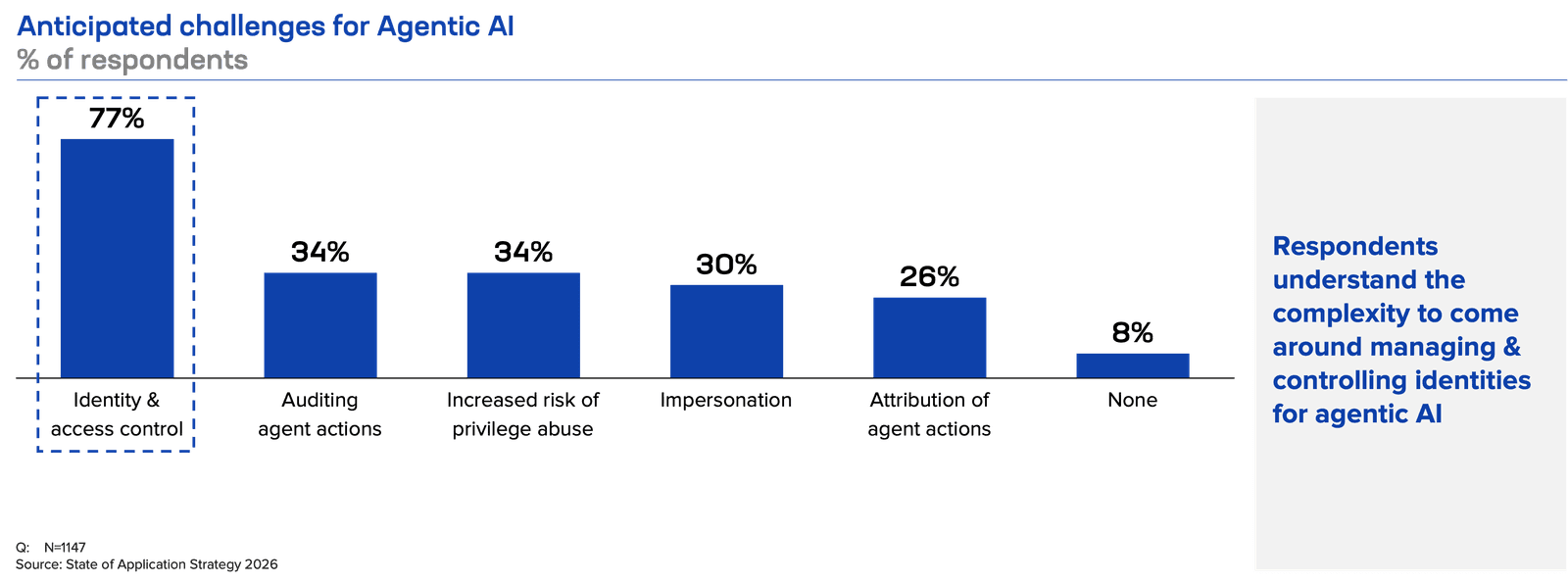

Security cannot live at the level of intent description. It has to live at the level of capability execution. In more familiar terms, this is access control and identity management applied to autonomous systems. Our customers recognize this as a significant challenge related to agentic AI.

Why the tool layer is the only real enforceable boundary

Agents don’t cause harm by thinking incorrectly. They cause harm when thought turns into action at the tool boundary.

Every meaningful agent action, from calling an API to writing code, from deploying infrastructure to querying a database or sending data to another system, crosses a tool interface. That interface is where autonomy meets reality. It is also the last checkpoint an agent cannot bypass, provided the system is designed correctly.

This is why a “tool firewall” emerges as the best control plane for agent security.

A tool firewall mediates every tool invocation. It sits between agents and the systems they touch, enforcing policy before execution rather than auditing after damage is done. Unlike prompt-level controls or skill metadata, it operates outside the agent’s reasoning loop and is therefore not subject to model compliance.
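A minimal sketch of that mediation in Python, with `ToolFirewall`, `PolicyDenied`, and the per-agent allowlist as hypothetical names. In a real deployment the firewall would run as a separate service outside the agent's process; a class stands in for it here. The property that matters is ordering: policy is evaluated before the tool body runs, so a denied call has no side effects.

```python
# A minimal sketch of a tool firewall that mediates every call.
# ToolFirewall, PolicyDenied, the allowlist shape, and the tool stubs
# are hypothetical names for illustration.
from typing import Any, Callable


class PolicyDenied(Exception):
    """Raised when a call is refused before the tool runs."""


class ToolFirewall:
    def __init__(self, tools: dict[str, Callable[[dict[str, Any]], Any]],
                 allowlist: dict[str, set[str]]) -> None:
        self._tools = tools          # only the firewall reaches real tools
        self._allowlist = allowlist  # agent id -> tools it may invoke

    def invoke(self, agent_id: str, tool: str, args: dict[str, Any]) -> Any:
        # Enforce first, execute second: a denied call never reaches the tool.
        if tool not in self._allowlist.get(agent_id, set()):
            raise PolicyDenied(f"{agent_id} may not call {tool}")
        return self._tools[tool](args)


calls: list[str] = []  # records which tool bodies actually executed


def query_db(args: dict[str, Any]) -> list[tuple[str, int]]:
    calls.append("query_db")
    return [("row", 1)]


def drop_table(args: dict[str, Any]) -> str:
    calls.append("drop_table")
    return "dropped"


fw = ToolFirewall(tools={"query_db": query_db, "drop_table": drop_table},
                  allowlist={"report-agent": {"query_db"}})

print(fw.invoke("report-agent", "query_db", {"sql": "SELECT 1"}))  # [('row', 1)]
try:
    fw.invoke("report-agent", "drop_table", {"name": "users"})
except PolicyDenied as err:
    print("blocked:", err)  # blocked: report-agent may not call drop_table
print(calls)  # ['query_db']
```

An agent with no allowlist entry gets an empty set, so the default is deny rather than allow.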

This is the “MCP security” meets “AI guardrails” layer. MCP defines how agents talk to tools. The tool firewall decides whether they’re allowed to and under what constraints. If tools are what allow agents to learn “new tricks,” then the tool firewall is the control that prevents those tricks from burning down the house.

This is not a new idea conceptually. It is the same pattern that underpins API gateways, service meshes, and zero trust architectures. What’s new is the actor on the other side of the interface.

In practice, a tool firewall functions as a broker. Agents never hold direct credentials to external systems. They request actions. The firewall decides whether those actions are allowed, under what conditions, and with what constraints.
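One way to sketch the broker pattern, assuming HMAC-signed tokens built from Python's standard library: the broker keeps the signing key, and an agent only ever receives a token scoped to one action on one resource that expires quickly. `CredentialBroker`, the token layout, and the 60-second TTL are illustrative assumptions. A production broker would more likely use an established token format and a secrets manager, and verification would happen at the broker or at a service that shares its key.

```python
# A minimal sketch of credential brokering. CredentialBroker, the token
# layout, and the TTL are hypothetical, not any product's API.
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional


class CredentialBroker:
    def __init__(self, ttl_seconds: int = 60) -> None:
        self._key = secrets.token_bytes(32)  # never handed to agents
        self._ttl = ttl_seconds

    def _sign(self, body: str) -> str:
        return hmac.new(self._key, body.encode(), hashlib.sha256).hexdigest()

    def mint(self, agent_id: str, action: str, resource: str,
             now: Optional[float] = None) -> str:
        """Issue a token for exactly one action on one resource."""
        issued = time.time() if now is None else now
        claims = {"sub": agent_id, "act": action, "res": resource,
                  "exp": issued + self._ttl}
        body = json.dumps(claims, sort_keys=True)
        return body + "." + self._sign(body)

    def verify(self, token: str, action: str, resource: str,
               now: Optional[float] = None) -> bool:
        """Check signature, scope, and expiry before the action executes."""
        body, _, sig = token.rpartition(".")  # hex signature contains no "."
        if not hmac.compare_digest(sig.encode(), self._sign(body).encode()):
            return False
        claims = json.loads(body)
        current = time.time() if now is None else now
        return (claims["act"] == action and claims["res"] == resource
                and current < claims["exp"])


broker = CredentialBroker(ttl_seconds=60)
t0 = 1_000_000.0  # fixed clock so the example is deterministic
token = broker.mint("report-agent", "read", "db/orders", now=t0)

print(broker.verify(token, "read", "db/orders", now=t0 + 5))    # True
print(broker.verify(token, "delete", "db/orders", now=t0 + 5))  # False: out of scope
print(broker.verify(token, "read", "db/orders", now=t0 + 120))  # False: expired
```

Because the signature covers the claims, an agent that edits its token to widen the scope invalidates it.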

A tool firewall evaluates identity context (which agent, which skill, which environment), inspects parameters and payloads, enforces policy-as-code, and issues short-lived, least-privilege credentials only when appropriate. It observes outcomes, records provenance, and provides a verifiable audit trail for autonomous behavior.
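The evaluation step can be sketched as policy-as-code: rules are ordinary functions over the identity context and the call's parameters, the tool allowlist is deny-by-default, and every decision, allowed or not, lands in an audit log with its provenance. The hash chain, where each entry commits to the previous entry's hash, is one simple way to make that log tamper-evident. `Context`, `ENV_TOOLS`, the specific checks, and `AuditLog` are all hypothetical.

```python
# A minimal sketch of policy-as-code with a tamper-evident audit trail.
# All names, rules, and the "example.com" domain are illustrative.
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class Context:
    agent: str        # which agent
    skill: str        # which skill is driving the call
    environment: str  # e.g. "dev" or "prod"


# Deny by default: only these tools are callable per environment.
ENV_TOOLS = {"dev": {"db.read", "db.write", "email.send"},
             "prod": {"db.read", "email.send"}}


def tool_allowed(c: Context, tool: str, p: dict[str, Any]) -> Optional[str]:
    if tool in ENV_TOOLS.get(c.environment, set()):
        return None
    return f"{tool} not allowed in {c.environment}"


def no_bulk_reads(c: Context, tool: str, p: dict[str, Any]) -> Optional[str]:
    # Parameter inspection: cap how many rows a single call may pull.
    return "bulk read" if tool == "db.read" and p.get("limit", 0) > 1000 else None


def internal_mail_only(c: Context, tool: str, p: dict[str, Any]) -> Optional[str]:
    # Payload inspection: "example.com" stands in for a corporate domain.
    if tool == "email.send" and not str(p.get("to", "")).endswith("@example.com"):
        return "external recipient"
    return None


CHECKS: list[Callable[[Context, str, dict[str, Any]], Optional[str]]] = [
    tool_allowed, no_bulk_reads, internal_mail_only]


class AuditLog:
    """Append-only log; each entry hashes the previous one."""
    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict[str, Any]] = []

    @staticmethod
    def _digest(record: dict[str, Any], prev: str) -> str:
        payload = json.dumps({**record, "prev": prev}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def append(self, record: dict[str, Any]) -> None:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        self.entries.append({**record, "prev": prev,
                             "hash": self._digest(record, prev)})

    def verify(self) -> bool:
        prev = self.GENESIS
        for e in self.entries:
            record = {k: v for k, v in e.items() if k not in ("prev", "hash")}
            if e["prev"] != prev or self._digest(record, prev) != e["hash"]:
                return False
            prev = e["hash"]
        return True


def evaluate(ctx: Context, tool: str, params: dict[str, Any], log: AuditLog) -> bool:
    results = [check(ctx, tool, params) for check in CHECKS]
    reasons = [r for r in results if r is not None]
    log.append({"agent": ctx.agent, "skill": ctx.skill, "env": ctx.environment,
                "tool": tool, "params": params, "allowed": not reasons,
                "reasons": reasons})
    return not reasons


log = AuditLog()
ctx = Context(agent="report-agent", skill="draft-notes", environment="prod")
print(evaluate(ctx, "db.read", {"table": "orders", "limit": 100}, log))  # True
print(evaluate(ctx, "db.write", {"table": "orders"}, log))              # False
print(evaluate(ctx, "email.send", {"to": "x@attacker.test"}, log))      # False
print(log.verify())                 # True
log.entries[1]["allowed"] = True    # rewrite history...
print(log.verify())                 # False: the chain no longer checks out
```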

This turns skills into what they should be: requests for capability, not grants of authority.
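In code, "request, not grant" reduces to a set intersection. A minimal sketch, assuming the skill's declared tools are the request and a policy table keyed by agent and environment is the grant; all names here are hypothetical.

```python
# A minimal sketch: the skill's declared tools are a *request*, the
# firewall's policy is the *grant*, and the agent gets the intersection.
# Agent names, tool names, and POLICY_GRANTS are hypothetical.


def effective_capabilities(requested: set[str], granted: set[str]) -> set[str]:
    """What the skill asks for AND what policy allows."""
    return requested & granted


def ungranted(requested: set[str], granted: set[str]) -> set[str]:
    """Requested capabilities that policy refuses; worth logging."""
    return requested - granted


POLICY_GRANTS = {("report-agent", "prod"): {"db.read", "summarize"}}

skill_request = {"db.read", "summarize", "email.send"}  # from skill metadata
grant = POLICY_GRANTS.get(("report-agent", "prod"), set())

print(sorted(effective_capabilities(skill_request, grant)))  # ['db.read', 'summarize']
print(sorted(ungranted(skill_request, grant)))               # ['email.send']

# An agent with no policy entry gets nothing, regardless of what it requests.
unknown = POLICY_GRANTS.get(("new-agent", "prod"), set())
print(sorted(effective_capabilities(skill_request, unknown)))  # []
```

The gap between request and grant is a useful signal in its own right: a skill that routinely asks for more than it receives deserves a closer look.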

What’s left is for the market to decide what form a tool firewall will take. But its emergence as part of the security toolbox for agent architectures is inevitable.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
