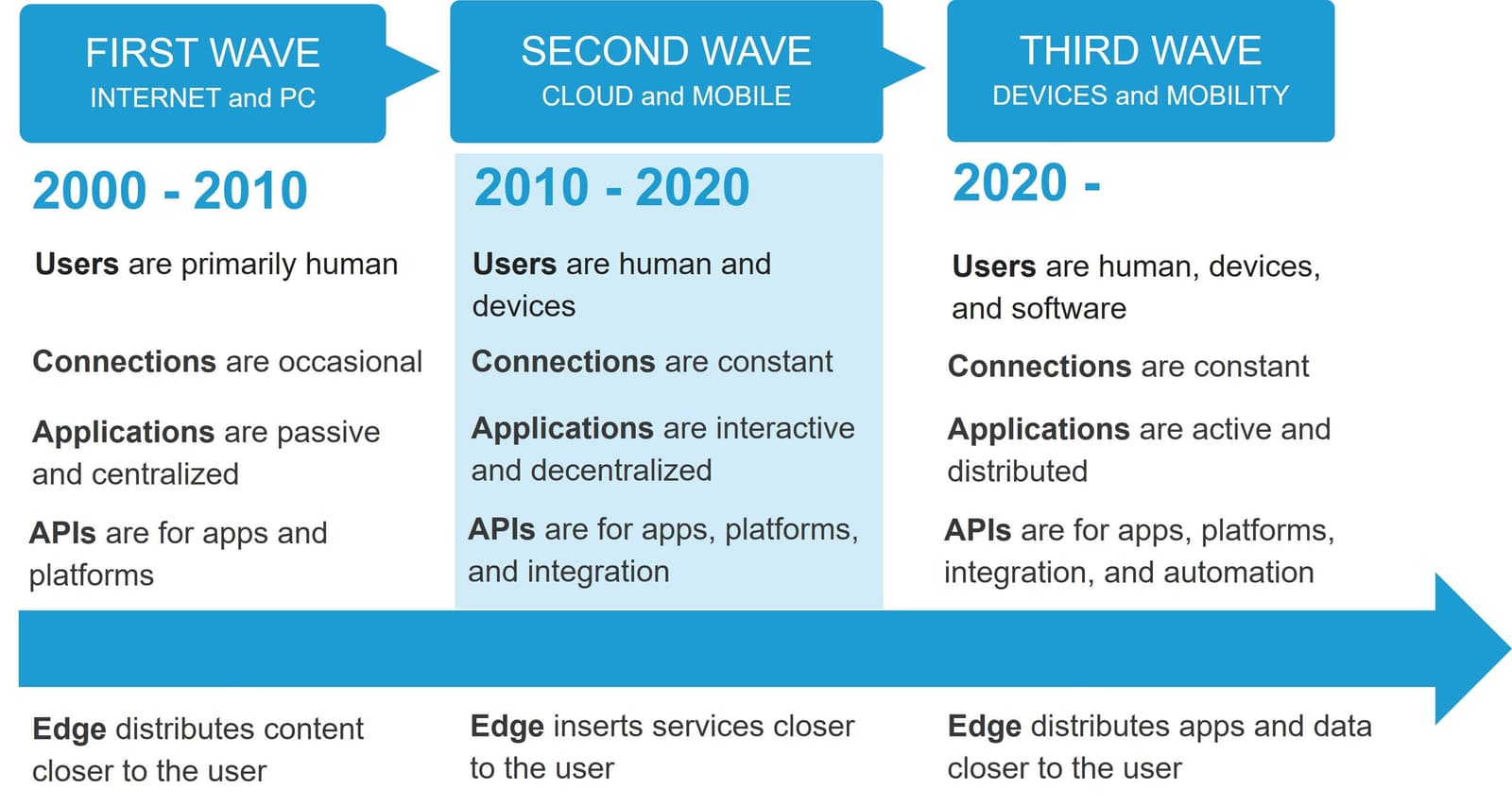

Edge has always existed. Well, at least since the first wave of the Internet drove the need to solve the “last mile” problem. With consumers' eagerness to explore the seemingly endless Internet hampered by unreliable dial-up connections, the first iteration of edge emerged with a solution: move static content closer to the user.

Since then, two additional waves of Internet evolution have put pressure on edge computing to also evolve.

Each wave of the Internet eliminates obstacles to ubiquitous, real-time computing. Yet each wave introduces new challenges, too. F5 CTO Geng Lin covers this evolutionary path in more detail in his latest paper, “The Third Wave of the Internet.”

In that paper we come to the inescapable conclusion that, until recently, there’s been no need for a platform at the edge. Challenges with connections were solved by advances in networking. Application design and architecture readily adapted to cloud, but the growing digital economy attracted bad actors. Volumetric attacks disrupted business while malicious code and malware became a path to profit.

There was still no need for a platform at the edge because its evolutionary path was to protect business and applications by inserting services closer to the user. This meant bad actors were detected and neutralized before they could disrupt business or manage to breach a company’s defenses.

But today we’re riding the third wave of the Internet, and while it is bringing new capabilities, it is also introducing new challenges. While broadband connectivity is nearly ubiquitous, the number of devices and users constantly communicating over the network still poses a performance challenge. Attackers have grown even more devious and seek to exploit the pervasiveness of applications and devices, as well as consumers' seemingly insatiable appetite for digital engagement.

The response to these challenges is the inevitable evolution of edge. But the only things we can move closer to the user now are the apps and data they need to engage in digital activities.

Edge, as it has evolved, was not built to support the distribution of apps and data. The ability to support such capabilities requires a platform. An edge application platform.

Designing an Edge 2.0 Application Platform

One does not simply throw together such a platform. Bolting on the ability to deploy compute on existing edge networks does not fully address the challenges posed by the third wave of the Internet. Nor does it fully take advantage of one of the significant shifts in computing: the ability of devices and endpoints to participate in solutions.

Applications and devices are no longer passive receivers of information. They are active participants, often initiating connections and dictating decisions. Existing edge platform approaches are based on applications as passive receivers of information. A new approach is necessary to fully leverage the power of distributed compute.

That approach ensures that the needs for security, scale, and speed of applications at the edge are met without sacrificing developer and operational experiences. It also requires attention to parallel trends in technology around observability and the use of AI and machine learning for business, security, and operational automation.

While broad characteristics, such as those described by our CTO Geng Lin in his Edge 2.0 manifesto, provide overarching guidance for an Edge 2.0 platform, design considerations at the architectural level are also needed.

It’s easy to say that such a platform should be “secure by default” and “provide native observability” as well as “deliver autonomy,” but what do those mean in terms of technology and approaches that must be considered? More importantly, how should they be incorporated into an Edge 2.0 application platform?

These questions—and more—are answered in our latest paper, “Edge 2.0 Core Principles,” authored by Distinguished Technologist Rajesh Narayanan and Mike Wiley, F5 CTO, Applications.

The path to taking advantage of the evolution of the edge ecosystem is clear, and that path is through an Edge 2.0 application platform.

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
