In the second half of 2025, AI moved from decision aid to decision maker, and security teams are anxiously preparing for the consequences of that autonomy. At least, that's the prevailing sentiment in the latest update to our F5 AI sentiment research, a comprehensive study of how the top 500 contributors in the Internet's largest cybersecurity community, Reddit's r/cybersecurity, feel about AI.

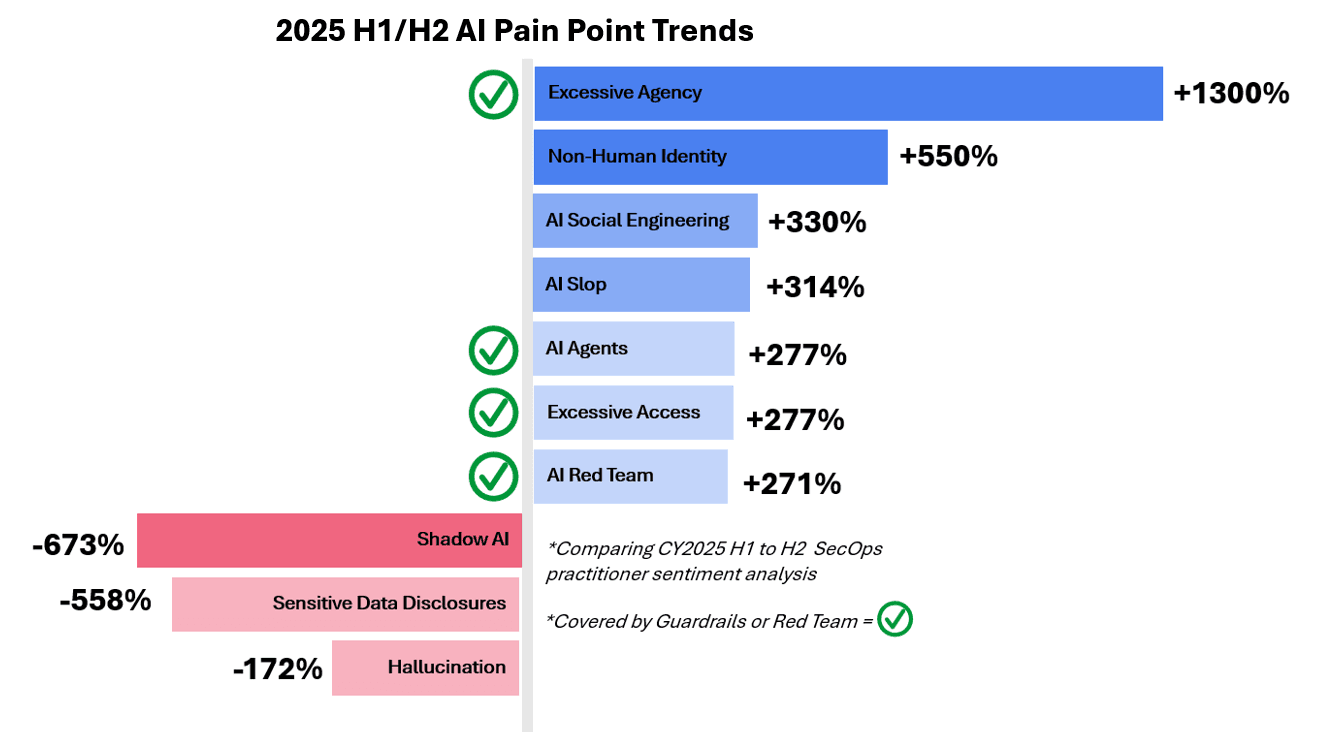

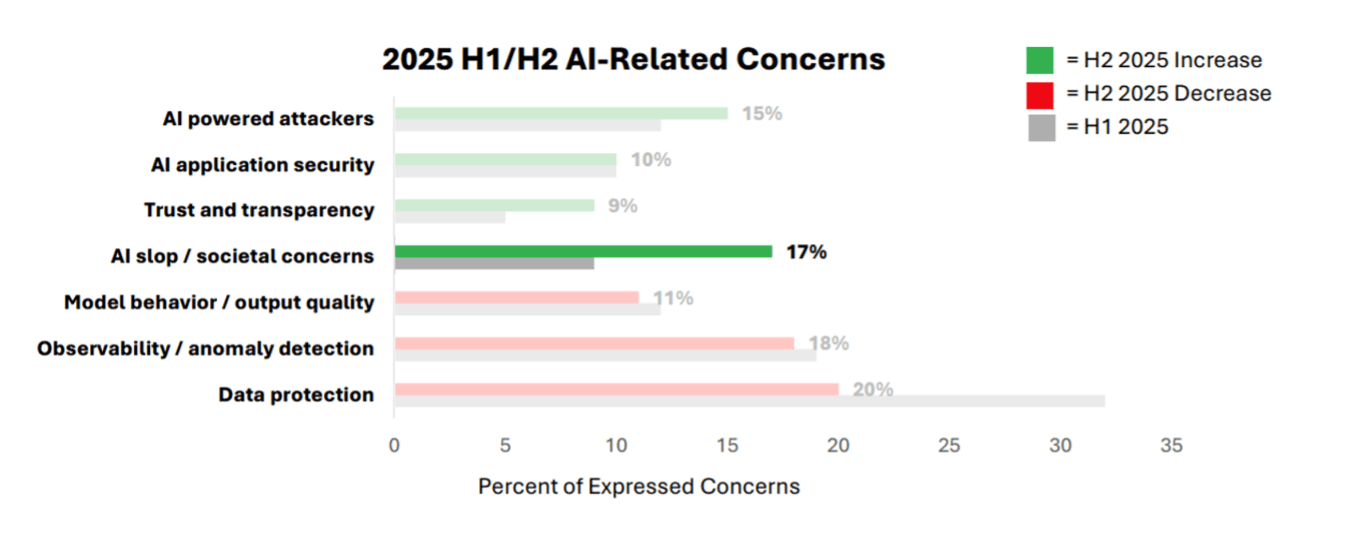

In our last analysis, shadow AI and the data security of third-party AI services dominated practitioner mindshare. In the second half of 2025, the locus of concern shifted to the excessive agency and access granted to practitioners' own organizations' AI deployments.

Paired with this fear is rapidly growing skepticism about the true value AI will deliver relative to these risks. Practitioners are not advocating that the world bring the "AI train" to a screeching halt, but with the introduction of autonomous agents, demand for dedicated safeguards is at an all-time high.

Excessive agency overtakes shadow AI as the top concern

Mentions of "excessive agency" in Reddit's r/cybersecurity community grew 13x in H2 2025 over H1 2025, making it the top concern cited by security professionals, according to F5's sentiment analysis. This comes at a time when AI systems are being given greater decision-making autonomy, tool access in critical workflows, and widespread access to sensitive data. Excessive agency, broadly defined as AI systems taking actions beyond their intended scope or oversight, surfaced not only as anticipatory anxiety about autonomous AI agents but, more commonly, as concern over the direct or indirect influence AI already has on critical workflows.

With every exciting, innovative use case in healthcare, financial services, or technology, the blast radius of worst-case outcomes expands disproportionately. One practitioner illustrates this idea with the over-reliance on AI tools for summarization:

“We could be approaching an era where instead of exfiltrating raw data, bad actors will now exfiltrate AI summaries which can give detailed analysis of a company’s mission, shortcomings, vulnerabilities, and skeletons in the closet.”

-r/cybersecurity member

The most notable example of excessive agency discussed was the July 2025 Replit incident, in which a coding agent deleted an entire customer database during an active code freeze. AI security failures don't always "scream" as they did in this case; often they are soft semantic whispers that are difficult to mitigate even in traditional LLM interactions, and they pose an even greater challenge in agentic workflows, where visibility is more limited.

AI slop is a security concern

Doubts about AI's current, near-term, or long-term value were rampant in H2 2025. The mindshare of "AI slop" (high-volume, low-quality AI-generated content that pollutes information channels and increases operational noise and risk) doubled in H2 2025. This surfaced as subpar AI-generated code, growing distrust of content suspected to be AI-generated, and societal concerns such as "brain rot" from AI dependence.

“Have you tried our Next Gen Zero Trust AI Cloud pencil? It’s like a regular pencil, but only works on a subscription model that costs $6000 a month.”

-r/cybersecurity member

Security practitioners demand demonstrable, repeatable value from AI-enabled applications and features. This is not a group wholly opposed to AI adoption; they've used AI/ML in threat detection and triage for years, generally with high satisfaction. At the same time, they frequently note that overly verbose AI-generated code can introduce vulnerabilities and reduce the number of engineers who fully understand the systems they maintain.

This phenomenon, categorized as "insecure design," saw a 3.1x increase in mentions in H2. As a result of this cumulative fatigue, products marketed with AI capabilities now face stricter scrutiny than earlier, less mature implementations might have received.

AI red teaming drives optimism

Despite the flood of over-promising, under-delivering AI tools, mentions of dedicated AI red teaming tools rose 2.71x, and over 95% of those mentions endorsed the practice as a new foundational requirement. As one practitioner aptly put it:

“The skill floor for attackers just got obliterated. Our defense has to be faster than an LLM on caffeine.”

-r/cybersecurity member

With fewer barriers to entry for bad actors, many of whom are empowered by their own AI tools, defenses must keep pace with the novel prompt injection and jailbreak techniques attackers develop every day.

Dedicated red teaming tools designed to address this arms race met a mixed reception earlier in 2025, with most commenters questioning their efficacy compared to human offensive security teams. That skepticism turned into one of the greatest drivers of optimism in H2 as offerings matured and the breadth of adversarial techniques grew too vast for traditional manual testing alone.

How F5 aligns to the latest trends

The most obvious takeaway from the latest sentiment trends is how quickly everything is moving: risks, pain points, and needs are all evolving fast. Securing AI workloads is a complex task, and the requirements vary significantly from one organization to the next.

No vendor can fully capture, out of the box, the nuance and context required to secure every case of excessive access and agency. With F5 AI Guardrails, however, security teams get immediate protection against the most prevalent threats to AI systems, plus custom guardrail creation to rapidly tailor defenses to unique needs.

We also agree that AI red teaming is no longer a luxury but a foundational requirement for securing AI systems. Backed by our preeminent AI vulnerability database of over 130,000 attack patterns, F5 AI Red Team helps teams find and fix vulnerabilities before attackers exploit them.

Interested in learning more about F5’s research on practitioner sentiment and AI threat research? Watch our webinar, “AI security that keeps pace.”

Also, please read our previous blog posts analyzing security professionals' attitudes toward AI: "How does SecOps feel about AI" Part 1 and Part 2.
