As organizations accelerate their journey to become a digital business, CIOs are taking the wheel and kicking the technology engine into high gear to power organizations through the second phase of digital transformation. Performance, as usual, is a significant obstacle to realizing the benefits of multi-cloud strategies and definitively driving businesses to extend to the edge.

I recently lost an hour or so of my life following threads on the polling rate of gaming mice versus that of a console controller. I learned that although my Xbox controller is capable of processing 24 MIPS, it still only polls input at 125 Hz. That means it reports back to the game on any changes about once every 8 milliseconds. By contrast, a gaming mouse polls input at 1000 Hz. It reports movement once every millisecond.
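For the curious, the conversion from polling rate to reporting interval is just a reciprocal. Here is a minimal Python sketch of that arithmetic; the function name `polling_interval_ms` is my own invention for illustration and not part of any controller or mouse API.

```python
def polling_interval_ms(rate_hz: float) -> float:
    """Return the time between input reports, in milliseconds, for a polling rate in Hz."""
    if rate_hz <= 0:
        raise ValueError("polling rate must be positive")
    # One second is 1000 ms, so the interval is 1000 divided by reports per second.
    return 1000.0 / rate_hz


controller_ms = polling_interval_ms(125)   # controller polling rate cited above
mouse_ms = polling_interval_ms(1000)       # gaming mouse polling rate cited above

print(f"controller: {controller_ms} ms, mouse: {mouse_ms} ms")
# prints "controller: 8.0 ms, mouse: 1.0 ms"
```

In the worst case, then, an input on the controller waits up to eight times longer to be reported than the same input on the mouse.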

Now, you might think that’s insignificant. But in modern gaming, especially the competitive scene, every millisecond counts. And if you’re on a controller, competing against someone using a mouse, the difference between one millisecond and eight milliseconds is a lifetime. By the way, I also discovered you can overclock a controller to poll at a rate commensurate with mice if you’re using it to game on a PC. Console players will find it much more difficult to address this performance gap.

Performance matters, and not just for gaming. Our analysis of responses to the State of Application Strategy 2022 found performance impacting everything from security decisions to workload distribution strategies. Performance continues to reign supreme.

The Performance-Security Conundrum

It may be the worst kept secret of the digital age: organizations will, in fact, turn off security controls in exchange for performance.

Obviously there have been, and continue to be, situations in which security services are overwhelmed during an attack and are disabled to prevent an outage. But that’s a pretty rare occurrence these days, because expertise in distributed scaling strategies neatly addresses the scenario in most cases without losing security controls. So the question isn’t really “will you turn off security to gain a performance improvement?” but “how much performance improvement would entice you to disable security controls?”

That’s exactly what we asked.

Because we’re “in” on the secret, we expected there would be an inflection point at which a majority of respondents would, in fact, turn off security controls. But we did not expect it to be such a small improvement in performance.

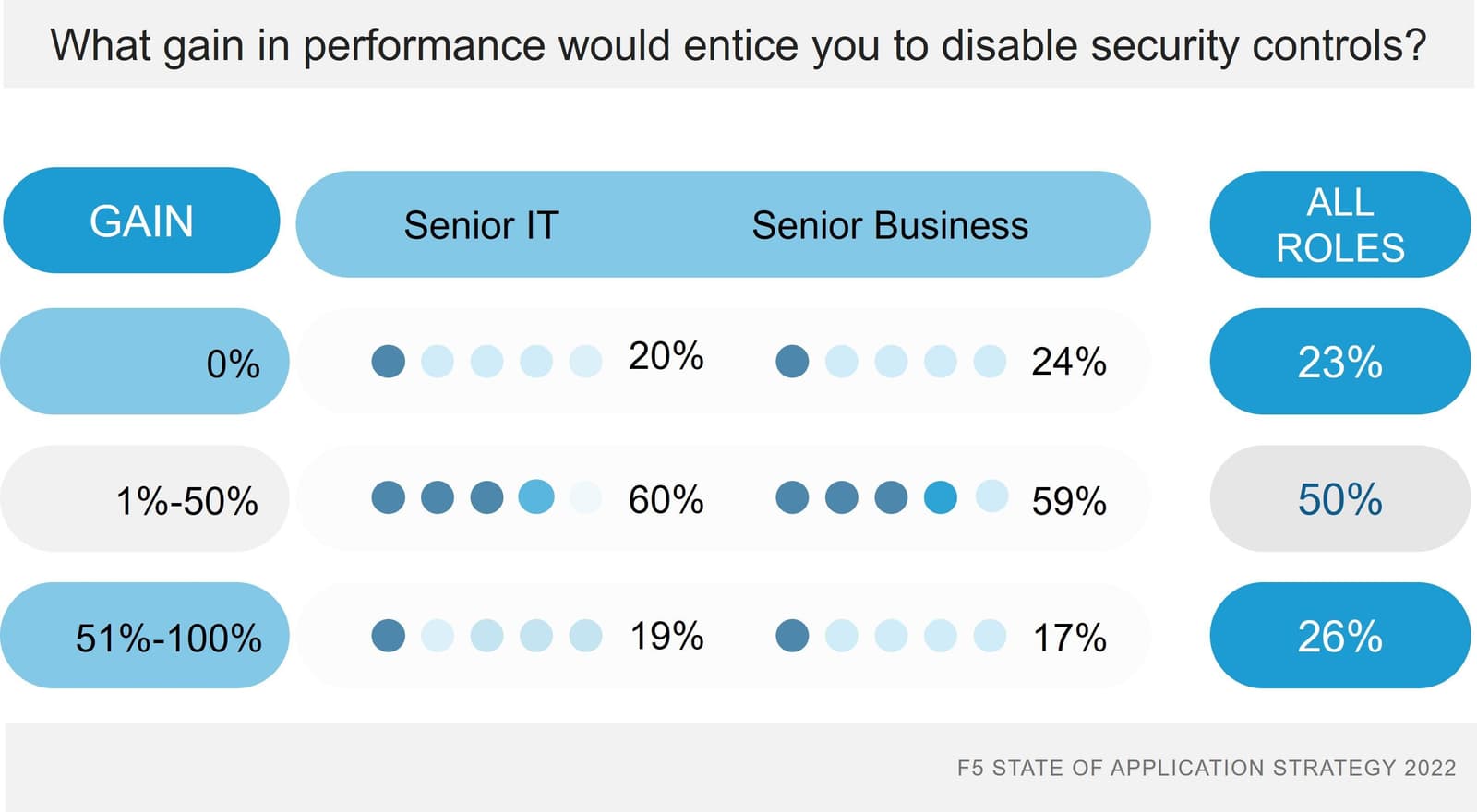

More than three-quarters (76%) would turn off security controls in exchange for an improvement in performance. Roughly two-thirds of that group would do so for a performance boost of between 1% and 50%. The remainder would require a more substantial improvement, 51% or more, before they’d cut the cord on security controls.
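To put those figures in terms of all respondents, a back-of-the-envelope calculation helps. The Python sketch below assumes "roughly two-thirds" means exactly 2/3, which the underlying survey data may not match precisely, so treat the results as approximations and not as reported findings. The variable names are mine.

```python
# Share of all respondents who would turn off security controls (from the survey).
would_disable = 0.76

# ASSUMPTION: "roughly two-thirds" is taken as exactly 2/3 for illustration only.
low_bar_fraction = 2 / 3

# Respondents who would disable controls for a 1% to 50% performance boost.
low_bar_share = would_disable * low_bar_fraction
# Respondents who would need a larger improvement before disabling controls.
high_bar_share = would_disable * (1 - low_bar_fraction)
# Respondents who would not disable controls at all.
never_share = 1 - would_disable

for label, share in [
    ("1% to 50% boost", low_bar_share),
    ("larger boost", high_bar_share),
    ("would not disable", never_share),
]:
    print(f"{label}: about {round(share * 100)}% of all respondents")
```

Under that assumption, the group willing to trade security for a boost of 50% or less works out to about half of everyone surveyed.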

When we dug in and considered responses from senior IT and business leadership, the folks who have the ability to make these decisions, the results were even more startling. Just about 60% of both IT and business leaders would turn off security controls for a gain in performance of between 1% and 50%. Only one in five (20%) of these decision makers could not be enticed to disable security in exchange for performance.

This should not necessarily be viewed as terrifying. Instead, this attitude indicates a more mature view of security, in which the focus shifts from a constant state of defend-and-neutralize to managing risk. Adaptive security will be one way to balance the battle between security and performance, and we’re already seeing a shift toward a preference for more adaptive security methods over more traditional ones.

The Performance-Cloud Conundrum

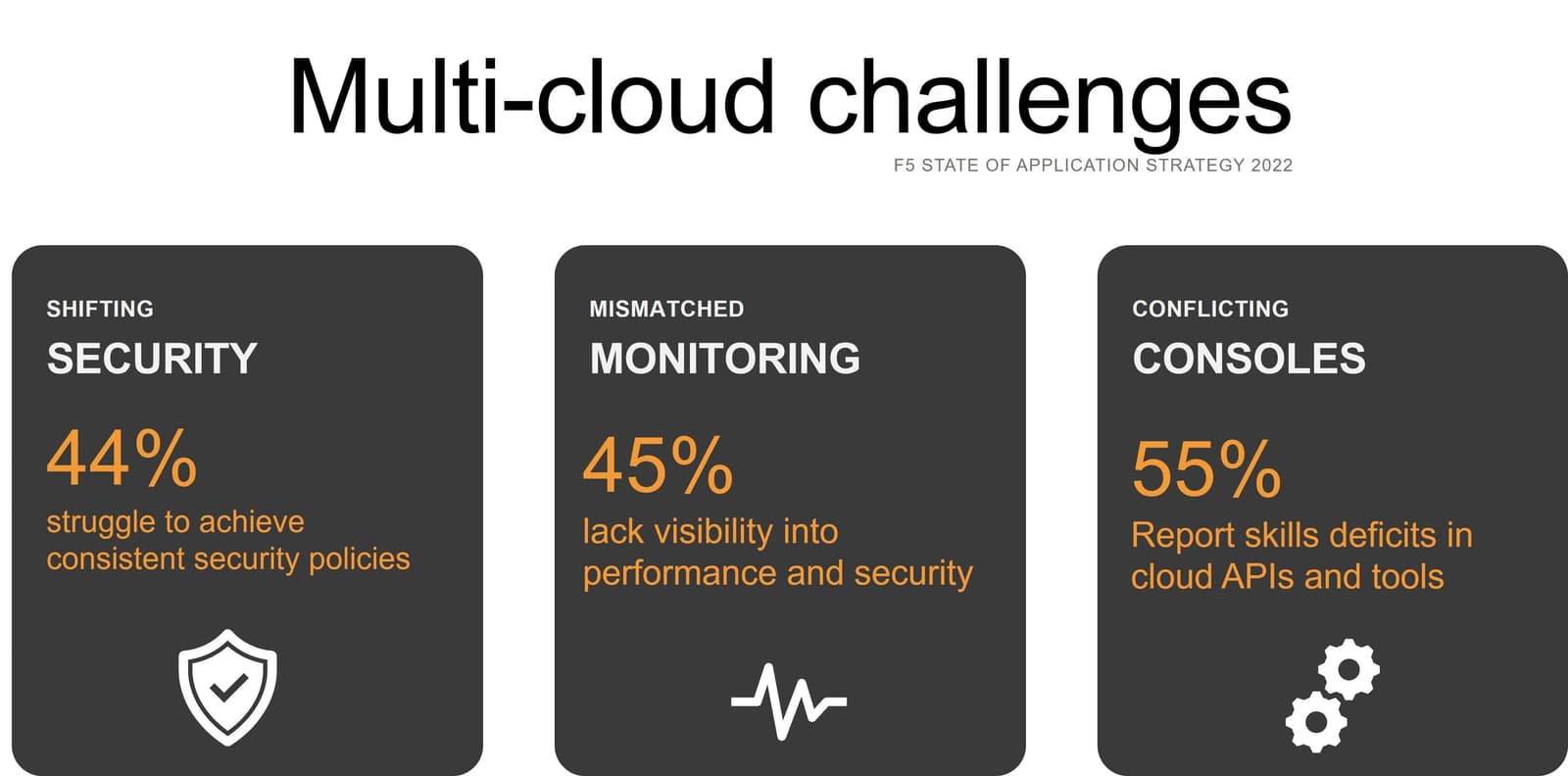

Security isn’t the only area in which we see conflicts with performance. One of the top challenges of operating in a multi-cloud environment has been, and remains, consistent performance across cloud properties. With 40% of respondents choosing it as a significant challenge, it was narrowly beaten out by the 41% who struggle with migrating apps across clouds, the 44% challenged by applying consistent security policies, and the 45% who are frustrated by a lack of visibility into performance and security.

It is not lost on us that a lack of visibility across multi-cloud properties likely contributes to the struggle with consistent performance. After all, if you can’t measure it, you can’t optimize it either, let alone establish a consistent digital experience for customers and employees. And one of the top three insights respondents are missing? You guessed it, root cause of performance degradations.

Thus, you won’t be surprised to learn that “mining for root causes” and “mining to improve the customer experience” are the top two drivers of data analytics projects today. Traditional monitoring methods cannot address the needs of modern business and its dependence on digital signals to enable real-time operations and rapid response to changing conditions across core and cloud properties.

If you can’t measure it, you can’t know about it, and if you don’t know about it, you can’t resolve it. The performance-cloud conundrum is primarily a problem of status and signal silos: a condition in which a cornucopia of monitoring solutions and services store digital health data in silos and prevent organizations from leveraging a more comprehensive data and observability strategy.

Sadly, a data and observability strategy is only half the solution. Being able to act quickly, even proactively, to resolve a situation is the other half. That, too, is challenged by the heterogeneous nature of the services and solutions that address performance, security, and scale: app security and delivery technologies. With a veritable buffet of solutions and services spread across core and cloud, there’s no single, simple way to close the loop between knowing about a problem and acting on it quickly. Mismatched monitoring and conflicting consoles continue to get in the way of delivering the consistent performance needed for extraordinary digital experiences.

The Performance-Edge Connection

Delivering extraordinary digital experiences is touted as one of the key benefits of edge computing. We noted last year that the top use cases planned for the edge were, in fact, related to performance. So it was no surprise that the same results turned up again this year. A mere 10% indicated they had no plans to deploy anything at the edge. For the rest, performance is a key driver.

More than four in ten (42%) plan to leverage edge computing to address application performance. More than one-third (35%) need the edge to support real-time data processing with latency requirements of less than 20 milliseconds.

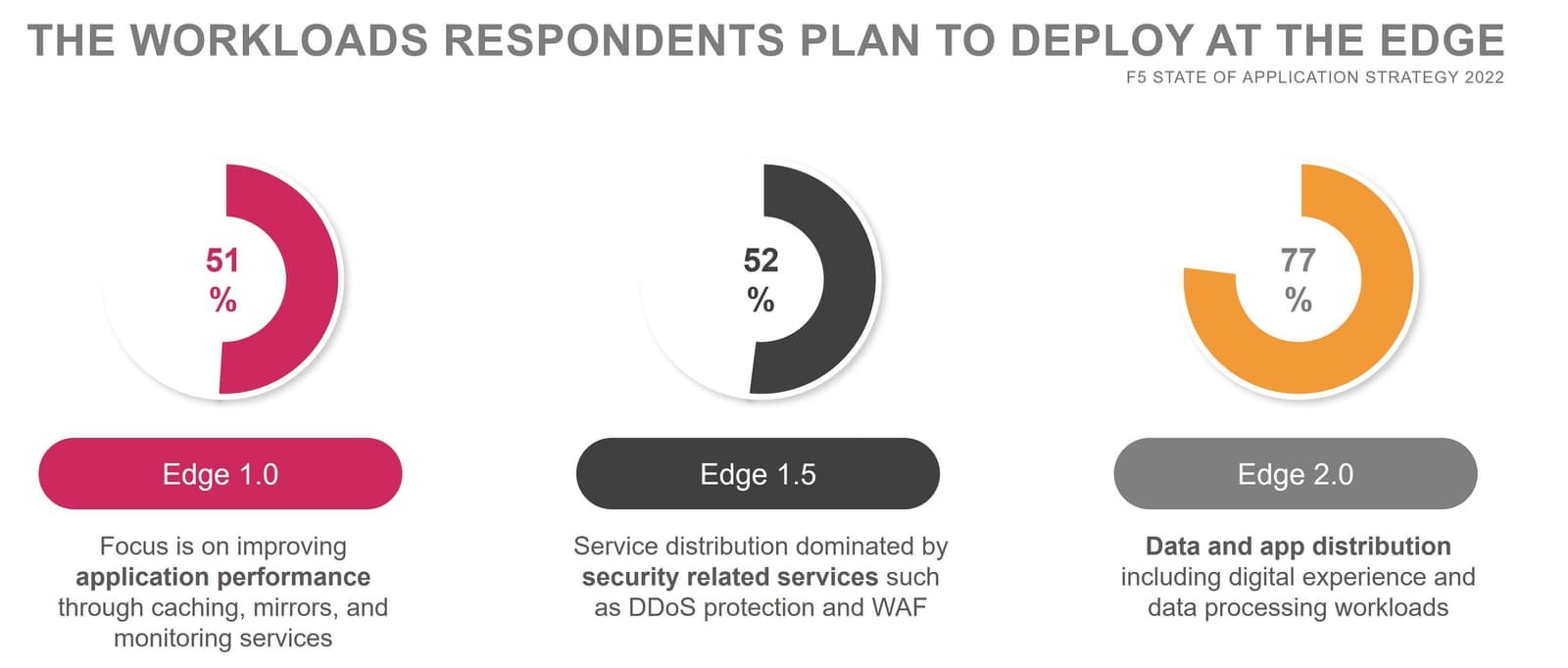

Not content with general use cases, however, we dug into the specific workloads planned for deployment at the edge.

We were not disappointed by the results, which demonstrate not only a desire to improve performance but the willingness to deploy application workloads at the edge.

Over half (51%) of planned edge workloads are related to application performance and include traditional edge services such as caching, mirroring, and monitoring. But more interesting is that more than three in four (77%) plan to deploy data and digital experience workloads at the edge. This perception of edge indicates a growing maturity in understanding that the evolution of edge moves its capabilities from content (1.0) to services (1.5) to workload (2.0) distribution, almost exclusively in the quest to improve the employee and customer experience.

Performance remains regnant

The months we spend analyzing the results of our annual research are exciting, sometimes surprising, and always fascinating. The thread of performance runs through nearly every aspect of IT and business today, driving deployment decisions and impacting the way organizations operate across core, cloud, and edge.

Performance remains regnant, and based on the behavior and expectations of my youngest, digital-native son, performance will remain on the throne for many years to come.

For more, download your own copy of our 2022 State of Application Strategy Report.

Check out related blogs for deeper dives on select topics:

State of Application Strategy 2022: Unpacking 8 Years of Trends

State of Application Strategy 2022: Security Shifts to Identity

State of Application Strategy 2022: Edge Workloads Expanding to Apps and Data

State of Application Strategy 2022: Digital Innovators Highlight the Importance of Modernizing

State of Application Strategy 2022: Multi-Cloud Complexity Continues

State of Application Strategy 2022: Time to Modernize Ops

State of Application Strategy 2022: The Future of Business Is Adaptive

About the Author

Lori MacVittie is a Distinguished Engineer and Chief Evangelist in F5’s Office of the CTO with deep expertise in application delivery, automation strategy, and infrastructure. She is known for turning complexity into clarity whether she’s defining guardrails for AI agents, dissecting brittle multicloud architectures, or probing the limits of scalable systems. She brings more than thirty years of industry experience across application development, IT architecture, and network and systems operations. Before joining F5, she served as an award-winning technology editor. MacVittie holds an M.S. in Computer Science and is a prolific author whose publications span security, cloud, and enterprise architecture. She is also an avid tabletop and video gamer with unapologetically strong opinions about cheese.
